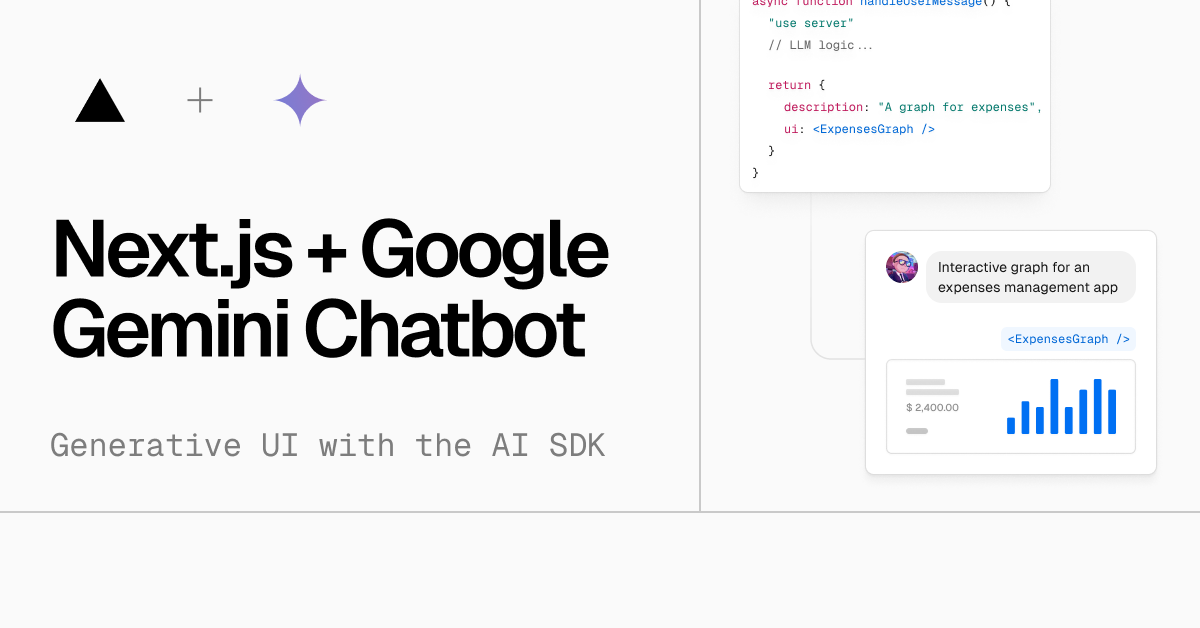

An open-source AI chatbot app template built with Next.js, the Vercel AI SDK, Google Gemini, and Vercel KV.

Features · Model Providers · Deploy Your Own · Running locally · Authors

- Next.js App Router

- React Server Components (RSCs), Suspense, and Server Actions

- Vercel AI SDK for streaming chat UI

- Support for Google Gemini (default), OpenAI, Anthropic, Cohere, Hugging Face, or custom AI chat models and/or LangChain

- shadcn/ui

- Styling with Tailwind CSS

- Radix UI for headless component primitives

- Icons from Phosphor Icons

- Chat history, rate limiting, and session storage with Vercel KV

- NextAuth.js for authentication

This template ships with Google Gemini models/gemini-1.0-pro-001 as the default. However, thanks to the Vercel AI SDK, you can switch LLM providers to OpenAI, Anthropic, Cohere, Hugging Face, or LangChain with just a few lines of code.
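One way to keep a provider switch down to a few lines is to resolve the model in a single place. The sketch below is illustrative only and does not call the Vercel AI SDK: `ProviderName`, `MODEL_BY_PROVIDER`, `resolveModel`, and the non-Gemini model IDs are hypothetical names and placeholders, not part of this template.

```typescript
// Hypothetical sketch: these names are assumptions, not the template's code.
type ProviderName = "google" | "openai" | "anthropic";

interface ModelChoice {
  provider: ProviderName;
  modelId: string;
}

// Only the Gemini ID comes from this README; the others are placeholders
// that you would replace with real model IDs for your account.
const MODEL_BY_PROVIDER: Record<ProviderName, string> = {
  google: "models/gemini-1.0-pro-001",
  openai: "your-openai-model-id",
  anthropic: "your-anthropic-model-id",
};

// Type guard: true only for keys defined directly on the registry.
function isProviderName(value: string): value is ProviderName {
  return Object.prototype.hasOwnProperty.call(MODEL_BY_PROVIDER, value);
}

// Falls back to Google Gemini when no provider is requested.
function resolveModel(requested?: string): ModelChoice {
  const name = (requested ?? "google").trim().toLowerCase();
  if (!isProviderName(name)) {
    throw new Error(`Unsupported provider: ${requested}`);
  }
  return { provider: name, modelId: MODEL_BY_PROVIDER[name] };
}
```

With this shape, the rest of the app would read `resolveModel(...)` once and pass the result to whichever provider client it uses, so changing providers touches only the registry or the requested name.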

You can deploy your own version of the Next.js AI Chatbot to Vercel with one click:

You will need to use the environment variables defined in .env.example to run Next.js AI Chatbot. It's recommended you use Vercel Environment Variables for this, but a .env file is all that is necessary.

Note: You should not commit your `.env` file or it will expose secrets that will allow others to control access to your various Google Cloud and authentication provider accounts.
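A missing variable is easier to diagnose if it is reported at startup rather than on the first failed request. The following is a minimal sketch, assuming you pass in the environment as a plain object; `findMissingEnv` and the `EXAMPLE_*` names are hypothetical, and the real variable names are the ones listed in .env.example.

```typescript
// Hypothetical helper: returns the names of required variables that are
// absent or blank in the given environment object.
function findMissingEnv(
  env: Record<string, string | undefined>,
  required: string[],
): string[] {
  return required.filter((name) => {
    const value = env[name];
    // Treat undefined, empty, and whitespace-only values as missing.
    return value === undefined || value.trim() === "";
  });
}

// Placeholder names for illustration; substitute the keys from .env.example.
const REQUIRED_VARS = ["EXAMPLE_API_KEY", "EXAMPLE_AUTH_SECRET", "EXAMPLE_KV_URL"];

const missingVars = findMissingEnv(
  { EXAMPLE_API_KEY: "abc123", EXAMPLE_AUTH_SECRET: "   " },
  REQUIRED_VARS,
);

if (missingVars.length > 0) {
  console.warn(`Missing environment variables: ${missingVars.join(", ")}`);
}
```

In a Node.js process you would typically pass `process.env` as the first argument; the helper takes a plain object so it can be checked without touching real secrets.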

- Install Vercel CLI: `npm i -g vercel`

- Link local instance with Vercel and GitHub accounts (creates `.vercel` directory): `vercel link`

- Download your environment variables: `vercel env pull`

`pnpm install`

`pnpm dev`

Your app template should now be running on localhost:3000.

This library is created by Vercel and Next.js team members, with contributions from:

- Jared Palmer (@jaredpalmer) - Vercel

- Shu Ding (@shuding_) - Vercel

- shadcn (@shadcn) - Vercel

- Jeremy Philemon (@jrmyphlmn) - Vercel