
SpikingJelly is an open-source deep learning framework for Spiking Neural Network (SNN) based on PyTorch.

The documentation of SpikingJelly is written in both English and Chinese: https://spikingjelly.readthedocs.io.

- Installation

- Build SNN In An Unprecedented Simple Way

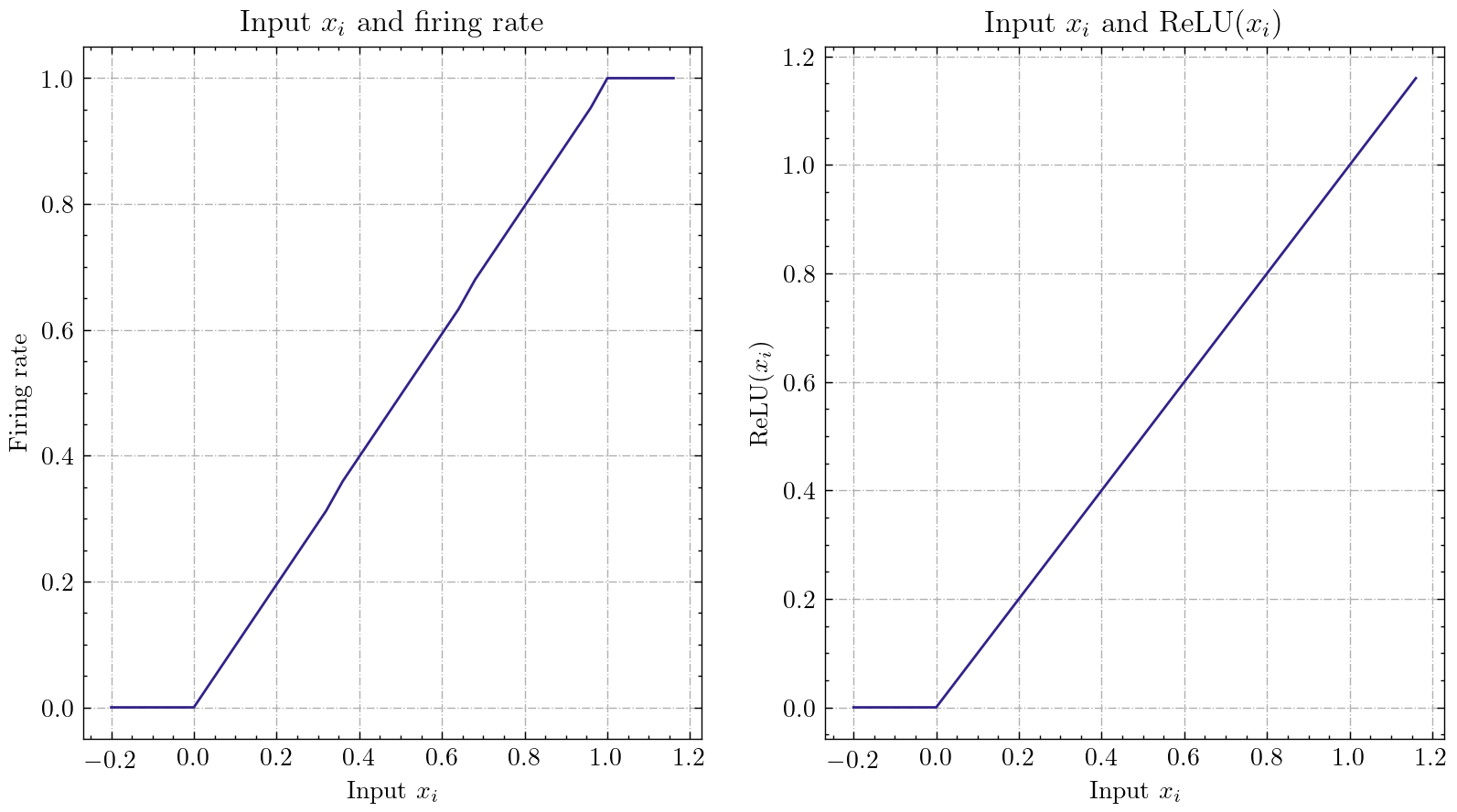

- Fast And Handy ANN-SNN Conversion

- CUDA-Enhanced Neuron

- Device Supports

- Neuromorphic Datasets Supports

- Tutorials

- Publications and Citation

- Contribution

- About

Note that SpikingJelly is based on PyTorch. Please make sure that you have installed PyTorch before you install SpikingJelly.

Odd version numbers denote developing versions, which are updated along with the GitHub/OpenI repository. Even version numbers denote stable versions, which are available on PyPI.

Note that the default documentation is for the latest developing version. If you are using the stable version, remember to switch to the documentation for the corresponding version: https://spikingjelly.readthedocs.io/zh_CN/0.0.0.0.12/.
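The version convention above can be checked programmatically. The following is a minimal sketch, assuming that "odd" and "even" refer to the last dot-separated component of the version string (as in `0.0.0.0.12`) and that the documentation URLs of other versions follow the same pattern as the link above. `is_stable` and `docs_url` are hypothetical helper names and are not part of SpikingJelly.

```python
def is_stable(version: str) -> bool:
    """Return True if the last component of the version string is even.

    Assumption: parity is judged on the final dot-separated component.
    """
    last = int(version.split(".")[-1])
    return last % 2 == 0


def docs_url(version: str) -> str:
    """Build a versioned documentation URL (pattern assumed from the link above)."""
    return f"https://spikingjelly.readthedocs.io/zh_CN/{version}/"


print(is_stable("0.0.0.0.12"))  # True: even, so a stable release
print(is_stable("0.0.0.0.13"))  # False: odd, so a developing version
print(docs_url("0.0.0.0.12"))
```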

Install the latest stable version from PyPI:

```bash
pip install spikingjelly
```

Install the latest developing version from the source code:

From GitHub:

```bash
git clone https://github.com/fangwei123456/spikingjelly.git
cd spikingjelly
python setup.py install
```

From OpenI:

```bash
git clone https://openi.pcl.ac.cn/OpenI/spikingjelly.git
cd spikingjelly
python setup.py install
```

If you use an old version of SpikingJelly, you may encounter fatal bugs. Refer to Bugs History with Releases for more details.

SpikingJelly is user-friendly. Building an SNN with SpikingJelly is as simple as building an ANN in PyTorch:

```python
import torch.nn as nn
from spikingjelly.activation_based import neuron, surrogate, layer

tau = 2.0  # membrane time constant of the LIF neurons (example value, matching -tau 2.0 below)

net = nn.Sequential(
    layer.Flatten(),
    layer.Linear(28 * 28, 10, bias=False),
    neuron.LIFNode(tau=tau, surrogate_function=surrogate.ATan())
)
```

This simple network with a Poisson encoder can achieve 92% accuracy on the MNIST test dataset. Read the clock-driven tutorial for more details. You can also run the following command in a terminal to train it on MNIST classification:

```bash
python -m spikingjelly.activation_based.examples.lif_fc_mnist -tau 2.0 -T 100 -device cuda:0 -b 64 -epochs 100 -data-dir <PATH to MNIST> -amp -opt adam -lr 1e-3 -j 8
```

SpikingJelly implements a relatively general ANN-SNN conversion interface. Users can realize the conversion through PyTorch and can also customize the conversion mode.
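The idea behind rate-based ANN-SNN conversion can be illustrated without any framework: an integrate-and-fire (IF) neuron driven by a constant input fires at a rate that approximates the ReLU of that input, clipped to [0, 1]. The sketch below is a minimal pure-Python illustration under these assumptions (threshold of 1, reset by subtraction). `if_firing_rate` is a hypothetical name, and this is not how SpikingJelly's conversion interface is implemented.

```python
def if_firing_rate(x: float, T: int = 100, v_threshold: float = 1.0) -> float:
    """Simulate an integrate-and-fire neuron for T steps with constant input x.

    The neuron accumulates x at every step, fires when the membrane potential
    reaches the threshold, and is reset by subtracting the threshold.
    Returns the fraction of time steps on which a spike was emitted.
    """
    v = 0.0
    spikes = 0
    for _ in range(T):
        v += x
        if v >= v_threshold:
            spikes += 1
            v -= v_threshold
    return spikes / T


def relu(x: float) -> float:
    return max(0.0, x)


# Compare the ANN activation with the SNN firing rate for a few inputs.
for x in (-0.5, 0.0, 0.25, 0.5, 1.0):
    print(f"x={x:+.2f}  relu={relu(x):.2f}  firing rate={if_firing_rate(x):.2f}")
```

Inputs above the threshold saturate at a firing rate of 1, which is one reason conversion methods usually normalize activations before replacing ReLU with spiking neurons.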

```python
import torch.nn as nn


class ANN(nn.Module):
    def __init__(self):
        super().__init__()
        # Three Conv-BN-ReLU-AvgPool stages followed by a linear classifier.
        self.network = nn.Sequential(
            nn.Conv2d(1, 32, 3, 1),
            nn.BatchNorm2d(32, eps=1e-3),
            nn.ReLU(),
            nn.AvgPool2d(2, 2),

            nn.Conv2d(32, 32, 3, 1),
            nn.BatchNorm2d(32, eps=1e-3),
            nn.ReLU(),
            nn.AvgPool2d(2, 2),

            nn.Conv2d(32, 32, 3, 1),
            nn.BatchNorm2d(32, eps=1e-3),
            nn.ReLU(),
            nn.AvgPool2d(2, 2),

            nn.Flatten(),
            nn.Linear(32, 10)
        )

    def forward(self, x):
        x = self.network(x)
        return x
```

This simple network with analog encoding can achieve 98.44% accuracy after conversion on the MNIST test dataset. Read the ann2snn tutorial for more details. You can also run the following code in a Python terminal to classify MNIST with the converted model:

>>> import spikingjelly.activation_based.ann2snn.examples.cnn_mnist as cnn_mnist

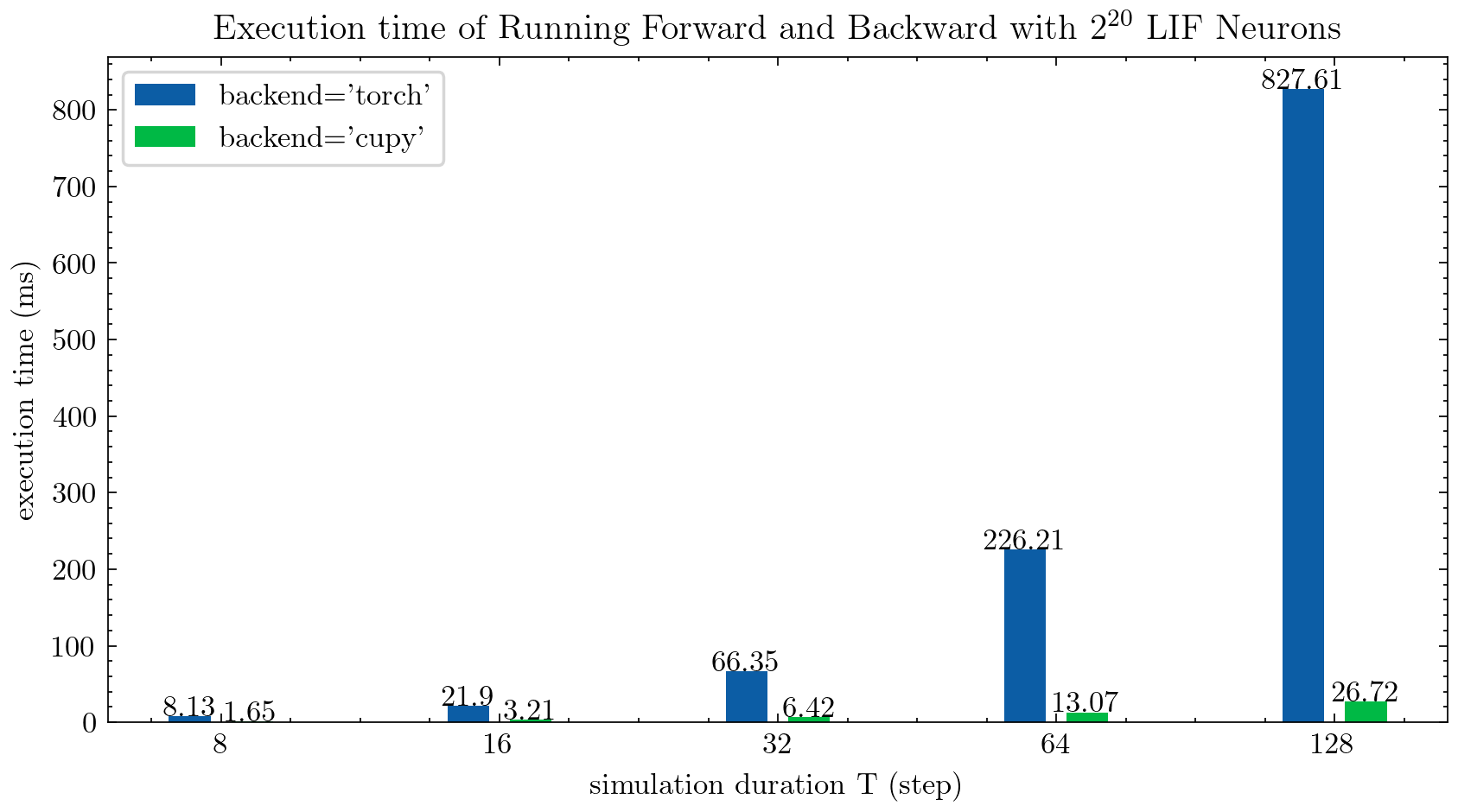

>>> cnn_mnist.main()SpikingJelly provides two backends for multi-step neurons (read Tutorials for more details). You can use the user-friendly torch backend for easily codding and debugging, and use cupy backend for faster training speed.

The followed figure compares execution time of two backends of Multi-Step LIF neurons (float32):

The cupy backend also supports float16, which can be used in automatic mixed precision training.

To use the cupy backend, please install CuPy. Note that the cupy backend only supports GPU, while the torch backend supports both CPU and GPU.
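A multi-step neuron processes an entire input sequence of T time steps in one call rather than one step at a time. As a rough illustration of what such a neuron computes, the following pure-Python sketch applies a commonly used discrete-time LIF update (charge, fire, hard reset) to a scalar input sequence. `lif_multi_step` is a hypothetical name; the update rule and default values are assumptions for illustration, and this is not the code of either backend.

```python
from typing import List


def lif_multi_step(x_seq: List[float], tau: float = 2.0,
                   v_threshold: float = 1.0, v_reset: float = 0.0) -> List[int]:
    """Run a single LIF neuron over a whole input sequence of length T.

    Each step consists of charge (leaky integration), fire (thresholding)
    and hard reset. Returns the spike train as a list of 0/1 values.
    """
    v = v_reset
    spike_seq = []
    for x in x_seq:
        # Charge: the potential decays toward v_reset while integrating x.
        h = v + (x - (v - v_reset)) / tau
        # Fire: emit a spike when the potential reaches the threshold.
        spike = 1 if h >= v_threshold else 0
        # Reset: return to v_reset after a spike, otherwise keep the potential.
        v = v_reset if spike else h
        spike_seq.append(spike)
    return spike_seq


print(lif_multi_step([1.5] * 8))  # [0, 1, 0, 1, 0, 1, 0, 1]
```

The loop over time steps is inherently sequential, which is the kind of work a fused GPU kernel can speed up compared with issuing many small tensor operations per step.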

- Nvidia GPU

- CPU

Using these devices is as simple as in PyTorch:

```python
>>> net = nn.Sequential(layer.Flatten(), layer.Linear(28 * 28, 10, bias=False), neuron.LIFNode(tau=tau))
>>> net = net.to(device) # Can be CPU or CUDA devices
```

SpikingJelly includes the following neuromorphic datasets:

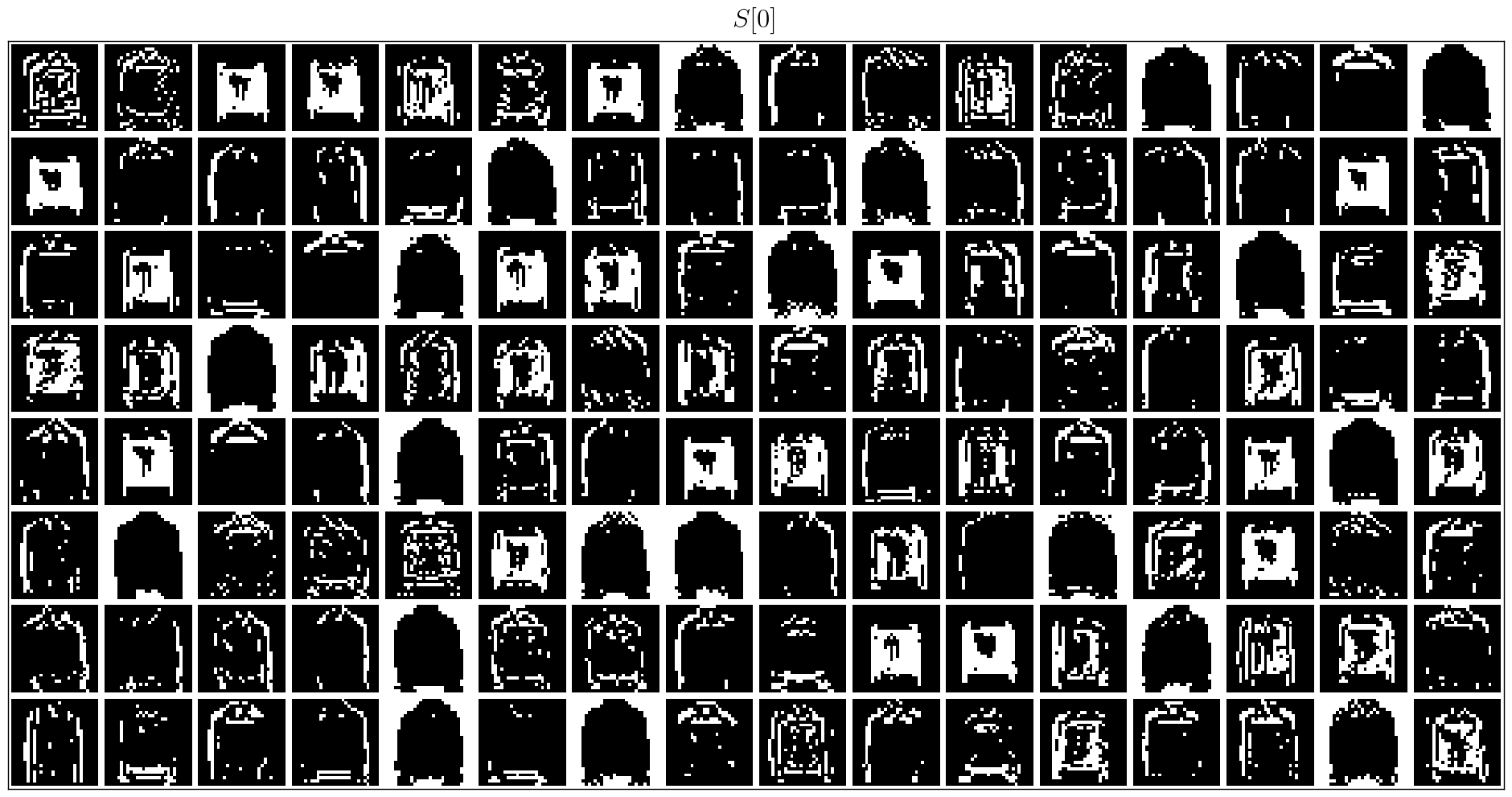

Users can use both the original event data and the frame data integrated by SpikingJelly:

```python
import torch
from torch.utils.data import DataLoader
from spikingjelly.datasets import pad_sequence_collate, padded_sequence_mask
from spikingjelly.datasets.dvs128_gesture import DVS128Gesture

root_dir = 'D:/datasets/DVS128Gesture'

# Load the raw events: each sample is a dict with keys t, x, y, p.
event_set = DVS128Gesture(root_dir, train=True, data_type='event')
event, label = event_set[0]
for k in event.keys():
    print(k, event[k])
print('label', label)

# t [80048267 80048277 80048278 ... 85092406 85092538 85092700]
# x [49 55 55 ... 60 85 45]
# y [82 92 92 ... 96 86 90]
# p [1 0 0 ... 1 0 0]
# label 0

# Integrate the events of each sample into a fixed number of frames.
fixed_frames_number_set = DVS128Gesture(root_dir, train=True, data_type='frame', frames_number=20, split_by='number')
rand_index = torch.randint(low=0, high=len(fixed_frames_number_set), size=[2])
for i in rand_index.tolist():
    frame, label = fixed_frames_number_set[i]
    print(f'frame[{i}].shape=[T, C, H, W]={frame.shape}')

# frame[308].shape=[T, C, H, W]=(20, 2, 128, 128)
# frame[453].shape=[T, C, H, W]=(20, 2, 128, 128)

# Integrate the events into frames of fixed duration; T then varies per sample.
fixed_duration_frame_set = DVS128Gesture(root_dir, data_type='frame', duration=1000000, train=True)
for i in range(5):
    x, y = fixed_duration_frame_set[i]
    print(f'x[{i}].shape=[T, C, H, W]={x.shape}')

# x[0].shape=[T, C, H, W]=(6, 2, 128, 128)
# x[1].shape=[T, C, H, W]=(6, 2, 128, 128)
# x[2].shape=[T, C, H, W]=(5, 2, 128, 128)
# x[3].shape=[T, C, H, W]=(5, 2, 128, 128)
# x[4].shape=[T, C, H, W]=(7, 2, 128, 128)

# Pad samples of different lengths into one batch and build the validity mask.
train_data_loader = DataLoader(fixed_duration_frame_set, collate_fn=pad_sequence_collate, batch_size=5)
for x, y, x_len in train_data_loader:
    print(f'x.shape=[N, T, C, H, W]={tuple(x.shape)}')
    print(f'x_len={x_len}')
    mask = padded_sequence_mask(x_len)  # mask.shape = [T, N]
    print(f'mask=\n{mask.t().int()}')
    break

# x.shape=[N, T, C, H, W]=(5, 7, 2, 128, 128)
# x_len=tensor([6, 6, 5, 5, 7])
# mask=
# tensor([[1, 1, 1, 1, 1, 1, 0],
#         [1, 1, 1, 1, 1, 1, 0],
#         [1, 1, 1, 1, 1, 0, 0],
#         [1, 1, 1, 1, 1, 0, 0],
#         [1, 1, 1, 1, 1, 1, 1]], dtype=torch.int32)
```

More datasets will be included in the future.
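To give an intuition for the two operations used above, the following pure-Python sketch shows one plausible way to integrate events into a fixed number of frames and to build a padding mask from sequence lengths. It assumes that splitting by number means slices with roughly equal event counts and that the two frame channels correspond to the two polarities. `integrate_events_by_number` and `padded_mask` are hypothetical names, the toy events are made up, and this is not SpikingJelly's implementation.

```python
from typing import Dict, List


def integrate_events_by_number(events: Dict[str, List[int]], frames_number: int,
                               H: int, W: int) -> List[List[List[List[int]]]]:
    """Split events into `frames_number` slices with (almost) equal event counts
    and count the events per polarity and pixel in each slice.

    Returns nested lists with shape [T, 2, H, W].
    """
    n = len(events["t"])
    per_frame = max(1, n // frames_number)
    frames = [[[[0] * W for _ in range(H)] for _ in range(2)]
              for _ in range(frames_number)]
    for i in range(n):
        # The last frame absorbs any remainder.
        f = min(i // per_frame, frames_number - 1)
        frames[f][events["p"][i]][events["y"][i]][events["x"][i]] += 1
    return frames


def padded_mask(lengths: List[int]) -> List[List[int]]:
    """Return a [T, N] mask where mask[t][n] is 1 if step t is valid for sample n."""
    T = max(lengths)
    return [[1 if t < length else 0 for length in lengths] for t in range(T)]


# Toy event stream on a 2x2 sensor (values are made up for illustration).
events = {
    "t": [0, 1, 2, 3, 4, 5],
    "x": [0, 1, 1, 0, 0, 1],
    "y": [0, 0, 1, 1, 0, 1],
    "p": [1, 0, 0, 1, 1, 0],
}
frames = integrate_events_by_number(events, frames_number=2, H=2, W=2)
print(len(frames), len(frames[0]), len(frames[0][0]), len(frames[0][0][0]))  # 2 2 2 2

mask = padded_mask([6, 6, 5, 5, 7])
for row in zip(*mask):  # transpose to [N, T], as in the printed output above
    print(list(row))
```

With the lengths `[6, 6, 5, 5, 7]` from the batch above, the transposed mask has the same rows as the printed tensor: padded time steps are marked with 0 so that they can be excluded from the loss or from statistics.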

If the download links of some datasets are not available to some users, they can download the datasets from the OpenI mirror:

https://openi.pcl.ac.cn/OpenI/spikingjelly/datasets?type=0

All datasets stored on the OpenI mirror are hosted there as permitted by their licenses or with their authors' agreement.

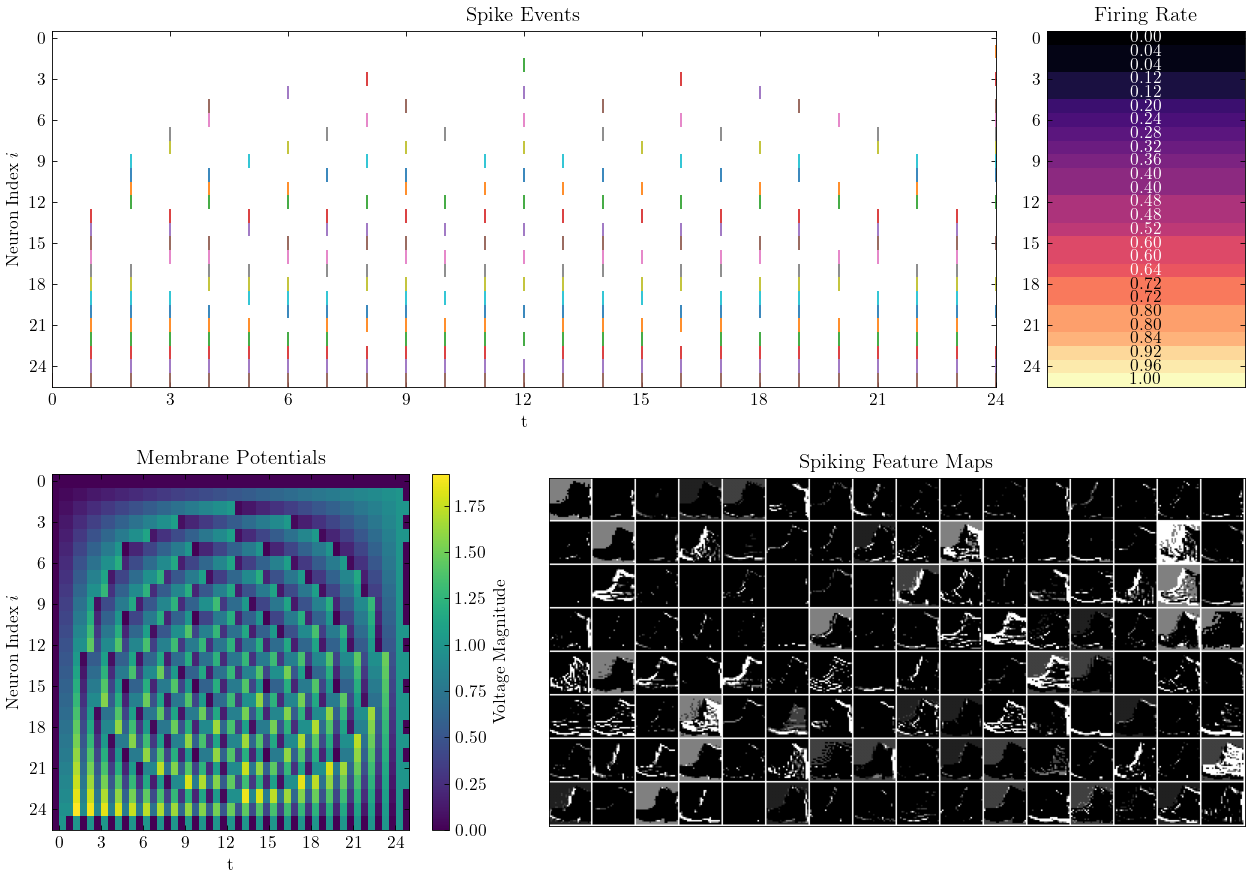

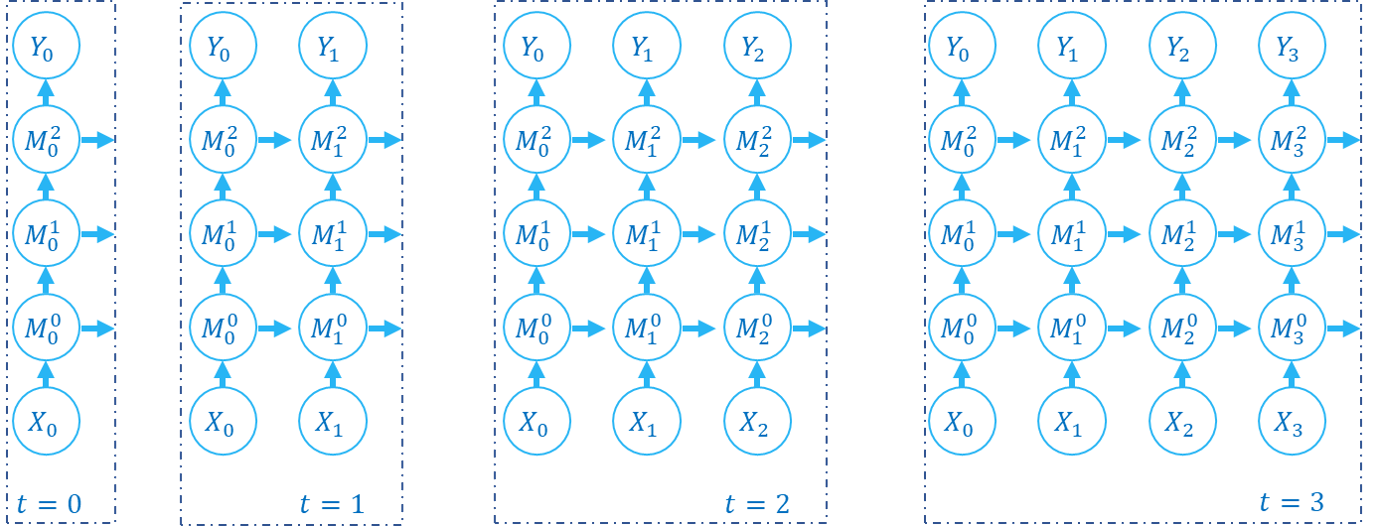

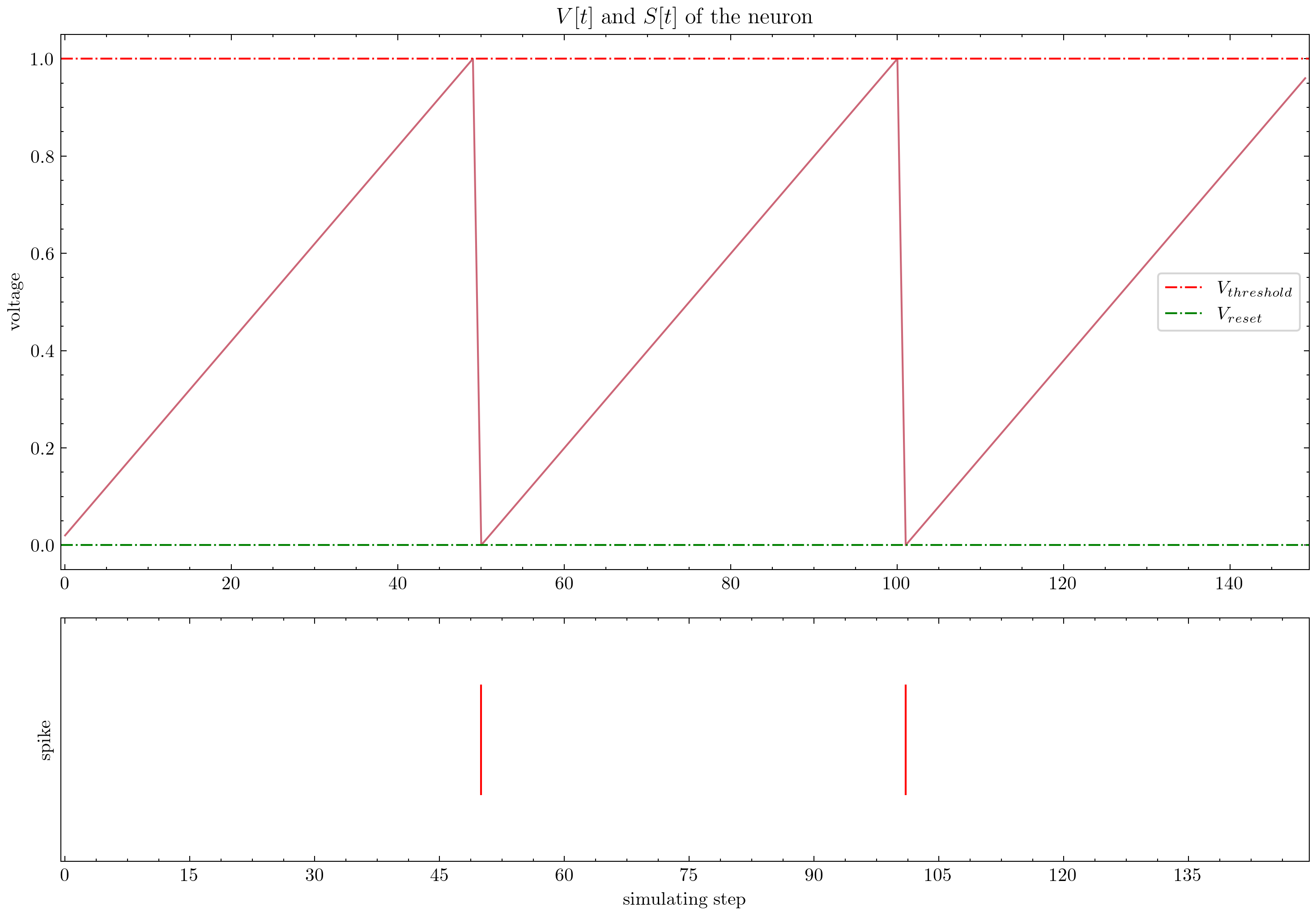

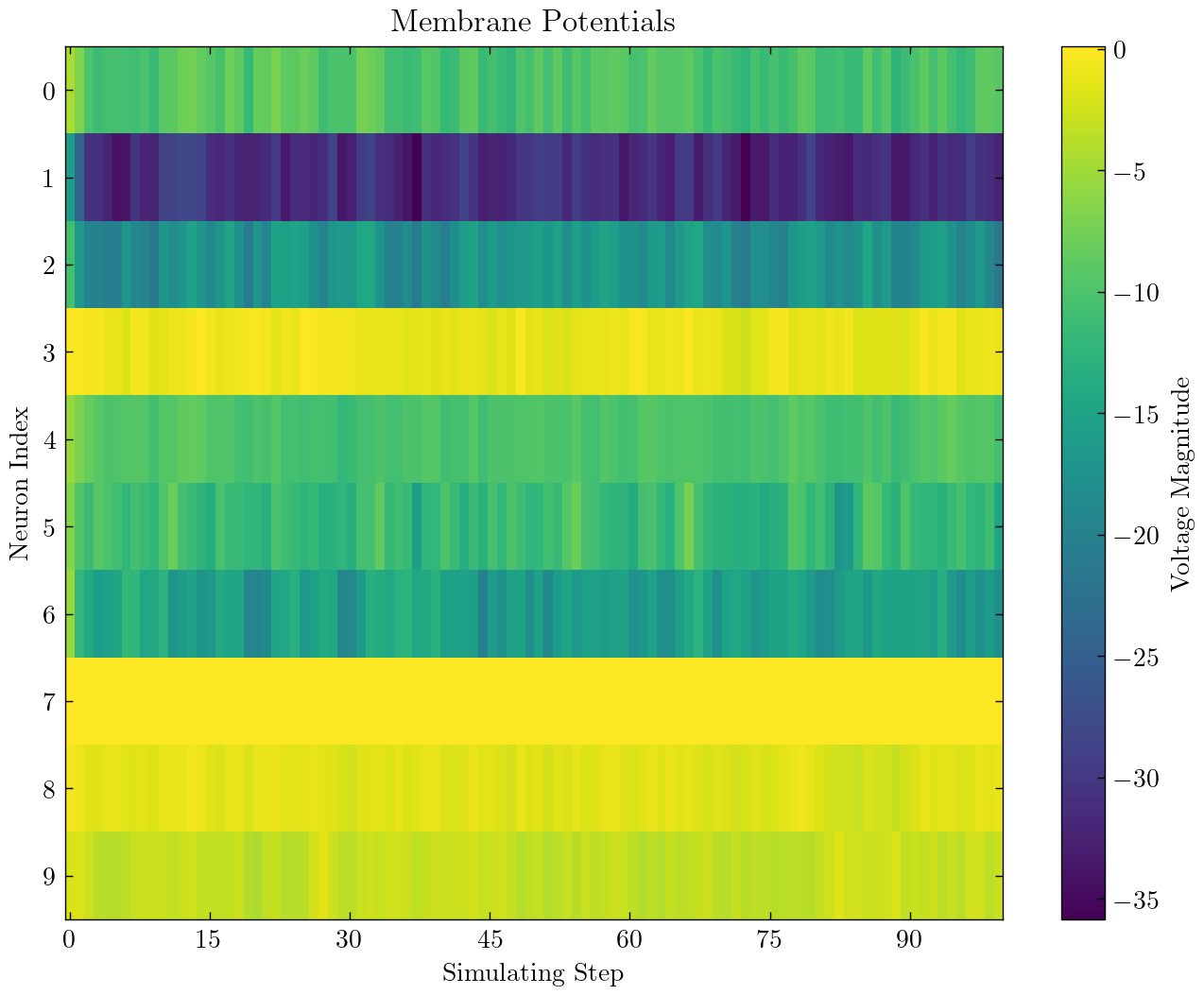

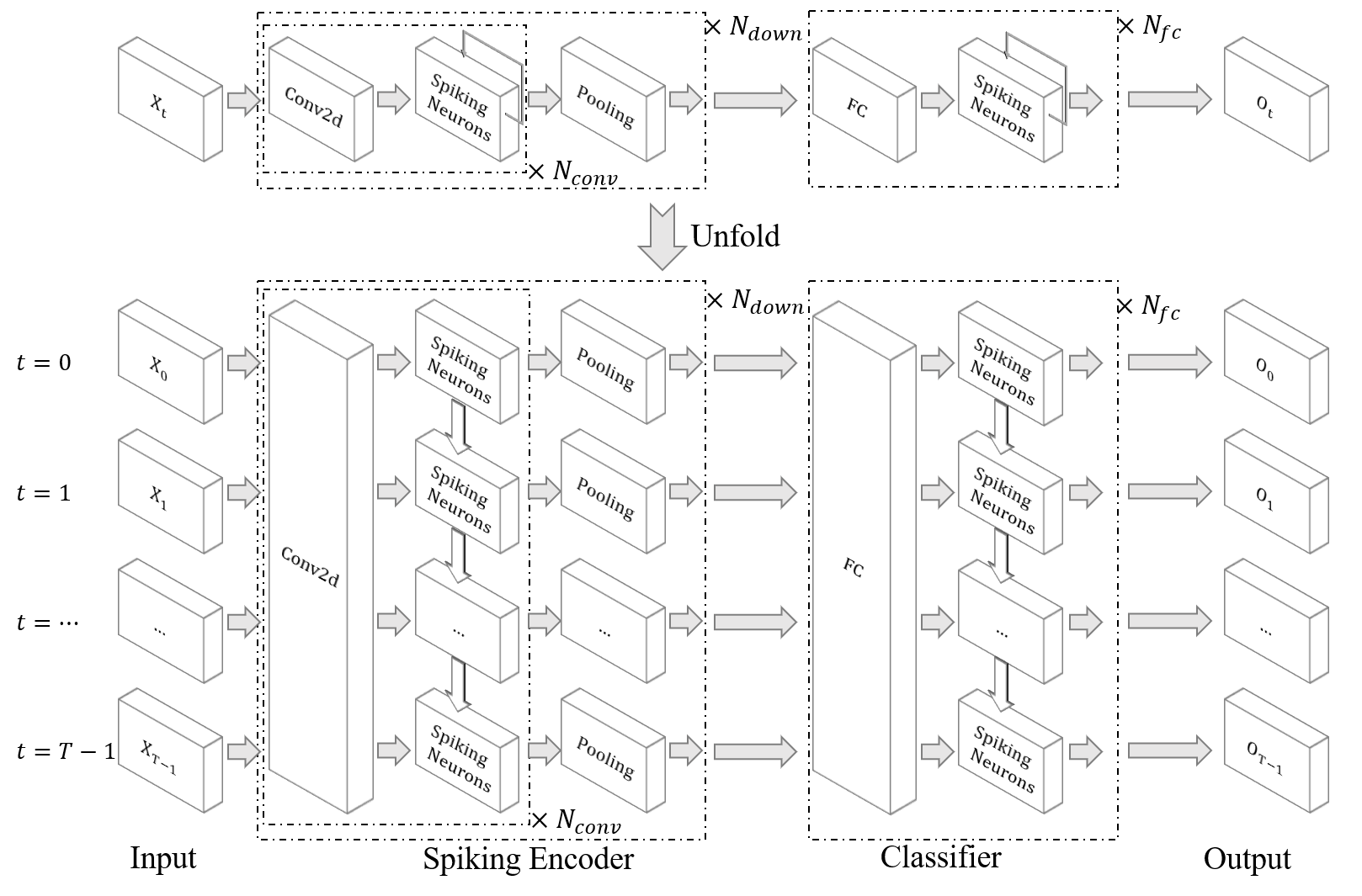

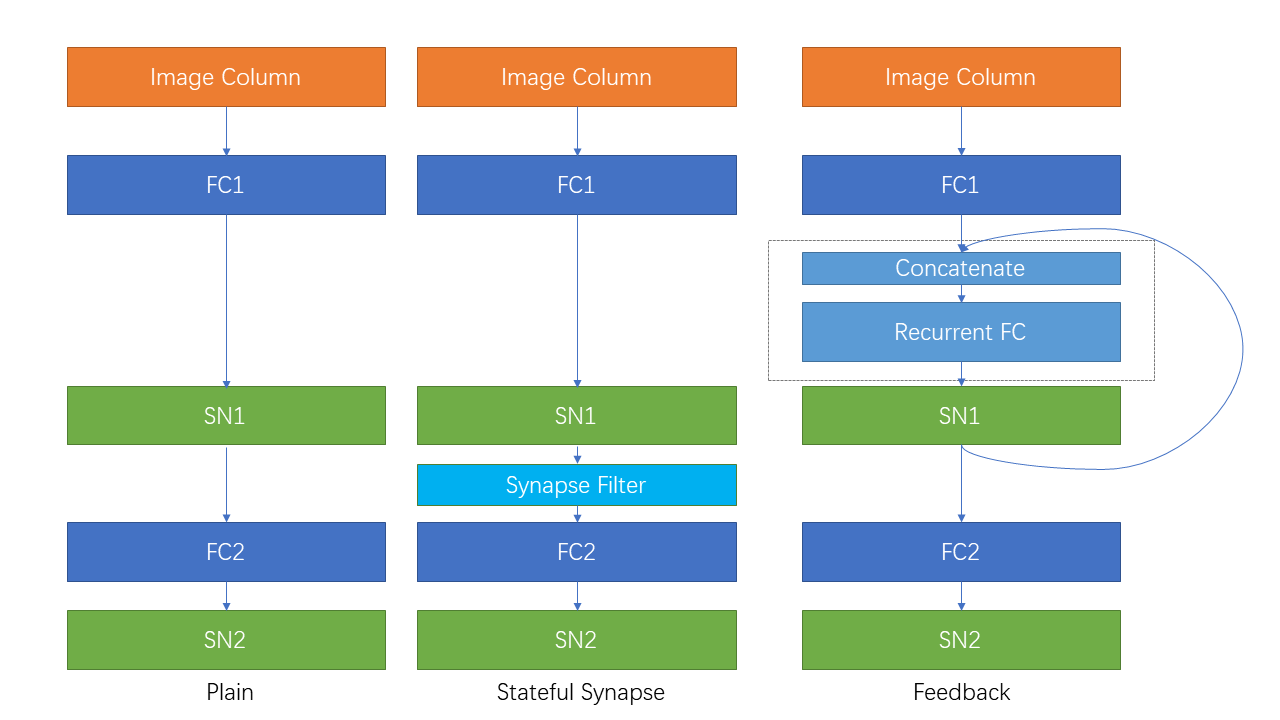

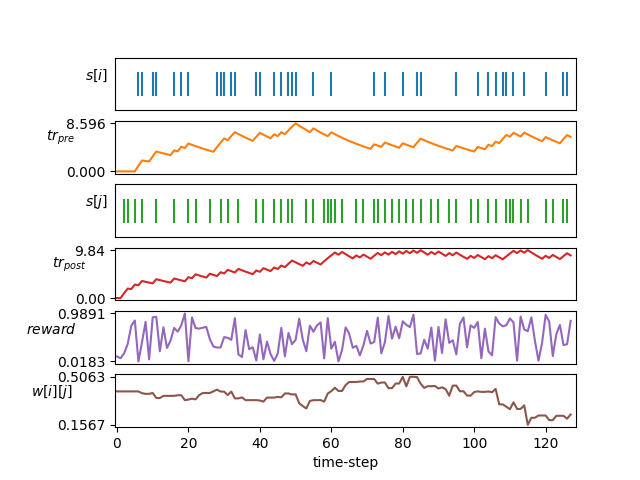

SpikingJelly provides elaborate tutorials. Here are some of them:

Other tutorials that are not listed here are also available in the documentation at https://spikingjelly.readthedocs.io.

Publications using SpikingJelly are recorded in Publications. If you use SpikingJelly in your paper, you can also add it to this table via a pull request.

If you use SpikingJelly in your work, please cite it as follows:

```bibtex
@misc{SpikingJelly,
    title = {SpikingJelly},
    author = {Fang, Wei and Chen, Yanqi and Ding, Jianhao and Chen, Ding and Yu, Zhaofei and Zhou, Huihui and Timothée Masquelier and Tian, Yonghong and other contributors},
    year = {2020},
    howpublished = {\url{https://github.com/fangwei123456/spikingjelly}},
    note = {Accessed: YYYY-MM-DD},
}
```

Note: To specify the version of the framework you are using, replace the default value YYYY-MM-DD in the note field with the date of the last change to the framework you are using, i.e. the date of the latest commit.
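Filling in the placeholder can be scripted. The sketch below is one possible way to do it, assuming the date is supplied as an ISO string; `fill_accessed_date` is a hypothetical helper and the date used is an arbitrary example, not an actual commit date.

```python
import datetime


def fill_accessed_date(bibtex: str, commit_date: str) -> str:
    """Replace the YYYY-MM-DD placeholder with an ISO date (validated first)."""
    datetime.date.fromisoformat(commit_date)  # raises ValueError if malformed
    return bibtex.replace("YYYY-MM-DD", commit_date)


note_line = "note = {Accessed: YYYY-MM-DD},"
print(fill_accessed_date(note_line, "2023-01-15"))  # note = {Accessed: 2023-01-15},
```

In a cloned repository, the date of the latest commit can be read with `git log -1 --format=%cs` and passed in as `commit_date`.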

You can read the issues to find open problems and the latest development plans. We welcome all users to join the discussion of development plans, solve issues, and send pull requests.

Not all API documents are written in both English and Chinese. We welcome users to help complete the translations (from English to Chinese, or from Chinese to English).

Multimedia Learning Group, Institute of Digital Media (NELVT), Peking University and Peng Cheng Laboratory are the main developers of SpikingJelly.

The list of developers can be found here.