By Mengde Xu*, Zheng Zhang*, Han Hu, Jianfeng Wang, Lijuan Wang, Fangyun Wei, Xiang Bai, Zicheng Liu.

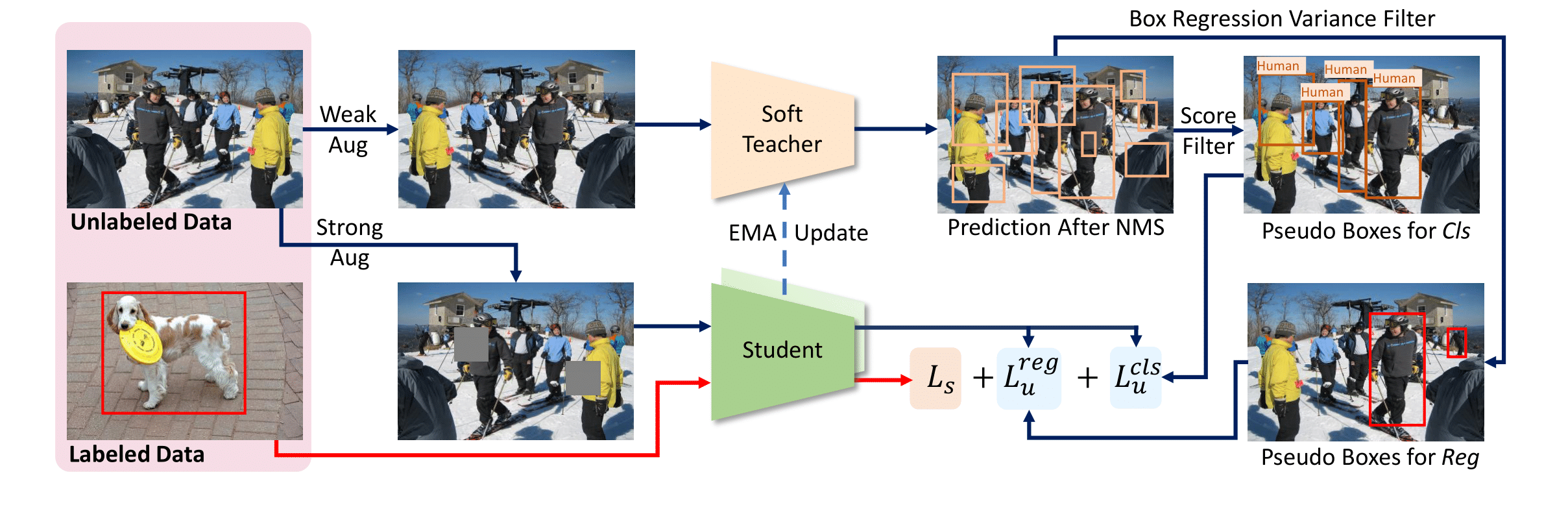

This repo is the official implementation of the ICCV 2021 paper "End-to-End Semi-Supervised Object Detection with Soft Teacher".


    @article{xu2021end,
      title={End-to-End Semi-Supervised Object Detection with Soft Teacher},
      author={Xu, Mengde and Zhang, Zheng and Hu, Han and Wang, Jianfeng and Wang, Lijuan and Wei, Fangyun and Bai, Xiang and Liu, Zicheng},
      journal={Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV)},
      year={2021}
    }

We followed STAC [1] to evaluate on 5 different data splits for each setting, and report the average performance over the 5 splits. The results are shown below:

| Method | mAP | Model Weights | Config Files |
|---|---|---|---|
| Baseline | 10.0 | - | Config |
| Ours (thr=5e-2) | 21.62 | Drive | Config |
| Ours (thr=1e-3) | 22.64 | Drive | Config |

| Method | mAP | Model Weights | Config Files |
|---|---|---|---|
| Baseline | 20.92 | - | Config |
| Ours (thr=5e-2) | 30.42 | Drive | Config |
| Ours (thr=1e-3) | 31.7 | Drive | Config |

| Method | mAP | Model Weights | Config Files |
|---|---|---|---|
| Baseline | 26.94 | - | Config |
| Ours (thr=5e-2) | 33.78 | Drive | Config |
| Ours (thr=1e-3) | 34.7 | Drive | Config |

| Model | mAP | Model Weights | Config Files |
|---|---|---|---|
| Baseline | 40.9 | - | Config |
| Ours (thr=5e-2) | 44.05 | Drive | Config |
| Ours (thr=1e-3) | 44.6 | Drive | Config |
| Ours* (thr=5e-2) | 44.5 | - | Config |
| Ours* (thr=1e-3) | 44.9 | - | Config |

| Model | mAP | Model Weights | Config Files |
|---|---|---|---|
| Baseline | 43.8 | - | Config |
| Ours* (thr=5e-2) | 46.9 | Drive | Config |
| Ours* (thr=1e-3) | 47.6 | Drive | Config |

- Ours* means we use a longer training schedule.
- `thr` indicates `model.test_cfg.rcnn.score_thr` in the config files. This inference trick was first introduced by Instant-Teaching [2].
- All models are trained on 8 V100 GPUs.
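The reported numbers are averages over five data splits per setting. A minimal sketch of that averaging step follows; the per-fold values are made-up placeholders rather than results from this repo, and `average_map` is a hypothetical helper name.

```python
from statistics import mean


def average_map(per_fold_map):
    """Return the mean mAP over data splits, rounded to two decimals."""
    if len(per_fold_map) == 0:
        raise ValueError("need at least one fold result")
    return round(mean(per_fold_map), 2)


# Placeholder values for illustration only (not measured results).
fold_results = [30.0, 31.0, 30.5, 29.5, 31.5]
print(average_map(fold_results))
```

In practice the per-fold values would be read from the evaluation logs of each of the five runs.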

Requirements:

- `Ubuntu 16.04`
- `Anaconda3` with `python=3.6`
- `Pytorch=1.9.0`
- `mmdetection=2.16.0+fe46ffe`
- `mmcv=1.3.9`
- `wandb=0.10.31`

Notes:

- We use wandb for visualization. If you don't want to use it, comment out lines `273-284` in `configs/soft_teacher/base.py`.
- The project should be compatible with the latest version of `mmdetection`. To switch to the same `mmdetection` version as ours, run `cd thirdparty/mmdetection && git checkout v2.16.0`.

Installation:

    make install

- Download the COCO dataset

- Execute the following command to generate data set splits:

      # YOUR_DATA should be a directory that contains the COCO dataset.
      # For example:
      # YOUR_DATA/
      #   coco/
      #     train2017/
      #     val2017/
      #     unlabeled2017/
      #     annotations/
      ln -s ${YOUR_DATA} data
      bash tools/dataset/prepare_coco_data.sh conduct

For concrete instructions on what should be downloaded, please refer to lines 11-24 of `tools/dataset/prepare_coco_data.sh`.
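Before generating the splits, it can help to confirm that the directory layout matches the one described above. The snippet below is a small sketch of such a check, assuming the `data` symlink has already been created; `missing_coco_dirs` is a hypothetical helper and not part of this repo.

```python
from pathlib import Path

# Sub-directories named in the layout comment above.
EXPECTED_SUBDIRS = ("train2017", "val2017", "unlabeled2017", "annotations")


def missing_coco_dirs(data_root):
    """Return the expected sub-directories that are absent under data_root/coco."""
    coco_root = Path(data_root) / "coco"
    return [name for name in EXPECTED_SUBDIRS if not (coco_root / name).is_dir()]


if __name__ == "__main__":
    missing = missing_coco_dirs("data")
    if missing:
        print("Missing directories under data/coco:", ", ".join(missing))
    else:
        print("COCO layout looks complete.")
```

The check only looks at directory names; it does not verify that the images or annotation files inside them are complete.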

- To train a model in the partially labeled data setting:

      # JOB_TYPE: 'baseline' or 'semi', decides which kind of job to run
      # PERCENT_LABELED_DATA: 1, 5, 10. The percentage of labeled COCO data in the whole training dataset.
      # GPU_NUM: number of GPUs to run the job
      for FOLD in 1 2 3 4 5;
      do
        bash tools/dist_train_partially.sh <JOB_TYPE> ${FOLD} <PERCENT_LABELED_DATA> <GPU_NUM>
      done

  For example, we could run the following script to train our model on 10% labeled data with 8 GPUs:

      for FOLD in 1 2 3 4 5;
      do
        bash tools/dist_train_partially.sh semi ${FOLD} 10 8
      done

- To train a model in the fully labeled data setting:

      bash tools/dist_train.sh <CONFIG_FILE_PATH> <NUM_GPUS>

  For example, to train our R50 model with 8 GPUs:

      bash tools/dist_train.sh configs/soft_teacher/soft_teacher_faster_rcnn_r50_caffe_fpn_coco_full_720k.py 8

- To train a model on a new dataset:

  The core idea is to convert the new dataset to COCO format. Details can be found in the "adding new dataset" guide.
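To make the conversion target concrete, here is a minimal sketch of a COCO-style detection annotation file built in plain Python. The helper name `make_coco_dict`, the file name, and the class name are illustrative assumptions; a real converter would read them from the new dataset's own annotation files.

```python
import json


def make_coco_dict(images, boxes, class_names):
    """Build a minimal COCO-style detection annotation dictionary.

    images: list of (file_name, width, height)
    boxes: list of (image_index, class_index, x, y, w, h), (x, y) is the top-left corner
    class_names: list of category names
    """
    categories = [{"id": i + 1, "name": n} for i, n in enumerate(class_names)]
    image_entries = [
        {"id": i + 1, "file_name": f, "width": w, "height": h}
        for i, (f, w, h) in enumerate(images)
    ]
    annotations = [
        {
            "id": k + 1,
            "image_id": img_idx + 1,
            "category_id": cls_idx + 1,
            "bbox": [x, y, w, h],  # COCO boxes are [x, y, width, height]
            "area": w * h,
            "iscrowd": 0,
        }
        for k, (img_idx, cls_idx, x, y, w, h) in enumerate(boxes)
    ]
    return {"images": image_entries, "annotations": annotations, "categories": categories}


# Hypothetical one-image, one-box dataset used only to show the structure.
coco = make_coco_dict(
    images=[("0001.jpg", 640, 480)],
    boxes=[(0, 0, 10, 20, 100, 50)],
    class_names=["widget"],
)
print(json.dumps(coco, indent=2))
```

The resulting dictionary can be written to a `.json` file and referenced from a config in place of the COCO annotation files.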

To evaluate a trained model:

    bash tools/dist_test.sh <CONFIG_FILE_PATH> <CHECKPOINT_PATH> <NUM_GPUS> --eval bbox --cfg-options model.test_cfg.rcnn.score_thr=<THR>
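The `--cfg-options` argument in the command above overrides a nested config entry by its dotted path. The sketch below illustrates that idea in plain Python; it is not mmcv's actual implementation, `set_by_dotted_key` is a hypothetical helper, and the toy config values are placeholders.

```python
def set_by_dotted_key(cfg, dotted_key, value):
    """Set cfg[a][b][c] = value for dotted_key 'a.b.c', creating nested dicts as needed."""
    *parents, leaf = dotted_key.split(".")
    node = cfg
    for name in parents:
        node = node.setdefault(name, {})
    node[leaf] = value
    return cfg


# Toy config fragment; the values here are placeholders, not the repo's settings.
cfg = {"model": {"test_cfg": {"rcnn": {"score_thr": 0.05, "max_per_img": 100}}}}
set_by_dotted_key(cfg, "model.test_cfg.rcnn.score_thr", 1e-3)
print(cfg["model"]["test_cfg"]["rcnn"])
```

Only the addressed key changes; sibling entries in the same nested dictionary are left untouched.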

To run inference with a trained model and visualize the detection results:

    # [IMAGE_FILE_PATH]: the path of your image file in the local file system
    # [CONFIG_FILE]: the path of a config file
    # [CHECKPOINT_PATH]: the path of a trained model that matches the provided config file
    # [OUTPUT_PATH]: the directory to save the detection results
    python demo/image_demo.py [IMAGE_FILE_PATH] [CONFIG_FILE] [CHECKPOINT_PATH] --output [OUTPUT_PATH]

For example:

- Inference on a single image with the provided `R50` model:

      python demo/image_demo.py /tmp/tmp.png configs/soft_teacher/soft_teacher_faster_rcnn_r50_caffe_fpn_coco_full_720k.py work_dirs/downloaded.model --output work_dirs/

  After the program completes, an image with the same name as the input will be saved to `work_dirs`.

- Inference on many images with the provided `R50` model:

      python demo/image_demo.py '/tmp/*.jpg' configs/soft_teacher/soft_teacher_faster_rcnn_r50_caffe_fpn_coco_full_720k.py work_dirs/downloaded.model --output work_dirs/

[1] A Simple Semi-Supervised Learning Framework for Object Detection

[2] Instant-Teaching: An End-to-End Semi-Supervised Object Detection Framework