Project

- Mamba-UNet -> [Paper Link]

- Semi-Mamba-UNet -> [Paper Link]

- Weak-Mamba-UNet -> [Paper Link]

- VMambaMorph -> [Paper Link]

- Survey on Visual Mamba -> [Paper Link]

Code

- Code for Mamba-UNet -> [Link]

- Code for Semi-Mamba-UNet -> [Link]

- Code for Weak-Mamba-UNet -> [Link]

- Code for VMambaMorph -> [Link]

- Paper List for Visual Mamba -> [Link]

More Experiments

- Dataset of ACDC MRI Cardiac MICCAI Challenge -> [Official] [Google Drive] [Baidu Netdisk]

- Dataset of Synapse CT Abdomen MICCAI Challenge -> [Official] [Google Drive] [Baidu Netdisk]

- Dataset of PROMISE12 Prostate MR MICCAI Challenge -> [Official] [Google Drive] [Baidu Netdisk]

- Dataset of GLAS -> [Official] [Google Drive] [Baidu Netdisk]

- Dataset of BUSI -> [Official] [Google Drive] [Baidu Netdisk]

- Dataset of 2018DSB -> [Official] [Google Drive] [Baidu Netdisk]

- Dataset of CVC-ClinicDB -> [Official] [Google Drive] [Baidu Netdisk] -> [Code Link]

- Dataset of Kvasir-SEG -> [Official] [Google Drive] [Baidu Netdisk]

- Dataset of ISIC2016 -> [Official] [Google Drive] [Baidu Netdisk]

- Dataset of PH2 -> [Official] [Google Drive] [Baidu Netdisk]

- Dataset of TotalSegmentator -> [Official] [Google Drive]

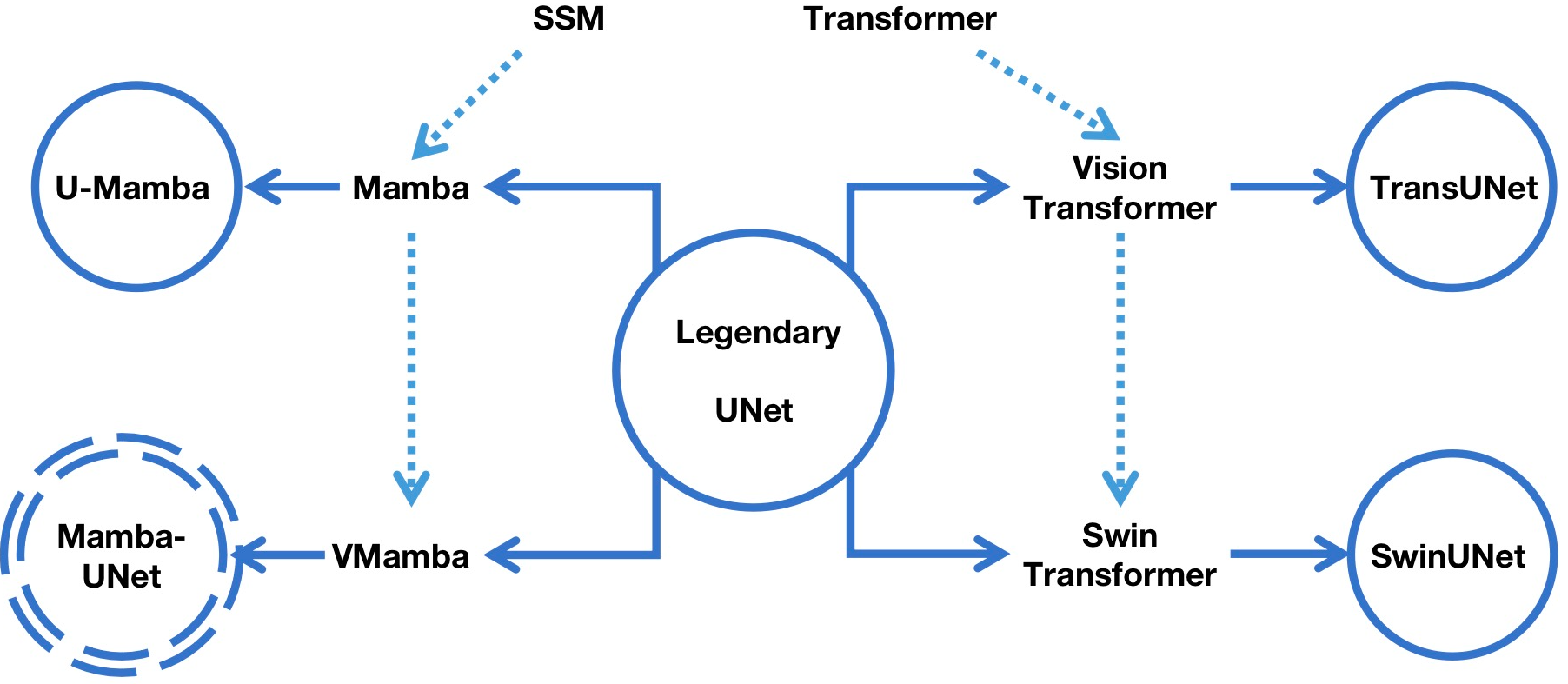

Mamba-UNet: Unet-like Pure Visual Mamba for Medical Image Segmentation

The position of Mamba-UNet

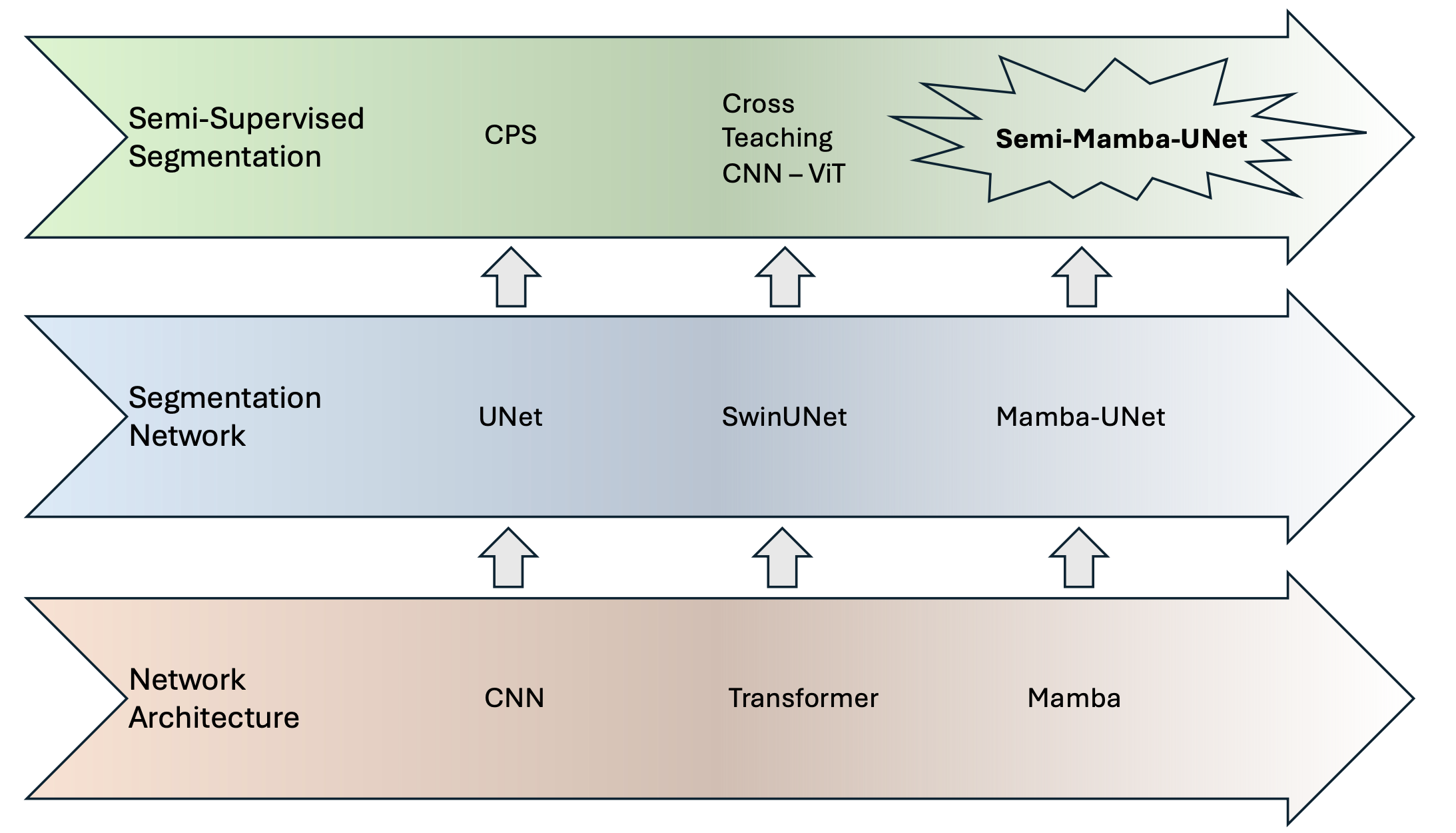

Semi-Mamba-UNet: Pixel-Level Contrastive Cross-Supervised Visual Mamba-based UNet for Semi-Supervised Medical Image Segmentation

The position of Semi-Mamba-UNet

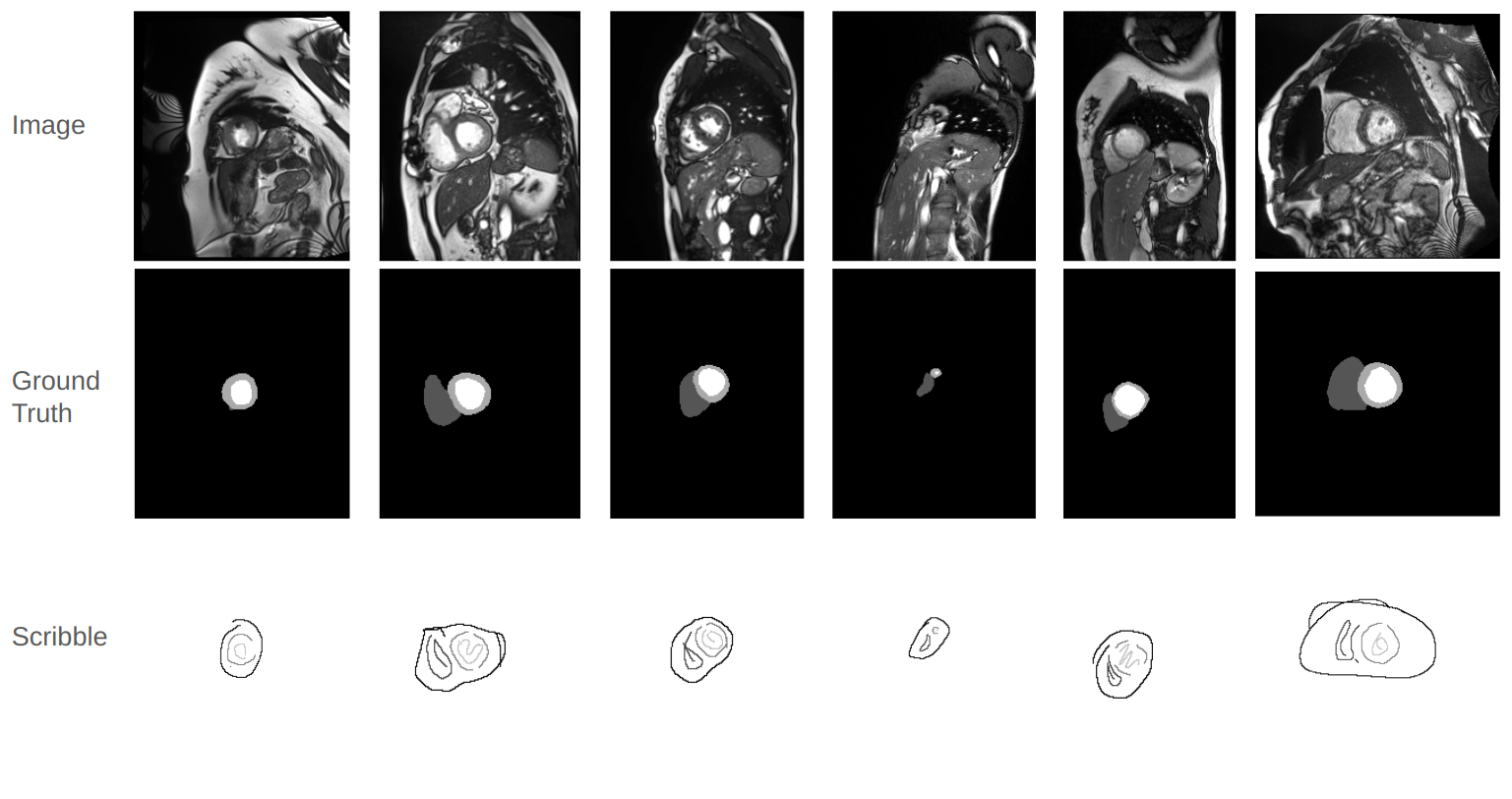

Weak-Mamba-UNet: Visual Mamba Makes CNN and ViT Work Better for Scribble-based Medical Image Segmentation

The introduction of Scribble Annotation

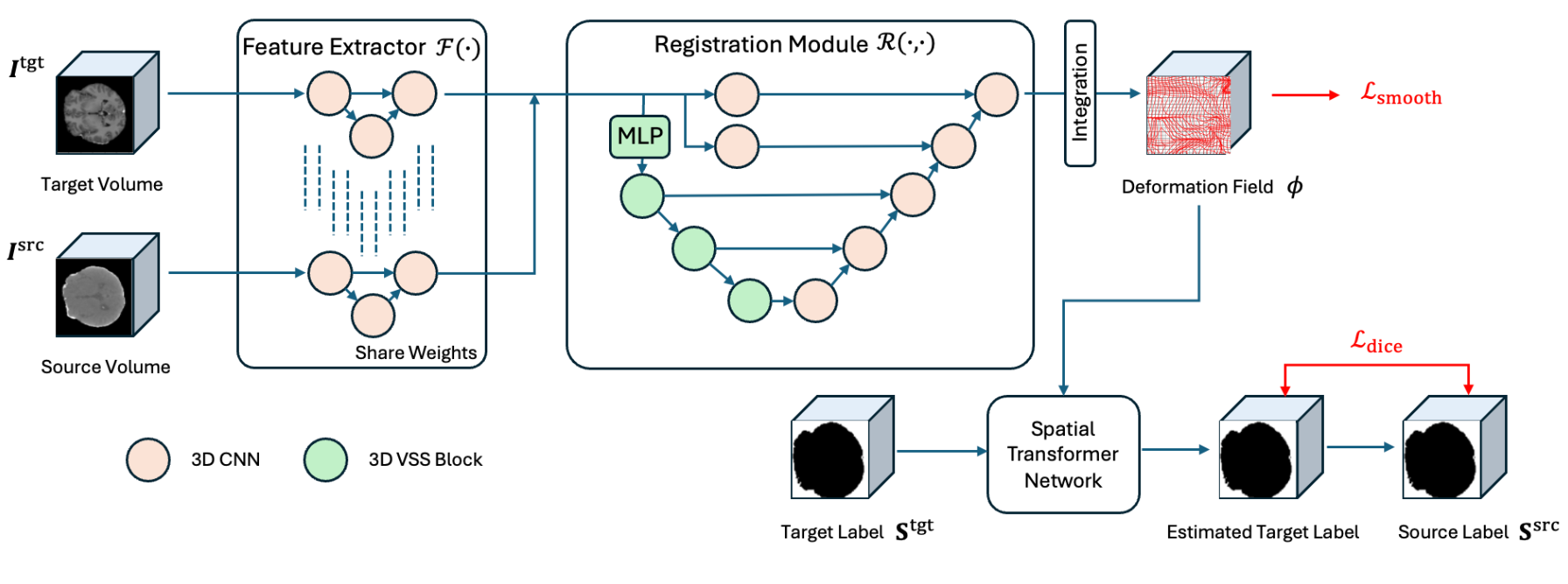

VMambaMorph: a Visual Mamba-based Framework with Cross-Scan Module for Deformable 3D Image Registration

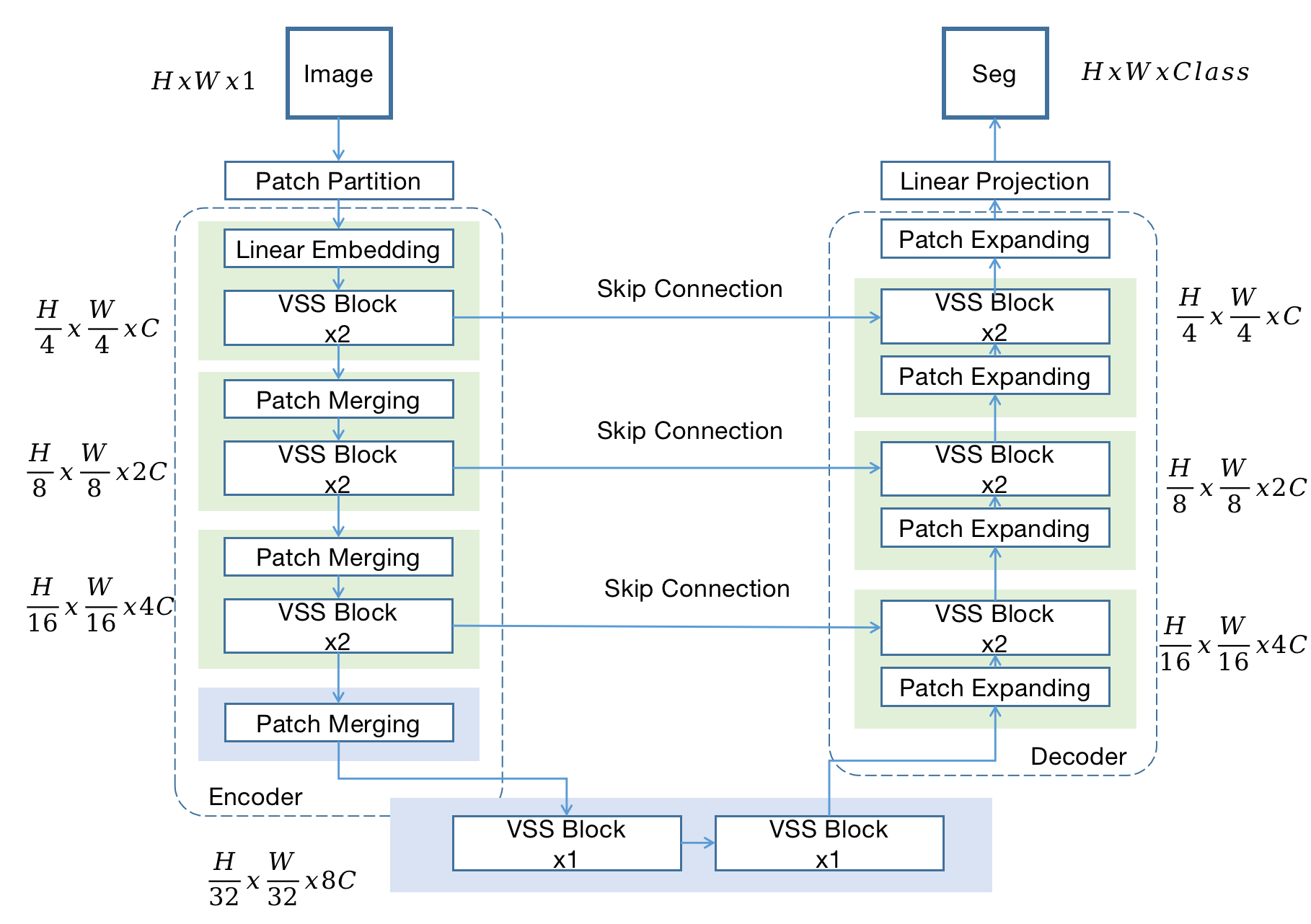

Mamba-UNet

Mamba-UNet

VMambaMorph

Mamba-UNet: All baseline methods and datasets use the same hyper-parameter settings (10,000 iterations, learning rate, optimizer, etc.).

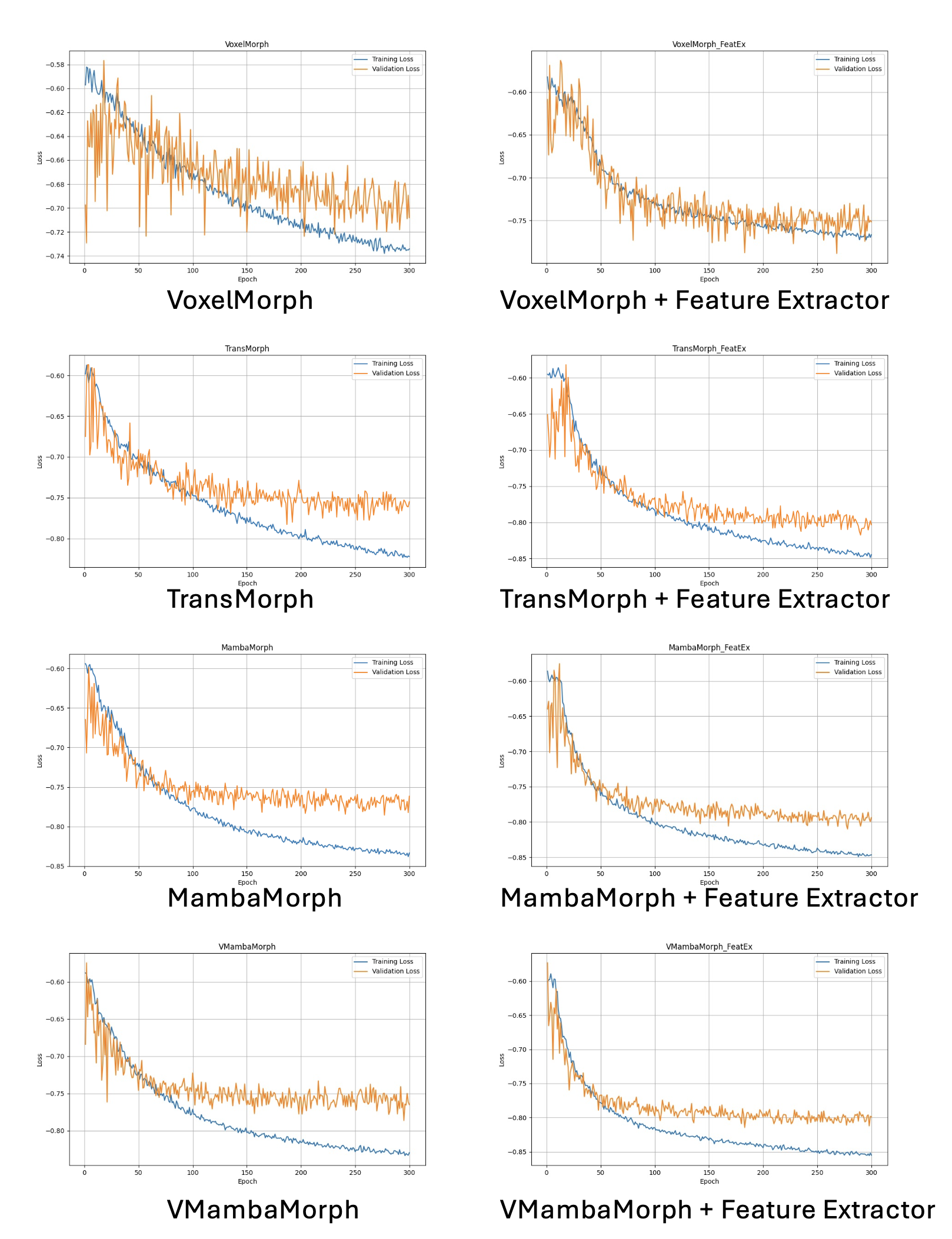

VMambaMorph: All baseline methods use the same hyper-parameter settings (300 epochs, learning rate, optimizer, etc.).

- PyTorch, MONAI

- Some basic Python packages: TorchIO, NumPy, scikit-image, SimpleITK, SciPy, MedPy, nibabel, tqdm, etc.
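Before installing the Mamba components, it can help to confirm that the packages listed above are importable. The following is a minimal sketch, not part of the repository: the function name `missing_modules` is hypothetical, and the import names (for example `skimage` for scikit-image) are the usual ones for these packages.

```python
from importlib.util import find_spec

# Usual top-level import names for the packages listed above (assumption:
# the installed distributions expose these standard module names).
REQUIRED_MODULES = [
    "torch", "monai", "torchio", "numpy", "skimage",
    "SimpleITK", "scipy", "medpy", "nibabel", "tqdm",
]


def missing_modules(names):
    """Return the module names that cannot be found in this environment."""
    return [name for name in names if find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules(REQUIRED_MODULES)
    if missing:
        print("Missing modules:", ", ".join(missing))
    else:
        print("All listed modules are importable.")
```

The check only looks the modules up without importing them, so it runs quickly and does not need a GPU.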

cd casual-conv1d

python setup.py install

cd mamba

python setup.py install

- Clone the repo:

git clone https://github.com/ziyangwang007/Mamba-UNet.git

cd Mamba-UNet

- Download Pretrained Model

Download the pretrained model for SwinUNet through [Google Drive] and the pretrained model for Mamba-UNet through [Google Drive], and save both in ../code/pretrained_ckpt.

- Download Dataset

3.1 Download ACDC for semi-supervised and fully supervised learning through [Google Drive] or [Baidu Netdisk] (passcode: 'kafc'), and save it in the ../data/ACDC folder.

3.2 Download ACDC for weakly supervised learning through [Google Drive] or [Baidu Netdisk] (passcode: 'rwv2'), and save it in the ../data/ACDC folder.

3.3 Download Synapse for semi-supervised and fully supervised learning through [Google Drive] or [Baidu Netdisk] (passcode: 'rwf7'), and save it in the ../data/Synapse folder.

3.4 Download PROMISE12 for semi-supervised and fully supervised learning through [Google Drive] or [Baidu Netdisk] (passcode: '6dss'), and save it in the ../data/Prostate folder.
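Once the downloads are in place, a quick layout check can catch a misplaced folder before training starts. This is a minimal sketch under one assumption: the `../data/...` and `../code/pretrained_ckpt` paths above are relative to the `code` directory, so they resolve to `data/...` and `code/pretrained_ckpt` under the repository root. The helper name `missing_dirs` is hypothetical.

```python
from pathlib import Path

# Folders named in the download steps, expressed relative to the repository
# root (assumption: the "../" paths above are relative to the code/ directory).
EXPECTED_DIRS = [
    "data/ACDC",
    "data/Synapse",
    "data/Prostate",
    "code/pretrained_ckpt",
]


def missing_dirs(project_root, relative_dirs=EXPECTED_DIRS):
    """Return the expected folders that do not exist under project_root."""
    root = Path(project_root)
    return [rel for rel in relative_dirs if not (root / rel).is_dir()]


if __name__ == "__main__":
    for rel in missing_dirs("."):
        print(f"Missing folder: {rel}")
```

Run it from the repository root; it only checks that the folders exist, not that their contents are complete.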

cd code

- Train 2D UNet

python train_fully_supervised_2D.py --root_path ../data/ACDC --exp ACDC/unet --model unet --max_iterations 10000 --batch_size 24 --num_classes 4

python train_fully_supervised_2D.py --root_path ../data/Prostate --exp Prostate/unet --model unet --max_iterations 10000 --batch_size 24 --num_classes 2

- Train SwinUNet

python train_fully_supervised_2D_ViT.py --root_path ../data/ACDC --exp ACDC/swinunet --model swinunet --max_iterations 10000 --batch_size 24 --num_classes 4

python train_fully_supervised_2D_ViT.py --root_path ../data/Prostate --exp Prostate/swinunet --model swinunet --max_iterations 10000 --batch_size 24 --num_classes 2

- Train Mamba-UNet

python train_fully_supervised_2D_VIM.py --root_path ../data/ACDC --exp ACDC/VIM --model mambaunet --max_iterations 10000 --batch_size 24 --num_classes 4

python train_fully_supervised_2D_VIM.py --root_path ../data/Prostate --exp Prostate/VIM --model mambaunet --max_iterations 10000 --batch_size 24 --num_classes 2

- Train Semi-Mamba-UNet with 3 cases as labeled data

python train_Semi_Mamba_UNet.py --root_path ../data/ACDC --exp ACDC/Semi_Mamba_UNet --max_iterations 30000 --labeled_num 3 --batch_size 16 --num_classes 4

- Train Semi-Mamba-UNet with 7 cases as labeled data

python train_Semi_Mamba_UNet.py --root_path ../data/ACDC --exp ACDC/Semi_Mamba_UNet --max_iterations 30000 --labeled_num 7 --batch_size 16 --num_classes 4

- Train Semi-Mamba-UNet with 8 cases as labeled data

python train_Semi_Mamba_UNet.py --root_path ../data/Prostate --exp Prostate/Semi_Mamba_UNet --max_iterations 30000 --labeled_num 8 --batch_size 16 --num_classes 2

- Train Semi-Mamba-UNet with 12 cases as labeled data

python train_Semi_Mamba_UNet.py --root_path ../data/Prostate --exp Prostate/Semi_Mamba_UNet --max_iterations 30000 --labeled_num 12 --batch_size 16 --num_classes 2

- Train UNet with Mean Teacher with 3 cases as labeled data

python train_mean_teacher_2D.py --root_path ../data/ACDC --model unet --exp ACDC/Mean_Teacher --max_iterations 30000 --labeled_num 3 --batch_size 16

- Train SwinUNet with Mean Teacher with 3 cases as labeled data

python train_mean_teacher_ViT.py --root_path ../data/ACDC --model swinunet --exp ACDC/Mean_Teacher_ViT --max_iterations 30000 --labeled_num 3 --batch_size 16

- Train UNet with Mean Teacher with 7 cases as labeled data

python train_mean_teacher_2D.py --root_path ../data/ACDC --model unet --exp ACDC/Mean_Teacher --max_iterations 30000 --labeled_num 7 --batch_size 16 --num_classes 4

- Train SwinUNet with Mean Teacher with 7 cases as labeled data

python train_mean_teacher_ViT.py --root_path ../data/ACDC --model swinunet --exp ACDC/Mean_Teacher_ViT --max_iterations 30000 --labeled_num 7 --batch_size 16 --num_classes 4

- Train UNet with Mean Teacher with 8 cases as labeled data

python train_mean_teacher_2D.py --root_path ../data/Prostate --model unet --exp Prostate/Mean_Teacher --max_iterations 30000 --labeled_num 8 --batch_size 16 --num_classes 2

- Train SwinUNet with Mean Teacher with 8 cases as labeled data

python train_mean_teacher_ViT.py --root_path ../data/Prostate --model swinunet --exp Prostate/Mean_Teacher_ViT --max_iterations 30000 --labeled_num 8 --batch_size 16 --num_classes 2

- Train UNet with Mean Teacher with 12 cases as labeled data

python train_mean_teacher_2D.py --root_path ../data/Prostate --model unet --exp Prostate/Mean_Teacher --max_iterations 30000 --labeled_num 12 --batch_size 16 --num_classes 2

- Train SwinUNet with Mean Teacher with 12 cases as labeled data

python train_mean_teacher_ViT.py --root_path ../data/Prostate --model swinunet --exp Prostate/Mean_Teacher_ViT --max_iterations 30000 --labeled_num 12 --batch_size 16 --num_classes 2

- Train UNet with Uncertainty Aware Mean Teacher with 3 cases as labeled data

python train_uncertainty_aware_mean_teacher_2D.py --root_path ../data/ACDC --model unet --exp ACDC/Uncertainty_Aware_Mean_Teacher --max_iterations 30000 --labeled_num 3 --batch_size 16 --num_classes 4

- Train SwinUNet with Uncertainty Aware Mean Teacher with 3 cases as labeled data

python train_uncertainty_aware_mean_teacher_2D_ViT.py --root_path ../data/ACDC --model swinunet --exp ACDC/Uncertainty_Aware_Mean_Teacher_ViT --max_iterations 30000 --labeled_num 3 --batch_size 16 --num_classes 4

- Train UNet with Uncertainty Aware Mean Teacher with 7 cases as labeled data

python train_uncertainty_aware_mean_teacher_2D.py --root_path ../data/ACDC --model unet --exp ACDC/Uncertainty_Aware_Mean_Teacher --max_iterations 30000 --labeled_num 7 --batch_size 16 --num_classes 4

- Train SwinUNet with Uncertainty Aware Mean Teacher with 7 cases as labeled data

python train_uncertainty_aware_mean_teacher_2D_ViT.py --root_path ../data/ACDC --model swinunet --exp ACDC/Uncertainty_Aware_Mean_Teacher_ViT --max_iterations 30000 --labeled_num 7 --batch_size 16 --num_classes 4

- Test

python test_2D_fully.py --root_path ../data/ACDC --exp ACDC/xxx --model xxx

- For Image Registration

The training and testing code for VoxelMorph, TransMorph, MambaMorph, and VMambaMorph is available through [Link].

- For Weakly Supervised Image Segmentation

The training and testing code for pCE and Weak-Mamba-UNet is available through [Link].
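The four Semi-Mamba-UNet training commands listed earlier differ only in dataset, class count, and number of labeled cases. As a minimal sketch, they could be generated from a small settings table rather than typed by hand. The helper names `build_train_command` and `semi_mamba_unet_commands` are hypothetical and not part of the repository; the script name, flags, and values are copied from the commands above.

```python
import shlex

# Dataset-specific values taken from the Semi-Mamba-UNet commands above.
SETTINGS = {
    "ACDC": {"num_classes": 4, "labeled": (3, 7)},
    "Prostate": {"num_classes": 2, "labeled": (8, 12)},
}


def build_train_command(script, dataset, exp_name, labeled_num,
                        max_iterations=30000, batch_size=16):
    """Assemble one training command as an argument list (hypothetical helper)."""
    return [
        "python", script,
        "--root_path", f"../data/{dataset}",
        "--exp", f"{dataset}/{exp_name}",
        "--max_iterations", str(max_iterations),
        "--labeled_num", str(labeled_num),
        "--batch_size", str(batch_size),
        "--num_classes", str(SETTINGS[dataset]["num_classes"]),
    ]


def semi_mamba_unet_commands():
    """Return the four Semi-Mamba-UNet commands in the order listed above."""
    return [
        build_train_command("train_Semi_Mamba_UNet.py", dataset,
                            "Semi_Mamba_UNet", labeled_num)
        for dataset, cfg in SETTINGS.items()
        for labeled_num in cfg["labeled"]
    ]


if __name__ == "__main__":
    for command in semi_mamba_unet_commands():
        print(shlex.join(command))
```

Printing the commands first, instead of launching them directly, makes it easy to compare them with the README before committing GPU time.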

- Q: Performance: My results are slightly lower than your reported results.

A: Please do not worry. Performance depends on many factors, such as how the data is split, how the network is initialized, how you write the evaluation code for Dice, Accuracy, Precision, Sensitivity, and Specificity, and even the type of GPU used. What I want to emphasize is that you should keep your hyper-parameter settings fixed and test some other baseline methods under the same settings (a fair comparison). If method A has a lower/higher Dice coefficient than the reported number, it is likely that methods B and C will also have lower/higher Dice coefficients than the numbers reported in the paper.
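To illustrate how evaluation code can shift the numbers, here is a minimal sketch of the five metrics on a pair of binary masks. It is not the evaluation code used in this repository. The function name `binary_metrics` is hypothetical, and the choice to return 1.0 when a denominator is zero (for example, Dice on two empty masks) is an assumption; other implementations return 0.0 or skip such cases, which alone can change an averaged score.

```python
import numpy as np


def binary_metrics(pred, target, empty_value=1.0):
    """Compute Dice, accuracy, precision, sensitivity, and specificity.

    pred and target are arrays of the same shape; non-zero means foreground.
    empty_value is returned for any metric whose denominator is zero
    (assumption: this convention differs between codebases).
    """
    pred = np.asarray(pred).astype(bool)
    target = np.asarray(target).astype(bool)

    # Confusion-matrix counts over all pixels.
    tp = int(np.logical_and(pred, target).sum())
    fp = int(np.logical_and(pred, ~target).sum())
    fn = int(np.logical_and(~pred, target).sum())
    tn = int(np.logical_and(~pred, ~target).sum())

    def ratio(numerator, denominator):
        return numerator / denominator if denominator > 0 else empty_value

    return {
        "dice": ratio(2 * tp, 2 * tp + fp + fn),
        "accuracy": ratio(tp + tn, tp + tn + fp + fn),
        "precision": ratio(tp, tp + fp),
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
    }


if __name__ == "__main__":
    prediction = [[1, 1], [0, 0]]
    ground_truth = [[1, 0], [0, 0]]
    print(binary_metrics(prediction, ground_truth))
```

Whether metrics are computed per slice, per volume, or per class and then averaged is another choice that this sketch leaves to the caller.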

- Q: Network Block: What is the network block you used? What is the difference between the various Mamba-XXXNet models?

A: I understand that there are many Mamba-related papers now, such as Vision Mamba, Visual Mamba, SegMamba... In this project, I integrate VMamba into a U-shaped network. The reference for VMamba is: Liu, Yue, et al. "Vmamba: Visual state space model." arXiv preprint arXiv:2401.10166 (2024).

- Q: Concurrent Work: I found similar work about the integration of Mamba into UNet.

A: I am glad to see similar work, and I acknowledge that it exists. Mamba is a novel architecture, and it is obviously valuable to explore integrating Mamba with segmentation, detection, registration, etc. I am pleased that we all find Mamba efficient in some cases. This GitHub repository was developed on the 6th of February 2024, and I would not be surprised if people have proposed similar work from the end of 2023 onward. Also, I have only tested a limited number of baseline methods with a single dataset. Please make sure to read other related work around Mamba/Visual Mamba with UNet/VGG/ResNet etc.

- Q: Other Dataset: I want to try Mamba-UNet with another segmentation dataset. Do you have any suggestions?

A: I recommend starting with UNet, as it often proves to be the most efficient architecture. Based on my experience with various segmentation datasets, UNet can outperform alternatives like TransUNet and SwinUNet, so it should be your first choice. Transformer-based UNet variants, which depend on pretraining, have shown promising results, especially with larger datasets, although such extensive datasets are uncommon in medical imaging. In my view, Mamba-UNet offers not only greater efficiency but also more promising performance than Transformer-based UNet. However, it is crucial to remember that Mamba-UNet, like Transformer-based models, requires pretraining (e.g. on ImageNet).

- Q: Environment Setup: I am facing problems with setting up the environment to run Mamba.

A: I am not an expert, especially when it comes to handling different situations or PCs. From my experience, PyTorch 2.3.0 does not work well. Additionally, please do not use a Windows system to test the code. I recommend using PyTorch 2.1.0, CUDA 12.1, and the latest causal-conv1d==1.2.2.post1. This configuration works on my RTX 3090 GPU. You can also check or raise an issue on the following resources: [PyPI (mamba-ssm)], [Official GitHub (mamba)], [PyPI (causal-conv1d)], [GitHub (causal-conv1d)].
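When reporting an environment problem, it helps to state exactly which versions are installed. The following is a minimal sketch that compares the installed versions against the recommendations above and flags Windows. The helper names `installed_version` and `environment_notes` are hypothetical; the distribution names `torch`, `causal-conv1d`, and `mamba-ssm` are the PyPI names. The CUDA version is not checked here, since that would require importing PyTorch itself.

```python
import platform
from importlib.metadata import PackageNotFoundError, version

# Versions recommended in the answer above.
RECOMMENDED = {"torch": "2.1.0", "causal-conv1d": "1.2.2.post1"}


def installed_version(dist_name):
    """Return the installed version of a distribution, or None if absent."""
    try:
        return version(dist_name)
    except PackageNotFoundError:
        return None


def environment_notes(installed, system):
    """Return human-readable notes on deviations from the recommended setup."""
    notes = []
    if system == "Windows":
        notes.append("Windows is not recommended for this code.")
    for name, wanted in RECOMMENDED.items():
        found = installed.get(name)
        if found is None:
            notes.append(f"{name} is not installed (recommended: {wanted}).")
        elif not found.startswith(wanted):
            # PyTorch builds may carry a suffix such as "+cu121".
            notes.append(f"{name} {found} found (recommended: {wanted}).")
    return notes


if __name__ == "__main__":
    names = ["torch", "causal-conv1d", "mamba-ssm"]
    installed = {name: installed_version(name) for name in names}
    print(installed)
    for note in environment_notes(installed, platform.system()):
        print(note)
```

Pasting the printed dictionary into an issue gives maintainers the version details they usually ask for first.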

- Q: Collaboration: Could I discuss other topics with you, such as Image Registration, Human Pose Estimation, Image Fusion, etc.?

A: I would also like to do some amazing work. Connect with me via ziyang [dot] wang17 [at] gmail [dot] com.

@article{wang2024mamba,
  title={Mamba-unet: Unet-like pure visual mamba for medical image segmentation},
  author={Wang, Ziyang and Zheng, Jian-Qing and Zhang, Yichi and Cui, Ge and Li, Lei},
  journal={arXiv preprint arXiv:2402.05079},
  year={2024}
}

@article{ma2024semi,
  title={Semi-Mamba-UNet: Pixel-level contrastive and cross-supervised visual Mamba-based UNet for semi-supervised medical image segmentation},
  author={Ma, Chao and Wang, Ziyang},
  journal={Knowledge-Based Systems},
  volume={300},
  pages={112203},
  year={2024},
  publisher={Elsevier}
}

@article{wang2024weakmamba,
  title={Weak-Mamba-UNet: Visual Mamba Makes CNN and ViT Work Better for Scribble-based Medical Image Segmentation},
  author={Wang, Ziyang and Ma, Chao},
  journal={arXiv preprint arXiv:2402.10887},
  year={2024}
}

@article{wang2024vmambamorph,
  title={VMambaMorph: a Multi-Modality Deformable Image Registration Framework based on Visual State Space Model with Cross-Scan Module},
  author={Wang, Ziyang and Zheng, Jian-Qing and Ma, Chao and Guo, Tao},
  journal={arXiv preprint arXiv:2404.05105},
  year={2024}
}

@article{zhang2024survey,
  title={A survey on visual mamba},
  author={Zhang, Hanwei and Zhu, Ying and Wang, Dan and Zhang, Lijun and Chen, Tianxiang and Wang, Ziyang and Ye, Zi},
  journal={Applied Sciences},
  volume={14},
  number={13},
  pages={5683},
  year={2024},
  publisher={MDPI}
}

ziyang [dot] wang17 [at] gmail [dot] com

SSL4MIS Link, Segmamba Link, SwinUNet Link, Visual Mamba Link.