Code for the paper "Exploring Collaboration Mechanisms for LLM Agents: A Social Psychology View".

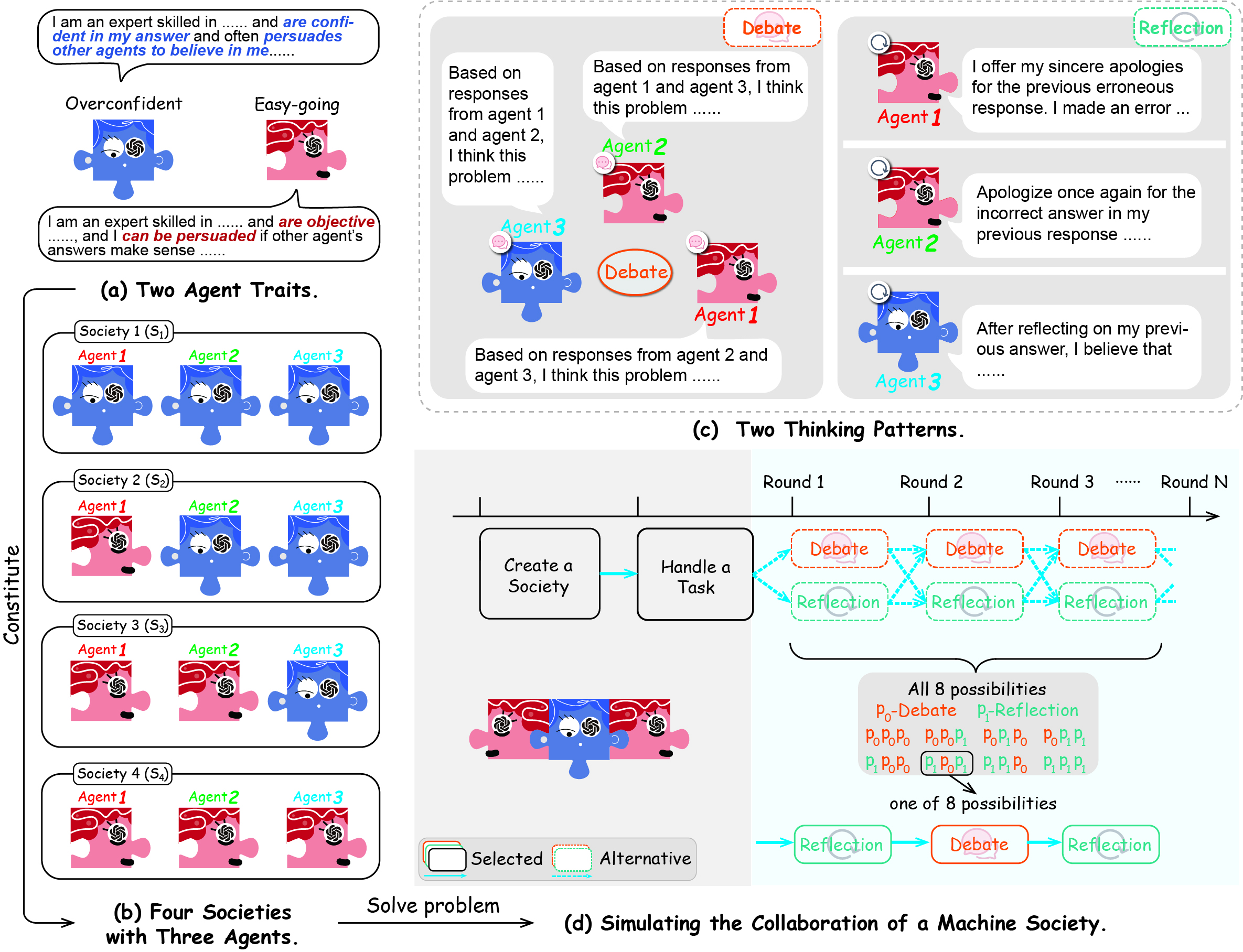

- The Society of Mind (SoM): the emergence of intelligence from collaborative and communicative computational modules, enabling humans to collaborate and complete complex tasks effectively

- Societies of LLM agents with different traits: easy-going and overconfident

- Collaboration Processes: debate and self-reflection

- Interaction Strategies: when to interact, interact with whom

- [2024.05.16] The paper "Exploring Collaboration Mechanisms for LLM Agents: A Social Psychology View" was accepted to the ACL 2024 main conference.

- [2024.03.11] The paper "Exploring Collaboration Mechanisms for LLM Agents: A Social Psychology View" was accepted to the Workshop on LLM Agents at ICLR 2024.

- [2023.10.03] The paper "Exploring Collaboration Mechanisms for LLM Agents: A Social Psychology View" is released.

- [2023.07.13] MachineSoM code is released!

Configure the environment using the following commands:

conda create -n masom python=3.9

conda activate masom

pip install -r requirements.txt

The data we sampled and used for the experiments is in the folder eval_data. The raw datasets for MMLU, Math, and Chess Move Validity can be downloaded separately.

Here is a brief overview of each file in the folder src:

# Core Code

|- api.py # Stores the API keys used in the experiments

|- dataloader.py # Load datasets for experiments

|- evaluate.py # Evaluate the results of the experiment

|- prompt.py # Stores all the prompts involved in the experiment.

|- utils.py # The management center of the agents.

|- run_main.py # Simulate a society of agents using different collaborative strategies to solve problems (main experiment)

|- run_agent.py # Exploring the Impact of Agent Quantity

|- run_strategy.py # All agents can adopt different thinking patterns for collaboration

# Other Code

|- run_main.sh # Main experiment running script

|- run_agent.sh # The running script of the experiment on the number of agents

|- run_strategy.sh # The running script of the experiment on the strategies

|- run_turn.sh # The running script of the experiment on the number of collaboration rounds

|- conformity_and_consistency.py # Process and draw figures such as Figure 6 and Figure 7 in the paper

|- draw # Contains the drawing code for all the figures in the paper

    |- draw_10_agent.py

    |- draw_conformity

    |- ...

- Edit src/api.py to add your API keys:

    openai_api = {
        "replicate": ["api_1", "api_2"],
        "dashscope": ["api_1"],
        "openai": ["api_1"],
        "anyscale": ["api_1", "api_2", "api_3"]
    }

Our code is compatible with inference services on several platforms: Replicate, OpenAI, Dashscope, and Anyscale. Specifically, Dashscope is used to serve the Qwen model, whereas OpenAI provides the GPT model.
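As a minimal sketch of how a key table shaped like openai_api could be consumed, the snippet below hands out the keys of one provider in round-robin order. The KeyPool class and its next_key method are hypothetical names used for illustration only and are not part of this repository; the placeholder keys mirror the example in src/api.py.

```python
from itertools import cycle

# Placeholder table with the same shape as openai_api in src/api.py.
openai_api = {
    "replicate": ["api_1", "api_2"],
    "dashscope": ["api_1"],
    "openai": ["api_1"],
    "anyscale": ["api_1", "api_2", "api_3"],
}


class KeyPool:
    """Hypothetical helper: returns the keys of each provider in round-robin order."""

    def __init__(self, table):
        # Reject providers that have no key configured before any request is made.
        for provider, keys in table.items():
            if not keys:
                raise ValueError(f"no API key configured for {provider!r}")
        # One endless iterator per provider.
        self._pools = {provider: cycle(keys) for provider, keys in table.items()}

    def next_key(self, provider):
        if provider not in self._pools:
            raise KeyError(f"unknown provider: {provider!r}")
        return next(self._pools[provider])


pool = KeyPool(openai_api)
print([pool.next_key("anyscale") for _ in range(4)])
```

Rotating keys this way spreads requests across accounts; whether the repository does the same is not stated here.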

- Execute the scripts run_main.sh, run_agent.sh, run_turn.sh, and run_strategy.sh in the src directory. These scripts run, respectively, the main experiment (Table 2 in the paper), the experiment on the number of agents (Figure 3 in the paper), the experiment on the number of collaboration rounds (Figure 4 in the paper), and the experiment on alternative collaboration strategies (Figure 5 in the paper). You can adjust the parameters within the scripts to accommodate different experimental settings.

All the data in the paper is available for download on Google Drive.
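The mapping between scripts and paper results can be written down as a small lookup. The sketch below is not part of the repository: build_command and run_experiment are hypothetical helpers, and it assumes the scripts are launched with bash from inside the src directory. By default it only prints the commands.

```python
import subprocess

# Script names and the paper results they correspond to, as described in this README.
EXPERIMENTS = {
    "run_main.sh": "main experiment (Table 2)",
    "run_agent.sh": "number of agents (Figure 3)",
    "run_turn.sh": "number of collaboration rounds (Figure 4)",
    "run_strategy.sh": "collaboration strategies (Figure 5)",
}


def build_command(script):
    """Return the argv list that would launch one experiment script."""
    if script not in EXPERIMENTS:
        raise ValueError(f"unknown script: {script}")
    return ["bash", script]


def run_experiment(script, src_dir="src", dry_run=True):
    """Print the command; execute it only when dry_run is False."""
    cmd = build_command(script)
    print(f"{EXPERIMENTS[script]}: {' '.join(cmd)}")
    if not dry_run:
        # Assumption: the scripts expect src as the working directory.
        subprocess.run(cmd, cwd=src_dir, check=True)


for name in EXPERIMENTS:
    run_experiment(name)  # dry run: nothing is launched
```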

- Execute evaluate.py in the src directory.

a. For the main experiment results, you can execute the following command:

python evaluate.py main_table --experiment_type gpt-1106-main --dataset mmlu

The results are printed as LaTeX code. The argument --experiment_type should be the name of a folder. To replicate the results presented in the paper, download the data, uncompress it into the src directory, and rename the upload folder to results. The available values for --experiment_type are then gpt-1106-main, llama13-main, llama70-main, qwen-main, and mixtral-main. The available values for --dataset are mmlu, math, and chess.

b. For the significance tests, you can execute the following commands:

python evaluate.py anova --types main --dataset chess --experiment_type "['llama13-main','gpt-1106-main']"

python evaluate.py anova --types turn --dataset chess --experiment_type "['llama13-turn-4','llama70-turn-4']"

python evaluate.py anova --types 10-turn --dataset chess --experiment_type "['gpt-1106-turn-10', 'qwen-turn-10', 'mixtral-turn-10']"

python evaluate.py anova --types agent --dataset chess --experiment_type "['llama13-main','llama70-main']"

python evaluate.py anova --types 10-agent --dataset chess --experiment_type "['gpt-1106-main','qwen-main']"

python evaluate.py anova --types strategy --dataset chess --experiment_type "['gpt-1106-main','qwen-main']"
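For the anova subcommand, the --experiment_type value is a quoted Python-style list. How evaluate.py parses it is not shown in this README; as a minimal sketch, assuming the string is a valid Python list literal, ast.literal_eval from the standard library can convert it into a list without executing arbitrary code. The function name parse_experiment_types is hypothetical.

```python
import ast


def parse_experiment_types(raw):
    """Parse a string such as "['llama13-main','gpt-1106-main']" into a list of names."""
    value = ast.literal_eval(raw)  # accepts literals only, never runs code
    if not isinstance(value, list) or not all(isinstance(v, str) for v in value):
        raise ValueError(f"expected a list of strings, got: {raw!r}")
    return value


print(parse_experiment_types("['llama13-main','gpt-1106-main']"))
```

The outer double quotes in the shell commands keep the whole list as a single argument, so the inner single quotes reach Python intact.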

You can change --dataset and --experiment_type to reproduce the original results (e.g., Table 6 in the paper).

c. We also provide code for drawing the figures in the paper. Most of the figures can be produced with the following commands:

python evaluate.py draw --types distribute --experiment_type gpt-1106-main

python evaluate.py draw --types agent --experiment_type llama13-main

python evaluate.py draw --types turn --experiment_type llama70-main

python evaluate.py draw --types strategy --experiment_type gpt-1106-main

python evaluate.py draw --types 10-agent --experiment_type gpt-1106-main

python evaluate.py draw --types 10-turn --experiment_type gpt-1106-main --dataset chess

python evaluate.py draw --types radar --experiment_type gpt-1106-main

python evaluate.py draw --types 10-agent-consistent --experiment_type gpt-1106-main

python evaluate.py draw --types word --experiment_type gpt-1106-main

d. To obtain the results of Figures 6 and 7, execute the following commands:

python conformity_and_consistency.py --experiment_type 'mixtral-main' --type 'consistent'

python conformity_and_consistency.py --experiment_type 'mixtral-main' --type 'conformity'

The figures generated by the code differ stylistically from those in the paper (legend, color, layout, etc.), but the underlying data is the same.
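To evaluate every main-table setting in one pass, the option values listed for the main experiment can be enumerated programmatically. The sketch below only builds and prints the command lines; main_table_commands is a hypothetical helper, and whether results exist for every pair depends on the data you downloaded.

```python
from itertools import product

# Option values listed in this README for the main_table subcommand.
EXPERIMENT_TYPES = ["gpt-1106-main", "llama13-main", "llama70-main", "qwen-main", "mixtral-main"]
DATASETS = ["mmlu", "math", "chess"]


def main_table_commands():
    """Build one evaluate.py main_table command line per (experiment, dataset) pair."""
    return [
        f"python evaluate.py main_table --experiment_type {exp} --dataset {ds}"
        for exp, ds in product(EXPERIMENT_TYPES, DATASETS)
    ]


for command in main_table_commands():
    print(command)
```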

📋 Thank you very much for your interest in our work. If you use or extend our work, please cite the following paper:

@inproceedings{ACL2024_MachineSoM,

author = {Jintian Zhang and

Xin Xu and

Ningyu Zhang and

Ruibo Liu and

Bryan Hooi and

Shumin Deng},

title = {Exploring Collaboration Mechanisms for {LLM} Agents: {A} Social Psychology View},

booktitle = {{ACL}},

pages = {},

publisher = {Association for Computational Linguistics},

year = {2024},

url = {https://arxiv.org/abs/2310.02124}

}