Fast Bayesian optimization, quadrature, and inference over arbitrary domains, with GPU parallel acceleration, based on GPyTorch and BoTorch. The paper is available on arXiv (arXiv:2301.11832).

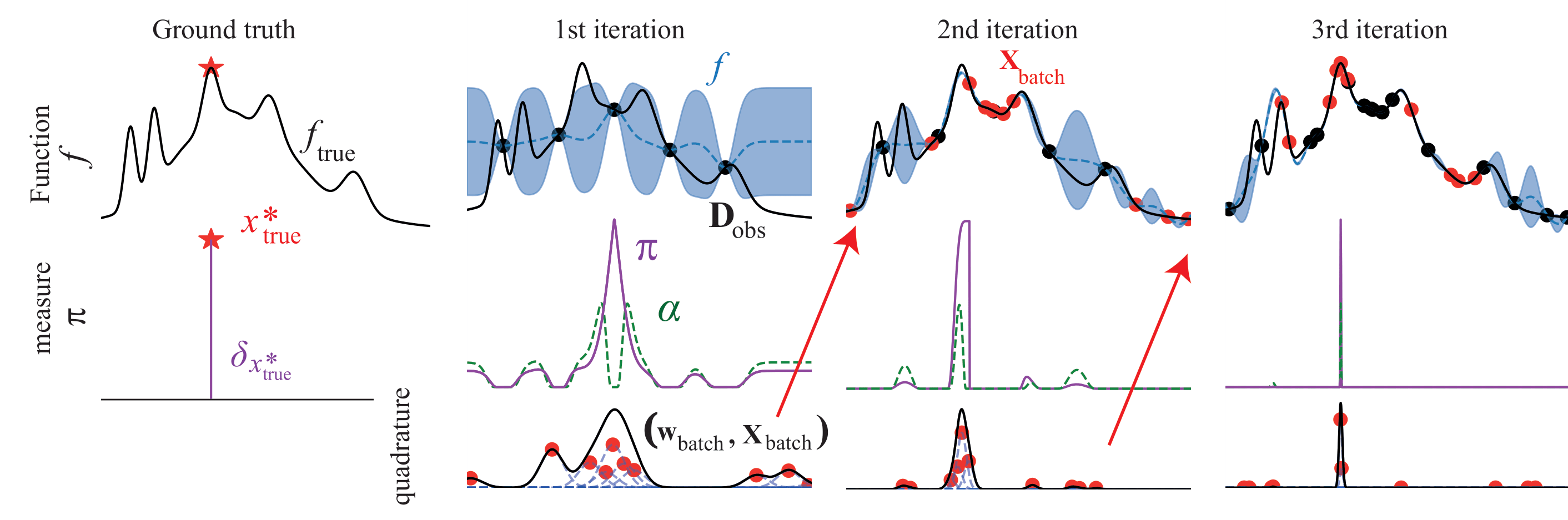

- Red star: ground truth

- Black crosses: next batch queries recommended by SOBER

- White dots: historical observations

- Branin function: blackbox function to maximise

- $\pi$: the probability of global optimum locations estimated by SOBER
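For readers unfamiliar with the benchmark in the figure, here is a minimal sketch of the Branin function as a maximisation target. It assumes the standard Branin-Hoo constants on the usual domain (x1 in [-5, 10], x2 in [0, 15]) and simply negates the function so that its minima become maxima. The exact variant plotted above is not specified here, and the names `branin` and `negated_branin` are illustrative only, not part of SOBER.

```python
import math


def branin(x1: float, x2: float) -> float:
    """Standard Branin-Hoo function (assumed form; usually minimised)."""
    a = 1.0
    b = 5.1 / (4.0 * math.pi ** 2)
    c = 5.0 / math.pi
    r = 6.0
    s = 10.0
    t = 1.0 / (8.0 * math.pi)
    return a * (x2 - b * x1 ** 2 + c * x1 - r) ** 2 + s * (1.0 - t) * math.cos(x1) + s


def negated_branin(x1: float, x2: float) -> float:
    """Negated Branin, so that the optimizer's task is maximisation."""
    return -branin(x1, x2)


# One of the three known optima of the standard Branin function.
best_value = negated_branin(math.pi, 2.275)
```

In this standard form the function has three equally good optima, which is one reason it is a common test case for batch methods.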

- Fast batch Bayesian optimization

- Fast batch Bayesian quadrature

- Fast Bayesian inference

- Fast fully Bayesian Gaussian process modelling and related acquisition functions

- Sample-efficient simulation-based inference

- GPU acceleration

- Arbitrary domain space (continuous, discrete, mixed, or a domain defined by a dataset)

- Arbitrary kernel for surrogate modelling

- Arbitrary acquisition function

- Arbitrary prior distribution for Bayesian inference

We have prepared detailed explanations of how to customize SOBER for your tasks.

See the tutorials:

- 01 How does SOBER work?

- 02 Customise the prior for various domain types

- 03 Customise acquisition function

- 04 Fast fully Bayesian Gaussian process modelling

- 05 Fast Bayesian inference for simulation-based inference

- 06 Tips for drug discovery

See the examples to reproduce the results in the paper.

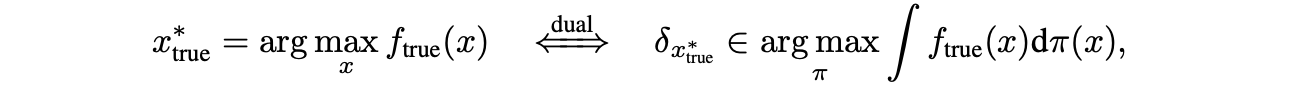

We solve batch global optimization as a Bayesian quadrature problem: we select the batch query locations to minimize the integration error of the true function.
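To make the idea of picking a batch that minimizes integration error concrete, here is a small, self-contained sketch. It is not SOBER's algorithm: it uses greedy kernel herding as a simple stand-in, with an RBF kernel and a uniform measure over a 1D candidate grid, all of which are assumptions made for illustration. The worst-case integration error of an equally weighted batch over functions in the kernel's RKHS unit ball equals the maximum mean discrepancy (MMD), so the sketch compares the squared MMD of a herded batch with that of a naively chosen one. The names `rbf`, `mmd_squared`, and `herd_batch` are hypothetical helpers.

```python
import math


def rbf(x: float, y: float, lengthscale: float = 0.2) -> float:
    """RBF kernel on scalars (lengthscale chosen arbitrarily for the demo)."""
    return math.exp(-((x - y) ** 2) / (2.0 * lengthscale ** 2))


def mmd_squared(batch, candidates) -> float:
    """Squared worst-case integration error of an equally weighted batch
    against the uniform empirical measure on the candidates."""
    bb = sum(rbf(p, q) for p in batch for q in batch) / len(batch) ** 2
    bc = sum(rbf(p, c) for p in batch for c in candidates) / (len(batch) * len(candidates))
    cc = sum(rbf(c, d) for c in candidates for d in candidates) / len(candidates) ** 2
    return bb - 2.0 * bc + cc


def herd_batch(candidates, batch_size: int):
    """Greedy kernel herding: each pick favours points close to the bulk of the
    measure and penalises points close to those already chosen."""
    n = len(candidates)
    # Kernel mean embedding of the candidate measure, evaluated at each candidate.
    mean_embedding = [sum(rbf(x, c) for c in candidates) / n for x in candidates]
    batch = []
    for _ in range(batch_size):
        def score(i: int) -> float:
            penalty = sum(rbf(candidates[i], b) for b in batch) / (len(batch) + 1)
            return mean_embedding[i] - penalty

        best = max(range(n), key=score)
        batch.append(candidates[best])
    return batch


candidates = [i / 49 for i in range(50)]  # uniform grid on [0, 1]
herded = herd_batch(candidates, batch_size=5)
naive = candidates[:5]  # five clustered points, for comparison

herded_error = mmd_squared(herded, candidates)
naive_error = mmd_squared(naive, candidates)
```

In this toy setting the herded batch spreads across the domain and yields a lower integration error than the clustered batch. In a setting like the one described here, the uniform candidate measure would presumably be replaced by a distribution such as $\pi$; that mapping is an assumption, so see the paper for the actual method.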

- PyTorch

- GPyTorch

- BoTorch

Please cite this work as:

@article{adachi2023sober,

title={SOBER: Highly Parallel Bayesian Optimization and Bayesian Quadrature over Discrete and Mixed Spaces},

author={Adachi, Masaki and Hayakawa, Satoshi and Hamid, Saad and Jørgensen, Martin and Oberhauser, Harald and Osborne, Michael A.},

journal={arXiv preprint arXiv:2301.11832},

year={2023}

}