Positional Prompt Tuning for Efficient 3D Representation Learning. ArXiv

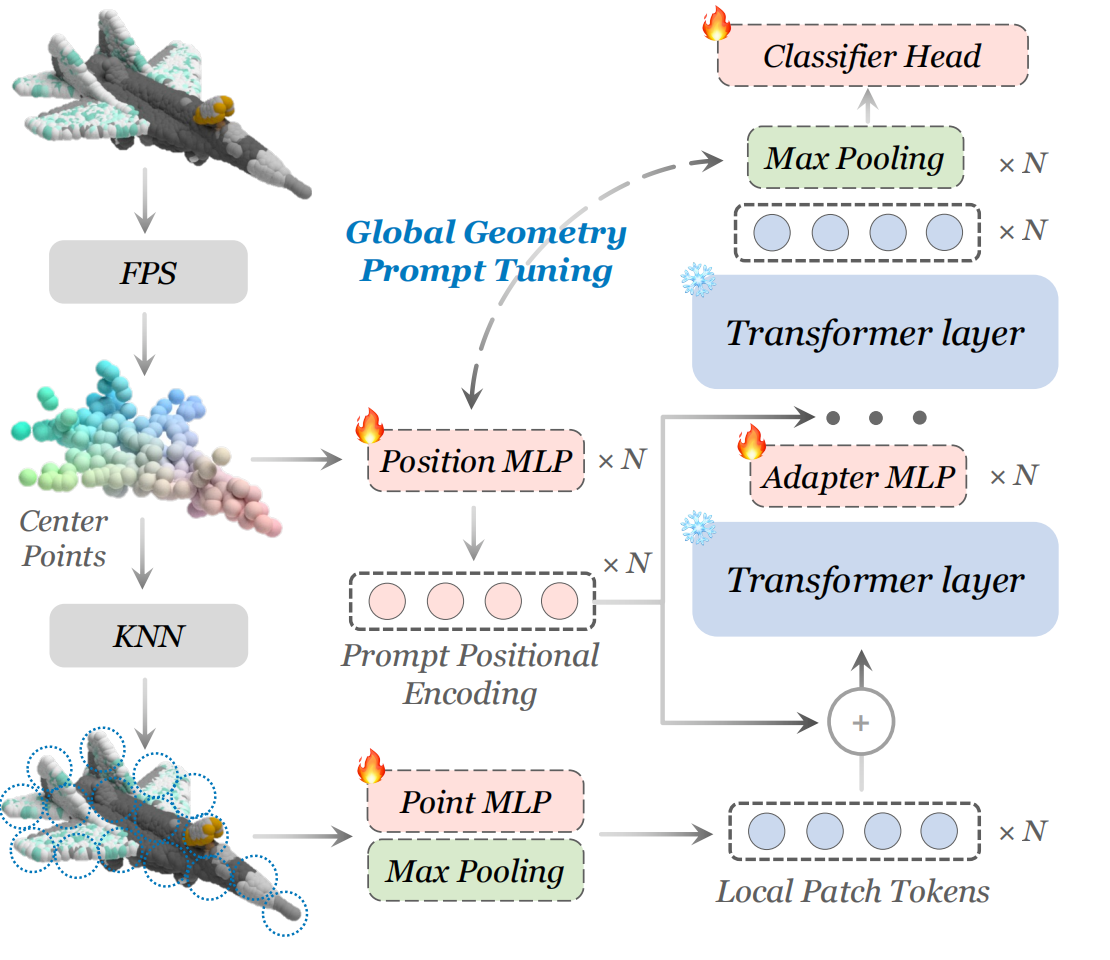

In this work, we rethink the effect of position embedding in Transformer-based point cloud representation learning methods. Building on this, we present a novel Parameter-Efficient Fine-Tuning (PEFT) method together with a new form of prompt and adapter structure. With less than 5% of the trainable parameters, our method, PPT, outperforms its PEFT counterparts in classification on the ModelNet40 and ScanObjectNN datasets. PPT also achieves better or on-par results in few-shot learning on ModelNet40 and in part segmentation on ShapeNetPart.

PyTorch >= 1.7.0; python >= 3.7; CUDA >= 9.0; GCC >= 4.9; torchvision;

conda create -n ppt python=3.10 -y

conda activate ppt

conda install pytorch==2.0.1 torchvision==0.15.2 cudatoolkit=11.8 -c pytorch -c nvidia

# pip install torch==2.0.1+cu118 torchvision==0.15.2+cu118 -f https://download.pytorch.org/whl/torch_stable.html

pip install -r requirements.txt

# PointNet++

pip install "git+https://github.com/erikwijmans/Pointnet2_PyTorch.git#egg=pointnet2_ops&subdirectory=pointnet2_ops_lib"
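
After installation, it can help to confirm that the environment meets the minimum versions listed above. The following is a minimal sketch, not part of this repository: `version_ge` and `check_min` are hypothetical helper names, and the comparison assumes GNU `sort -V` is available.

```shell
# Hypothetical helpers (not shipped with this repo) for checking minimum versions.

# Succeeds when version $1 is greater than or equal to version $2.
# Relies on GNU sort's -V (version sort) option.
version_ge() {
    [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n 1)" = "$2" ]
}

# Prints an OK/FAIL line. $1 = label, $2 = found version, $3 = required minimum.
check_min() {
    if version_ge "$2" "$3"; then
        echo "OK   $1 $2 (>= $3)"
    else
        echo "FAIL $1 $2 (< $3)"
        return 1
    fi
}

# Example usage (uncomment inside the activated conda environment):
# check_min PyTorch "$(python -c 'import torch; print(torch.__version__.split("+")[0])')" 1.7.0
# check_min Python  "$(python -c 'import platform; print(platform.python_version())')" 3.7
# check_min GCC     "$(gcc -dumpversion)" 4.9
```

The PyTorch line strips the local build suffix (for example `+cu118`) before comparing, since only the release number matters for the minimum.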

We use ScanObjectNN, ModelNet40 and ShapeNetPart in this work. See DATASET.md for details.

| Task | Dataset | Config | Acc. | Download |
|---|---|---|---|---|
| Pre-training | ShapeNet | N.A. | N.A. | here |
| Classification | ScanObjectNN | finetune_scan_hardest.yaml | 89.00% | here |
| Classification | ScanObjectNN | finetune_scan_objbg.yaml | 93.63% | here |
| Classification | ScanObjectNN | finetune_scan_objonly.yaml | 92.60% | here |
| Classification | ModelNet40 (1k) | finetune_modelnet.yaml | 93.68% | here |
| Classification | ModelNet40 (8k) | finetune_modelnet_8k.yaml | 93.88% | here |
| Part segmentation | ShapeNetPart | segmentation | 85.7% mIoU | here |

| Task | Dataset | Config | Acc. | Download |
|---|---|---|---|---|
| Pre-training | ShapeNet | N.A. | N.A. | here |
| Classification | ScanObjectNN | finetune_scan_hardest.yaml | 89.52% | here |
| Classification | ScanObjectNN | finetune_scan_objbg.yaml | 95.01% | here |
| Classification | ScanObjectNN | finetune_scan_objonly.yaml | 93.28% | here |
| Classification | ModelNet40 (1k) | finetune_modelnet.yaml | 93.76% | here |
| Classification | ModelNet40 (8k) | finetune_modelnet_8k.yaml | 93.84% | here |
| Part segmentation | ShapeNetPart | segmentation | 85.6% mIoU | here |

| Task | Dataset | Config | 5w10s Acc. (%) | 5w20s Acc. (%) | 10w10s Acc. (%) | 10w20s Acc. (%) |
|---|---|---|---|---|---|---|
| Few-shot learning | ModelNet40 | fewshot.yaml | 97.0 ± 2.7 | 98.7 ± 1.6 | 92.2 ± 5.0 | 95.6 ± 2.9 |

To fine-tune Point-MAE on ScanObjectNN, run:

CUDA_VISIBLE_DEVICES=<GPUs> python main.py --config cfgs/pointmae_configs/finetune_scan_hardest.yaml \
--finetune_model --exp_name <output_file_name> --ckpts <path/to/pre-trained/model>
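
The results table lists three ScanObjectNN configs (`finetune_scan_hardest.yaml`, `finetune_scan_objbg.yaml`, `finetune_scan_objonly.yaml`). The sketch below queues one fine-tuning run per config. It assumes all three files sit in the same config directory as the one shown above, which the text does not confirm. The names `run` and `finetune_scan_all`, and the `scan_<split>` experiment names, are hypothetical. With `DRY_RUN=1` (the default) the commands are only printed.

```shell
# Hypothetical wrapper: echo the command when DRY_RUN=1 (default), otherwise execute it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Fine-tune on every ScanObjectNN split.
# $1 = config directory (assumed to hold all three finetune_scan_*.yaml files)
# $2 = path to the pre-trained checkpoint
# $3 = value for CUDA_VISIBLE_DEVICES (defaults to 0)
finetune_scan_all() {
    cfg_dir=$1
    ckpt=$2
    gpus=${3:-0}
    for split in hardest objbg objonly; do
        run env CUDA_VISIBLE_DEVICES="$gpus" python main.py \
            --config "${cfg_dir}/finetune_scan_${split}.yaml" \
            --finetune_model --exp_name "scan_${split}" --ckpts "$ckpt"
    done
}

# Example usage (prints three commands; set DRY_RUN=0 to actually launch them):
# finetune_scan_all cfgs/pointmae_configs <path/to/pre-trained/model>
```

Passing a different config directory as the first argument reuses the same loop for another backbone, provided that directory follows the same file naming.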

To fine-tune Point-MAE on ModelNet40, run:

CUDA_VISIBLE_DEVICES=<GPUs> python main.py --config cfgs/pointmae_configs/finetune_modelnet.yaml \
--finetune_model --exp_name <output_file_name> --ckpts <path/to/pre-trained/model>

To test Point-MAE on ModelNet40 with voting, run:

CUDA_VISIBLE_DEVICES=<GPUs> python main.py --test --config cfgs/pointmae_configs/finetune_modelnet.yaml \
--exp_name <output_file_name> --ckpts <path/to/best/fine-tuned/model>

For few-shot learning with Point-MAE, run:

CUDA_VISIBLE_DEVICES=<GPUs> python main.py --config cfgs/pointmae_configs/fewshot.yaml --finetune_model \
--ckpts <path/to/pre-trained/model> --exp_name <output_file_name> --way <5 or 10> --shot <10 or 20> --fold <0-9>
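
The few-shot command takes one `--way`, `--shot`, and `--fold` value per invocation, so covering every setting means 2 × 2 × 10 = 40 runs. The loop below is a minimal sketch of such a sweep. The helper names `run` and `fewshot_sweep` and the `fewshot_<way>w<shot>s_fold<fold>` experiment names are hypothetical. With `DRY_RUN=1` (the default) the commands are only printed.

```shell
# Hypothetical wrapper: echo the command when DRY_RUN=1 (default), otherwise execute it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Launch every way/shot/fold combination accepted by the few-shot command.
# $1 = few-shot config file, $2 = pre-trained checkpoint, $3 = CUDA_VISIBLE_DEVICES (default 0)
fewshot_sweep() {
    cfg=$1
    ckpt=$2
    gpus=${3:-0}
    for way in 5 10; do
        for shot in 10 20; do
            for fold in 0 1 2 3 4 5 6 7 8 9; do
                run env CUDA_VISIBLE_DEVICES="$gpus" python main.py \
                    --config "$cfg" --finetune_model --ckpts "$ckpt" \
                    --exp_name "fewshot_${way}w${shot}s_fold${fold}" \
                    --way "$way" --shot "$shot" --fold "$fold"
            done
        done
    done
}

# Example usage (prints 40 commands; set DRY_RUN=0 to actually launch them):
# fewshot_sweep cfgs/pointmae_configs/fewshot.yaml <path/to/pre-trained/model>
```

Giving each run its own experiment name keeps the outputs of different folds from overwriting one another.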

To fine-tune ReCon on ScanObjectNN, run:

CUDA_VISIBLE_DEVICES=<GPUs> python main.py --config cfgs/recon_configs/finetune_scan_hardest.yaml \
--finetune_model --exp_name <output_file_name> --ckpts <path/to/pre-trained/model>

To fine-tune ReCon on ModelNet40, run:

CUDA_VISIBLE_DEVICES=<GPUs> python main.py --config cfgs/recon_configs/finetune_modelnet.yaml \
--finetune_model --exp_name <output_file_name> --ckpts <path/to/pre-trained/model>

To test ReCon on ModelNet40 with voting, run:

CUDA_VISIBLE_DEVICES=<GPUs> python main.py --test --config cfgs/recon_configs/finetune_modelnet.yaml \
--exp_name <output_file_name> --ckpts <path/to/best/fine-tuned/model>

For few-shot learning with ReCon, run:

CUDA_VISIBLE_DEVICES=<GPUs> python main.py --config cfgs/recon_configs/fewshot.yaml --finetune_model \
--ckpts <path/to/pre-trained/model> --exp_name <output_file_name> --way <5 or 10> --shot <10 or 20> --fold <0-9>
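
The few-shot results are reported as a mean ± deviation per setting, and the command runs one fold at a time, so the per-fold accuracies have to be combined afterwards. The sketch below is one possible way to do that. It assumes you have collected the accuracy of each fold into a plain text file, one number per line. It uses the population standard deviation, which may differ from the convention behind the reported numbers. The name `summarize_folds` is hypothetical.

```shell
# Hypothetical helper: read one accuracy per line on stdin and print "mean ± std".
# Uses the population standard deviation (divide by n); this is an assumption.
summarize_folds() {
    awk '{ sum += $1; sumsq += $1 * $1; n++ }
         END {
             if (n == 0) { print "no data"; exit 1 }
             mean = sum / n
             var = sumsq / n - mean * mean
             if (var < 0) var = 0   # guard against floating-point rounding
             printf "%.1f ± %.1f\n", mean, sqrt(var)
         }'
}

# Example usage, with a hand-made file holding one accuracy per fold:
# summarize_folds < folds_5w10s.txt
```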

For part segmentation on ShapeNetPart, run:

cd segmentation

python main.py --ckpts <path/to/pre-trained/model> --root <path/to/data> --learning_rate 0.0002 --epoch 300

Our code is built upon Point-MAE, ReCon, ICCV23-IDPT, and DAPT.