🚀 [ECCV2024 Oral] Clearer Frames, Anytime: Resolving Velocity Ambiguity in Video Frame Interpolation

by Zhihang Zhong 1,*, Gurunandan Krishnan 2, Xiao Sun 1, Yu Qiao 1, Sizhuo Ma 2,†, and Jian Wang 2,†

*First author, †Co-corresponding authors

1Shanghai AI Laboratory, OpenGVLab, 2Snap Inc.

We strongly recommend referring to the project page and interactive demo for a better understanding:

👉 project page

👉 interactive demo

👉 OpenXLab demo

👉 arXiv

👉 slides

Please leave a 🌟 if you like this project! 🔥🔥🔥

- 🎉 2024-08-12: This work was selected as an Oral presentation at ECCV2024! 🏁

- 🎉 2024-07-01: This work was accepted to ECCV2024! 🎆

- 🎉 2024-05-02: Our technology is used by CCTV5 and CCTV5+ for slow motion demonstrations of athletes jumping in the 2024 Thomas & Uber Cup! 🔥

- 🎉 2023-11-28: We have added an interface for video inference to the interactive demo, and uploaded checkpoints trained with the LPIPS loss.

cctv5_interpany-clearer.mp4

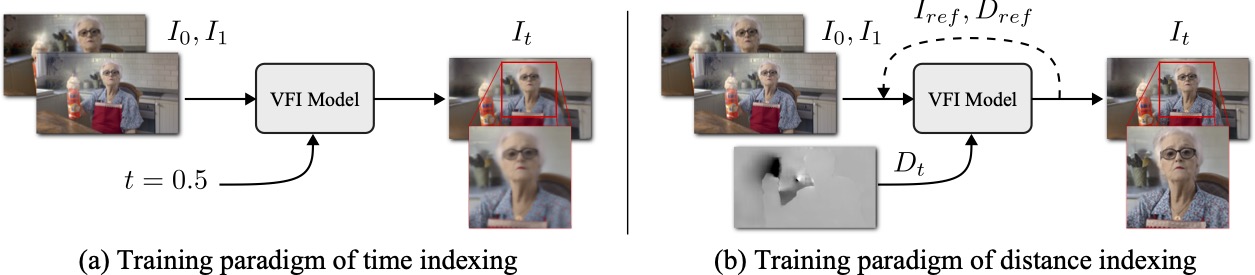

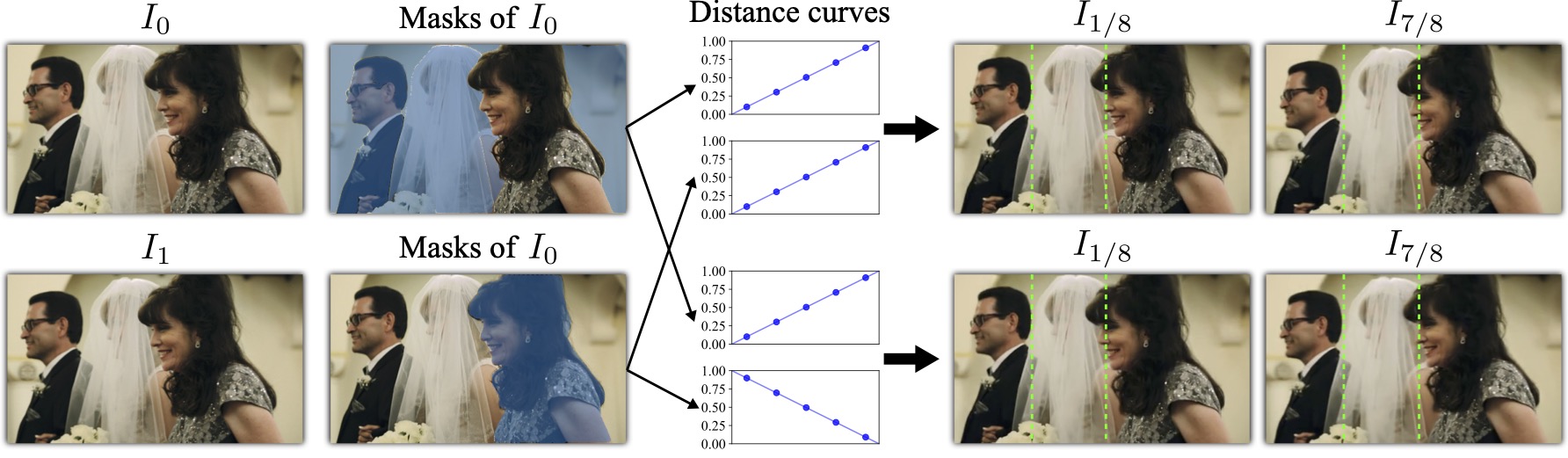

We address velocity ambiguity in video frame interpolation through distance indexing and iterative reference-based estimation strategies, resulting in:

Clearer anytime frame interpolation & manipulated interpolation of anything

Comparison of x128 interpolation using only 2 frames as inputs:

| [T] RIFE | [D,R] RIFE (Ours) | [D,R] RIFE-vgg (Ours) |
| --- | --- | --- |

[D]: distance indexing

[R]: iterative reference-based estimation

The results of [D,R] RIFE-vgg are perceptually clearer, but may suffer from undesirable distortions (see second row). We recommend using [D,R] RIFE for more stable results.
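To make the [D] label more concrete, here is a rough sketch of how a per-pixel distance index could be computed from optical flow. It assumes the paper's definition, in which the index at each pixel is the displacement from frame 0 to time t projected onto the full displacement from frame 0 to frame 1, divided by the length of that full displacement. This is an illustration only, not the repository's implementation: the function name `distance_index_map`, the nested-list flow layout, and the `static_value` fallback for motionless pixels are all assumptions made for this example.

```python
from typing import List, Tuple

# Hypothetical flow layout: an H x W grid of (dx, dy) displacement vectors.
Flow = List[List[Tuple[float, float]]]


def distance_index_map(
    flow_0_to_t: Flow,
    flow_0_to_1: Flow,
    static_value: float = 0.5,
    eps: float = 1e-6,
) -> List[List[float]]:
    """Fraction of the 0->1 path each pixel has covered at time t.

    Computes (V_0t . V_01) / ||V_01||^2 per pixel, which equals
    ||V_0t|| * cos(theta) / ||V_01||. Pixels with (almost) no motion
    have an undefined ratio, so they receive `static_value`
    (an arbitrary choice for this sketch).
    """
    result = []
    for row_t, row_1 in zip(flow_0_to_t, flow_0_to_1):
        out_row = []
        for (tx, ty), (fx, fy) in zip(row_t, row_1):
            full_sq = fx * fx + fy * fy
            if full_sq < eps:
                out_row.append(static_value)
            else:
                out_row.append((tx * fx + ty * fy) / full_sq)
        result.append(out_row)
    return result


if __name__ == "__main__":
    # One moving pixel (4 px to the right overall, 1 px so far) and one static pixel.
    full = [[(4.0, 0.0), (0.0, 0.0)]]
    partial = [[(1.0, 0.0), (0.0, 0.0)]]
    print(distance_index_map(partial, full))
```

In this toy input the moving pixel has covered a quarter of its path, whatever the time index of the frame is. That gap between elapsed time and distance travelled is the velocity ambiguity the distance index is meant to remove.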

You can use Anaconda or Docker to set up the environment.

conda create -n InterpAny python=3.8

conda activate InterpAny

pip install torch==1.12.1+cu116 torchvision==0.13.1+cu116 torchaudio==0.12.1 --extra-index-url https://download.pytorch.org/whl/cu116

pip install -r requirements.txt

You can build a Docker image with all dependencies installed.

See docker/README.md for more details.

We provide checkpoints for four different models, including RIFE, IFRNet, AMT-S, and EMA-VFI.

Download checkpoints from here (full) / here (separate).

P.S., RIFE-pro denotes the RIFE model trained with more data and epochs; RIFE-vgg denotes the RIFE model trained with the LPIPS loss.

python inference_img.py --img0 [IMG_0] --img1 [IMG_1] --output_dir [OUTPUT_DIR] --model [MODEL_NAME] --variant [VARIANT] --num [NUM] --gif

Examples:

python inference_img.py --img0 ./demo/I0_0.png --img1 ./demo/I0_1.png --model RIFE --variant DR --checkpoint ./checkpoints/RIFE/DR-RIFE-pro --save_dir ./results/I0_results_DR-RIFE-pro --num 1 1 1 1 1 1 1 --gif

python inference_img.py --img0 ./demo/I0_0.png --img1 ./demo/I0_1.png --model RIFE --variant DR --checkpoint ./checkpoints/RIFE/DR-RIFE-vgg --save_dir ./results/I0_results_DR-RIFE-vgg --num 1 1 1 1 1 1 1 --gif

python inference_img.py --img0 ./demo/I0_0.png --img1 ./demo/I0_1.png --model EMA-VFI --variant DR --checkpoint ./checkpoints/EMA-VFI/DR-EMA-VFI --save_dir ./results/I0_results_DR-EMA-VFI/ --num 1 1 1 1 1 1 1 --gif

--num NUM means that NUM frames are interpolated between every two consecutive frames.

--num NUM1 NUM2 ... means that NUM1 frames are interpolated between every two consecutive frames, then NUM2 frames are interpolated between every two consecutive frames of the resulting sequence, and so on.
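The effect of a multi-value --num can be worked out with a little arithmetic. The sketch below applies the rule described above pass by pass; the helper names `frames_after_passes` and `interpolation_factor` are hypothetical and are not part of the repository.

```python
from typing import Sequence


def frames_after_passes(num_inputs: int, nums: Sequence[int]) -> int:
    """Total frame count after applying each --num value in turn.

    A pass that inserts n frames between every two consecutive frames
    turns F frames into (F - 1) * (n + 1) + 1 frames.
    """
    frames = num_inputs
    for n in nums:
        frames = (frames - 1) * (n + 1) + 1
    return frames


def interpolation_factor(nums: Sequence[int]) -> int:
    """Overall multiplier on the number of frame intervals."""
    factor = 1
    for n in nums:
        factor *= n + 1
    return factor


if __name__ == "__main__":
    passes = [1, 1, 1, 1, 1, 1, 1]  # as in the image examples above
    print(frames_after_passes(2, passes))   # 129
    print(interpolation_factor(passes))     # 128
```

With two input frames, `--num 1 1 1 1 1 1 1` therefore yields 129 frames in total, i.e. x128 interpolation.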

python inference_video.py --video [VIDEO] --output_dir [OUTPUT_DIR] --model [MODEL_NAME] --variant [VARIANT] --num [NUM]

Examples:

python inference_video.py --video ./demo/demo.mp4 --model RIFE --variant DR --checkpoint ./checkpoints/RIFE/DR-RIFE-pro --save_dir ./results/demo_results_DR-RIFE-pro --num 3 --fps 15

P.S. If --fps is omitted, the output video will have the same fps as the input video.
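How --num and --fps interact can be estimated as follows. This is a back-of-the-envelope sketch that assumes the script writes every input frame and every interpolated frame to the output; the function `slow_motion_factor` and the 30 fps input used in the usage lines are hypothetical.

```python
from typing import Optional


def slow_motion_factor(num: int, input_fps: float,
                       output_fps: Optional[float] = None) -> float:
    """Approximate ratio of output duration to input duration.

    Inserting `num` frames per interval multiplies the frame count by
    roughly (num + 1); playing the result at `output_fps` instead of
    `input_fps` stretches it further by input_fps / output_fps.
    If `output_fps` is None, the input fps is reused.
    """
    if output_fps is None:
        output_fps = input_fps
    return (num + 1) * input_fps / output_fps


if __name__ == "__main__":
    # Hypothetical 30 fps input, without --fps: about 4x slower.
    print(slow_motion_factor(3, 30.0))
    # Same input with --num 3 --fps 15: about 8x slower.
    print(slow_motion_factor(3, 30.0, 15.0))
```

To keep the original playback speed instead, the output fps would need to be (num + 1) times the input fps.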


You can play with the interactive demo or install the webapp locally.

P.S., not required if you use docker

Follow ./webapp/backend/README.md to set up the environment for Segment Anything.

Follow ./webapp/webapp/README.md to set up the environment for the webapp.

cd ./webapp/backend/

python app.py

# open a new terminal

cd ./webapp/webapp/

yarn && yarn start

You can download the split Vimeo90K dataset with our distance indexing maps from here (or the full dataset), and then merge the parts:

cat vimeo_septuplet_split.zipa* > vimeo_septuplet_split.zip

Alternatively, you can download the original Vimeo90K dataset from here, and then generate the distance indexing maps (P.S. download the checkpoints for RAFT and put them under ./RAFT/models/ in advance):

python multiprocess_create_dis_index.py

Training command:

python train.py --model [MODEL_NAME] --variant [VARIANT]

Examples:

python train.py --model RIFE --variant D

python train.py --model RIFE --variant DR

python train.py --model AMT-S --variant D

python train.py --model AMT-S --variant DR

Testing with precomputed distance maps:

python test.py --model [MODEL_NAME] --variant [VARIANT]

Examples:

python test.py --model RIFE --variant D

python test.py --model RIFE --variant DR

Testing with uniform distance maps, whose values are the same as the time indexes:

python test.py --model [MODEL_NAME] --variant [VARIANT] --uniform

Examples:

python test.py --model RIFE --variant D --uniform

python test.py --model RIFE --variant DR --uniform
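As a rough illustration of what a uniform distance map means, the sketch below builds a map in which every pixel carries the same value as the scalar time index t. This reflects a reading of the --uniform description above rather than the repository's code; the function name `uniform_distance_map` and the nested-list representation are assumptions made for this example.

```python
from typing import List


def uniform_distance_map(height: int, width: int, t: float) -> List[List[float]]:
    """H x W map where every pixel holds the time index t (0 <= t <= 1)."""
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must lie in [0, 1]")
    # Build each row separately so rows do not share the same list object.
    return [[t] * width for _ in range(height)]


if __name__ == "__main__":
    for row in uniform_distance_map(2, 3, 0.5):
        print(row)
```

Such a map assumes that every pixel has covered the same fraction of its path at time t, so no precomputed optical flow is needed at test time.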

If you find this repository useful, please consider citing:

@article{zhong2023clearer,

title={Clearer Frames, Anytime: Resolving Velocity Ambiguity in Video Frame Interpolation},

author={Zhong, Zhihang and Krishnan, Gurunandan and Sun, Xiao and Qiao, Yu and Ma, Sizhuo and Wang, Jian},

journal={arXiv preprint arXiv:2311.08007},

year={2023}

}

We thank Dorian Chan, Zhirong Wu, and Stephen Lin for their insightful feedback and advice. Our thanks also go to Vu An Tran for developing the web application, and to Wei Wang for coordinating the user study.

Moreover, we appreciate the following projects for releasing their code:

[CVPR 2018] The Unreasonable Effectiveness of Deep Features as a Perceptual Metric

[ECCV 2020] RAFT: Recurrent All Pairs Field Transforms for Optical Flow

[ECCV 2022] Real-Time Intermediate Flow Estimation for Video Frame Interpolation

[CVPR 2022] IFRNet: Intermediate Feature Refine Network for Efficient Frame Interpolation

[CVPR 2023] AMT: All-Pairs Multi-Field Transforms for Efficient Frame Interpolation

[CVPR 2023] Extracting Motion and Appearance via Inter-Frame Attention for Efficient Video Frame Interpolation

[ICCV 2023] Segment Anything