This repository contains a regularly updated paper list for Speculative Decoding.

- Unlocking Efficiency in Large Language Model Inference: A Comprehensive Survey of Speculative Decoding

Heming Xia, Zhe Yang, Qingxiu Dong, Peiyi Wang, Yongqi Li, Tao Ge, Tianyu Liu, Wenjie Li, Zhifang Sui. [pdf], [code], 2024.01.

- Beyond the Speculative Game: A Survey of Speculative Execution in Large Language Models

Chen Zhang, Zhuorui Liu, Dawei Song. [pdf], 2024.04.

-

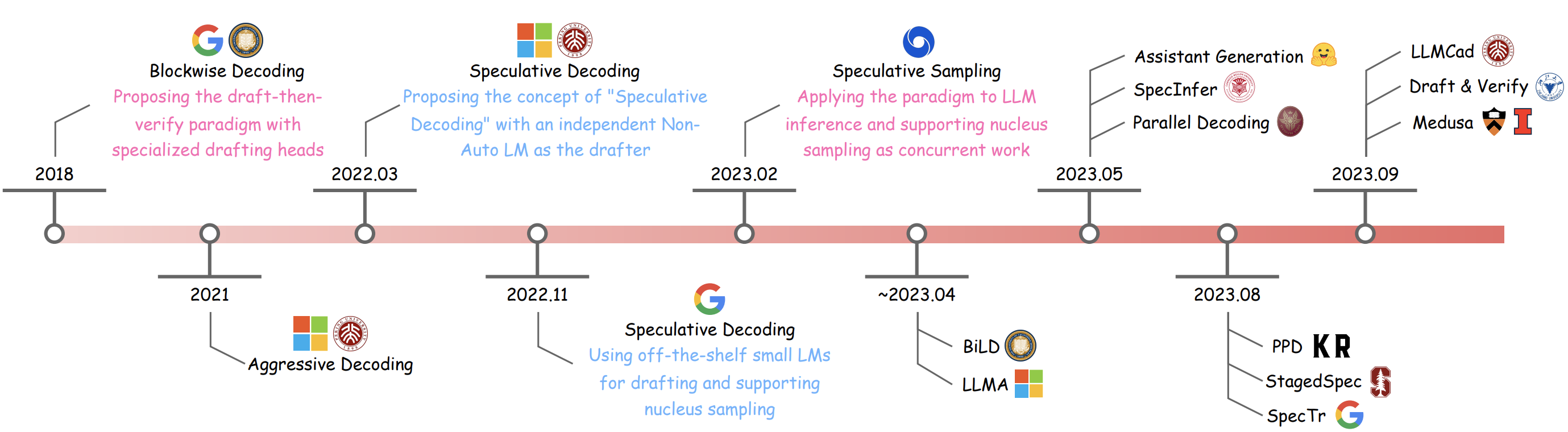

Blockwise Parallel Decoding for Deep Autoregressive Models

Mitchell Stern, Noam Shazeer, Jakob Uszkoreit. [pdf], 2018.11. -

Speculative Decoding: Exploiting Speculative Execution for Accelerating Seq2seq Generation

Heming Xia, Tao Ge, Peiyi Wang, Si-Qing Chen, Furu Wei, Zhifang Sui. [pdf], [code], 2022.03. -

Speculative Decoding with Big Little Decoder

Sehoon Kim, Karttikeya Mangalam, Suhong Moon, John Canny, Jitendra Malik, Michael W. Mahoney, Amir Gholami, Kurt Keutzer. [pdf], [code], 2023.02. -

Accelerating Transformer Inference for Translation via Parallel Decoding

Andrea Santilli, Silvio Severino, Emilian Postolache, Valentino Maiorca, Michele Mancusi, Riccardo Marin, Emanuele Rodolà. [pdf], 2023.05. -

SPEED: Speculative Pipelined Execution for Efficient Decoding

Coleman Hooper, Sehoon Kim, Hiva Mohammadzadeh, Hasan Genc, Kurt Keutzer, Amir Gholami, Sophia Shao. [pdf], 2023.10. -

Fast and Robust Early-Exiting Framework for Autoregressive Language Models with Synchronized Parallel Decoding

Sangmin Bae, Jongwoo Ko, Hwanjun Song, Se-Young Yun. [pdf], [code], 2023.10.

- Fast Inference from Transformers via Speculative Decoding

Yaniv Leviathan, Matan Kalman, Yossi Matias. [pdf], [code], 2022.11.

- Accelerating Large Language Model Decoding with Speculative Sampling

Charlie Chen, Sebastian Borgeaud, Geoffrey Irving, Jean-Baptiste Lespiau, Laurent Sifre, John Jumper. [pdf], [code], 2023.02.

- Inference with Reference: Lossless Acceleration of Large Language Models

Nan Yang, Tao Ge, Liang Wang, Binxing Jiao, Daxin Jiang, Linjun Yang, Rangan Majumder, Furu Wei. [pdf], 2023.04.

- SpecInfer: Accelerating Generative LLM Serving with Speculative Inference and Token Tree Verification

Xupeng Miao, Gabriele Oliaro, Zhihao Zhang, Xinhao Cheng, Zeyu Wang, Rae Ying Yee Wong, Alan Zhu, Lijie Yang, Xiaoxiang Shi, Chunan Shi, Zhuoming Chen, Daiyaan Arfeen, Reyna Abhyankar, Zhihao Jia. [pdf], [code], 2023.05.

- Predictive Pipelined Decoding: A Compute-Latency Trade-off for Exact LLM Decoding

Seongjun Yang, Gibbeum Lee, Jaewoong Cho, Dimitris Papailiopoulos, Kangwook Lee. [pdf], 2023.08.

- Accelerating LLM Inference with Staged Speculative Decoding

Benjamin Spector, Chris Re. [pdf], 2023.08.

- SpecTr: Fast Speculative Decoding via Optimal Transport

Ziteng Sun, Ananda Theertha Suresh, Jae Hun Ro, Ahmad Beirami, Himanshu Jain, Felix Yu, Michael Riley, Sanjiv Kumar. [pdf], 2023.08.

- Draft & Verify: Lossless Large Language Model Acceleration via Self-Speculative Decoding

Jun Zhang, Jue Wang, Huan Li, Lidan Shou, Ke Chen, Gang Chen, Sharad Mehrotra. [pdf], [code], 2023.09.

- Online Speculative Decoding

Xiaoxuan Liu, Lanxiang Hu, Peter Bailis, Ion Stoica, Zhijie Deng, Alvin Cheung, Hao Zhang. [pdf], 2023.10.

- DistillSpec: Improving Speculative Decoding via Knowledge Distillation

Yongchao Zhou, Kaifeng Lyu, Ankit Singh Rawat, Aditya Krishna Menon, Afshin Rostamizadeh, Sanjiv Kumar, Jean-François Kagy, Rishabh Agarwal. [pdf], 2023.10.

- REST: Retrieval-Based Speculative Decoding

Zhenyu He, Zexuan Zhong, Tianle Cai, Jason D Lee, Di He. [pdf], [code], 2023.11.

- Speculative Contrastive Decoding

Hongyi Yuan, Keming Lu, Fei Huang, Zheng Yuan, Chang Zhou. [pdf], 2023.11.

- PaSS: Parallel Speculative Sampling

Giovanni Monea, Armand Joulin, Edouard Grave. [pdf], 2023.11.

- Cascade Speculative Drafting for Even Faster LLM Inference

Ziyi Chen, Xiaocong Yang, Jiacheng Lin, Chenkai Sun, Jie Huang, Kevin Chen-Chuan Chang. [pdf], [code], 2023.12.

- SLiM: Speculative Decoding with Hypothesis Reduction

Hongxia Jin, Chi-Heng Lin, Shikhar Tuli, James Seale Smith, Yen-Chang Hsu, Yilin Shen. [pdf], 2023.12.

- Graph-Structured Speculative Decoding

Zhuocheng Gong, Jiahao Liu, Ziyue Wang, Pengfei Wu, Jingang Wang, Xunliang Cai, Dongyan Zhao, Rui Yan. [pdf], 2023.12.

- Multi-Candidate Speculative Decoding

Sen Yang, Shujian Huang, Xinyu Dai, Jiajun Chen. [pdf], [code], 2024.01.

- Medusa: Simple LLM Inference Acceleration Framework with Multiple Decoding Heads

Tianle Cai, Yuhong Li, Zhengyang Geng, Hongwu Peng, Jason D. Lee, Deming Chen, Tri Dao. [pdf], [code], 2024.01.

- BiTA: Bi-Directional Tuning for Lossless Acceleration in Large Language Models

Feng Lin, Hanling Yi, Hongbin Li, Yifan Yang, Xiaotian Yu, Guangming Lu, Rong Xiao. [pdf], [code], 2024.01.

- EAGLE: Speculative Sampling Requires Rethinking Feature Uncertainty

Yuhui Li, Fangyun Wei, Chao Zhang, Hongyang Zhang. [pdf], [code], 2024.01.

- GliDe with a CaPE: A Low-Hassle Method to Accelerate Speculative Decoding

Cunxiao Du, Jing Jiang, Xu Yuanchen, Jiawei Wu, Sicheng Yu, Yongqi Li, Shenggui Li, Kai Xu, Liqiang Nie, Zhaopeng Tu, Yang You. [pdf], [code], 2024.02.

- Break the Sequential Dependency of LLM Inference Using Lookahead Decoding

Yichao Fu, Peter Bailis, Ion Stoica, Hao Zhang. [pdf], [code], 2024.02.

- Hydra: Sequentially-Dependent Draft Heads for Medusa Decoding

Zachary Ankner, Rishab Parthasarathy, Aniruddha Nrusimha, Christopher Rinard, Jonathan Ragan-Kelley, William Brandon. [pdf], [code], 2024.02.

- Speculative Streaming: Fast LLM Inference without Auxiliary Models

Nikhil Bhendawade, Irina Belousova, Qichen Fu, Henry Mason, Mohammad Rastegari, Mahyar Najibi. [pdf], 2024.02.

- Generation Meets Verification: Accelerating Large Language Model Inference with Smart Parallel Auto-Correct Decoding

Hanling Yi, Feng Lin, Hongbin Li, Peiyang Ning, Xiaotian Yu, Rong Xiao. [pdf], 2024.02.

- Sequoia: Scalable, Robust, and Hardware-aware Speculative Decoding

Zhuoming Chen, Avner May, Ruslan Svirschevski, Yuhsun Huang, Max Ryabinin, Zhihao Jia, Beidi Chen. [pdf], [code], 2024.02.

- ProPD: Dynamic Token Tree Pruning and Generation for LLM Parallel Decoding

Shuzhang Zhong, Zebin Yang, Meng Li, Ruihao Gong, Runsheng Wang, Ru Huang. [pdf], 2024.02.

- Ouroboros: Speculative Decoding with Large Model Enhanced Drafting

Weilin Zhao, Yuxiang Huang, Xu Han, Chaojun Xiao, Zhiyuan Liu, Maosong Sun. [pdf], [code], 2024.02.

- Recursive Speculative Decoding: Accelerating LLM Inference via Sampling Without Replacement

Wonseok Jeon, Mukul Gagrani, Raghavv Goel, Junyoung Park, Mingu Lee, Christopher Lott. [pdf], 2024.02.

- Chimera: A Lossless Decoding Method for Accelerating Large Language Models Inference by Fusing all Tokens

Ziqian Zeng, Jiahong Yu, Qianshi Pang, Zihao Wang, Huiping Zhuang, Cen Chen. [pdf], 2024.02.

- Speculative Decoding via Early-exiting for Faster LLM Inference with Thompson Sampling Control Mechanism

Jiahao Liu, Qifan Wang, Jingang Wang, Xunliang Cai. [pdf], 2024.02.

- Specuna: A Speculative Vicuna with Shallow Layer Reuse

Anonymous ACL submission. [pdf], 2024.02.

- Minions: Accelerating Large Language Model Inference with Adaptive and Collective Speculative Decoding

Siqi Wang, Hailong Yang, Xuezhu Wang, Tongxuan Liu, Pengbo Wang, Xuning Liang, Kejie Ma, Tianyu Feng, Xin You, Yongjun Bao, Yi Liu, Zhongzhi Luan, Depei Qian. [pdf], 2024.02.

- CLLMs: Consistency Large Language Models

Siqi Kou, Lanxiang Hu, Zhezhi He, Zhijie Deng, Hao Zhang. [pdf], [code], [blog], 2024.03.

- Recurrent Drafter for Fast Speculative Decoding in Large Language Models

Aonan Zhang, Chong Wang, Yi Wang, Xuanyu Zhang, Yunfei Cheng. [pdf], 2024.03.

- Optimal Block-Level Draft Verification for Accelerating Speculative Decoding

Ziteng Sun, Jae Hun Ro, Ahmad Beirami, Ananda Theertha Suresh. [pdf], 2024.03.

- SDSAT: Accelerating LLM Inference through Speculative Decoding with Semantic Adaptive Tokens

Chengbo Liu, Yong Zhu. [pdf], 2024.03.

- Lossless Acceleration of Large Language Model via Adaptive N-gram Parallel Decoding

Jie Ou, Yueming Chen, Wenhong Tian. [pdf], 2024.04.

- Exploring and Improving Drafts in Blockwise Parallel Decoding

Taehyeon Kim, Ananda Theertha Suresh, Kishore Papineni, Michael Riley, Sanjiv Kumar, Adrian Benton. [pdf], 2024.04.

- Parallel Decoding via Hidden Transfer for Lossless Large Language Model Acceleration

Pengfei Wu, Jiahao Liu, Zhuocheng Gong, Qifan Wang, Jinpeng Li, Jingang Wang, Xunliang Cai, Dongyan Zhao. [pdf], 2024.04.

- BASS: Batched Attention-optimized Speculative Sampling

Haifeng Qian, Sujan Kumar Gonugondla, Sungsoo Ha, Mingyue Shang, Sanjay Krishna Gouda, Ramesh Nallapati, Sudipta Sengupta, Xiaofei Ma, Anoop Deoras. [pdf], 2024.04.

- LayerSkip: Enabling Early Exit Inference and Self-Speculative Decoding

Mostafa Elhoushi, Akshat Shrivastava, Diana Liskovich, Basil Hosmer, Bram Wasti, Liangzhen Lai, Anas Mahmoud, Bilge Acun, Saurabh Agarwal, Ahmed Roman, Ahmed A Aly, Beidi Chen, Carole-Jean Wu. [pdf], 2024.04.

- Kangaroo: Lossless Self-Speculative Decoding via Double Early Exiting

Fangcheng Liu, Yehui Tang, Zhenhua Liu, Yunsheng Ni, Kai Han, Yunhe Wang. [pdf], [code], 2024.04.

- Accelerating Production LLMs with Combined Token/Embedding Speculators

Davis Wertheimer, Joshua Rosenkranz, Thomas Parnell, Sahil Suneja, Pavithra Ranganathan, Raghu Ganti, Mudhakar Srivatsa. [pdf], 2024.04.

- Better & Faster Large Language Models via Multi-token Prediction

Fabian Gloeckle, Badr Youbi Idrissi, Baptiste Rozière, David Lopez-Paz, Gabriel Synnaeve. [pdf], 2024.04.

- Clover: Regressive Lightweight Speculative Decoding with Sequential Knowledge

Bin Xiao, Chunan Shi, Xiaonan Nie, Fan Yang, Xiangwei Deng, Lei Su, Weipeng Chen, Bin Cui. [pdf], 2024.05.

- Accelerating Speculative Decoding using Dynamic Speculation Length

Jonathan Mamou, Oren Pereg, Daniel Korat, Moshe Berchansky, Nadav Timor, Moshe Wasserblat, Roy Schwartz. [pdf], 2024.05.

- EMS-SD: Efficient Multi-sample Speculative Decoding for Accelerating Large Language Models

Yunsheng Ni, Chuanjian Liu, Yehui Tang, Kai Han, Yunhe Wang. [pdf], [code], 2024.05.

- Nearest Neighbor Speculative Decoding for LLM Generation and Attribution

Minghan Li, Xilun Chen, Ari Holtzman, Beidi Chen, Jimmy Lin, Wen-tau Yih, Xi Victoria Lin. [pdf], 2024.05.

- Hardware-Aware Parallel Prompt Decoding for Memory-Efficient Acceleration of LLM Inference

Hao (Mark) Chen, Wayne Luk, Ka Fai Cedric Yiu, Rui Li, Konstantin Mishchenko, Stylianos I. Venieris, Hongxiang Fan. [pdf], [code], 2024.05.

- Faster Cascades via Speculative Decoding

Harikrishna Narasimhan, Wittawat Jitkrittum, Ankit Singh Rawat, Seungyeon Kim, Neha Gupta, Aditya Krishna Menon, Sanjiv Kumar. [pdf], 2024.05.

- S3D: A Simple and Cost-Effective Self-Speculative Decoding Scheme for Low-Memory GPUs

Wei Zhong, Manasa Bharadwaj. [pdf], 2024.05.

- SpecDec++: Boosting Speculative Decoding via Adaptive Candidate Lengths

Kaixuan Huang, Xudong Guo, Mengdi Wang. [pdf], 2024.05.

- Accelerated Speculative Sampling Based on Tree Monte Carlo

Zhengmian Hu, Heng Huang. [pdf], 2024.05.

- SpecExec: Massively Parallel Speculative Decoding for Interactive LLM Inference on Consumer Devices

Ruslan Svirschevski, Avner May, Zhuoming Chen, Beidi Chen, Zhihao Jia, Max Ryabinin. [pdf], 2024.06.

- Amphista: Accelerate LLM Inference with Bi-directional Multiple Drafting Heads in a Non-autoregressive Style

Zeping Li, Xinlong Yang, Ziheng Gao, Ji Liu, Zhuang Liu, Dong Li, Jinzhang Peng, Lu Tian, Emad Barsoum. [pdf], 2024.06.

- Optimizing Speculative Decoding for Serving Large Language Models Using Goodput

Xiaoxuan Liu, Cade Daniel, Langxiang Hu, Woosuk Kwon, Zhuohan Li, Xiangxi Mo, Alvin Cheung, Zhijie Deng, Ion Stoica, Hao Zhang. [pdf], 2024.06.

- EAGLE-2: Faster Inference of Language Models with Dynamic Draft Trees

Yuhui Li, Fangyun Wei, Chao Zhang, Hongyang Zhang. [pdf], 2024.06.

- Make Some Noise: Unlocking Language Model Parallel Inference Capability through Noisy Training

Yixuan Wang, Xianzhen Luo, Fuxuan Wei, Yijun Liu, Qingfu Zhu, Xuanyu Zhang, Qing Yang, Dongliang Xu, Wanxiang Che. [pdf], 2024.06.

- OPT-Tree: Speculative Decoding with Adaptive Draft Tree Structure

Jikai Wang, Yi Su, Juntao Li, Qinrong Xia, Zi Ye, Xinyu Duan, Zhefeng Wang, Min Zhang. [pdf], 2024.06.

- Cerberus: Efficient Inference with Adaptive Parallel Decoding and Sequential Knowledge Enhancement

Anonymous EMNLP submission. [pdf], 2024.06.

- SpecHub: Provable Acceleration to Multi-Draft Speculative Decoding

Anonymous EMNLP submission. [pdf], 2024.06.

- S2D: Sorted Speculative Decoding For More Efficient Deployment of Nested Large Language Models

Parsa Kavehzadeh, Mohammadreza Pourreza, Mojtaba Valipour, Tinashu Zhu, Haoli Bai, Ali Ghodsi, Boxing Chen, Mehdi Rezagholizadeh. [pdf], 2024.07.

- Multi-Token Joint Speculative Decoding for Accelerating Large Language Model Inference

Zongyue Qin, Ziniu Hu, Zifan He, Neha Prakriya, Jason Cong, Yizhou Sun. [pdf], 2024.07.

- PipeInfer: Accelerating LLM Inference using Asynchronous Pipelined Speculation

Branden Butler, Sixing Yu, Arya Mazaheri, Ali Jannesari. [pdf], 2024.07.

- Adaptive Draft-Verification for Efficient Large Language Model Decoding

Xukun Liu, Bowen Lei, Ruqi Zhang, Dongkuan Xu. [pdf], 2024.07.

- Graph-Structured Speculative Decoding

Zhuocheng Gong, Jiahao Liu, Ziyue Wang, Pengfei Wu, Jingang Wang, Xunliang Cai, Dongyan Zhao, Rui Yan. [pdf], 2024.07.

- On Speculative Decoding for Multimodal Large Language Models

Mukul Gagrani, Raghavv Goel, Wonseok Jeon, Junyoung Park, Mingu Lee, Christopher Lott. [pdf], 2024.04.

- TriForce: Lossless Acceleration of Long Sequence Generation with Hierarchical Speculative Decoding

Hanshi Sun, Zhuoming Chen, Xinyu Yang, Yuandong Tian, Beidi Chen. [pdf], [code], 2024.04.

- Direct Alignment of Draft Model for Speculative Decoding with Chat-Fine-Tuned LLMs

Raghavv Goel, Mukul Gagrani, Wonseok Jeon, Junyoung Park, Mingu Lee, Christopher Lott. [pdf], 2024.02.

- Spec-Bench: A Comprehensive Benchmark for Speculative Decoding

Heming Xia, Zhe Yang, Qingxiu Dong, Peiyi Wang, Yongqi Li, Tao Ge, Tianyu Liu, Wenjie Li, Zhifang Sui. [pdf], [code], [blog], 2024.02.

- Instantaneous Grammatical Error Correction with Shallow Aggressive Decoding

Xin Sun, Tao Ge, Furu Wei, Houfeng Wang. [pdf], [code], 2021.07.

- LLMCad: Fast and Scalable On-device Large Language Model Inference

Daliang Xu, Wangsong Yin, Xin Jin, Ying Zhang, Shiyun Wei, Mengwei Xu, Xuanzhe Liu. [pdf], 2023.09.

- Accelerating Retrieval-Augmented Language Model Serving with Speculation

Zhihao Zhang, Alan Zhu, Lijie Yang, Yihua Xu, Lanting Li, Phitchaya Mangpo Phothilimthana, Zhihao Jia. [pdf], 2023.10.

- Lookahead: An Inference Acceleration Framework for Large Language Model with Lossless Generation Accuracy

Yao Zhao, Zhitian Xie, Chenyi Zhuang, Jinjie Gu. [pdf], [code], 2023.12.

- A Simple Framework to Accelerate Multilingual Language Model for Monolingual Text Generation

Jimin Hong, Gibbeum Lee, Jaewoong Cho. [pdf], 2024.01.

- Accelerating Greedy Coordinate Gradient via Probe Sampling

Yiran Zhao, Wenyue Zheng, Tianle Cai, Xuan Long Do, Kenji Kawaguchi, Anirudh Goyal, Michael Shieh. [pdf], 2024.03.

- Optimized Speculative Sampling for GPU Hardware Accelerators

Dominik Wagner, Seanie Lee, Ilja Baumann, Philipp Seeberger, Korbinian Riedhammer, Tobias Bocklet. [pdf], 2024.06.

- Towards Fast Multilingual LLM Inference: Speculative Decoding and Specialized Drafters

Euiin Yi, Taehyeon Kim, Hongseok Jeung, Du-Seong Chang, Se-Young Yun. [pdf], 2024.06.

- SEED: Accelerating Reasoning Tree Construction via Scheduled Speculative Decoding

Zhenglin Wang, Jialong Wu, Yilong Lai, Congzhi Zhang, Deyu Zhou. [pdf], 2024.06.

- Speculative RAG: Enhancing Retrieval Augmented Generation through Drafting

Zilong Wang, Zifeng Wang, Long Le, Huaixiu Steven Zheng, Swaroop Mishra, Vincent Perot, Yuwei Zhang, Anush Mattapalli, Ankur Taly, Jingbo Shang, Chen-Yu Lee, Tomas Pfister. [pdf], 2024.07.

- The Synergy of Speculative Decoding and Batching in Serving Large Language Models

Qidong Su, Christina Giannoula, Gennady Pekhimenko. [pdf], 2023.10.

- Decoding Speculative Decoding

Minghao Yan, Saurabh Agarwal, Shivaram Venkataraman. [pdf], [code], 2024.02.

- How Speculative Can Speculative Decoding Be?

Zhuorui Liu, Chen Zhang, Dawei Song. [pdf], [code], 2024.05.

- Fast and Slow Generating: An Empirical Study on Large and Small Language Models Collaborative Decoding

Kaiyan Zhang, Jianyu Wang, Ning Ding, Biqing Qi, Ermo Hua, Xingtai Lv, Bowen Zhou. [pdf], [code], 2024.06.

Assisted Generation: a new direction toward low-latency text generation. Hugging Face. 2023.05. [Blog] [Code]

Medusa: Simple Framework for Accelerating LLM Generation with Multiple Decoding Heads. Princeton, UIUC. 2023.09. [Blog] [Code]

An Optimal Lossy Variant of Speculative Decoding. Unsupervised Thoughts (blog). 2023.09. [Blog] [Code]

Break the Sequential Dependency of LLM Inference Using Lookahead Decoding. LMSys. 2023.11. [Blog] [Code]

Accelerating Generative AI with PyTorch II: GPT, Fast. PyTorch. 2023.11. [Blog] [Code]

Prompt Lookup Decoding. Apoorv Saxena. 2023.11. [Code] [Colab]

REST: Retrieval-Based Speculative Decoding. Peking University, Princeton University. 2023.11. [Blog] [Code]

EAGLE: Lossless Acceleration of LLM Decoding by Feature Extrapolation. Vector Institute, University of Waterloo, Peking University. 2023.12. [Blog] [Code]

SEQUOIA: Serving exact Llama2-70B on an RTX4090 with half-second per token latency. Carnegie Mellon University, Together AI, Yandex, Meta AI. 2024.02. [Blog] [Code]

- We may have missed important works in this field. Please feel free to contribute and promote your own work or other related works here! Thanks in advance for your efforts.

If you find the resources in this repository useful, please cite our paper:

    @misc{xia2024unlocking,
      title={Unlocking Efficiency in Large Language Model Inference: A Comprehensive Survey of Speculative Decoding},
      author={Heming Xia and Zhe Yang and Qingxiu Dong and Peiyi Wang and Yongqi Li and Tao Ge and Tianyu Liu and Wenjie Li and Zhifang Sui},
      year={2024},
      eprint={2401.07851},
      archivePrefix={arXiv},
      primaryClass={cs.CL}
    }