PointGPT: Auto-regressively Generative Pre-training from Point Clouds ArXiv

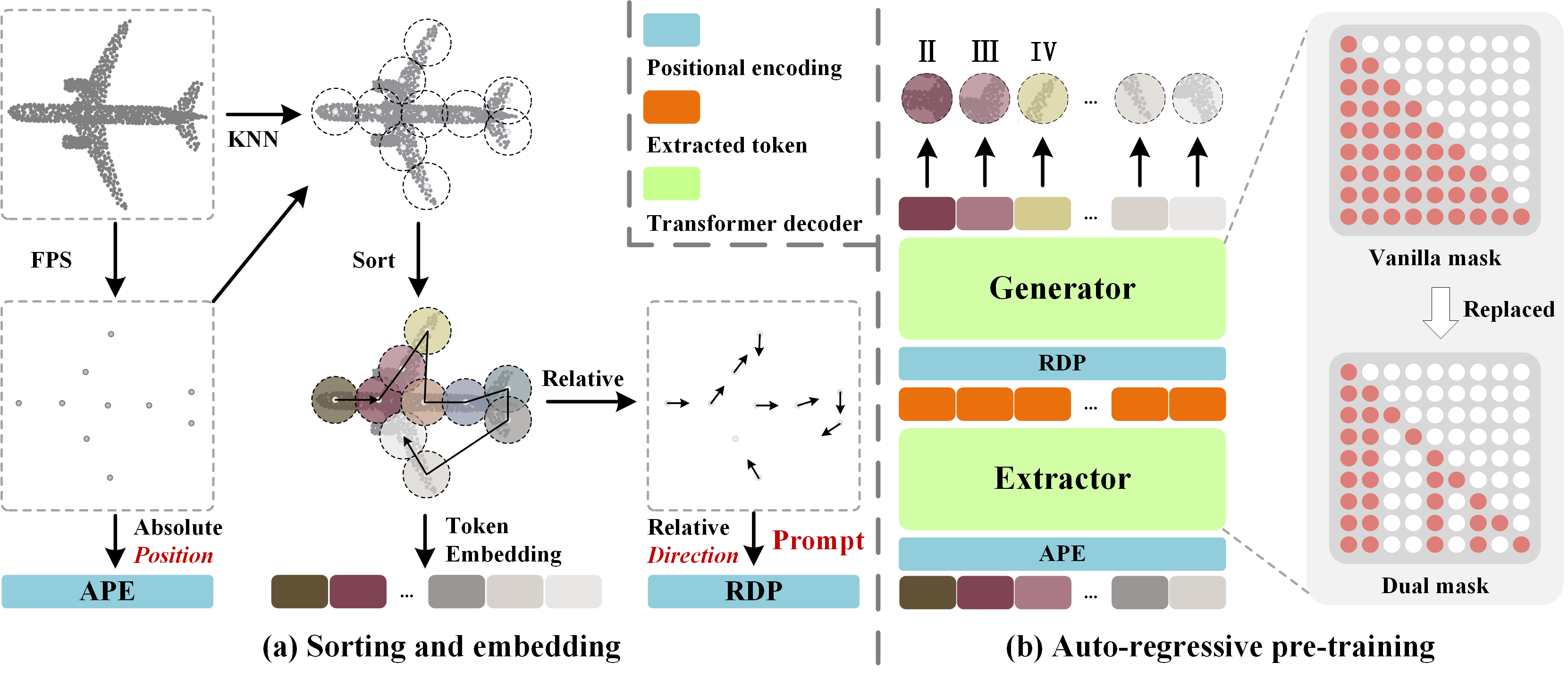

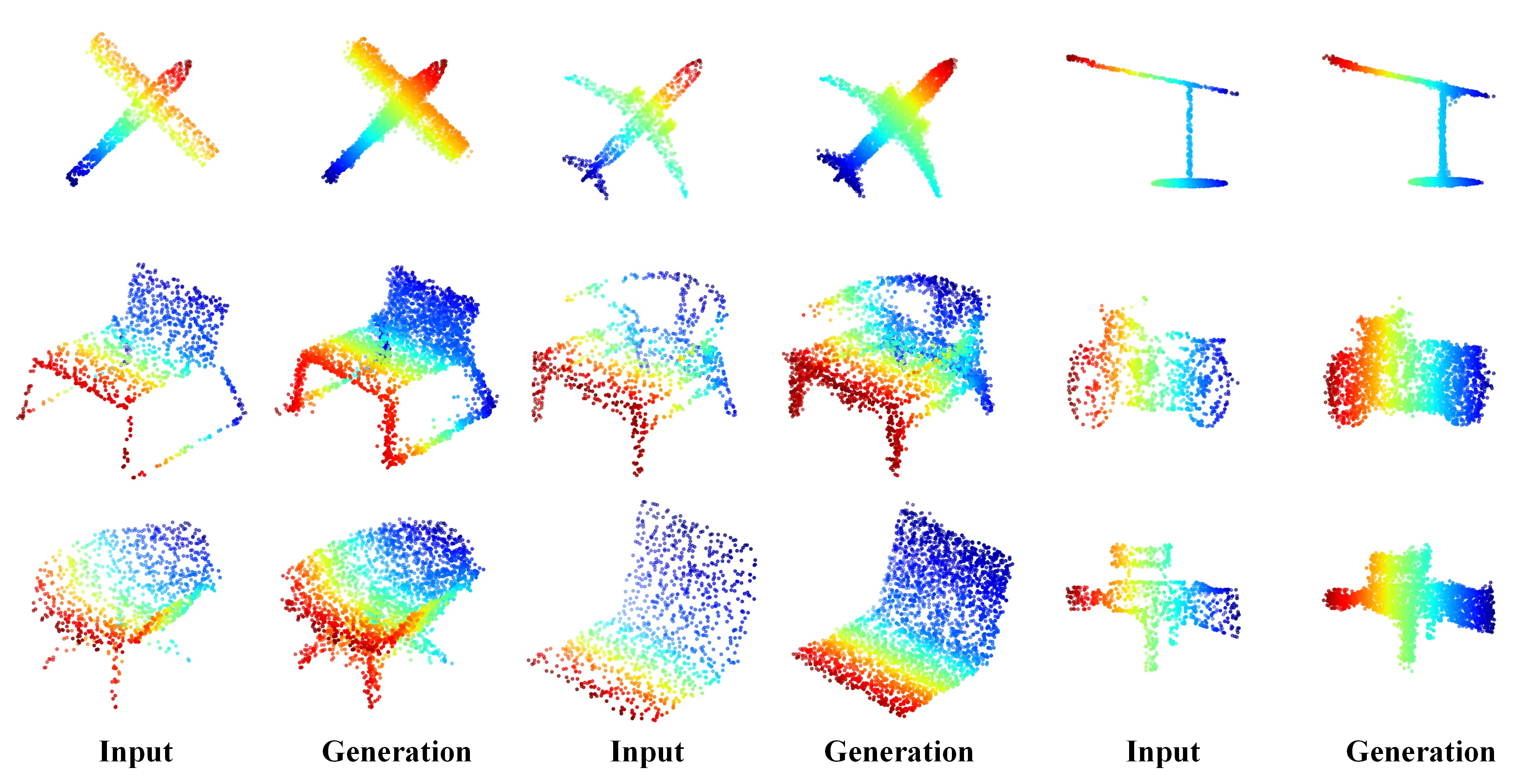

In this work, we present PointGPT, a novel approach that extends the concept of GPT to point clouds, utilizing a point cloud auto-regressive generation task for pre-training transformer models. In object classification tasks, our PointGPT achieves 94.9% accuracy on the ModelNet40 dataset and 93.4% accuracy on the ScanObjectNN dataset, outperforming all other transformer models. In few-shot learning tasks, our method also attains new SOTA performance on all four benchmarks.

[2023.09.22] PointGPT has been accepted by NeurIPS 2023!

[2023.09.08] The unlabeled hybrid dataset and the labeled hybrid dataset have been released!

[2023.08.19] Code has been updated; PointGPT-B and PointGPT-L models have been released!

[2023.06.20] Code and the PointGPT-S models have been released!

Requirements: PyTorch >= 1.7.0; Python >= 3.7; CUDA >= 9.0; GCC >= 4.9; torchvision.
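Before building the extensions, it can help to confirm that the interpreter and PyTorch meet the stated minimums. The following is a minimal sketch, not part of the repository: the helper names `version_tuple`, `meets_minimum`, and `check_environment` are hypothetical, and the CUDA and GCC versions are not checked here.

```python
import re
import sys


def version_tuple(version: str) -> tuple:
    """Turn a string such as '1.13.1+cu117' into (1, 13, 1).

    Local build tags after '+' and non-numeric suffixes are ignored,
    and short versions are padded with zeros ('1.7' -> (1, 7, 0)).
    """
    parts = []
    for piece in version.split("+")[0].split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break
        parts.append(int(match.group()))
    while len(parts) < 3:
        parts.append(0)
    return tuple(parts)


def meets_minimum(installed: str, minimum: str) -> bool:
    """True if the installed version is at least the minimum version."""
    return version_tuple(installed) >= version_tuple(minimum)


def check_environment() -> dict:
    """Report whether Python and (if importable) PyTorch meet the minimums."""
    report = {"python>=3.7": sys.version_info[:2] >= (3, 7)}
    try:
        import torch  # optional: only inspected when PyTorch is installed
    except ImportError:
        report["torch>=1.7.0"] = None  # not installed, nothing to compare
    else:
        report["torch>=1.7.0"] = meets_minimum(torch.__version__, "1.7.0")
        report["cuda_available"] = torch.cuda.is_available()
    return report


if __name__ == "__main__":
    for name, ok in check_environment().items():
        print(f"{name}: {ok}")
```

A value of `None` for the PyTorch entry means the package could not be imported at all.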

pip install -r requirements.txt

# Chamfer Distance & emd

cd ./extensions/chamfer_dist

python setup.py install --user

cd ../emd

python setup.py install --user

# PointNet++

pip install "git+https://github.com/erikwijmans/Pointnet2_PyTorch.git#egg=pointnet2_ops&subdirectory=pointnet2_ops_lib"

# GPU kNN

pip install --upgrade https://github.com/unlimblue/KNN_CUDA/releases/download/0.2/KNN_CUDA-0.2-py3-none-any.whl

Our training data for the PointGPT-S model encompasses ShapeNet, ScanObjectNN, ModelNet40, and ShapeNetPart datasets. For detailed information, please refer to DATASET.md.

To pretrain the PointGPT-B and PointGPT-L models, we use both the unlabeled hybrid dataset and the labeled hybrid dataset, which are available for download here.

PointGPT-S:

| Task | Dataset | Config | Acc. | Download |
|---|---|---|---|---|
| Pre-training | ShapeNet | pretrain.yaml | N.A. | here |
| Classification | ScanObjectNN | finetune_scan_hardest.yaml | 86.9% | here |
| Classification | ScanObjectNN | finetune_scan_objbg.yaml | 91.6% | here |
| Classification | ScanObjectNN | finetune_scan_objonly.yaml | 90.0% | here |
| Classification | ModelNet40(1k) | finetune_modelnet.yaml | 94.0% | here |
| Classification | ModelNet40(8k) | finetune_modelnet_8k.yaml | 94.2% | here |
| Part segmentation | ShapeNetPart | segmentation | 86.2% mIoU | here |

| Task | Dataset | Config | 5w10s Acc. (%) | 5w20s Acc. (%) | 10w10s Acc. (%) | 10w20s Acc. (%) |
|---|---|---|---|---|---|---|
| Few-shot learning | ModelNet40 | fewshot.yaml | 96.8 ± 2.0 | 98.6 ± 1.1 | 92.6 ± 4.6 | 95.2 ± 3.4 |

PointGPT-B:

| Task | Dataset | Config | Acc. | Download |
|---|---|---|---|---|
| Pre-training | UnlabeledHybrid | pretrain.yaml | N.A. | here |
| Post-pre-training | LabeledHybrid | post_pretrain.yaml | N.A. | here |
| Classification | ScanObjectNN | finetune_scan_hardest.yaml | 91.9% | here |
| Classification | ScanObjectNN | finetune_scan_objbg.yaml | 95.8% | here |
| Classification | ScanObjectNN | finetune_scan_objonly.yaml | 95.2% | here |
| Classification | ModelNet40(1k) | finetune_modelnet.yaml | 94.4% | here |
| Classification | ModelNet40(8k) | finetune_modelnet_8k.yaml | 94.6% | here |
| Part segmentation | ShapeNetPart | segmentation | 86.5% mIoU | here |

| Task | Dataset | Config | 5w10s Acc. (%) | 5w20s Acc. (%) | 10w10s Acc. (%) | 10w20s Acc. (%) |
|---|---|---|---|---|---|---|
| Few-shot learning | ModelNet40 | fewshot.yaml | 97.5 ± 2.0 | 98.8 ± 1.0 | 93.5 ± 4.0 | 95.8 ± 3.0 |

PointGPT-L:

| Task | Dataset | Config | Acc. | Download |
|---|---|---|---|---|
| Pre-training | UnlabeledHybrid | pretrain.yaml | N.A. | here |
| Post-pre-training | LabeledHybrid | post_pretrain.yaml | N.A. | here |
| Classification | ScanObjectNN | finetune_scan_hardest.yaml | 93.4% | here |
| Classification | ScanObjectNN | finetune_scan_objbg.yaml | 97.2% | here |
| Classification | ScanObjectNN | finetune_scan_objonly.yaml | 96.6% | here |
| Classification | ModelNet40(1k) | finetune_modelnet.yaml | 94.7% | here |
| Classification | ModelNet40(8k) | finetune_modelnet_8k.yaml | 94.9% | here |
| Part segmentation | ShapeNetPart | segmentation | 86.6% mIoU | here |

| Task | Dataset | Config | 5w10s Acc. (%) | 5w20s Acc. (%) | 10w10s Acc. (%) | 10w20s Acc. (%) |
|---|---|---|---|---|---|---|
| Few-shot learning | ModelNet40 | fewshot.yaml | 98.0 ± 1.9 | 99.0 ± 1.0 | 94.1 ± 3.3 | 96.1 ± 2.8 |
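The few-shot entries are written as "mean ± deviation". A minimal sketch of how such an entry could be produced from per-fold accuracies follows. It assumes the ± value is a standard deviation over the folds and uses the population form (`statistics.pstdev`); the README does not state which form was used, and the function names and sample numbers are hypothetical.

```python
import statistics


def summarize_folds(accuracies):
    """Return (mean, population std) of per-fold accuracies, in percent."""
    if not accuracies:
        raise ValueError("at least one fold accuracy is required")
    mean = statistics.mean(accuracies)
    std = statistics.pstdev(accuracies)  # assumption: population std
    return mean, std


def format_result(accuracies):
    """Format per-fold accuracies as 'mean ± std' with one decimal place."""
    mean, std = summarize_folds(accuracies)
    return f"{mean:.1f} ± {std:.1f}"


if __name__ == "__main__":
    # Hypothetical accuracies from two folds, for illustration only.
    print(format_result([96.0, 98.0]))
```

Switching to `statistics.stdev` would give the sample standard deviation instead, which is slightly larger for a small number of folds.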

To pretrain PointGPT, run the following command.

CUDA_VISIBLE_DEVICES=<GPU> python main.py --config cfgs/<MODEL_NAME>/pretrain.yaml --exp_name <output_file_name>

To post-pretrain PointGPT, run the following command.

CUDA_VISIBLE_DEVICES=<GPU> python main.py --config cfgs/<MODEL_NAME>/post_pretrain.yaml --exp_name <output_file_name> --finetune_model

To fine-tune on ScanObjectNN, run the following command:

CUDA_VISIBLE_DEVICES=<GPUs> python main.py --config cfgs/<MODEL_NAME>/finetune_scan_hardest.yaml \
--finetune_model --exp_name <output_file_name> --ckpts <path/to/pre-trained/model>

To fine-tune on ModelNet40, run the following command:

CUDA_VISIBLE_DEVICES=<GPUs> python main.py --config cfgs/<MODEL_NAME>/finetune_modelnet.yaml \
--finetune_model --exp_name <output_file_name> --ckpts <path/to/pre-trained/model>

To run voting on ModelNet40, run the following command:

CUDA_VISIBLE_DEVICES=<GPUs> python main.py --test --config cfgs/<MODEL_NAME>/finetune_modelnet.yaml \
--exp_name <output_file_name> --ckpts <path/to/best/fine-tuned/model>

For few-shot learning, run the following command:

CUDA_VISIBLE_DEVICES=<GPUs> python main.py --config cfgs/<MODEL_NAME>/fewshot.yaml --finetune_model \
--ckpts <path/to/pre-trained/model> --exp_name <output_file_name> --way <5 or 10> --shot <10 or 20> --fold <0-9>
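The few-shot command takes one way/shot/fold combination at a time, so covering all four settings over folds 0-9 means 40 runs. The sketch below only builds the command strings from the flags shown above; it does not launch anything. The function name, the experiment naming scheme, and the example model name and checkpoint path are hypothetical.

```python
import itertools
import shlex


def fewshot_commands(model_name, ckpt_path, gpu="0",
                     ways=(5, 10), shots=(10, 20), folds=range(10)):
    """Build one few-shot command line per (way, shot, fold) combination."""
    commands = []
    for way, shot, fold in itertools.product(ways, shots, folds):
        # Hypothetical naming scheme so each run writes to its own output.
        exp_name = f"fewshot_{way}w{shot}s_fold{fold}"
        args = [
            "python", "main.py",
            "--config", f"cfgs/{model_name}/fewshot.yaml",
            "--finetune_model",
            "--ckpts", ckpt_path,
            "--exp_name", exp_name,
            "--way", str(way),
            "--shot", str(shot),
            "--fold", str(fold),
        ]
        quoted = " ".join(shlex.quote(arg) for arg in args)
        commands.append(f"CUDA_VISIBLE_DEVICES={gpu} {quoted}")
    return commands


if __name__ == "__main__":
    # "PointGPT-S" and "pretrained.pth" are placeholder values.
    for command in fewshot_commands("PointGPT-S", "pretrained.pth"):
        print(command)
```

Printing the commands rather than executing them keeps the sketch safe to run anywhere; the output can be reviewed and piped to a shell or a job scheduler.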

For part segmentation on ShapeNetPart, run the following commands:

cd segmentation

python main.py --ckpts <path/to/pre-trained/model> --root path/to/data --learning_rate 0.0002 --epoch 300 --model_name <MODEL_NAME>
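Part segmentation results are reported as class mIoU (Cls.mIoU) and instance mIoU (Inst.mIoU). The sketch below illustrates the usual distinction between the two: instance mIoU averages the per-shape IoU over all shapes, while class mIoU first averages within each object category and then across categories. It is a plain-Python illustration, not the repository's evaluation code. The function names and the convention that a part absent from both prediction and ground truth counts as IoU 1 are assumptions.

```python
from collections import defaultdict


def shape_iou(pred, target, part_ids):
    """Mean IoU over the candidate parts of a single shape."""
    ious = []
    for part in part_ids:
        inter = sum(1 for p, t in zip(pred, target) if p == part and t == part)
        union = sum(1 for p, t in zip(pred, target) if p == part or t == part)
        # Assumed convention: a part missing from both counts as perfect.
        ious.append(1.0 if union == 0 else inter / union)
    return sum(ious) / len(ious)


def miou_summary(shapes):
    """Return (class mIoU, instance mIoU).

    `shapes` is an iterable of (category, pred_labels, target_labels, part_ids).
    """
    per_category = defaultdict(list)
    for category, pred, target, part_ids in shapes:
        per_category[category].append(shape_iou(pred, target, part_ids))
    all_ious = [iou for ious in per_category.values() for iou in ious]
    instance_miou = sum(all_ious) / len(all_ious)
    class_miou = sum(sum(v) / len(v) for v in per_category.values()) / len(per_category)
    return class_miou, instance_miou


if __name__ == "__main__":
    # Toy data: two "chair" shapes and one "lamp" shape with made-up labels.
    toy = [
        ("chair", [0, 0, 1, 1], [0, 0, 1, 1], [0, 1]),
        ("chair", [0, 0, 0, 0], [0, 0, 1, 1], [0, 1]),
        ("lamp", [2, 2], [2, 2], [2, 3]),
    ]
    print(miou_summary(toy))
```

The two numbers differ whenever categories have unequal numbers of shapes, which is why both are commonly reported.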

To visualize the pre-trained model on the validation set, run:

python main_vis.py --test --ckpts <path/to/pre-trained/model> --config cfgs/<MODEL_NAME>/pretrain.yaml --exp_name <name>

| Methods | ScanObjectNN OBJ_BG | ScanObjectNN OBJ_ONLY | ScanObjectNN PB_T50_RS | ModelNet40 1k P | ModelNet40 8k P | ShapeNetPart Cls. mIoU | ShapeNetPart Inst. mIoU |
|---|---|---|---|---|---|---|---|
| *without post-pre-training* | | | | | | | |
| PointGPT-B | 93.6 | 92.5 | 89.6 | 94.2 | 94.4 | 84.5 | 86.4 |
| PointGPT-L | 95.7 | 94.1 | 91.1 | 94.5 | 94.7 | 84.7 | 86.5 |
| *with post-pre-training* | | | | | | | |
| PointGPT-B | 95.8 (+2.2) | 95.2 (+2.7) | 91.9 (+2.3) | 94.4 (+0.2) | 94.6 (+0.2) | 84.5 (+0.0) | 86.5 (+0.1) |
| PointGPT-L | 97.2 (+1.5) | 96.6 (+2.5) | 93.4 (+2.3) | 94.7 (+0.2) | 94.9 (+0.2) | 84.8 (+0.1) | 86.6 (+0.1) |
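The parenthesized values in the table are the differences between the results with and without post-pre-training. A small sketch that recomputes them from the numbers listed in the table follows; the dictionary layout and the function name `gains` are illustrative assumptions.

```python
# Column order: OBJ_BG, OBJ_ONLY, PB_T50_RS, ModelNet40 1k, ModelNet40 8k,
# Cls. mIoU, Inst. mIoU (values copied from the table).
WITHOUT_POST = {
    "PointGPT-B": [93.6, 92.5, 89.6, 94.2, 94.4, 84.5, 86.4],
    "PointGPT-L": [95.7, 94.1, 91.1, 94.5, 94.7, 84.7, 86.5],
}
WITH_POST = {
    "PointGPT-B": [95.8, 95.2, 91.9, 94.4, 94.6, 84.5, 86.5],
    "PointGPT-L": [97.2, 96.6, 93.4, 94.7, 94.9, 84.8, 86.6],
}


def gains(before, after):
    """Per-column improvement, rounded to one decimal place."""
    return [round(a - b, 1) for b, a in zip(before, after)]


if __name__ == "__main__":
    for model in WITHOUT_POST:
        deltas = gains(WITHOUT_POST[model], WITH_POST[model])
        print(model, " ".join(f"{d:+.1f}" for d in deltas))
```

The largest gains appear on the three ScanObjectNN variants, while the ModelNet40 and ShapeNetPart columns change by at most 0.2.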

Our code is built upon Point-MAE, Point-BERT, Pointnet2_PyTorch, and Pointnet_Pointnet2_pytorch.

The unlabeled hybrid dataset and labeled hybrid dataset are built upon ModelNet40, PartNet, ShapeNet, S3DIS, ScanObjectNN, SUN RGB-D, and Semantic3D.

@article{chen2024pointgpt,

title={Pointgpt: Auto-regressively generative pre-training from point clouds},

author={Chen, Guangyan and Wang, Meiling and Yang, Yi and Yu, Kai and Yuan, Li and Yue, Yufeng},

journal={Advances in Neural Information Processing Systems},

volume={36},

year={2024}

}

If you use the unlabeled hybrid dataset or the labeled hybrid dataset, please also cite the following works.

@inproceedings{wu20153d,

title={3d shapenets: A deep representation for volumetric shapes},

author={Wu, Zhirong and Song, Shuran and Khosla, Aditya and Yu, Fisher and Zhang, Linguang and Tang, Xiaoou and Xiao, Jianxiong},

booktitle={Proceedings of the IEEE conference on computer vision and pattern recognition},

pages={1912--1920},

year={2015}

}

@inproceedings{mo2019partnet,

title={Partnet: A large-scale benchmark for fine-grained and hierarchical part-level 3d object understanding},

author={Mo, Kaichun and Zhu, Shilin and Chang, Angel X and Yi, Li and Tripathi, Subarna and Guibas, Leonidas J and Su, Hao},

booktitle={Proceedings of the IEEE/CVF conference on computer vision and pattern recognition},

pages={909--918},

year={2019}

}

@article{chang2015shapenet,

title={Shapenet: An information-rich 3d model repository},

author={Chang, Angel X and Funkhouser, Thomas and Guibas, Leonidas and Hanrahan, Pat and Huang, Qixing and Li, Zimo and Savarese, Silvio and Savva, Manolis and Song, Shuran and Su, Hao and others},

journal={arXiv preprint arXiv:1512.03012},

year={2015}

}

@inproceedings{armeni20163d,

title={3d semantic parsing of large-scale indoor spaces},

author={Armeni, Iro and Sener, Ozan and Zamir, Amir R and Jiang, Helen and Brilakis, Ioannis and Fischer, Martin and Savarese, Silvio},

booktitle={Proceedings of the IEEE conference on computer vision and pattern recognition},

pages={1534--1543},

year={2016}

}

@inproceedings{uy-scanobjectnn-iccv19,

title = {Revisiting Point Cloud Classification: A New Benchmark Dataset and Classification Model on Real-World Data},

author = {Mikaela Angelina Uy and Quang-Hieu Pham and Binh-Son Hua and Duc Thanh Nguyen and Sai-Kit Yeung},

booktitle = {International Conference on Computer Vision (ICCV)},

year = {2019}

}

@inproceedings{song2015sun,

title={Sun rgb-d: A rgb-d scene understanding benchmark suite},

author={Song, Shuran and Lichtenberg, Samuel P and Xiao, Jianxiong},

booktitle={Proceedings of the IEEE conference on computer vision and pattern recognition},

pages={567--576},

year={2015}

}

@article{hackel2017semantic3d,

title={Semantic3D.net: A new large-scale point cloud classification benchmark},

author={Hackel, Timo and Savinov, Nikolay and Ladicky, Lubor and Wegner, Jan D and Schindler, Konrad and Pollefeys, Marc},

journal={arXiv preprint arXiv:1704.03847},

year={2017}

}