This repository explains how to run RangeNet++ inference with TensorRT through a C++ interface.

Developed by Xieyuanli Chen, Andres Milioto and Jens Behley.

For more details about RangeNet++, see LiDAR-Bonnetal.

First, install the NVIDIA driver and CUDA.

- CUDA Installation guide: Link

- Then install the remaining dependencies:

$ sudo apt-get update

$ sudo apt-get install -yqq build-essential python3-dev python3-pip apt-utils git cmake libboost-all-dev libyaml-cpp-dev libopencv-dev

- Then install the required Python packages:

$ sudo apt install python-empy

$ sudo pip install catkin_tools trollius numpy

To run inference with TensorRT from the C++ libraries:

- Install TensorRT: Link.

- Our code and the pretrained model currently work only with TensorRT version 5 (you need at least version 5.1.0).

- To make the code work with higher versions of TensorRT, have a look here.
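
The version constraint above can be written as a small check. This is a minimal sketch in Python, not part of rangenet_lib: the function name `is_supported_tensorrt` is hypothetical, and how you obtain the installed version string (for example from your package manager) is left open.

```python
def is_supported_tensorrt(version: str) -> bool:
    """Return True if `version` is a TensorRT 5 release, 5.1.0 or newer.

    Hypothetical helper for illustration; it is not part of rangenet_lib.
    """
    # Keep only the leading numeric components, e.g. "5.1.5.0" -> [5, 1, 5, 0].
    parts = []
    for token in version.strip().split("."):
        if not token.isdigit():
            break
        parts.append(int(token))
    # Pad to (major, minor, patch) so that "5.1" compares like "5.1.0".
    while len(parts) < 3:
        parts.append(0)
    major, minor, patch = parts[:3]
    # Only major version 5 is accepted, and only from 5.1.0 upwards.
    return major == 5 and (minor, patch) >= (1, 0)
```

With this sketch, a version string such as `"5.1.5.0"` is accepted, while `"5.0.2"` and any TensorRT 6 or later release are rejected.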

We use the catkin tool to build the library.

$ mkdir -p ~/catkin_ws/src

$ cd ~/catkin_ws/src

$ git clone https://github.com/ros/catkin.git

$ git clone https://github.com/PRBonn/rangenet_lib.git

$ cd .. && catkin init

$ catkin build rangenet_lib

To run the demo, you need a pre-trained model, which can be downloaded here: model.

A single LiDAR scan for running the demo is provided in the example folder as example/000000.bin. More LiDAR data can be downloaded from the KITTI odometry dataset.
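
To inspect a scan before running the demo, the file can be read directly. The sketch below assumes the KITTI odometry layout, in which each point is stored as four consecutive little-endian 32-bit floats (x, y, z, remission). The function name `load_kitti_scan` is hypothetical and not part of rangenet_lib.

```python
import struct

# Assumed KITTI layout: 4 values per point, 4 bytes per float32.
POINT_SIZE = 16


def load_kitti_scan(path):
    """Read a KITTI-style .bin scan into a list of (x, y, z, remission) tuples.

    Hypothetical helper for illustration; it is not part of rangenet_lib.
    """
    with open(path, "rb") as f:
        data = f.read()
    # A valid scan contains a whole number of 16-byte points.
    if len(data) % POINT_SIZE != 0:
        raise ValueError(
            f"{path}: size {len(data)} is not a multiple of {POINT_SIZE} bytes"
        )
    # "<4f" decodes four little-endian float32 values per point.
    return list(struct.iter_unpack("<4f", data))
```

For example, `points = load_kitti_scan("example/000000.bin")` would return one tuple per LiDAR point, and `len(points)` gives the number of points in the scan.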

For more details about how to train and evaluate a model, please refer to LiDAR-Bonnetal.

To infer a single LiDAR scan and visualize the semantic point cloud:

# go to the root path of the catkin workspace

$ cd ~/catkin_ws

# use --verbose or -v to get verbose mode

$ ./devel/lib/rangenet_lib/infer -h # help

$ ./devel/lib/rangenet_lib/infer -p /path/to/the/pretrained/model -s /path/to/the/scan.bin --verbose

Notice: on the first run, it will take several minutes to generate a .trt model for the C++ interface.

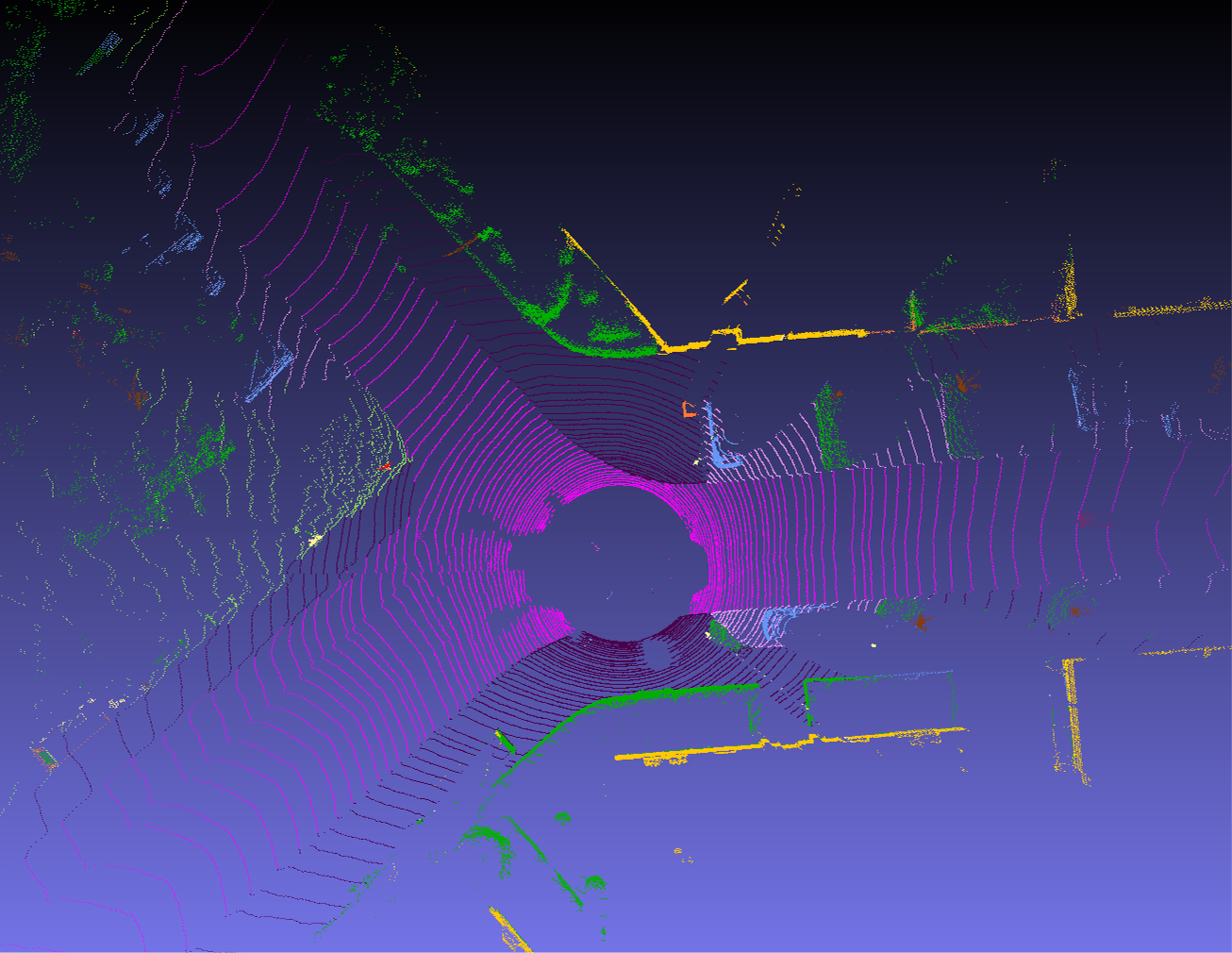
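
To run the demo on several scans, the `infer` command can be assembled programmatically. The following Python sketch only builds the argument list from the flags used in the demo (`-p`, `-s`, `--verbose`). The helper name `build_infer_command` and the default workspace path are assumptions for illustration, and nothing here is provided by rangenet_lib itself.

```python
import os


def build_infer_command(model_dir, scan_path, workspace="~/catkin_ws", verbose=False):
    """Build the argument list for the rangenet_lib `infer` demo binary.

    Hypothetical helper for illustration; the binary location assumes the
    catkin workspace layout used in the build instructions.
    """
    binary = os.path.join(
        os.path.expanduser(workspace), "devel", "lib", "rangenet_lib", "infer"
    )
    # -p: path to the pretrained model, -s: path to the LiDAR scan.
    cmd = [binary, "-p", model_dir, "-s", scan_path]
    if verbose:
        cmd.append("--verbose")
    return cmd
```

The returned list can be passed to `subprocess.run(cmd, check=True)`, once per scan, to invoke the demo for each file in turn.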

Using rangenet_lib, we built a LiDAR-based Semantic SLAM system, called SuMa++.

You can find more implementation details in SuMa++.

If you use this library for any academic work, please cite the original paper.

@inproceedings{milioto2019iros,

author = {A. Milioto and I. Vizzo and J. Behley and C. Stachniss},

title = {{RangeNet++: Fast and Accurate LiDAR Semantic Segmentation}},

booktitle = {IEEE/RSJ Intl.~Conf.~on Intelligent Robots and Systems (IROS)},

year = 2019,

codeurl = {https://github.com/PRBonn/lidar-bonnetal},

videourl = {https://youtu.be/wuokg7MFZyU},

}

If you use SuMa++, please cite the corresponding paper:

@inproceedings{chen2019iros,

author = {X. Chen and A. Milioto and E. Palazzolo and P. Giguère and J. Behley and C. Stachniss},

title = {{SuMa++: Efficient LiDAR-based Semantic SLAM}},

booktitle = {Proceedings of the IEEE/RSJ Int. Conf. on Intelligent Robots and Systems (IROS)},

year = {2019},

codeurl = {https://github.com/PRBonn/semantic_suma/},

videourl = {https://youtu.be/uo3ZuLuFAzk},

}

Copyright 2019, Xieyuanli Chen, Andres Milioto, Jens Behley, Cyrill Stachniss, University of Bonn.

This project is free software made available under the MIT License. For details see the LICENSE file.