In this project, the pixels of a road in images are labeled using a Fully Convolutional Network (FCN).

Apart from a few adjustments, the implementation in 'main.py' is very similar to the one in the original Udacity repository.

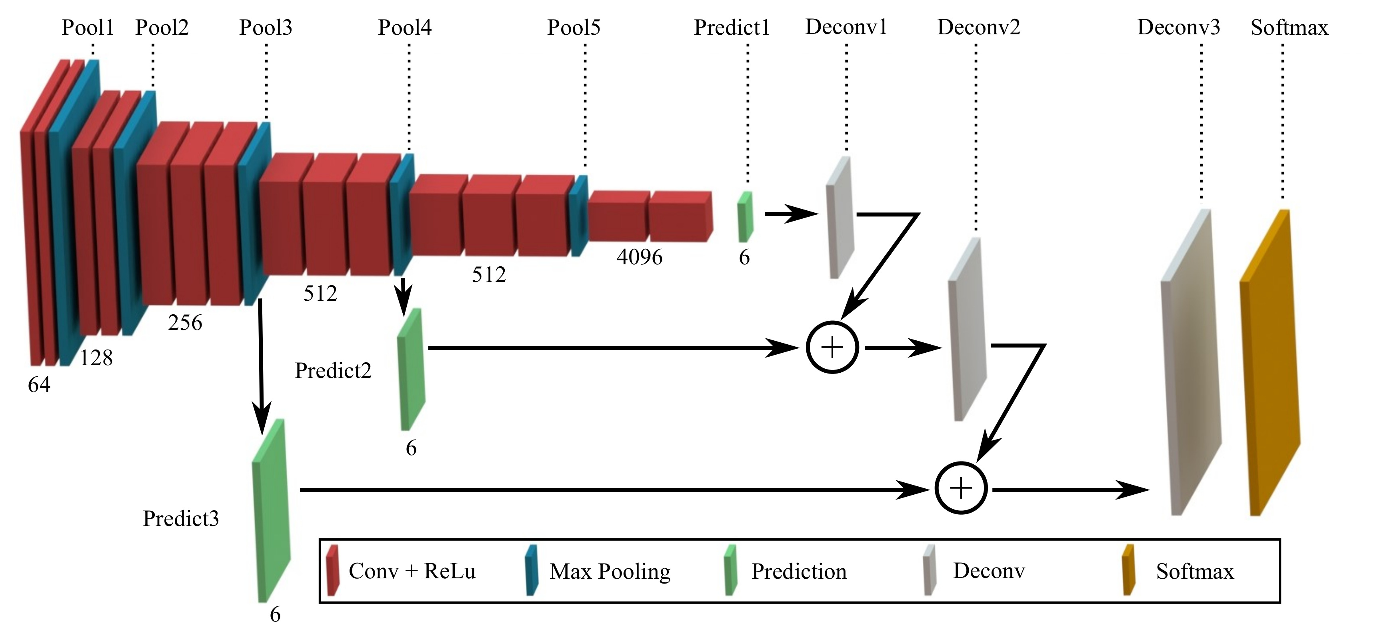

The network architecture (layers 3, 4 and 7 from VGG, with skip connections and upsampling) is copied from examples and recommendations in the Udacity lectures, as are the 'strides' and 'kernel_size' values for the transposed convolution layers.

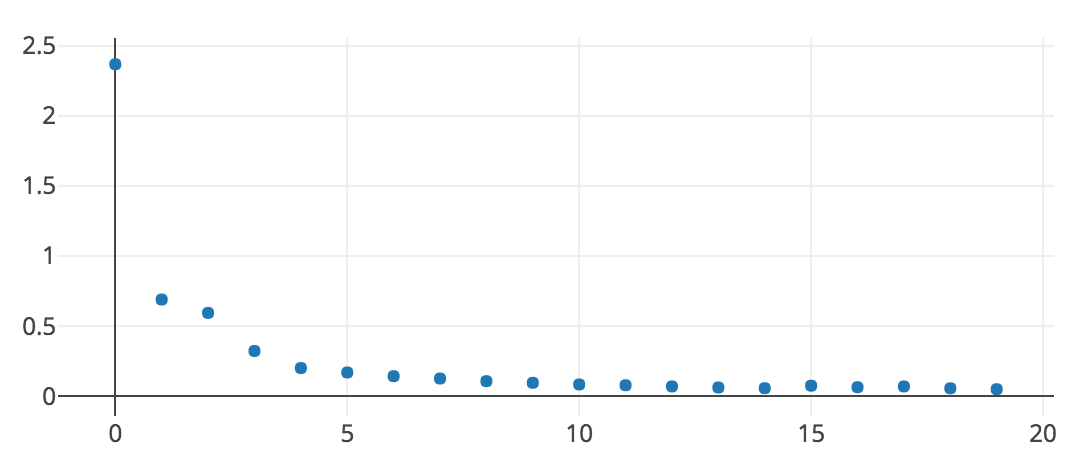

After several training runs with different parameter values, I obtained the acceptable results shown in the pictures below with the following values:

- EPOCHS = 20
- BATCH SIZE = 1
- LEARNING RATE = 0.0001
- DROPOUT = 0.75

Shapes of the layers:

- layer3 --> (1, 20, 72, 256)
- layer4 --> (1, 10, 36, 512)
- layer7 --> (1, 5, 18, 4096)
- layer3 conv1x1 --> (1, 20, 72, 2)
- layer4 conv1x1 --> (1, 10, 36, 2)
- layer7 conv1x1 --> (1, 5, 18, 2)
- decoderlayer1 transpose: layer7 conv1x1, k = 4, s = 2 --> (1, 10, 36, 2)
- decoderlayer2 skip: decoderlayer1 and layer4 conv1x1 --> (1, 10, 36, 2)
- decoderlayer3 transpose: decoderlayer2, k = 4, s = 2 --> (1, 20, 72, 2)
- decoderlayer4 skip: decoderlayer3 and layer3 conv1x1 --> (1, 20, 72, 2)
- decoderlayer5 transpose: decoderlayer4, k = 16, s = 8 --> (1, 160, 576, 2)
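The shape bookkeeping listed above can be reproduced with a few lines of plain Python. This is only a sketch of the arithmetic, not the code in 'main.py'. It assumes the transposed convolutions use 'same' padding (so height and width are multiplied by the stride and the kernel size does not affect the output shape) and that a skip connection is an element-wise addition, which requires both inputs to have the same shape. The helper names are made up for illustration.

```python
NUM_CLASSES = 2  # road / not road, matching the last dimension in the listing


def conv1x1_shape(shape, num_classes):
    """A 1x1 convolution keeps batch, height and width; only channels change."""
    n, h, w, _ = shape
    return (n, h, w, num_classes)


def transpose_shape(shape, stride):
    """Transposed convolution, assuming 'same' padding: H and W scale by stride."""
    n, h, w, c = shape
    return (n, h * stride, w * stride, c)


def skip_shape(a, b):
    """Element-wise skip connection (assumed): both shapes must be identical."""
    if a != b:
        raise ValueError("shape mismatch: {} vs {}".format(a, b))
    return a


# Encoder output shapes taken from the listing above.
layer3 = (1, 20, 72, 256)
layer4 = (1, 10, 36, 512)
layer7 = (1, 5, 18, 4096)

layer3_1x1 = conv1x1_shape(layer3, NUM_CLASSES)
layer4_1x1 = conv1x1_shape(layer4, NUM_CLASSES)
layer7_1x1 = conv1x1_shape(layer7, NUM_CLASSES)

decoder1 = transpose_shape(layer7_1x1, stride=2)   # k = 4,  s = 2
decoder2 = skip_shape(decoder1, layer4_1x1)
decoder3 = transpose_shape(decoder2, stride=2)     # k = 4,  s = 2
decoder4 = skip_shape(decoder3, layer3_1x1)
decoder5 = transpose_shape(decoder4, stride=8)     # k = 16, s = 8

print(decoder5)  # (1, 160, 576, 2)
```

The total upsampling factor is 2 * 2 * 8 = 32, which brings the 5 x 18 output of layer 7 back to 160 x 576.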

`main.py` will check to make sure you are using a GPU. If you don't have a GPU on your system, you can use AWS or another cloud computing platform.

Make sure you have the following installed:

Download the Kitti Road dataset from here. Extract the dataset in the `data` folder. This will create the folder `data_road` with all the training and test images.

Implement the code in the main.py module indicated by the "TODO" comments.

The comments indicated with "OPTIONAL" tag are not required to complete.

Run the following command to run the project:

python main.py

Note: If running this in Jupyter Notebook, system messages, such as those regarding test status, may appear in the terminal rather than the notebook.

- Ensure you've passed all the unit tests.

- Ensure you pass all points on the rubric.

- Submit the following in a zip file:
  - `helper.py`
  - `main.py`
  - `project_tests.py`
  - Newest inference images from the `runs` folder (all images from the most recent run)

- The link for the frozen `VGG16` model is hardcoded into `helper.py`. The model can be found here.

- The model is not vanilla `VGG16`, but a fully convolutional version, which already contains the 1x1 convolutions to replace the fully connected layers. Please see this post for more information. A summary of additional points follows.

- The original FCN-8s was trained in stages. The authors later uploaded a version that was trained all at once to their GitHub repo. The version in the GitHub repo has one important difference: the outputs of pooling layers 3 and 4 are scaled before they are fed into the 1x1 convolutions. As a result, some students have found that the model learns much better with the scaling layers included. The model may not converge substantially faster, but may reach a higher IoU and accuracy.

- When adding l2-regularization, setting a regularizer in the arguments of the `tf.layers` functions is not enough. Regularization loss terms must be manually added to your loss function; otherwise, regularization is not implemented.
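The regularization tip can be illustrated without TensorFlow. The sketch below is a simplified, library-free example with made-up numbers: it computes a data loss and an L2 penalty separately and only then adds them. The penalty has no effect on training unless it is part of the value being minimized. The function names and values are assumptions for illustration, and the penalty is a plain scaled sum of squares, which may differ by a constant factor from what a particular TensorFlow regularizer computes.

```python
import math


def cross_entropy(probabilities, label):
    """Cross-entropy for one pixel: negative log-probability of the true class."""
    return -math.log(probabilities[label])


def l2_penalty(weights, scale):
    """Scaled sum of squared weights (simplified L2 penalty)."""
    return scale * sum(w * w for w in weights)


# Made-up values, for illustration only.
weights = [0.5, -1.0, 2.0]
probabilities = [0.25, 0.75]   # predicted class probabilities for one pixel
label = 1                      # index of the true class
scale = 1e-3                   # regularization strength

data_loss = cross_entropy(probabilities, label)
reg_loss = l2_penalty(weights, scale)

# Minimizing only data_loss ignores the penalty entirely.
# The penalty takes effect only when it is added explicitly:
total_loss = data_loss + reg_loss

print(data_loss, reg_loss, total_loss)
```

In TensorFlow 1.x, the penalty terms created by a layer's `kernel_regularizer` are, to my knowledge, stored in the `tf.GraphKeys.REGULARIZATION_LOSSES` collection, so the equivalent step there is to sum that collection and add it to the cross-entropy loss before passing the result to the optimizer.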

If you are unfamiliar with GitHub, Udacity has a brief GitHub tutorial to get you started. Udacity also provides a more detailed free course on git and GitHub.

To learn about README files and Markdown, Udacity provides a free course on READMEs as well.

GitHub also provides a tutorial about creating Markdown files.