This repository is the PyTorch implementation of our ICCV 2023 paper.

Project Page | arXiv | PDF | Video

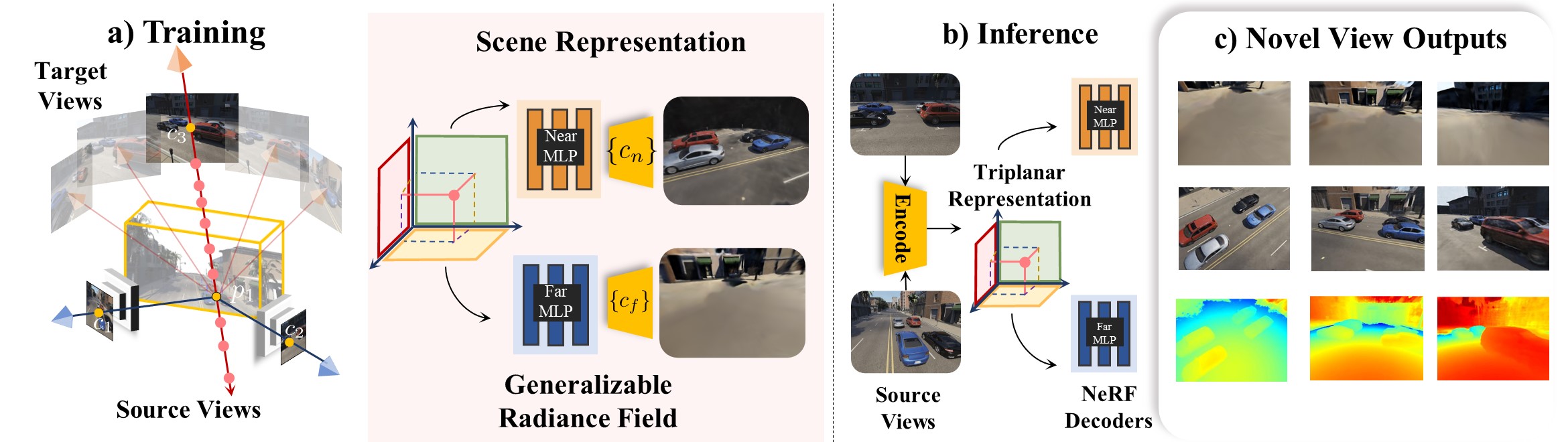

NeO 360: Neural Fields for Sparse View Synthesis of Outdoor Scenes

Muhammad Zubair Irshad · Sergey Zakharov · Katherine Liu · Vitor Guizilini · Thomas Kollar · Zsolt Kira · Rares Ambrus

International Conference on Computer Vision (ICCV), 2023

Georgia Institute of Technology | Toyota Research Institute

If you find this repository or our NERDS 360 dataset useful, please consider citing:

@inproceedings{irshad2023neo360,

title={NeO 360: Neural Fields for Sparse View Synthesis of Outdoor Scenes},

author={Muhammad Zubair Irshad and Sergey Zakharov and Katherine Liu and Vitor Guizilini and Thomas Kollar and Adrien Gaidon and Zsolt Kira and Rares Ambrus},

journal={International Conference on Computer Vision (ICCV)},

year={2023},

url={https://arxiv.org/abs/2308.12967},

}

- 🌇 Environment

- ⛳ Dataset

- 🔖 Dataloaders

- 💫 Inference

- 📉 Generalizable Training

- 📊 Overfitting Training Runs

- 📌 FAQ

Create a Python 3.7 virtual environment and install the requirements:

cd $NeO-360 repo

conda create -n neo360 python=3.7

conda activate neo360

pip install --upgrade pip

pip install -r requirements.txt

pip install torch==1.11.0+cu113 torchvision==0.12.0+cu113 -f https://download.pytorch.org/whl/torch_stable.html

export neo360_rootdir=$PWD

The code was built and tested on CUDA 11.3.

NeRDS 360, the "NeRF for Reconstruction, Decomposition and Scene Synthesis of 360° outdoor scenes" dataset, comprises 75 unbounded scenes with full multi-view annotations and diverse scenes for generalizable NeRF training and evaluation.

- NERDS360 Training Set - 75 Scenes (19.5 GB)

- NERDS360 Test Set - 5 Scenes (2.1 GB)

Extract the data under the data directory or provide a symlink under the project data directory. The directory structure should look like this: $neo360_rootdir/data/PDMultiObjv6
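A quick way to confirm the layout before training is a small check like the one below. This is a minimal sketch: the helper name `find_dataset_dir` is hypothetical and not part of the repository. It only assumes the `neo360_rootdir` environment variable and the `data/PDMultiObjv6` path described above.

```python
import os
from pathlib import Path


def find_dataset_dir(root_dir=None, dataset_name="PDMultiObjv6"):
    """Return <root>/data/<dataset_name>, raising if it is missing.

    Hypothetical helper (not part of the repo). `root_dir` defaults to the
    `neo360_rootdir` environment variable exported during setup.
    """
    root = root_dir or os.environ.get("neo360_rootdir")
    if not root:
        raise RuntimeError("neo360_rootdir is not set; export it from the repo root")
    dataset_dir = Path(root) / "data" / dataset_name
    # is_dir() follows symlinks, so a symlinked dataset also passes.
    if not dataset_dir.is_dir():
        raise FileNotFoundError("expected dataset at {}".format(dataset_dir))
    return dataset_dir
```

Calling `find_dataset_dir()` with no arguments relies on the exported environment variable; passing an explicit path is handy when the data lives outside the repository.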

To plot accumulated pointclouds, multi-view camera annotations and bounding box annotations as shown in the visualization below, run the following command. This visualization script is adapted from NeRF++.

Zoom in on the visualization to see the objects. Red cameras are source-view cameras and green cameras are evaluation-view cameras.

python visualize/visualize_nerds360.py --base_dir PDMultiObjv6/train/SF_GrantAndCalifornia10

We provide two convenient dataloaders written in PyTorch: (a) single-scene overfitting, i.e. settings like testing MipNeRF-360, and (b) generalizable evaluation, i.e. the few-shot setting introduced in our paper. Each dataloader has a convenient read_poses function. Use this function to see how we load poses with the corresponding images, so that you can use our NERDS360 dataset with any NeRF implementation.

a. Our dataloader for single-scene overfitting is provided in datasets/nerds360.py. This dataloader directly outputs RGB and pose pairs in the same format as NeRF/MipNeRF-360 and can be used with any NeRF implementation, e.g. nerf-studio, nerf-factory or nerf-pl.

b. Our dataloader for generalizable training is provided in datasets/nerds360_ae.py, where ae denotes the auto-encoder style of training. This dataloader is used for the few-shot setting proposed in our NeO360 paper. During every training iteration, we randomly select 3 source views and 1 target view and sample 1000 rays from the target view for decoding the radiance field. This is the same dataloader used during training below.
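The per-iteration sampling described in (b) can be sketched as follows. This is an illustration only, not the code in datasets/nerds360_ae.py: the function name, the choice to keep the target view distinct from the source views, and sampling pixels without replacement are all assumptions.

```python
import random


def sample_training_indices(num_views, img_wh=(320, 240), num_src=3,
                            num_rays=1000, rng=None):
    """Pick source views, one target view, and ray (pixel) positions.

    Hypothetical sketch; the real dataloader may sample differently.
    """
    rng = rng or random.Random()
    # Draw num_src + 1 distinct views; the last one becomes the target.
    chosen = rng.sample(range(num_views), num_src + 1)
    src_views, target_view = chosen[:num_src], chosen[num_src]
    width, height = img_wh
    # Sample distinct flat pixel indices in the target image; one ray each.
    pixel_ids = rng.sample(range(width * height), num_rays)
    rays_xy = [(i % width, i // width) for i in pixel_ids]
    return src_views, target_view, rays_xy


# num_views=100 is a placeholder, not a statement about the dataset.
src, tgt, rays = sample_training_indices(num_views=100, rng=random.Random(0))
```

Passing a seeded `random.Random` makes the selection reproducible, which helps when debugging a single training iteration.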

Note: Our NeRDS360 dataset also provides depth maps, NOCS maps, instance segmentation, semantic segmentation and 3D bounding box annotations. None of these annotations are used during training or inference; we only use RGB images. However, these annotations can be used for other computer vision tasks to push the SOTA for unbounded outdoor tasks like instance segmentation. All the other annotations are easy to load and are provided as .png files.

Download the validation split from here. These are scenes never seen during training and are used for visualization from a pretrained model.

Download the pretrained checkpoint from here

Extract them under the project folder with directory structure data and ckpts.

Run the following script to run visualization i.e. 360° rendering from just 3 or 5 source views given as input:

python run.py --dataset_name nerds360_ae --exp_type triplanar_nocs_fusion_conv_scene --exp_name multi_map_tp_CONV_scene --encoder_type resnet --batch_size 1 --img_wh 320 240 --eval_mode vis_only --render_name 5viewtest_novelobj30_SF0_360_LPIPS --ckpt_path finetune_lpips_epoch=30.ckpt --root_dir $neo360_rootdir/data/neo360_valsplit/test_novelobj

The script produces renderings like the last column shown below. 10 randomly sampled views out of the 100 rendered views are shown in the second diagram below:

For evaluation, i.e. logging the PSNR, LPIPS and SSIM metrics reported in the paper, run the following script:

python run.py --dataset_name nerds360_ae --exp_type triplanar_nocs_fusion_conv_scene --exp_name multi_map_tp_CONV_scene --encoder_type resnet --batch_size 1 --img_wh 320 240 --eval_mode full_eval --render_name 5viewtest_novelobj30_SF0_360_LPIPS --ckpt_path finetune_lpips_epoch=30.ckpt --root_dir $neo360_rootdir/data/neo360_valsplit/test_novelobj

The current script evaluates the scenes one by one. Note that the 3 or 5 source views are not part of the 100 rendered 360° views and are chosen randomly from the upper hemisphere (the random views are currently hardcoded).
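For readers who want to sanity-check logged numbers, PSNR is related to the mean squared error by the standard formula PSNR = 10 · log10(max² / MSE). The sketch below assumes pixel values normalized to [0, 1]; the function names are hypothetical, and the repository's own metric code may differ in details such as clamping or per-image averaging.

```python
import math


def psnr_from_mse(mse, max_val=1.0):
    """PSNR in dB for a given mean squared error and peak pixel value."""
    if mse <= 0:
        return float("inf")  # identical images
    return 10.0 * math.log10(max_val ** 2 / mse)


def mse_from_psnr(psnr_db, max_val=1.0):
    """Inverse of psnr_from_mse: the MSE that yields the given PSNR."""
    return max_val ** 2 / (10.0 ** (psnr_db / 10.0))
```

For example, an MSE of 0.01 on [0, 1] images corresponds to 20 dB, and every further factor-of-10 drop in MSE adds 10 dB.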

If your checkpoint was finetuned with the additional LPIPS loss, then the render name must contain LPIPS in order to load the LPIPS weights during rendering. See our model and code for further clarification.
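A small pre-flight check can catch a mismatched render name before launching a long evaluation. This is only a sketch under two assumptions that are not confirmed by the code: that LPIPS-finetuned checkpoints can be recognized by the `finetune_lpips` filename prefix, and that the match on `LPIPS` in the render name is a case-sensitive substring test. The helper name is hypothetical.

```python
import os


def check_render_name(ckpt_path, render_name):
    """Return True if render_name looks consistent with the checkpoint.

    Hypothetical helper; the prefix and substring rules are assumptions.
    """
    ckpt_file = os.path.basename(ckpt_path)
    is_lpips_ckpt = ckpt_file.startswith("finetune_lpips")
    # An LPIPS-finetuned checkpoint needs "LPIPS" in the render name.
    if is_lpips_ckpt and "LPIPS" not in render_name:
        return False
    return True
```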

We train on the full NERDS 360 Dataset in 2 stages. First, we train using a mix of a photometric loss (i.e. MSE loss) and an auxiliary distortion loss for 30-50 epochs. We then finetune with an additional LPIPS loss for a few epochs to improve the visual fidelity of the results.
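The two-stage objective can be summarized with a short sketch. The weights `w_distortion` and `w_lpips` below are placeholder values chosen for illustration, not the values used in our experiments, and the function name is hypothetical.

```python
def total_loss(mse, distortion, lpips=None, w_distortion=0.01, w_lpips=0.1):
    """Combine loss terms for stage 1 (lpips=None) or stage 2.

    Works on plain floats or on tensors; the weights are placeholders.
    """
    # Stage 1: photometric (MSE) loss plus the auxiliary distortion loss.
    loss = mse + w_distortion * distortion
    # Stage 2: the same objective with an added LPIPS term.
    if lpips is not None:
        loss = loss + w_lpips * lpips
    return loss
```

Leaving `lpips=None` gives the stage 1 objective; supplying an LPIPS value switches to the stage 2 finetuning objective.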

All our experiments were performed on 8 Nvidia A100 GPUs. Please refer to our paper for more details.

For stage 1 training, please run:

python run.py --dataset_name nerds360_ae --root_dir $neo360_rootdir/data/PDMultiObjv6/train/ --exp_type triplanar_nocs_fusion_conv_scene --exp_name multi_map_tp_CONV_scene --encoder_type resnet --batch_size 1 --img_wh 320 240 --num_gpus 8

For stage 2 finetuning with an additional LPIPS loss, please specify the checkpoint from which to finetune and add the finetune_lpips flag, as in the command below:

python run.py --dataset_name nerds360_ae --root_dir $neo360_rootdir/data/PDMultiObjv6/train --exp_type triplanar_nocs_fusion_conv_scene --exp_name multi_map_tp_CONV_scene --encoder_type resnet --batch_size 1 --img_wh 320 240 --num_gpus 8 --ckpt_path epoch=29.ckpt --finetune_lpips

At the end of the training run, you will see checkpoints stored under the ckpts/$exp_name directory, with stage 1 checkpoints labelled epoch=aa.ckpt and finetuning checkpoints labelled finetune_lpips_epoch=aa.ckpt.
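To pick the checkpoint to pass as --ckpt_path, a helper like the following can scan a checkpoint directory using the naming scheme above. It is a sketch that assumes the `aa` part of the filename is an integer epoch number; `latest_checkpoint` is a hypothetical name, not a function from this repository.

```python
import re
from pathlib import Path

# Matches epoch=NN.ckpt and finetune_lpips_epoch=NN.ckpt.
_CKPT_RE = re.compile(r"^(finetune_lpips_)?epoch=(\d+)\.ckpt$")


def latest_checkpoint(ckpt_dir, finetuned=False):
    """Return the highest-epoch checkpoint path in ckpt_dir, or None."""
    best = None
    for path in Path(ckpt_dir).glob("*.ckpt"):
        match = _CKPT_RE.match(path.name)
        if match is None:
            continue  # skip files that do not follow the naming scheme
        if bool(match.group(1)) != finetuned:
            continue  # wrong training stage
        epoch = int(match.group(2))
        if best is None or epoch > best[0]:
            best = (epoch, path)
    return None if best is None else best[1]
```

Use `finetuned=False` to find the stage 1 checkpoint to finetune from, and `finetuned=True` to find the checkpoint to evaluate.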

We also provide an is_optimize flag to finetune on the few-shot source images of a new domain. Please refer to our paper for more details on what this flag does and whether it is useful for your case.

We also provide a home-grown implementation of PixelNeRF, which is built on top of NeRF-Factory and PyTorch Lightning. If you are a user of both of these, you might find it helpful. Just use exp_type pixelnerf and our generalizable dataset nerds360_ae to run PixelNeRF training and evaluation with the scripts mentioned above :)
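As an illustration of swapping the experiment type, the sketch below assembles the argument list for run.py from flags that appear in the commands above. It assumes the remaining flags carry over unchanged for PixelNeRF, which is not verified here; the helper name, the experiment name `pixelnerf_run` and the example path are hypothetical.

```python
def build_run_command(exp_type, exp_name, root_dir, extra_args=()):
    """Build an argument list for `python run.py` (hypothetical helper).

    root_dir must be an already expanded path, since no shell is involved.
    """
    args = [
        "python", "run.py",
        "--dataset_name", "nerds360_ae",
        "--root_dir", root_dir,
        "--exp_type", exp_type,
        "--exp_name", exp_name,
        "--encoder_type", "resnet",
        "--batch_size", "1",
        "--img_wh", "320", "240",
    ]
    args.extend(extra_args)
    return args


cmd = build_run_command(
    "pixelnerf",                          # exp_type named in the text above
    "pixelnerf_run",                      # placeholder experiment name
    "/path/to/data/PDMultiObjv6/train",   # placeholder dataset path
    ["--num_gpus", "8"],
)
print(" ".join(cmd))
# To launch from the repo root: subprocess.run(cmd, check=True)
```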

While our proposed technique is a generalizable method that works in a few-shot setting, for ease of reproducibility and to push the state of the art on single-scene novel-view synthesis of unbounded scenes, we provide scripts to overfit to single scenes given many images. We provide NeRF and MipNeRF-360 baselines from NeRF-Factory with our newly proposed NeRDS360 dataset.

To overfit to a single scene using vanilla NeRF on NERDS360 Dataset, simply run:

python run.py --dataset_name nerds360 --root_dir $neo360_rootdir/data/PD_v6_test/test_novel_objs/SF_GrantAndCalifornia6 --exp_type vanilla --exp_name overfitting_test_vanilla_2 --img_wh 320 240 --num_gpus 7

For evaluation, run:

python run.py --dataset_name nerds360 --root_dir $neo360_rootdir/data/PD_v6_test/test_novel_objs/SF_GrantAndCalifornia6 --exp_type vanilla --exp_name overfitting_test_vanilla_2 --img_wh 320 240 --num_gpus 1 --eval_mode vis_only --render_name vanilla_eval

You'll see results as below. We achieve a test-set PSNR of 24.75 and SSIM of 0.78 for this scene.

To overfit to a single scene using MipNeRF360 on NERDS360 Dataset, simply run:

python run.py --dataset_name nerds360 --root_dir $neo360_rootdir/data/PD_v6_test/test_novel_objs/SF_GrantAndCalifornia6 --exp_type mipnerf360 --exp_name overfitting_test_mipnerf360_2 --img_wh 320 240 --num_gpus 7

This code is built upon the implementations from nerf-factory, NeRF++ and PixelNeRF, with the distortion loss and unbounded scene contraction taken from MipNeRF360. Kudos to all the authors for their great work and for releasing their code. Thanks to the original NeRF implementation and the PyTorch implementation nerf_pl for additional inspiration during this project.

This repository and the NERDS360 dataset are released under the CC BY-NC 4.0 license.