Training and prediction code for the Kaggle competition, Lyft Motion Prediction for Autonomous Vehicles. The target is to predict the motion of each vehicle over the next 5 seconds (the pink track below). Each prediction is evaluated with the "multi-modal negative log-likelihood loss".
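
For reference, the metric can be written as follows. This is our reading of the competition's evaluation description, with constant terms dropped and each mode treated as a unit-variance Gaussian around its predicted trajectory. For one agent with $K$ modes ($K = 3$ here), confidences $c^k$ that sum to 1, predicted positions $(\hat{x}^k_t, \hat{y}^k_t)$, ground truth $(x_t, y_t)$, and $T = 50$ future frames (5 s at 10 Hz):

$$
L = -\log \sum_{k=1}^{K} \exp\left( \log c^{k} - \frac{1}{2} \sum_{t=1}^{T} \left[ \left(\hat{x}^{k}_{t} - x_{t}\right)^{2} + \left(\hat{y}^{k}_{t} - y_{t}\right)^{2} \right] \right)
$$

Frames without a valid ground-truth position are left out of the inner sum, and, as we understand it, the reported score is the mean of $L$ over all agents.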

First, download the data, here, and the full training data, here. You will get the following files:

```
/your/dataset/root_path
│
├ meta.json
├ single_mode_sample_submission.csv
├ multi_mode_sample_submission.csv
├ aerial_map/
├ semantic_map/
└ scenes/
    ├ mask.npz
    ├ sample.zarr
    ├ test.zarr
    ├ train.zarr
    ├ train_full.zarr
    └ validate.zarr
```

Install dependencies:

```bash
# clone project
git clone https://github.com/Fkaneko/kaggle-lyft-motion-pred

# install project
cd kaggle-lyft-motion-pred
pip install -r requirements.txt
```

Run training and testing:

```bash
# run training
python run_lyft_mpred.py \
    --l5kit_data_folder /your/dataset/root_path \
    --epochs 1 \
    --lr 5.2e-4 \
    --batch_size 128 \
    --num_workers 4

# run test
python run_lyft_mpred.py \
    --l5kit_data_folder /your/dataset/root_path \
    --is_test \
    --ckpt_path /your/trained/checkpoint_path \
    --batch_size 128 \
    --num_workers 4
```

After testing, you will find ./submission.csv, and you can check the test score through this notebook.

The baseline CNN regression approach, which simply replaces the first and final layers of an ImageNet-pretrained model, was strong. Segmentation and RNN approaches did not work well. With the following tips, the baseline approach reaches top-10-equivalent performance (11.238).

- Directly optimize the evaluation metric. The target metric, "multi-modal negative log-likelihood loss", is differentiable.
- Use the same filtering configuration that was used to generate the test data, that is, `MIN_FRAME_HISTORY = 0` and `MIN_FRAME_FUTURE = 10` in `l5kit.dataset.AgentDataset`.
- Use all the training data, 198,474,478 agents in total. It is really huge, but the loss keeps decreasing throughout training.
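
A minimal NumPy sketch of the metric named in the first tip, assuming predictions are laid out as (batch, modes, time, xy). The function name `multi_modal_nll` and the array shapes are illustrative assumptions, not this repository's API. Every operation is a square, sum, log, or exp, so the same expression written with PyTorch tensors (using `torch.logsumexp` for the last step) is differentiable and can be used directly as the training loss.

```python
import numpy as np


def multi_modal_nll(gt, pred, confidences, avails):
    """Multi-modal negative log-likelihood, averaged over a batch of agents.

    Hypothetical helper; shapes are assumptions:
    gt:          (B, T, 2) ground-truth future positions
    pred:        (B, M, T, 2) predicted trajectories, one per mode
    confidences: (B, M) mode probabilities, each row summing to 1
    avails:      (B, T) 1.0 where the ground-truth frame is valid, else 0.0
    """
    # Squared error per mode, summed over x/y and over valid future frames.
    sq_err = ((gt[:, None] - pred) ** 2 * avails[:, None, :, None]).sum(axis=(2, 3))
    # Per-mode log-likelihood under a unit-variance Gaussian (constants dropped).
    log_lik = np.log(confidences) - 0.5 * sq_err  # (B, M)
    # Numerically stable log-sum-exp over the modes.
    max_ll = log_lik.max(axis=1, keepdims=True)
    lse = max_ll[:, 0] + np.log(np.exp(log_lik - max_ll).sum(axis=1))
    return float(-lse.mean())
```

Subtracting the per-row maximum before exponentiating (the log-sum-exp trick) keeps `exp` from underflowing to zero when all squared errors are large, which is common early in training.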

Below is the result I actually got. The baseline used history_frames = 10, while this model used only 2, so if you can use 10 instead of 2 you may get a better result than the top-10 score (11.283) with this single model. You can check the 11.377 result with my full test pipeline and trained weights in the kaggle notebook.

| model | backbone | scenes | iteration x batch_size | loss | history_frames | MIN_FRAME_HISTORY / FUTURE | test score |
|---|---|---|---|---|---|---|---|
| baseline | resnet50 | 11314 | 100k x 64 | single mode | 10 | 10/1 | 104.195 |
| this study | seresnext26 | 134622 | 451k x 440 | multi-modal | 2 (10->2 due to time constraint) | 0/10 | 11.377 |

[Note] The backbone choice does not matter much: among the top-10 solutions, a single resnet18 reaches a score below 10.0. Smaller models tend to work better for this task.
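
As a rough sanity check on the table's "iteration x batch_size" column: dividing the 198,474,478 training agents quoted in the tips by a batch size of 440 gives about 451k steps. Assuming 440 is the effective batch per optimizer step, the "this study" row is therefore roughly one pass over all agents (consistent with `--epochs 1`), while the baseline's 100k x 64 covers only about 6.4M samples, around 3% of them. The helper name below is hypothetical.

```python
import math

TOTAL_AGENTS = 198_474_478  # total training agents, as quoted in the tips
BATCH_SIZE = 440            # "this study" row of the table (assumed effective batch)


def iterations_per_epoch(num_samples: int, batch_size: int) -> int:
    """Number of optimizer steps needed to see every sample once."""
    return math.ceil(num_samples / batch_size)


steps = iterations_per_epoch(TOTAL_AGENTS, BATCH_SIZE)
print(steps)          # 451079
print(100_000 * 64)   # samples seen by the baseline: 6400000
```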

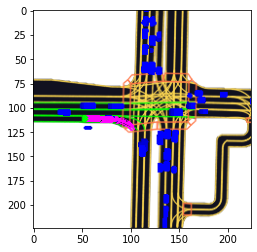

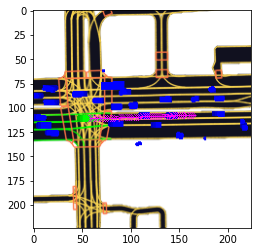

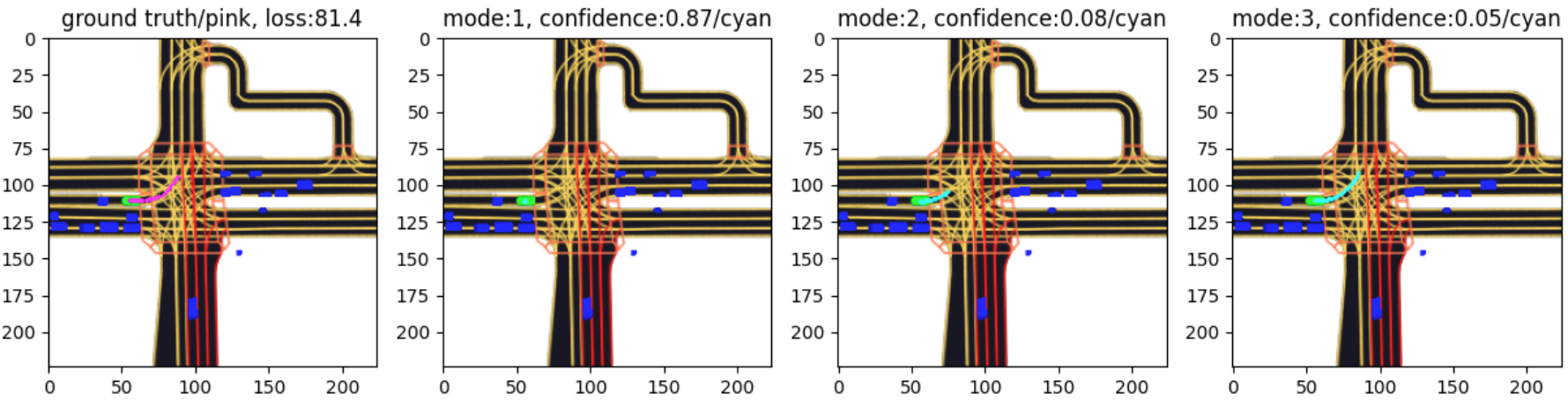

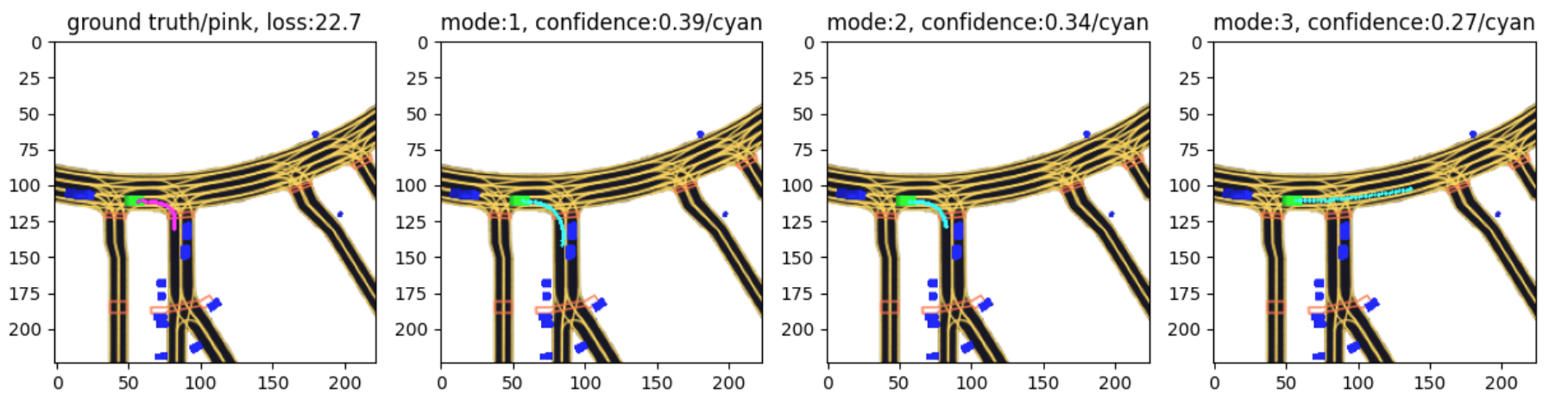

Prediction visualization with this study's model. The leftmost figure shows the ground-truth track, and the remaining three images show the 3-mode predictions. The loss is computed over all three modes.

As expected, an intersection scene with a traffic light is hard.
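
The three modes in these figures come from a baseline-style head, where only the first and last layers of the ImageNet-pretrained backbone are replaced. Below is a sketch of the sizes involved, assuming the l5kit baseline setup: a box rasterizer drawing one agents channel and one ego channel per history frame plus the current frame, a 3-channel semantic map, 50 future frames, and 3 modes. These numbers come from that assumed configuration rather than from this repository's code, and the function names are hypothetical. Under these assumptions, history_frames = 10 means a 25-channel input and history_frames = 2 a 9-channel input, while the final layer outputs 3 x 50 x 2 coordinates plus 3 confidence logits, 303 values in total.

```python
import numpy as np

FUTURE_NUM_FRAMES = 50  # assumed: 5 s at 10 Hz
NUM_MODES = 3           # assumed: 3-mode prediction, as in the figures


def num_input_channels(history_num_frames: int) -> int:
    """Channels for the replaced first conv (hypothetical helper).

    Assumes one agents channel and one ego channel for each history frame
    plus the current frame, and 3 RGB channels for the semantic map.
    """
    return (history_num_frames + 1) * 2 + 3


def num_output_features(future_num_frames: int = FUTURE_NUM_FRAMES,
                        num_modes: int = NUM_MODES) -> int:
    """Outputs of the replaced final layer: (x, y) per frame per mode, plus one logit per mode."""
    return num_modes * future_num_frames * 2 + num_modes


def split_head_output(out: np.ndarray,
                      future_num_frames: int = FUTURE_NUM_FRAMES,
                      num_modes: int = NUM_MODES):
    """Split a (B, num_output_features) head output into trajectories and confidences."""
    batch = out.shape[0]
    n_coords = num_modes * future_num_frames * 2
    traj = out[:, :n_coords].reshape(batch, num_modes, future_num_frames, 2)
    logits = out[:, n_coords:]
    # Softmax over modes so the confidences are positive and sum to 1.
    logits = logits - logits.max(axis=1, keepdims=True)
    exp_logits = np.exp(logits)
    conf = exp_logits / exp_logits.sum(axis=1, keepdims=True)
    return traj, conf
```

In PyTorch, the replacement would be a first convolution with `num_input_channels(...)` input channels and a final `nn.Linear` with `num_output_features()` outputs. The softmax keeps the mode confidences positive and summing to 1, which the log-likelihood loss needs because it takes the log of each confidence.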

Apache 2.0

Please check https://self-driving.lyft.com/level5/prediction/

- GitHub template from PyTorch Lightning.
- Nine simple steps for better-looking Python code.
- For the dataset, l5kit.
- Leaderboard, Kaggle competition page.