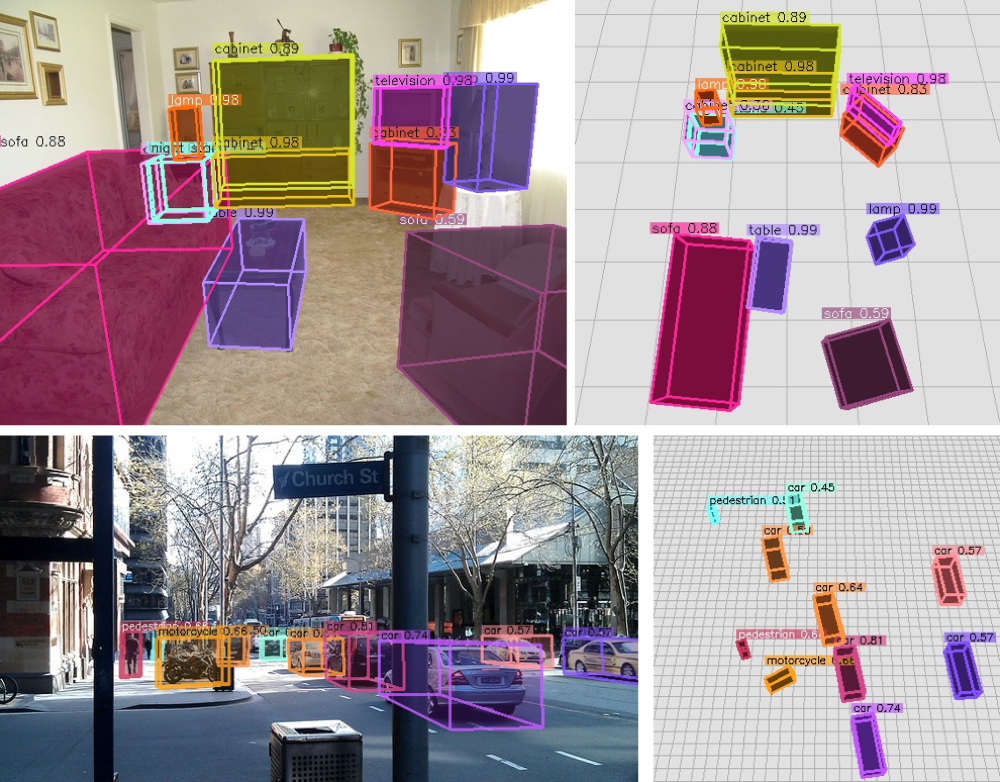

Omni3D: A Large Benchmark and Model for 3D Object Detection in the Wild

Garrick Brazil, Julian Straub, Nikhila Ravi, Justin Johnson, Georgia Gkioxari

[Project Page] [arXiv] [BibTeX]

Zero-shot (+ tracking) on Project Aria data

# setup new environment

conda create -n cubercnn python=3.8

source activate cubercnn

# main dependencies

conda install -c fvcore -c iopath -c conda-forge -c pytorch3d -c pytorch fvcore iopath pytorch3d pytorch=1.8 torchvision=0.9.1 cudatoolkit=10.1

# OpenCV, COCO, detectron2

pip install cython opencv-python

pip install 'git+https://github.com/cocodataset/cocoapi.git#subdirectory=PythonAPI'

python -m pip install detectron2 -f https://dl.fbaipublicfiles.com/detectron2/wheels/cu101/torch1.8/index.html

# other dependencies

conda install -c conda-forge scipy seaborn

For reference, we used cuda/10.1 and cudnn/v7.6.5.32 for our experiments. We expect that slight variations in versions are also compatible.
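After installation, a quick sanity check can confirm which of the dependencies are visible to the active environment. The snippet below is a minimal sketch, not part of the repository: the `PACKAGES` mapping from import names to distribution names and the `check_packages` helper are assumptions made for illustration. It only looks packages up and does not import them.

```python
import importlib.util
from importlib import metadata

# Import name -> distribution name. This mapping is an assumption for the
# sketch; adjust it if your environment names the distributions differently.
PACKAGES = {
    "torch": "torch",
    "torchvision": "torchvision",
    "pytorch3d": "pytorch3d",
    "detectron2": "detectron2",
    "fvcore": "fvcore",
    "iopath": "iopath",
    "cv2": "opencv-python",
    "pycocotools": "pycocotools",
    "scipy": "scipy",
    "seaborn": "seaborn",
}


def check_packages(packages):
    """Return {import_name: version | "unknown" | "missing"} without importing."""
    report = {}
    for module_name, dist_name in packages.items():
        # find_spec on a top-level name locates the module but does not load it.
        if importlib.util.find_spec(module_name) is None:
            report[module_name] = "missing"
            continue
        try:
            report[module_name] = metadata.version(dist_name)
        except metadata.PackageNotFoundError:
            # Module is importable, but no matching distribution metadata exists.
            report[module_name] = "unknown"
    return report


if __name__ == "__main__":
    for name, status in check_packages(PACKAGES).items():
        print(f"{name:12s} {status}")
```

Any entry reported as `missing` points at an install step above that likely did not complete in the `cubercnn` environment.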

To run the Cube R-CNN demo on a folder of input images using our DLA34 model trained on the full Omni3D dataset,

# Download example COCO images

sh demo/download_demo_COCO_images.sh

# Run an example demo

python demo/demo.py \

--config-file cubercnn://omni3d/cubercnn_DLA34_FPN.yaml \

--input-folder "datasets/coco_examples" \

--threshold 0.25 --display \

MODEL.WEIGHTS cubercnn://omni3d/cubercnn_DLA34_FPN.pth \

OUTPUT_DIR output/demo

See demo.py for more details. For example, if you know the camera intrinsics, you may input them as arguments with the convention --focal-length <float> and --principal-point <float> <float>. See our MODEL_ZOO.md for more model checkpoints.
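To illustrate what a focal length and a principal point describe, the sketch below assembles a standard pinhole intrinsics matrix and projects a 3D camera-space point to pixel coordinates. It is an assumption-laden illustration, not the code in demo.py: the helper names `build_intrinsics` and `project`, the single shared focal length for both axes, and the fallback to the image center when no principal point is given are all choices made here.

```python
def build_intrinsics(focal_length, image_width, image_height, principal_point=None):
    """Build a 3x3 pinhole intrinsics matrix K as nested lists.

    Assumption for this sketch: one focal length (in pixels) is shared by both
    axes, and the principal point defaults to the image center.
    """
    if principal_point is None:
        px, py = image_width / 2.0, image_height / 2.0
    else:
        px, py = principal_point
    return [
        [focal_length, 0.0, px],
        [0.0, focal_length, py],
        [0.0, 0.0, 1.0],
    ]


def project(K, point_3d):
    """Project a camera-space point (x, y, z), z > 0, to pixel coordinates (u, v)."""
    x, y, z = point_3d
    u = K[0][0] * x / z + K[0][2]
    v = K[1][1] * y / z + K[1][2]
    return u, v


if __name__ == "__main__":
    K = build_intrinsics(focal_length=500.0, image_width=640, image_height=480)
    # A point on the optical axis lands on the principal point.
    print(project(K, (0.0, 0.0, 2.0)))
```

A point on the optical axis projects to the principal point, which is why a wrong principal point shifts every predicted 3D box in the image.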

See DATA.md for instructions on how to download and set up images and annotations of our Omni3D benchmark for training and evaluating Cube R-CNN.

We provide config files for training Cube R-CNN on

- Omni3D: configs/Base_Omni3D.yaml

- Omni3D indoor: configs/Base_Omni3D_in.yaml

- Omni3D outdoor: configs/Base_Omni3D_out.yaml

We train on 48 GPUs using submitit which wraps the following training command,

python tools/train_net.py \

--config-file configs/Base_Omni3D.yaml \

OUTPUT_DIR output/omni3d_example_run

Note that our provided configs specify hyperparameters tuned for 48 GPUs. You could train on 1 GPU (though with no guarantee of reaching the final performance) as follows,

python tools/train_net.py \

--config-file configs/Base_Omni3D.yaml --num-gpus 1 \

SOLVER.IMS_PER_BATCH 4 SOLVER.BASE_LR 0.0025 \

SOLVER.MAX_ITER 5568000 SOLVER.STEPS "(3340800, 4454400)" \

SOLVER.WARMUP_ITERS 174000 TEST.EVAL_PERIOD 1392000 \

VIS_PERIOD 111360 OUTPUT_DIR output/omni3d_example_run

Our Omni3D configs are designed for multi-node training.

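We follow a simple scaling rule for adjusting to different system configurations. We find that 16GB GPUs (e.g. V100s) can hold 4 images per batch when training with a DLA34 backbone. If $g$ is the number of GPUs, then the number of images per batch is $b = 4g$. Let $r = b_0 / b$ be the ratio between the recommended batch size $b_0$ (the SOLVER.IMS_PER_BATCH value set in the config) and the actual batch size $b$. We scale the following hyperparameters accordingly:

- SOLVER.IMS_PER_BATCH $= b$

- SOLVER.BASE_LR $/= r$

- SOLVER.MAX_ITER $*= r$

- SOLVER.STEPS $*= r$

- SOLVER.WARMUP_ITERS $*= r$

- TEST.EVAL_PERIOD $*= r$

- VIS_PERIOD $*= r$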

We tune the number of GPUs such that SOLVER.MAX_ITER is in a range between about 90k and 120k iterations. We cannot guarantee that all GPU configurations perform the same. We expect noticeable performance differences at extreme ends of resources (e.g. when using 1 GPU).
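The scaling rule can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a script shipped with the repository: `scale_hyperparameters` is a hypothetical helper, $r$ is taken to be the config's batch size divided by the actual batch size $b$, and the `BASE` values are back-computed from the 1-GPU command above by dividing out a factor of 48 (48 GPUs times 4 images each, against 4 images on 1 GPU) rather than read from configs/Base_Omni3D.yaml. Check them against the config before relying on them.

```python
def scale_hyperparameters(base, num_gpus, images_per_gpu=4):
    """Rescale solver settings for a different GPU count.

    b = images_per_gpu * num_gpus is the actual batch size, and
    r = base batch size / b is the ratio applied to the schedule.
    """
    b = images_per_gpu * num_gpus
    r = base["SOLVER.IMS_PER_BATCH"] / b
    return {
        "SOLVER.IMS_PER_BATCH": b,
        "SOLVER.BASE_LR": base["SOLVER.BASE_LR"] / r,
        "SOLVER.MAX_ITER": round(base["SOLVER.MAX_ITER"] * r),
        "SOLVER.STEPS": tuple(round(s * r) for s in base["SOLVER.STEPS"]),
        "SOLVER.WARMUP_ITERS": round(base["SOLVER.WARMUP_ITERS"] * r),
        "TEST.EVAL_PERIOD": round(base["TEST.EVAL_PERIOD"] * r),
        "VIS_PERIOD": round(base["VIS_PERIOD"] * r),
    }


# ASSUMPTION: values inferred from the 1-GPU command (each divided by 48,
# learning rate multiplied by 48), not copied from the config file.
BASE = {
    "SOLVER.IMS_PER_BATCH": 192,
    "SOLVER.BASE_LR": 0.12,
    "SOLVER.MAX_ITER": 116000,
    "SOLVER.STEPS": (69600, 92800),
    "SOLVER.WARMUP_ITERS": 3625,
    "TEST.EVAL_PERIOD": 29000,
    "VIS_PERIOD": 2320,
}

if __name__ == "__main__":
    for key, value in scale_hyperparameters(BASE, num_gpus=1).items():
        print(key, value)
```

With `num_gpus=1` the helper reproduces the iteration counts used in the 1-GPU command, and with `num_gpus=48` it returns the base values unchanged.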

To evaluate trained models from Cube R-CNN's MODEL_ZOO.md, run

python tools/train_net.py \

--eval-only --config-file cubercnn://omni3d/cubercnn_DLA34_FPN.yaml \

MODEL.WEIGHTS cubercnn://omni3d/cubercnn_DLA34_FPN.pth \

OUTPUT_DIR output/evaluation

Our evaluation is similar to COCO evaluation and uses

[Coming Soon!] An example script for evaluating any model independent from Cube R-CNN's testing loop is coming soon!

See RESULTS.md for detailed Cube R-CNN performance and comparison with other methods.

Cube R-CNN is released under CC-BY-NC 4.0.

Please use the following BibTeX entry if you use Omni3D and/or Cube R-CNN in your research or refer to our results.

@article{brazil2022omni3d,

author = {Garrick Brazil and Julian Straub and Nikhila Ravi and Justin Johnson and Georgia Gkioxari},

title = {{Omni3D}: A Large Benchmark and Model for {3D} Object Detection in the Wild},

journal = {arXiv:2207.10660},

year = {2022}

}

If you use the Omni3D benchmark, we kindly ask you to additionally cite all datasets. BibTeX entries are provided below.

Dataset BibTeX

@inproceedings{Geiger2012CVPR,

author = {Andreas Geiger and Philip Lenz and Raquel Urtasun},

title = {Are we ready for Autonomous Driving? The KITTI Vision Benchmark Suite},

booktitle = {CVPR},

year = {2012}

}

@inproceedings{caesar2020nuscenes,

title={nuscenes: A multimodal dataset for autonomous driving},

author={Caesar, Holger and Bankiti, Varun and Lang, Alex H and Vora, Sourabh and Liong, Venice Erin and Xu, Qiang and Krishnan, Anush and Pan, Yu and Baldan, Giancarlo and Beijbom, Oscar},

booktitle={CVPR},

year={2020}

}

@inproceedings{song2015sun,

title={Sun rgb-d: A rgb-d scene understanding benchmark suite},

author={Song, Shuran and Lichtenberg, Samuel P and Xiao, Jianxiong},

booktitle={CVPR},

year={2015}

}

@inproceedings{dehghan2021arkitscenes,

title={{ARK}itScenes - A Diverse Real-World Dataset for 3D Indoor Scene Understanding Using Mobile {RGB}-D Data},

author={Gilad Baruch and Zhuoyuan Chen and Afshin Dehghan and Tal Dimry and Yuri Feigin and Peter Fu and Thomas Gebauer and Brandon Joffe and Daniel Kurz and Arik Schwartz and Elad Shulman},

booktitle={NeurIPS Datasets and Benchmarks Track (Round 1)},

year={2021},

}

@inproceedings{hypersim,

author = {Mike Roberts AND Jason Ramapuram AND Anurag Ranjan AND Atulit Kumar AND

Miguel Angel Bautista AND Nathan Paczan AND Russ Webb AND Joshua M. Susskind},

title = {{Hypersim}: {A} Photorealistic Synthetic Dataset for Holistic Indoor Scene Understanding},

booktitle = {ICCV},

year = {2021},

}

@article{objectron2021,

title={Objectron: A Large Scale Dataset of Object-Centric Videos in the Wild with Pose Annotations},

author={Ahmadyan, Adel and Zhang, Liangkai and Ablavatski, Artsiom and Wei, Jianing and Grundmann, Matthias},

journal={CVPR},

year={2021},

}