Common repo for our ongoing research on motion forecasting in self-driving vehicles.

Clone this repo, then initialize the external submodules with:

git submodule update --init --recursive

Create a conda environment named "future-motion" with:

conda env create -f conda_env.yml

Prepare Waymo Open Motion and Argoverse 2 Forecasting datasets by following the instructions in src/external_submodules/hptr/README.md.

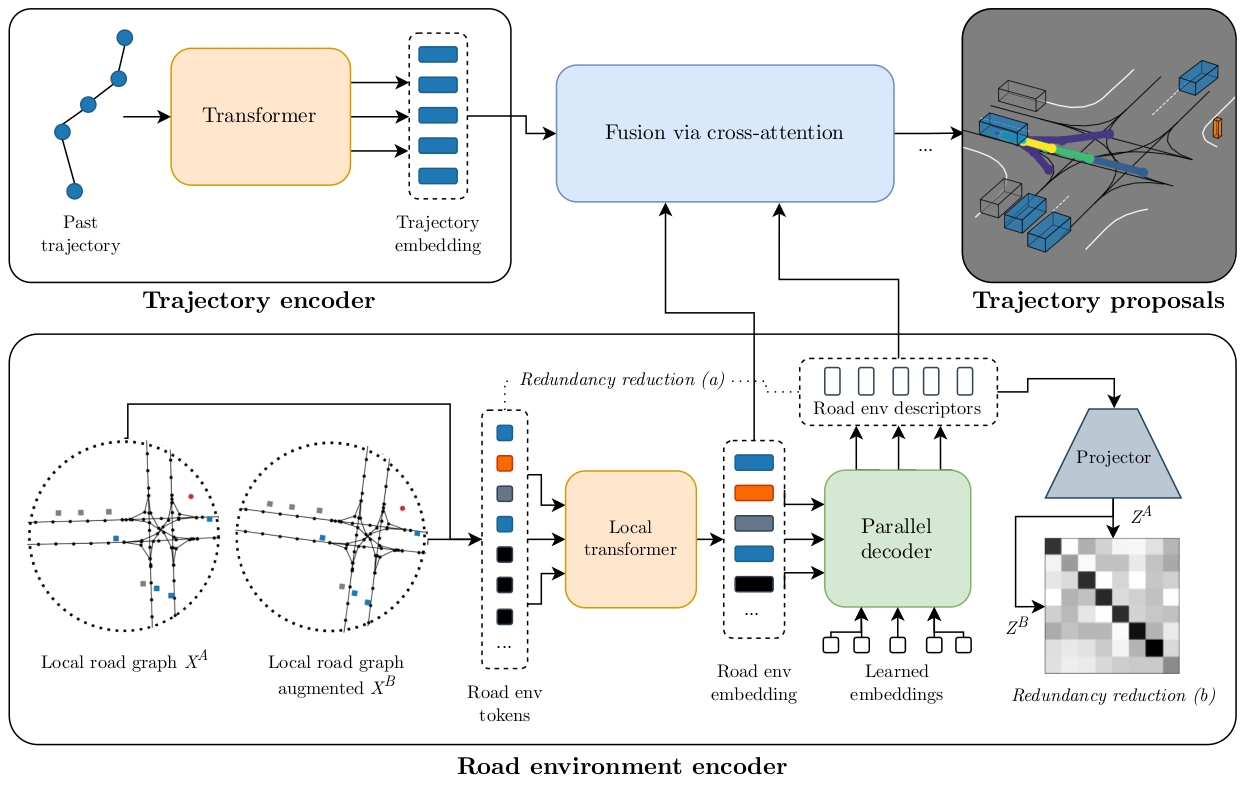

Our RedMotion model consists of two encoders. The trajectory encoder generates an embedding for the past trajectory of the current agent. The road environment encoder generates sets of local and global road environment embeddings as context. We use two redundancy reduction mechanisms, (a) architecture-induced and (b) self-supervised, to learn rich representations of road environments. All embeddings are fused via cross-attention to yield trajectory proposals per agent.
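To make the self-supervised redundancy reduction in (b) more concrete, here is a minimal sketch that assumes a Barlow Twins-style objective on embeddings of two augmented views of the same road environment. The function names, the weight `lambda_offdiag`, and the plain-Python implementation are illustrative assumptions and are not taken from this repo.

```python
import math


def standardize(batch):
    """Standardize each embedding dimension to zero mean and unit variance over the batch."""
    n, d = len(batch), len(batch[0])
    columns = []
    for j in range(d):
        col = [row[j] for row in batch]
        mean = sum(col) / n
        # Fall back to 1.0 for constant dimensions to avoid division by zero
        std = math.sqrt(sum((x - mean) ** 2 for x in col) / n) or 1.0
        columns.append([(x - mean) / std for x in col])
    # Transpose back to shape (n, d)
    return [[columns[j][i] for j in range(d)] for i in range(n)]


def redundancy_reduction_loss(z_a, z_b, lambda_offdiag=0.005):
    """Barlow Twins-style loss for two batches of embeddings with shape (n, d).

    Diagonal entries of the cross-correlation matrix are pushed towards 1
    (invariance to augmentations), off-diagonal entries towards 0
    (reduced redundancy between embedding dimensions).
    """
    n, d = len(z_a), len(z_a[0])
    z_a, z_b = standardize(z_a), standardize(z_b)
    loss = 0.0
    for i in range(d):
        for j in range(d):
            c_ij = sum(z_a[k][i] * z_b[k][j] for k in range(n)) / n
            if i == j:
                loss += (1.0 - c_ij) ** 2
            else:
                loss += lambda_offdiag * c_ij ** 2
    return loss
```

With identical views whose embedding dimensions are uncorrelated, the loss is zero. Duplicated (fully redundant) dimensions are penalized through the off-diagonal term.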

This repo contains the refactored implementation of RedMotion; the original implementation is available here.

The Waymo Motion Prediction Challenge doesn't allow sharing the weights used in the challenge. However, we provide a Colab notebook for a model with a shorter prediction horizon (5s vs. 8s) as a demo.

Training

To train a RedMotion model (tra-dec config) from scratch, adapt the global variables in train.sh according to your setup (Weights & Biases, local paths, batch size and visible GPUs). The default batch size is set for A6000 GPUs with 48GB VRAM. Then start the training run with:

bash train.sh ac_red_motion

For reference, this wandb plot shows the validation mAP scores for epochs 23 to 129 (default config, trained on 4 A6000 GPUs for ~100h).

Reference

@article{

wagner2024redmotion,

title={RedMotion: Motion Prediction via Redundancy Reduction},

author={Royden Wagner and Omer Sahin Tas and Marvin Klemp and Carlos Fernandez and Christoph Stiller},

journal={Transactions on Machine Learning Research},

year={2024},

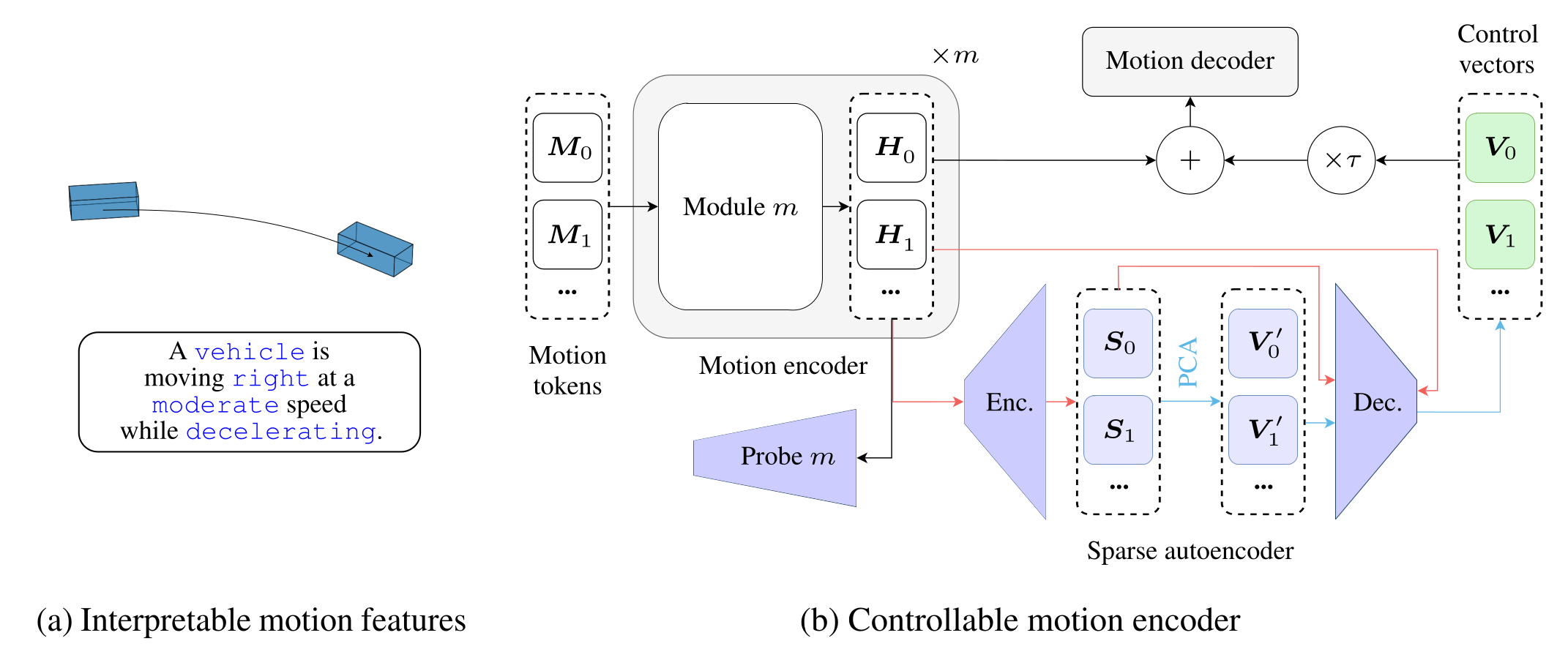

}Words in Motion is a mechanistic interpretability method for interpreting recent motion transformer models.

(a) We classify motion features in an interpretable way, as in natural language.

(b) We measure the degree to which these interpretable features are embedded in the hidden states.

Gradio demos

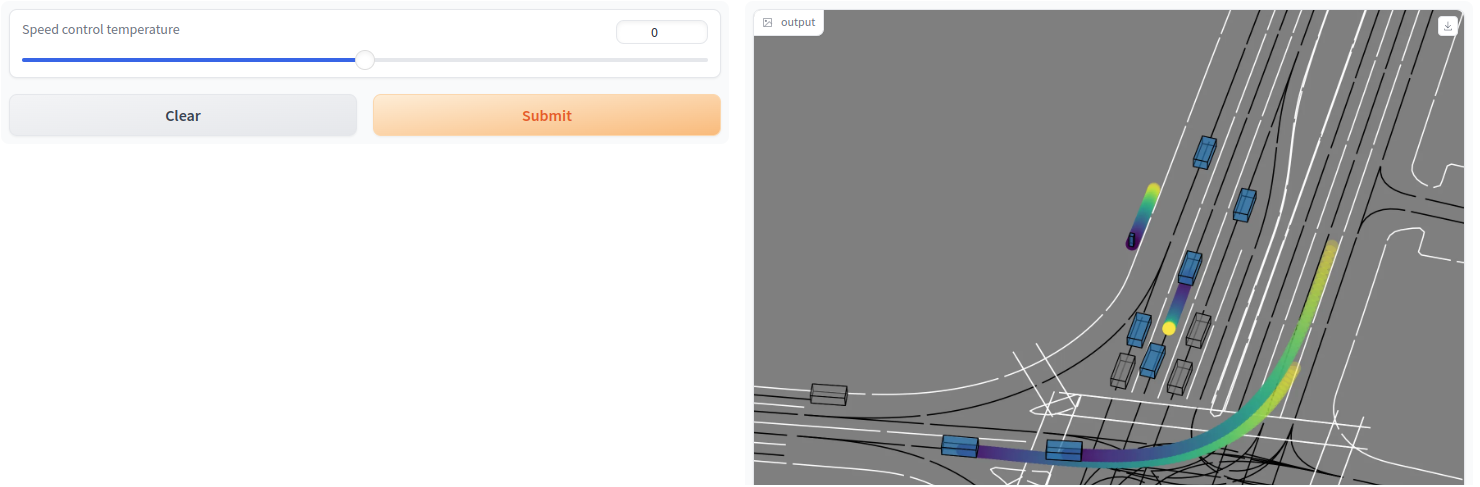

Use this Colab notebook to start Gradio demos for our speed control vectors.

In contrast to the qualitative results in our paper, we show the motion forecasts for the focal agent and 8 other agents in a scene. Press the submit button with the default temperature = 0 to visualize the default (non-controlled) forecasts, then change the temperature and resubmit to visualize the changes. The example is from the Waymo Open dataset and shows motion forecasts for vehicles and a pedestrian (top center).

For very low control temperatures (e.g., -100), almost all agents become static. For very high control temperatures (e.g., 85), even the static agents (shown in grey) begin to move, and the pedestrian no longer moves faster. We hypothesize that the model has learned a reasonable upper bound for the speed of a pedestrian.
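One way to picture the temperature is as a scaling factor for a control vector that is added to a hidden state, so that a temperature of 0 leaves the forecast unchanged. The sketch below only illustrates this idea; `apply_control_vector` and the toy values are hypothetical and not the API used in the notebook.

```python
def apply_control_vector(hidden_state, control_vector, temperature=0.0):
    """Shift a hidden state along a control direction, scaled by the temperature."""
    if len(hidden_state) != len(control_vector):
        raise ValueError("hidden state and control vector must have the same size")
    return [h + temperature * v for h, v in zip(hidden_state, control_vector)]


hidden = [0.2, -0.5, 1.0]
speed_direction = [1.0, 0.0, -1.0]  # hypothetical speed control vector

# temperature = 0 reproduces the default (non-controlled) hidden state
unchanged = apply_control_vector(hidden, speed_direction, temperature=0.0)
# a strongly negative temperature pushes the state far along the opposite direction
shifted = apply_control_vector(hidden, speed_direction, temperature=-100.0)
```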

Training

Soon to be released.

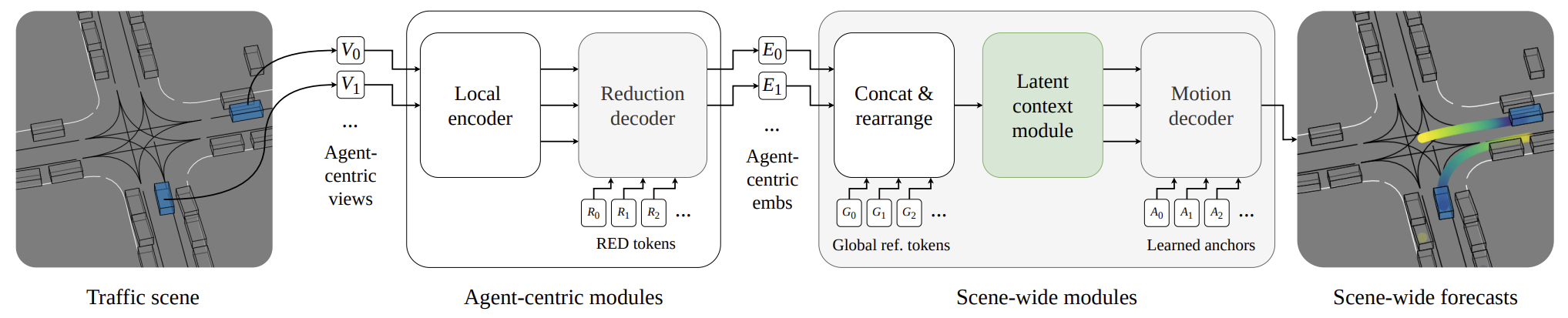

Our attention-based motion forecasting model is composed of stacked encoder and decoder modules.

Variable-sized agent-centric views

Adapt the paths and accounts in sbatch/train_scene_motion_juwels.sh to your setup to train a SceneMotion model on a Juwels-like cluster with a Slurm system and at least 1 node with 4 A100 GPUs.

The training is configured for the Waymo Open Motion dataset.

To train the model for marginal motion forecasting, add model.interactive_challenge=False and model.train_metric.winner_takes_all=hard1 to the srun command in the train script.
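The two settings above use dotted `key=value` syntax. Assuming they behave like Hydra-style command-line overrides (an assumption, check the train script), the sketch below shows how such overrides map onto a nested config. `apply_overrides` and the default values are hypothetical and only serve as an illustration.

```python
def apply_overrides(config, overrides):
    """Apply dotted `key=value` overrides to a nested dict and return it."""
    for item in overrides:
        dotted_key, raw_value = item.split("=", 1)
        *parents, leaf = dotted_key.split(".")
        node = config
        for name in parents:
            node = node.setdefault(name, {})
        # Interpret booleans; keep every other value as a string
        node[leaf] = {"True": True, "False": False}.get(raw_value, raw_value)
    return config


# Hypothetical defaults, not the values from the repo's config files
config = {
    "model": {
        "interactive_challenge": True,
        "train_metric": {"winner_takes_all": "placeholder"},
    }
}

apply_overrides(config, [
    "model.interactive_challenge=False",
    "model.train_metric.winner_takes_all=hard1",
])
```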

Reference

@inproceedings{wagner2024scenemotion,

title={SceneMotion: From Agent-Centric Embeddings to Scene-Wide Forecasts},

author={Wagner, Royden and Tas, {\"O}mer Sahin and Steiner, Marlon and Konstantinidis, Fabian and K{\"o}nigshof, Hendrik and Klemp, Marvin and Fernandez, Carlos and Stiller, Christoph},

booktitle={International Conference on Intelligent Transportation Systems (ITSC)},

year={2024}

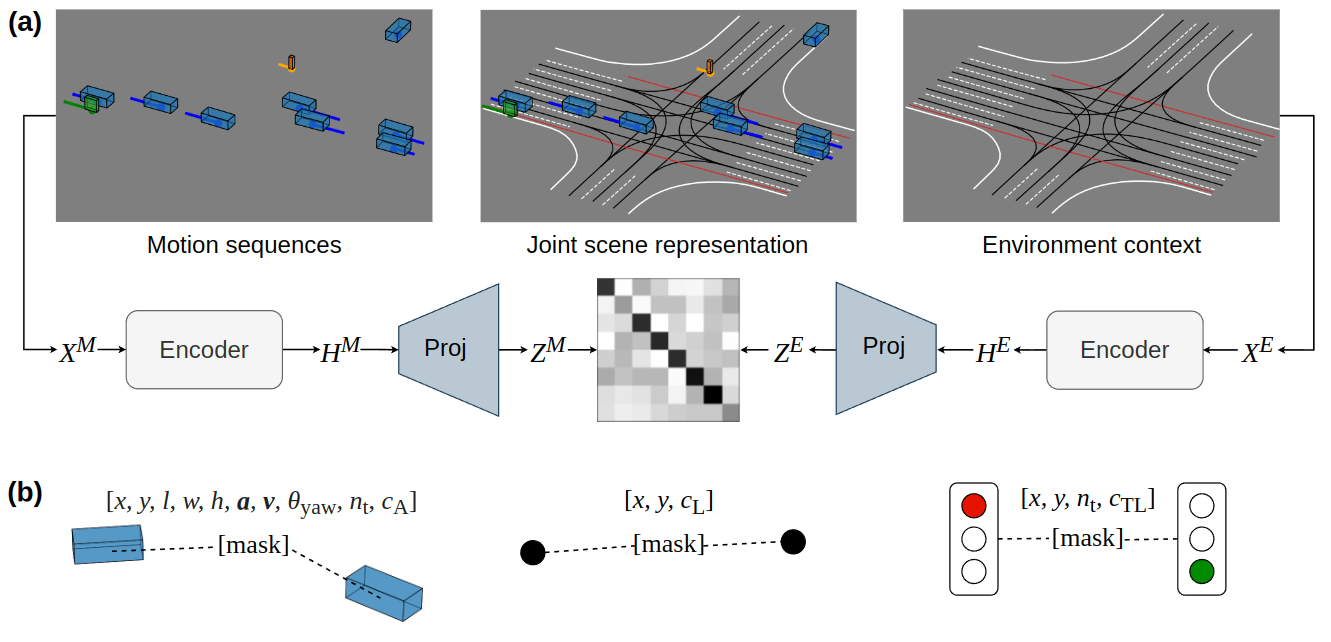

}JointMotion is a self-supervised pre-training method that improves joint motion prediction.

(a) Connecting motion and environments: Our scene-level objective learns joint scene representations via non-contrastive similarity learning of motion sequences and environment context.

(b) Masked polyline modeling: Our instance-level objective refines learned representations via masked autoencoding of multimodal polyline embeddings (i.e., motion, lane, and traffic light data).
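The masked autoencoding idea in (b) can be sketched roughly as follows: hide a random subset of polyline embeddings and compute a reconstruction error only on the hidden ones. The mask ratio, the constant mask token, the mean squared error, and both function names are assumptions made for illustration, not the settings used in this repo.

```python
import random


def mask_polylines(embeddings, mask_ratio=0.5, mask_value=0.0, seed=0):
    """Replace a random subset of polyline embeddings with a constant mask token."""
    rng = random.Random(seed)
    n_masked = int(round(mask_ratio * len(embeddings)))
    masked_ids = set(rng.sample(range(len(embeddings)), n_masked))
    masked = [
        [mask_value] * len(emb) if i in masked_ids else list(emb)
        for i, emb in enumerate(embeddings)
    ]
    return masked, sorted(masked_ids)


def reconstruction_loss(predicted, target, masked_ids):
    """Mean squared error, averaged over the masked polylines only."""
    if not masked_ids:
        return 0.0
    total = 0.0
    for i in masked_ids:
        total += sum((p - t) ** 2 for p, t in zip(predicted[i], target[i])) / len(target[i])
    return total / len(masked_ids)
```

In a training loop, a model would receive the masked embeddings and predict the original ones; the loss then ignores the polylines that were visible.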

Adapt the paths and accounts in sbatch/pre_train_joint_motion_scene_transformer_juwels.sh to your setup to pre-train a Scene Transformer model with the JointMotion objective on a Juwels-like cluster with a Slurm system and at least 1 node with 4 A100 GPUs.

The pre-training is configured for the Waymo Open Motion dataset and takes 10h.

The Scene Transformer model is based on the implementation in HPTR and uses their decoder.

Reference

@inproceedings{wagner2024jointmotion,

title={JointMotion: Joint Self-Supervision for Joint Motion Prediction},

author={Wagner, Royden and Tas, Omer Sahin and Klemp, Marvin and Fernandez, Carlos},

booktitle={Conference on Robot Learning (CoRL)},

year={2024},

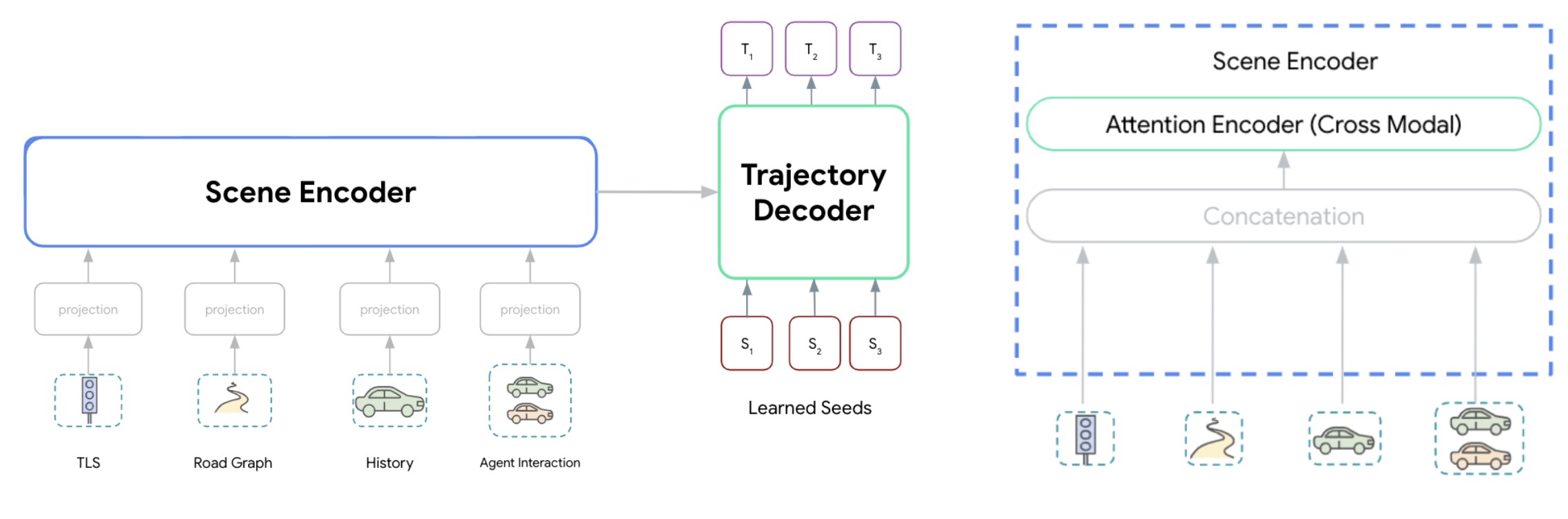

}Wayformer models take multimodal scene data as input, project it into a homogeneous (i.e., same dim) token format, and transform learned seeds (i.e., trajectory anchors) into multimodal distributions of trajectories.

We provide an open-source implementation of the Wayformer model with an early fusion scene encoder and multi-axis latent query attention. Our implementation is a refactored version of the AgentCentricGlobal model from the HPTR repo with improved performance (higher mAP scores).

The hyperparameters defined in configs/model/ac_wayformer.yaml follow the ones in Table 4 (see Appendix D in the Wayformer paper), except that the number of decoders is 1 instead of 3.

We use the polyline representation of MPA (Konev, 2022) as input and the non-maximum suppression (NMS) algorithm of MTR (Shi et al., 2023) to generate 6 trajectories from the predicted 64 trajectories.
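As a rough illustration of this selection step, the sketch below runs a greedy NMS on trajectory endpoints: candidates are visited by descending confidence and dropped if their endpoint lies close to an already kept one. It is a simplified stand-in, not the MTR implementation; the function name and the distance threshold are assumptions.

```python
import math


def select_trajectories_nms(trajectories, scores, num_select=6, dist_threshold=2.5):
    """Greedy NMS on trajectory endpoints.

    trajectories: list of trajectories, each a list of (x, y) points.
    scores: one confidence score per trajectory.
    Returns the indices of the kept trajectories, highest score first.
    """
    order = sorted(range(len(trajectories)), key=lambda i: scores[i], reverse=True)
    kept = []
    for i in order:
        end_i = trajectories[i][-1]
        # Keep a candidate only if its endpoint is far from all kept endpoints
        if all(math.dist(end_i, trajectories[k][-1]) > dist_threshold for k in kept):
            kept.append(i)
        if len(kept) == num_select:
            break
    return kept
```

Note that this simplified version can return fewer than `num_select` indices when many candidates end close to each other.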

Adapt the paths and accounts in sbatch/train_wayformer_juwels.sh to your setup to train a Wayformer model on a Juwels-like cluster with a Slurm system and at least 2 nodes with 4 A100 GPUs each.

The training is configured for the Waymo Open Motion dataset and takes roughly 24h.

Reference

@inproceedings{nayakanti2023wayformer,

title={Wayformer: Motion forecasting via simple \& efficient attention networks},

author={Nayakanti, Nigamaa and Al-Rfou, Rami and Zhou, Aurick and Goel, Kratarth and Refaat, Khaled S and Sapp, Benjamin},

booktitle={International Conference on Robotics and Automation (ICRA)},

year={2023},

}