Awesome BEV perception papers and toolbox for achieving state-of-the-art performance.

This repo is associated with the survey paper "Delving into the Devils of Bird’s-eye-view Perception: A Review, Evaluation and Recipe", which provides an up-to-date literature survey for BEV perception and an open-source BEV toolbox based on PyTorch. We also introduce the BEV algorithm family, including follow-up work on BEV perception such as VCD, GAPretrain, and FocalDistiller. We hope this repo can be a good starting point for beginners and also help current researchers in the BEV perception community.

If you find some work popular enough to be cited below, email us or simply open a PR!

- Up-to-date Literature Survey for BEV Perception

  We summarize important methods from recent years on BEV perception, covering different modalities (camera, LiDAR, fusion) and tasks (detection, segmentation, occupancy). More details of the survey paper list can be found here.

- Convenient BEVPerception Toolbox

  We integrate a bag of tricks into the BEV toolbox that helped us achieve 1st place in the camera-based detection track of the Waymo Open Challenge 2022. The toolbox can be used independently or as a plug-in for popular deep learning libraries. Moreover, we provide a playground for beginners in this area, including a hands-on tutorial and a small-scale dataset (1/5 of WOD in KITTI format) to validate ideas. More details can be found here.

- SOTA BEV Knowledge Distillation Algorithms

  We include important follow-up works of BEVFormer/BEVDepth/SOLOFusion from the perspective of knowledge distillation (VCD, GAPretrain, FocalDistiller). More details of each paper can be found in each README.md file under here.

| Method | Expert | Apprentice |
|---|---|---|
| VCD | Vision-centric multi-modal detector | Camera-only detector |
| GAPretrain | LiDAR-only detector | Camera-only detector |
| FocalDistiller | Camera-only detector | Camera-only detector |
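The expert/apprentice pairing above can be illustrated with a generic feature-mimicking loss. The sketch below is not the loss used by VCD, GAPretrain, or FocalDistiller (see each README for those). It assumes both detectors expose a BEV feature map of the same shape, flattened here to a list of floats. The function name `feature_distillation_loss` and the optional per-cell `weights` argument are hypothetical, and plain Python is used so the example runs without PyTorch.

```python
from typing import Optional, Sequence


def feature_distillation_loss(
    expert: Sequence[float],
    apprentice: Sequence[float],
    weights: Optional[Sequence[float]] = None,
) -> float:
    """Weighted mean squared error between two flattened BEV feature maps.

    expert:     features from the frozen expert (teacher) detector.
    apprentice: features from the apprentice (student) detector.
    weights:    optional per-cell weights, e.g. to emphasise some BEV cells
                (hypothetical; defaults to uniform weighting).
    """
    if len(expert) != len(apprentice):
        raise ValueError("expert and apprentice features must have the same length")
    if weights is None:
        weights = [1.0] * len(expert)
    if len(weights) != len(expert):
        raise ValueError("weights must match the feature length")

    total_weight = sum(weights)
    if total_weight == 0:
        # Nothing is selected, so there is nothing to mimic.
        return 0.0

    weighted_error = sum(
        w * (e - a) ** 2 for e, a, w in zip(expert, apprentice, weights)
    )
    return weighted_error / total_weight


# Toy features: the apprentice disagrees with the expert in one cell only.
expert_features = [1.0, 2.0, 3.0]
apprentice_features = [1.0, 0.0, 3.0]
uniform_loss = feature_distillation_loss(expert_features, apprentice_features)
focused_loss = feature_distillation_loss(
    expert_features, apprentice_features, weights=[0.0, 1.0, 0.0]
)
```

In a real training loop this term would typically be added to the apprentice's detection loss while the expert stays frozen.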

2023/11/04 Our Survey is accepted by IEEE T-PAMI.

2023/10/26 A new paper VCD is coming soon with official implementation.

2023/09/06 We have a new version of the survey. Check it out!

2023/04/06 Two new papers GAPretrain and FocalDistiller are coming soon with official implementation.

2022/10/13 v0.1 was released.

- Integrate some practical data augmentation methods for BEV camera-based 3D detection in the toolbox.

- Offer a pipeline to process the Waymo dataset (camera-based 3D detection).

- Release a baseline (with config) for the Waymo dataset and also 1/5 of the Waymo dataset in KITTI format.
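For readers who want to inspect the KITTI-format subset, here is a minimal sketch of parsing one label line. It assumes the converted labels follow the standard KITTI object label layout of 15 whitespace-separated fields; the repo's own converter may add or reorder fields, so check the toolbox documentation first. The names `KittiObject` and `parse_kitti_label_line` are hypothetical and not part of the toolbox.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class KittiObject:
    category: str
    truncated: float
    occluded: int
    alpha: float                                # observation angle, radians
    bbox: Tuple[float, float, float, float]     # left, top, right, bottom (pixels)
    dimensions: Tuple[float, float, float]      # height, width, length (metres)
    location: Tuple[float, float, float]        # x, y, z in camera coordinates (metres)
    rotation_y: float                           # yaw around the camera Y axis, radians


def parse_kitti_label_line(line: str) -> KittiObject:
    """Parse one line of a standard KITTI object label file."""
    fields = line.split()
    if len(fields) < 15:
        raise ValueError(f"expected at least 15 fields, got {len(fields)}")
    # fields[0] is the class name; fields[1:15] are numeric.
    values = [float(v) for v in fields[1:15]]
    return KittiObject(
        category=fields[0],
        truncated=values[0],
        occluded=int(values[1]),
        alpha=values[2],
        bbox=(values[3], values[4], values[5], values[6]),
        dimensions=(values[7], values[8], values[9]),
        location=(values[10], values[11], values[12]),
        rotation_y=values[13],
    )


# Example line in the standard KITTI layout.
example = (
    "Pedestrian 0.00 0 -0.20 712.40 143.00 810.73 307.92 "
    "1.89 0.48 1.20 1.84 1.47 8.41 0.01"
)
obj = parse_kitti_label_line(example)
```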

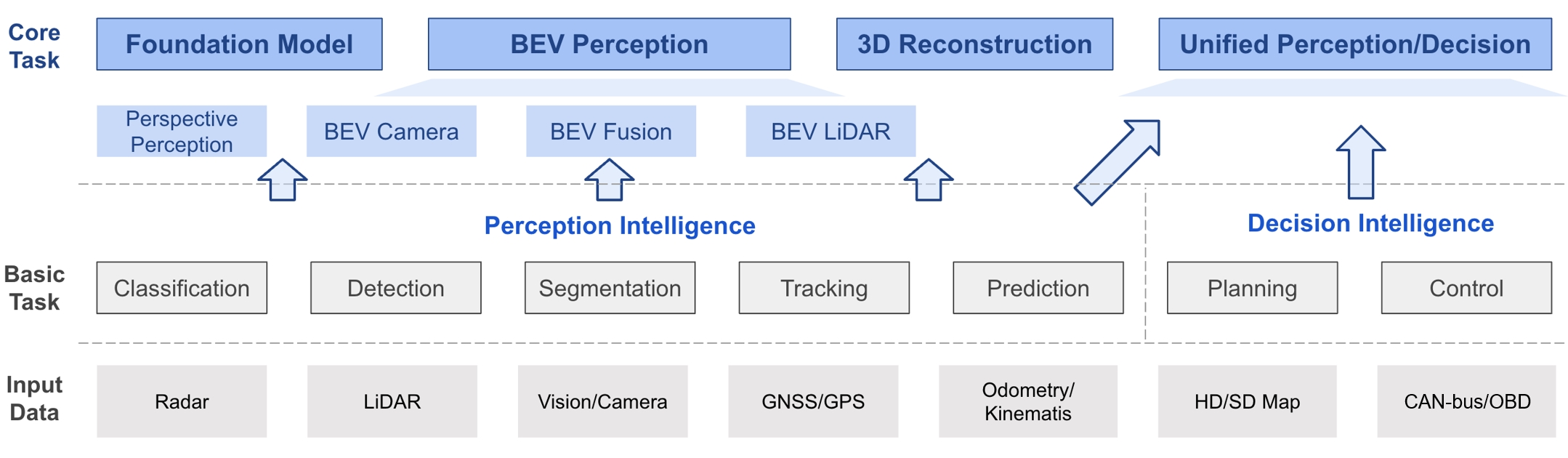

The general picture of BEV perception at a glance, which consists of three sub-parts based on the input modality. BEV perception is a general task built on top of a series of fundamental tasks. To give a more complete view of perception algorithms in autonomous driving, we list other topics as well. More details can be found in the survey paper.


We have summarized important datasets and methods in recent years about BEV perception in academia and also different roadmaps used in industry.

We have also summarized some conventional methods for different tasks.

- Conventional Methods Camera 3D Object Detection

- Conventional Methods LiDAR Detection

- Conventional Methods LiDAR Segmentation

- Conventional Methods Sensor Fusion

The BEV toolbox provides useful recipes for BEV camera-based 3D object detection, including solid data augmentation strategies, efficient BEV encoder design, loss function family, useful test-time augmentation, ensemble policy, and so on. Please refer to bev_toolbox/README.md for more details.
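As an illustration of the test-time augmentation idea mentioned above, the sketch below averages a model's dense BEV output with the output obtained from a horizontally flipped input. This is a generic flip-TTA pattern, not the toolbox's implementation. The names `flip_horizontal` and `flip_tta` are hypothetical, the model is assumed to be any callable mapping a 2D grid to a 2D grid of the same shape, and box-style outputs would need their coordinates and yaw mirrored instead of a simple grid flip.

```python
from typing import Callable, List

Grid = List[List[float]]


def flip_horizontal(grid: Grid) -> Grid:
    """Mirror a 2D grid left-to-right."""
    return [row[::-1] for row in grid]


def flip_tta(model: Callable[[Grid], Grid], bev_input: Grid) -> Grid:
    """Average the prediction on the input with the prediction on its mirror.

    The mirrored prediction is flipped back so both outputs are aligned
    cell by cell before averaging.
    """
    direct = model(bev_input)
    mirrored = flip_horizontal(model(flip_horizontal(bev_input)))
    return [
        [(a + b) / 2.0 for a, b in zip(row_direct, row_mirrored)]
        for row_direct, row_mirrored in zip(direct, mirrored)
    ]


def toy_model(grid: Grid) -> Grid:
    """Toy stand-in for a detector head: adds a column-dependent bias."""
    return [[value + col for col, value in enumerate(row)] for row in grid]


averaged = flip_tta(toy_model, [[1.0, 2.0]])
```

Because the toy model is not symmetric under flipping, the averaged output differs from the direct prediction, which is the effect flip-TTA relies on to smooth out orientation-dependent errors.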

The BEV algorithm family includes follow-up works of BEVFormer in different aspects, ranging from plug-and-play tricks to pre-training distillation. Summaries of all papers are under nuscenes_playground, along with the official implementations. Check it out!

- VCD (NeurIPS 2023)

  Leveraging Vision-Centric Multi-Modal Expertise for 3D Object Detection. More details can be found in nuScenes_playground/VCD.

- GAPretrain (arXiv)

  Geometric-aware Pretraining for Vision-centric 3D Object Detection. More details can be found in nuScenes_playground/GAPretrain.md.

- FocalDistiller (CVPR 2023)

  Distilling Focal Knowledge from Imperfect Expert for 3D Object Detection. More details can be found in nuScenes_playground/FocalDistiller.

This project is released under the Apache 2.0 license.

If you find this project useful in your research, please consider citing:

@article{li2022bevsurvey,

author={Li, Hongyang and Sima, Chonghao and Dai, Jifeng and Wang, Wenhai and Lu, Lewei and Wang, Huijie and Zeng, Jia and Li, Zhiqi and Yang, Jiazhi and Deng, Hanming and Tian, Hao and Xie, Enze and Xie, Jiangwei and Chen, Li and Li, Tianyu and Li, Yang and Gao, Yulu and Jia, Xiaosong and Liu, Si and Shi, Jianping and Lin, Dahua and Qiao, Yu},

journal={IEEE Transactions on Pattern Analysis and Machine Intelligence},

title={Delving Into the Devils of Bird's-Eye-View Perception: A Review, Evaluation and Recipe},

year={2023},

volume={},

number={},

pages={1-20},

doi={10.1109/TPAMI.2023.3333838}

}

@misc{bevtoolbox2022,

title={{BEVPerceptionx-Survey-Recipe} toolbox for general BEV perception},

author={BEV-Toolbox Contributors},

howpublished={\url{https://github.com/OpenDriveLab/Birds-eye-view-Perception}},

year={2022}

}