Welcome to the official repository for the paper UnlearnCanvas: A Stylized Image Dataset for Benchmarking Machine Unlearning in Diffusion Models. This repository contains the source code and essential checkpoints for all experiments presented in the paper, including machine unlearning and style transfer.

The rapid advancement of diffusion models (DMs) has not only transformed various real-world industries but has also raised societal concerns, including the generation of harmful content, copyright disputes, and the rise of stereotypes and biases. To mitigate these issues, machine unlearning (MU) has emerged as a potential solution, demonstrating its ability to remove undesired generative capabilities of DMs in various applications. However, by examining existing MU evaluation methods, we uncover several key challenges that can result in incomplete, inaccurate, or biased evaluations for MU in DMs. To address them, we enhance the evaluation metrics for MU, including the introduction of an often-overlooked retainability measurement for DMs post-unlearning. Additionally, we introduce UnlearnCanvas, a comprehensive high-resolution stylized image dataset that enables us to evaluate the unlearning of artistic painting styles in conjunction with associated image objects. We show that this dataset plays a pivotal role in establishing a standardized and automated evaluation framework for MU techniques on DMs, featuring 7 quantitative metrics to address various aspects of unlearning effectiveness. Through extensive experiments, we benchmark 5 state-of-the-art MU methods, revealing novel insights into their pros and cons, and the underlying unlearning mechanisms. Furthermore, we demonstrate the potential of UnlearnCanvas to benchmark other generative modeling tasks, such as style transfer.

This repository contains the usage instructions for the UnlearnCanvas dataset and the source code to reproduce all the experimental results in the paper. In particular, this project contains the following subfolders:

- `diffusion_model_finetuning`: This folder contains the instructions and the source code to fine-tune diffusion models on the UnlearnCanvas dataset. As examples of how the dataset can be used, we provide fine-tuning scripts for the text-to-image model StableDiffusion and the image-editing model InstructPix2Pix, in both the `diffuser` and the `compvis` code structures.

- `machine_unlearning`: This folder contains the instructions and the source code to perform machine unlearning on the fine-tuned diffusion models. In particular, we include the source code and scripts for the machine unlearning methods reported in the paper (ESD, CA, UCE, FMN, SalUn, and SA). The code is inherited from each method's original repository and modified to work with UnlearnCanvas. We also release the code used to generate the evaluation set and the evaluation scripts for these experiments.

- `style_transfer`: This folder contains the instructions and the source code to perform style transfer using the UnlearnCanvas dataset, as an example of how UnlearnCanvas can support accurate evaluation on tasks beyond unlearning. In particular, we examine 9 style transfer methods in total. For each method, we provide the checkpoints (released by the authors of each method) and the evaluation scripts.

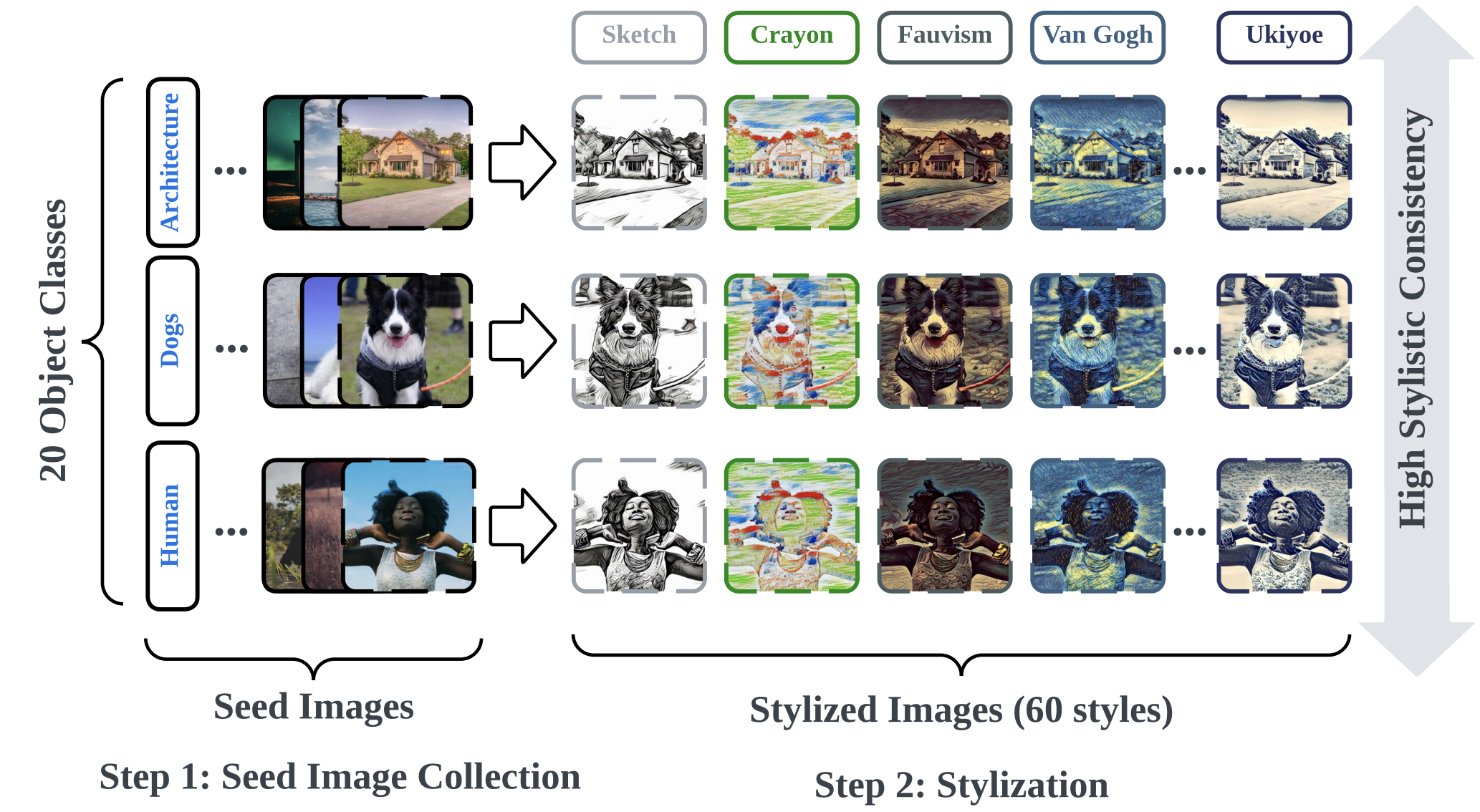

The UnlearnCanvas dataset is now publicly available on both Google Drive and HuggingFace! The dataset contains images in 60 different artistic painting styles, and for each style we provide 400 images across 20 different object categories. The dataset follows the structure `./style_name/object_name/image_idx.jpg`. Each stylized image is painted from a corresponding photo-realistic image; these source images are stored in the `./Seed_Image` folder. For more details on how to fine-tune diffusion models with UnlearnCanvas, please refer to the `diffusion_model_finetuning` folder.
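The directory layout described above can be checked with a short script after downloading. The following is a minimal sketch, not part of the released code: the function names are hypothetical, and it assumes that every stylized image is a `.jpg` file exactly two folders below the dataset root and that `Seed_Image` should be excluded from the style count.

```python
from collections import Counter
from pathlib import Path

SEED_DIR = "Seed_Image"  # folder holding the photo-realistic source images


def count_images(root):
    """Count .jpg files per (style, object) pair under root/style/object/."""
    counts = Counter()
    for image_path in Path(root).glob("*/*/*.jpg"):
        style, obj = image_path.parts[-3], image_path.parts[-2]
        if style == SEED_DIR:
            continue  # assumption: seed images are not a painting style
        counts[(style, obj)] += 1
    return counts


def is_balanced(counts):
    """Return True when every (style, object) pair holds the same number of images."""
    return len(set(counts.values())) <= 1
```

With 60 styles and 20 object categories, a complete download should yield 60 × 20 = 1200 (style, object) pairs. If the 400 images per style are split evenly over the 20 categories, each pair would hold 20 images; `is_balanced(count_images("path/to/UnlearnCanvas"))` is one way to confirm this locally.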

The UnlearnCanvas dataset is designed to facilitate the quantitative evaluation of various vision generative modeling tasks, including but not limited to:

- Machine Unlearning (explored in the paper)

- Style Transfer (explored in the paper)

- Vision In-Context Learning

- Bias Removal for Generative Models

- Out-of-distribution Learning

- ...

The dataset has the following features:

- High-Resolution Images and Balanced Structure: The dataset contains high-resolution images, with the number of images balanced across styles and object types. This makes it well suited to fine-tuning foundation models such as diffusion models.

- Rich Style and Object Categories: The dataset contains 60 different artistic painting styles and 20 different object categories. This allows the dataset to be used for a wide range of vision generative modeling tasks.

- High Stylistic Consistency and Distinctiveness: The dataset is designed so that images within a style are stylistically consistent and different styles are clearly distinguishable. This property is crucial for building an accurate style/object detector, which serves as the foundation of quantitative evaluation for many tasks.

We provide the key checkpoints used in our experiments in the paper. The checkpoints are publicly available in the Google Drive Folder. These checkpoints are organized in the following subfolders:

- `diffusion` (StableDiffusion Fine-tuned on UnlearnCanvas): We provide the fine-tuned checkpoints for the StableDiffusion model on the UnlearnCanvas dataset in both the `compvis` and `diffuser` formats (to save you the time of converting between them). These checkpoints are used in the machine unlearning experiments in the paper and serve as the testbed for different unlearning methods.

- `classfiers` (Style/Object Classifier Fine-tuned on UnlearnCanvas): We provide the fine-tuned ViT-Large checkpoints for the style and object classifiers on the UnlearnCanvas dataset. These checkpoints are used in the machine unlearning experiments in the paper to evaluate the results after each unlearning method is performed.

- `style_loss_vgg` (Pretrained Checkpoints for Style Loss Evaluation): We provide the pretrained checkpoints for the style loss calculation used in the paper.

For detailed usage instructions for these checkpoints, please refer to the README files of the corresponding subfolders in this repo. We also provide the pretrained checkpoints for all the style transfer methods; these are described in the README files of the corresponding subfolders.
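To illustrate how classifier predictions can be turned into evaluation numbers, here is a minimal sketch. The function name, its inputs, and the two score definitions are assumptions made for illustration; they are not the paper's metric definitions, and the actual evaluation scripts live in the `machine_unlearning` folder. The sketch assumes the style classifier has already labeled two sets of generated images: one prompted with the unlearned style and one prompted with styles that should be retained.

```python
def unlearning_scores(forget_preds, retain_preds, retain_labels, target):
    """Summarize classifier predictions after unlearning (illustrative only).

    forget_preds:  predicted labels for images prompted with the unlearned style
    retain_preds:  predicted labels for images prompted with other styles
    retain_labels: the styles those other prompts asked for
    target:        the label of the style that was unlearned
    """
    if not forget_preds or not retain_preds:
        raise ValueError("both prediction lists must be non-empty")
    if len(retain_preds) != len(retain_labels):
        raise ValueError("retain_preds and retain_labels must have equal length")

    # Fraction of target-style prompts no longer recognized as the target style.
    forgotten = sum(p != target for p in forget_preds) / len(forget_preds)
    # Fraction of other-style prompts still recognized as the requested style.
    retained = sum(p == y for p, y in zip(retain_preds, retain_labels)) / len(retain_preds)
    return forgotten, retained
```

A high first value together with a high second value would suggest that the target style was removed without damaging the remaining styles. The same pattern could be applied to object labels using the object classifier.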

Unless otherwise specified, the code runs in the conda environment created with the following command:

conda env create -f environment.yaml

Because this project contains over 16 applications (fine-tuning of text-to-image and image-editing diffusion models, machine unlearning, and style transfer), we suggest checking the README file of each application before using it, in case additional dependencies are required.

This dataset and the associated benchmarking experiments are built on excellent existing code repositories. We would like to express our gratitude to the authors of the following repositories:

- Diffusion Model Fine-Tuning

- Machine Unlearning

  - Erasing Concepts from Diffusion Models (ESD)

  - Ablating Concepts in Text-to-Image Diffusion Models (CA)

  - Unified Concept Editing in Diffusion Models (UCE)

  - Forget-Me-Not: Learning to Forget in Text-to-Image Diffusion Models (FMN)

  - SalUn: Empowering Machine Unlearning via Gradient-based Weight Saliency in Both Image Classification and Generation (SalUn)

  - Selective Amnesia: A Continual Learning Approach for Forgetting in Deep Generative Models (SA)

  - Concept Semi-Permeable Membrane (SPM)

  - Get What You Want, Not What You Don't: Image Content Suppression for Text-to-Image Diffusion Models (SEOT)

  - EraseDiff: Erasing Data Influence in Diffusion Models (Ediff)

  - Scissorhands: Scrub Data Influence via Connection Sensitivity in Networks (SHS)

- Style Transfer

@article{zhang2024unlearncanvas,
  title={UnlearnCanvas: A Stylized Image Dataset to Benchmark Machine Unlearning for Diffusion Models},
  author={Zhang, Yihua and Zhang, Yimeng and Yao, Yuguang and Jia, Jinghan and Liu, Jiancheng and Liu, Xiaoming and Liu, Sijia},
  journal={arXiv preprint arXiv:2402.11846},
  year={2024}
}