The official repository of the Omni6DPose API, as presented in Omni6DPose (ECCV 2024).

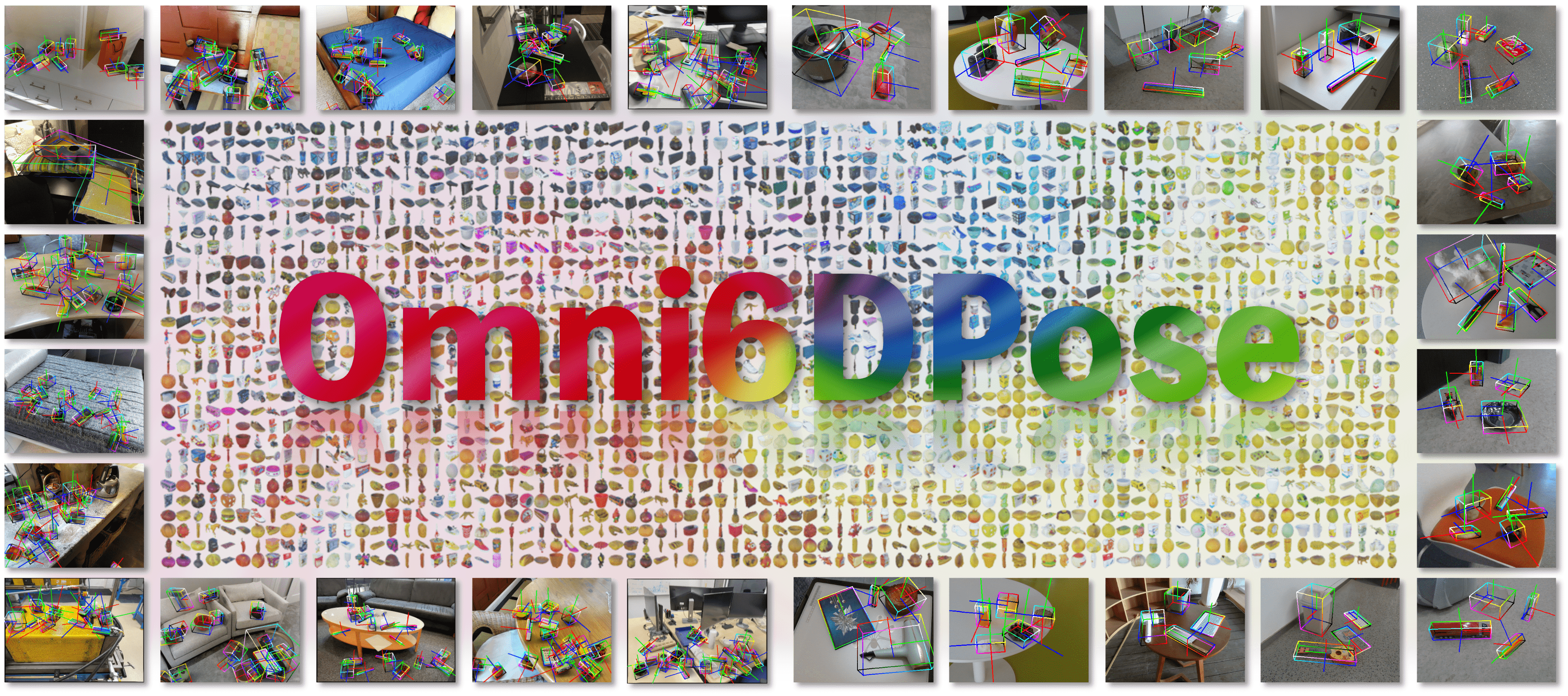

We introduce Omni6DPose, a substantial dataset characterized by its diversity in object categories, large scale, and variety in object materials.

- 2024.08.10: GenPose++ is released! 🎉

- 2024.08.01: Omni6DPose dataset and API are released! 🎉

- 2024.07.01: Omni6DPose has been accepted to ECCV 2024! 🎉

- Release the Omni6DPose dataset.

- Release the Omni6DPose API.

- Release the GenPose++ and pretrained models.

- Release a convenient version of GenPose++ with SAM for the downstream tasks.

The Omni6DPose dataset is available for download at Omni6DPose. The dataset is organized into four parts:

- ROPE: the real dataset for evaluation.

- SOPE: the simulated dataset for training.

- PAM: the pose-aligned 3D models used in both ROPE and SOPE.

- Meta: the meta information of the objects in PAM.

Omni6DPose provides a large-scale dataset with comprehensive data modalities and accurate annotations. The dataset is organized as follows. Files marked [Optional] may not be needed for some methods or tasks, but they are included to support a wide range of research possibilities. Detailed file descriptions can be found on the project website.

Omni6DPose
├── ROPE
│   ├── SCENE_ID
│   │   ├── FRAME_ID_meta.json
│   │   ├── FRAME_ID_color.png
│   │   ├── FRAME_ID_mask.exr
│   │   ├── FRAME_ID_depth.exr
│   │   ├── FRAME_ID_mask_sam.npz [Optional]
│   │   └── ...
│   └── ...
├── SOPE
│   ├── PATCH_ID
│   │   ├── train
│   │   │   ├── SCENE_NAME
│   │   │   │   ├── SCENE_ID
│   │   │   │   │   ├── FRAME_ID_meta.json
│   │   │   │   │   ├── FRAME_ID_color.png
│   │   │   │   │   ├── FRAME_ID_mask.exr
│   │   │   │   │   ├── FRAME_ID_depth.exr
│   │   │   │   │   ├── FRAME_ID_depth_1.exr [Optional]
│   │   │   │   │   ├── FRAME_ID_coord.png [Optional]
│   │   │   │   │   ├── FRAME_ID_ir_l.png [Optional]
│   │   │   │   │   ├── FRAME_ID_ir_r.png [Optional]
│   │   │   │   │   └── ...
│   │   │   │   └── ...
│   │   │   └── ...
│   │   └── test
│   └── ...
├── PAM
│   └── obj_meshes
│       ├── DATASET-CLASS_ID
│       └── ...
└── Meta
    ├── obj_meta.json
    └── real_obj_meta.json
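As an illustration of the per-frame naming scheme in the layout above, the following is a minimal sketch that groups the files of one SCENE_ID directory by FRAME_ID. It is not part of the Omni6DPose API: the function name `group_frames` and the modality keys are hypothetical, and the sketch assumes that every file of a frame shares the same FRAME_ID prefix followed by one of the suffixes listed in the tree.

```python
from collections import defaultdict
from pathlib import Path

# File suffixes taken from the directory layout; the modality keys on the
# right are illustrative names, not identifiers from the official API.
SUFFIXES = {
    "_meta.json": "meta",
    "_color.png": "color",
    "_mask.exr": "mask",
    "_depth.exr": "depth",
    "_mask_sam.npz": "mask_sam",  # [Optional], ROPE
    "_depth_1.exr": "depth_1",    # [Optional], SOPE
    "_coord.png": "coord",        # [Optional], SOPE
    "_ir_l.png": "ir_l",          # [Optional], SOPE
    "_ir_r.png": "ir_r",          # [Optional], SOPE
}


def group_frames(scene_dir):
    """Map each FRAME_ID in a scene directory to its files, keyed by modality."""
    frames = defaultdict(dict)
    for path in sorted(Path(scene_dir).iterdir()):
        if not path.is_file():
            continue
        for suffix, modality in SUFFIXES.items():
            if path.name.endswith(suffix):
                # Everything before the suffix is treated as the FRAME_ID.
                frame_id = path.name[: -len(suffix)]
                frames[frame_id][modality] = path
                break  # files with unknown suffixes are simply skipped
    return dict(frames)
```

Calling `group_frames` on a scene directory returns a dictionary such as `{"0000": {"color": Path(...), "depth": Path(...), ...}}`, which makes it easy to check which optional modalities are present for a given frame.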

Due to the large size of the dataset and the varying requirements of different tasks and methods, we have divided the dataset into multiple parts, each compressed separately. This allows users to download only the parts they need. Please follow the instructions in download.ipynb to download and decompress the necessary dataset parts.

Note: The meta file Meta/obj_meta.json corresponds to the SOPE dataset, and the meta file Meta/real_obj_meta.json corresponds to the ROPE dataset. When using the dataset and the following API, please make sure to use the correct meta file.
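One way to avoid mixing up the two meta files is to resolve the path from the dataset part in a single place. The sketch below is only an illustration of the mapping stated in the note; the helper `meta_path` and the constant `META_FILES` are hypothetical names and are not provided by the Omni6DPose API.

```python
from pathlib import Path

# Mapping stated in the note: SOPE uses obj_meta.json, ROPE uses real_obj_meta.json.
META_FILES = {
    "SOPE": "obj_meta.json",
    "ROPE": "real_obj_meta.json",
}


def meta_path(dataset_root, part):
    """Return the object meta file that matches a dataset part ("SOPE" or "ROPE")."""
    try:
        filename = META_FILES[part.upper()]
    except KeyError:
        raise ValueError(f"unknown dataset part: {part!r}") from None
    return Path(dataset_root) / "Meta" / filename
```

For example, `meta_path("/data/Omni6DPose", "ROPE")` yields the path ending in `Meta/real_obj_meta.json`, while any other part name raises a `ValueError` instead of silently falling back to the wrong file.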

We provide the Omni6DPose API, cutoop, which offers a convenient way to load the dataset and to evaluate and visualize results. Please refer to the Omni6DPose API for more details.

To enable OpenCV to read EXR images, you need to install OpenEXR. On Ubuntu, you can install it using the following command:

sudo apt-get install openexr

Then, the API can be installed in either of the following two ways:

- Install from PyPI:

pip install cutoop

- Install from source:

cd common

python setup.py install

Please refer to the documentation for more details.
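For reading the EXR files directly with OpenCV, outside of cutoop, the following minimal sketch may be useful. It assumes the `opencv-python` package is installed; the helper name `read_exr` is hypothetical. Many OpenCV builds keep the EXR codec disabled unless the environment variable `OPENCV_IO_ENABLE_OPENEXR` is set before `cv2` is first imported, so the sketch sets it up front.

```python
import os

# Must be set before cv2 is first imported, otherwise EXR decoding may be
# rejected by OpenCV at read time.
os.environ["OPENCV_IO_ENABLE_OPENEXR"] = "1"


def read_exr(path):
    """Read an EXR image (e.g. FRAME_ID_depth.exr) without changing its data type."""
    import cv2  # imported here so that the variable above is already set

    # IMREAD_UNCHANGED keeps the original channel count and floating-point depth.
    image = cv2.imread(str(path), cv2.IMREAD_UNCHANGED)
    if image is None:
        raise FileNotFoundError(f"could not read EXR file: {path}")
    return image
```

The function returns a NumPy array. The units and channel layout of the depth and mask files are described in the dataset documentation and are not assumed here.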

We provide the official PyTorch implementation of GenPose++ here.

If you have any questions, please feel free to contact us:

Jiyao Zhang: jiyaozhang@stu.pku.edu.cn

Weiyao Huang: sshwy@stu.pku.edu.cn

Bo Peng: bo.peng@stu.pku.edu.cn

This project is released under the MIT license. See LICENSE for additional details.