Wangbo Yu*, Jinbo Xing*, Li Yuan*, Wenbo Hu†, Xiaoyu Li, Zhipeng Huang, Xiangjun Gao, Tien-Tsin Wong, Ying Shan, Yonghong Tian†

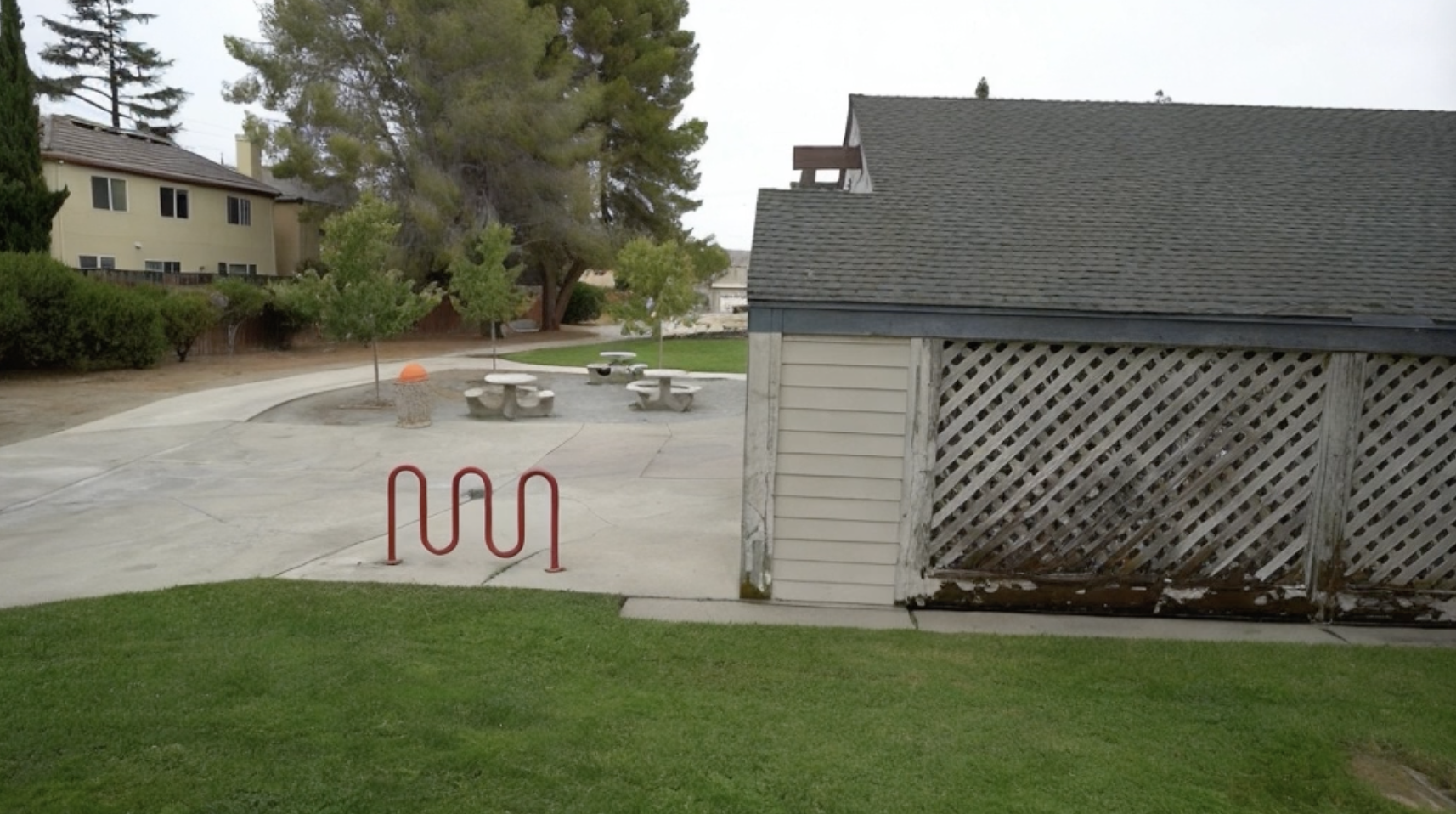

ViewCrafter can generate high-fidelity novel views from a single reference image or a sparse set of reference images, while also supporting precise camera pose control. Examples are shown below:

| Reference image | Camera trajectory | Generated novel view video |
|---|---|---|

| Reference image 1 | Reference image 2 | Generated novel view video |
|---|---|---|

- [2024-09-01] Launch the project page and update the arXiv preprint.

- [2024-09-01] Release pretrained models and the code for single-view novel view synthesis.

- Release the code for sparse-view novel view synthesis.

- Release the code for iterative novel view synthesis.

- Release the code for 3D-GS reconstruction.

| Model | Resolution | Frames | GPU Mem. & Inference Time (A100, 50 DDIM steps) | Checkpoint |
|---|---|---|---|---|
| ViewCrafter_25 | 576x1024 | 25 | 23.5GB & 120s (perframe_ae=True) | Hugging Face |
| ViewCrafter_16 | 576x1024 | 16 | 18.3GB & 75s (perframe_ae=True) | Hugging Face |

Currently, we provide two versions of the model: a base model that generates 16 frames at a time and an enhanced model that generates 25 frames at a time. The inference time can be reduced by using fewer DDIM steps.
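As a rough illustration of the trade-off between DDIM steps and inference time, the sketch below scales the reference timings from the model table. It assumes that inference time grows linearly with the number of DDIM steps, which this README does not state; fixed costs such as model loading and decoding are ignored, so the result is best read as an optimistic estimate. The names `REFERENCE_TIMINGS` and `estimate_inference_seconds` are hypothetical helpers, not part of the ViewCrafter code.

```python
# Reference timings copied from the model table
# (A100, 50 DDIM steps, perframe_ae=True).
REFERENCE_TIMINGS = {
    "ViewCrafter_25": {"ddim_steps": 50, "seconds": 120.0},
    "ViewCrafter_16": {"ddim_steps": 50, "seconds": 75.0},
}


def estimate_inference_seconds(model_name, ddim_steps):
    """Rough estimate of inference time for a given number of DDIM steps.

    ASSUMPTION: time scales linearly with the step count. Real timings
    include fixed overhead, so actual runs will likely take longer.
    """
    if ddim_steps <= 0:
        raise ValueError("ddim_steps must be a positive integer")
    reference = REFERENCE_TIMINGS[model_name]
    return reference["seconds"] * ddim_steps / reference["ddim_steps"]


if __name__ == "__main__":
    for name in REFERENCE_TIMINGS:
        seconds = estimate_inference_seconds(name, 25)
        print(f"{name}: about {seconds:.0f}s at 25 DDIM steps (linear estimate)")
```

Fewer steps may also reduce output quality, so any setting below the 50-step reference is worth checking visually.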

git clone https://github.com/Drexubery/ViewCrafter.git

cd ViewCrafter

# Create conda environment

conda create -n viewcrafter python=3.9.16

conda activate viewcrafter

pip install -r requirements.txt

# Install PyTorch3D

conda install https://anaconda.org/pytorch3d/pytorch3d/0.7.5/download/linux-64/pytorch3d-0.7.5-py39_cu117_pyt1131.tar.bz2

# Download DUSt3R

mkdir -p checkpoints/

wget https://download.europe.naverlabs.com/ComputerVision/DUSt3R/DUSt3R_ViTLarge_BaseDecoder_512_dpt.pth -P checkpoints/
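Inference relies on two checkpoint files under checkpoints/: the DUSt3R weights downloaded above and the ViewCrafter model.ckpt described in the inference steps that follow. A minimal sketch of a pre-flight check, assuming both files live at exactly the paths named in this README, could look like this. The helper `missing_checkpoints` is a hypothetical name and is not part of the repository.

```python
from pathlib import Path

# Paths taken from the setup and inference instructions in this README.
EXPECTED_CHECKPOINTS = (
    "checkpoints/model.ckpt",
    "checkpoints/DUSt3R_ViTLarge_BaseDecoder_512_dpt.pth",
)


def missing_checkpoints(repo_root="."):
    """Return the expected checkpoint paths that do not exist as files."""
    root = Path(repo_root)
    return [rel for rel in EXPECTED_CHECKPOINTS if not (root / rel).is_file()]


if __name__ == "__main__":
    missing = missing_checkpoints()
    if missing:
        print("Missing checkpoint files:")
        for rel in missing:
            print(f"  {rel}")
    else:
        print("All expected checkpoint files are present.")
```

Running the script from the repository root before starting inference gives a quick indication of whether a download step was skipped.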

(1) Download a pretrained model (ViewCrafter_25, for example) and place its model.ckpt at checkpoints/model.ckpt.

(2) Run inference.py using the following script. Please refer to the configuration document and the render document to set up the inference parameters and camera trajectory.

sh run.sh

To launch the Gradio demo, download the pretrained model and place it at checkpoints/model.ckpt as described in step (1) above, then run:

python gradio_app.py

Please consider citing our paper if our code is useful:

@article{yu2024viewcrafter,

title={ViewCrafter: Taming Video Diffusion Models for High-fidelity Novel View Synthesis},

author={Yu, Wangbo and Xing, Jinbo and Yuan, Li and Hu, Wenbo and Li, Xiaoyu and Huang, Zhipeng and Gao, Xiangjun and Wong, Tien-Tsin and Shan, Ying and Tian, Yonghong},

journal={arXiv preprint arXiv:2409.02048},

year={2024}

}