- Academic publishing has doubled in the past ten years, making it nearly impossible to sift through the large number of papers and identify broad areas of research within disciplines.

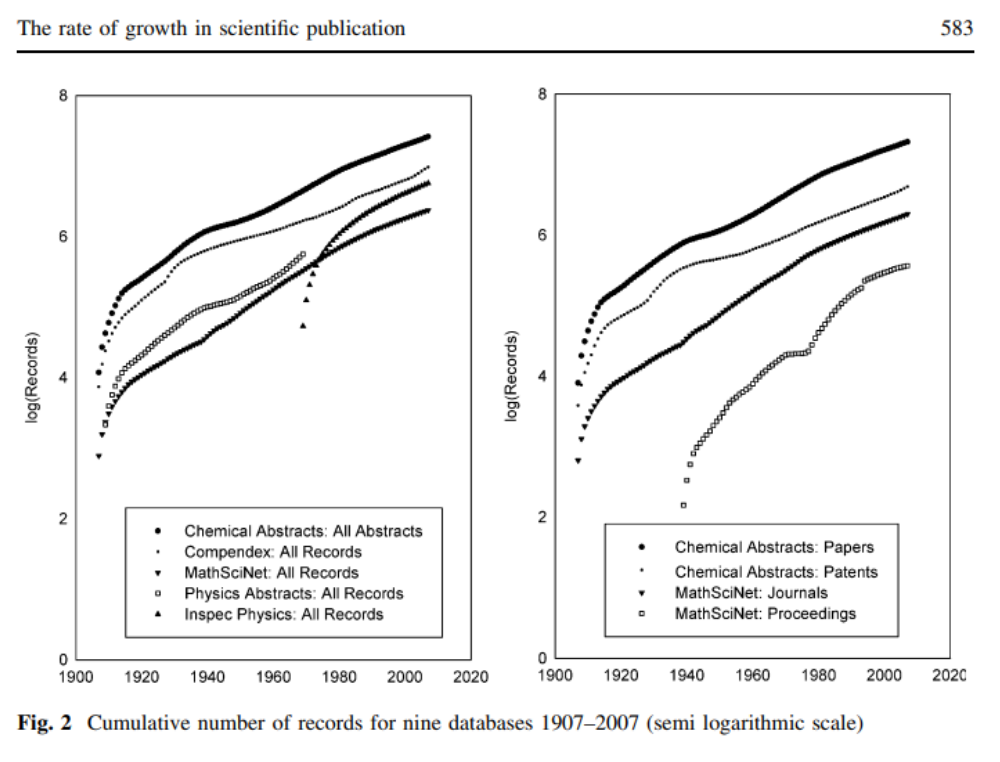

Figure 1.1 Increase in the number of scientific publications in the fields of physics and chemistry [1].

- To make sense of such vast volumes of research, automated text analysis tools are needed.

- However, existing tools are expensive and lack in-depth analysis of publications.

- To address these issues, we developed pyResearchThemes, an open-source, automated text analysis tool that:

  - Scrapes papers from scientific repositories,

  - Analyzes meta-data such as date and journal of publication,

  - Visualizes themes of research using natural language processing.

- To demonstrate the ability of the tool, we have analyzed the research themes from the field of Ecology & Conservation.

This project is a collaboration between Sarthak J. Shetty, from the Department of Aerospace Engineering, Indian Institute of Science, and Vijay Ramesh, from the Department of Ecology, Evolution & Environmental Biology, Columbia University.

-

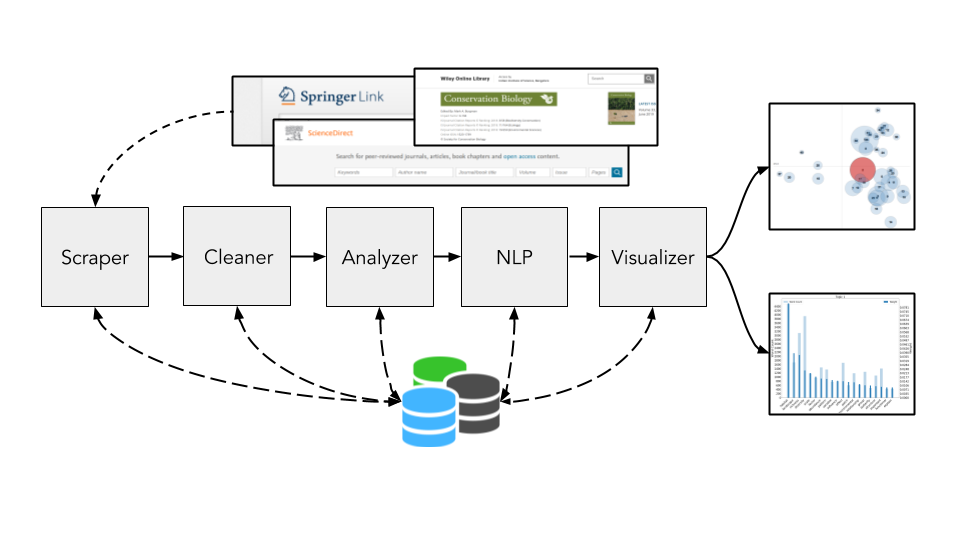

The model is made up of three parts:

-

Scraper: This component scrapes scientific repository for publications containing the specific combination of keywords.

-

Cleaner: This component cleans the corpus of text retreived from the repository and rids it of special characters that creep in during formatting and submission of manuscripts.

-

Analyzer: This component collects and measures the frequency of select keywords in the abstracts database.

-

NLP Engine: This component extracts insights from the abstracts collected by presenting topic modelling.

-

Visualizer: This component presents the results and data from the Analyzer to the end user.

-

Figure 3.1 Diagramatic representation of pipeline for collecting papers and generating visualizations.

-

The

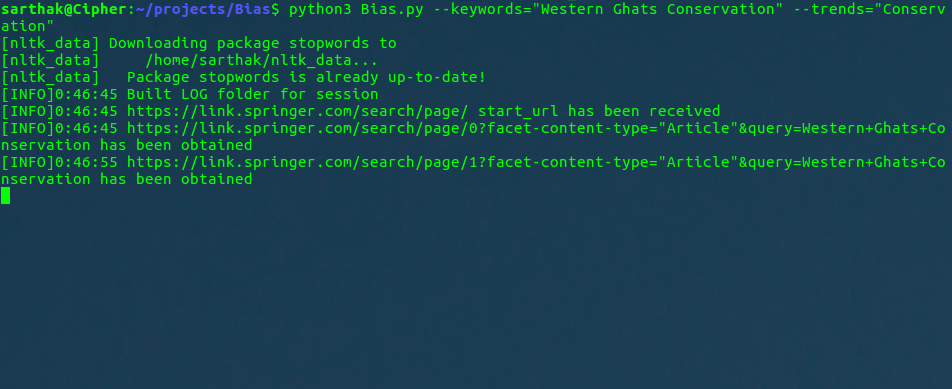

Scraper.pycurrently scrapes only the abstracts from Springer using the BeautifulSoup and urllib packages. -

A default URL is provided in the code. Once the keywords are provided, the URLs are queried and the resultant webpage is souped and

abstract_idis scraped. -

A new

abstract_id_databaseis prepared for each result page, and is referenced when a new paper is scraped. -

The

abstract_databasecontains the abstract along with the title, author and a complete URL from where the full text can be downloaded. They are saved in a.txtfile -

A

status_loggeris used to log the sequence of commands in the program.

Figure 3.2 Scraper.py script grabbing the papers from Springer.
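The querying and de-duplication steps described for the Scraper can be sketched roughly as follows. This is a minimal illustration, not the code in `Scraper.py`: the search URL layout, the `/article/` link prefix, and the function names are assumptions, and the standard-library `html.parser` stands in for BeautifulSoup so the snippet has no dependencies.

```python
from html.parser import HTMLParser
from urllib.parse import urlencode

# Assumed base URL; the default URL used by Scraper.py may differ.
BASE_URL = "https://link.springer.com/search"


def build_query_url(keywords, page=1):
    """Build a search URL for the given keywords (hypothetical URL layout)."""
    return f"{BASE_URL}?{urlencode({'query': keywords, 'page': page})}"


class AbstractIdParser(HTMLParser):
    """Collect link targets that look like article links (assumed prefix)."""

    def __init__(self):
        super().__init__()
        self.abstract_ids = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href") or ""
        if href.startswith("/article/"):
            self.abstract_ids.append(href[len("/article/"):])


def collect_new_ids(page_html, seen_ids):
    """Return ids on one result page that are not already in seen_ids."""
    parser = AbstractIdParser()
    parser.feed(page_html)
    new_ids = []
    for abstract_id in parser.abstract_ids:
        if abstract_id not in seen_ids:
            seen_ids.add(abstract_id)  # remember it for later pages
            new_ids.append(abstract_id)
    return new_ids
```

Keeping the set of seen ids outside the function lets the same set be reused across result pages, so a paper listed twice is only fetched once.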

-

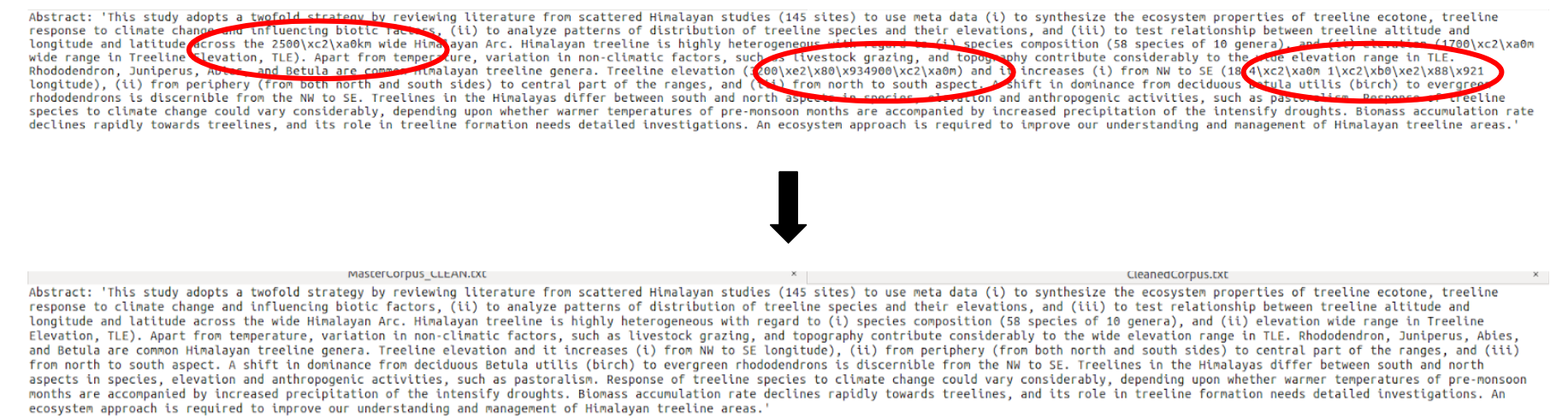

The

Cleaner.pycleans the corpus scrapped from the repository, before the topic models are generated. -

This script creates a clean variant of the

.txtcorpus file that is then stored as_ANALYTICAL.txt, for further analysis and modelling

Figure 3.3 Cleaner.py script gets rid of formatting and special characters present in the corpus.
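A cleaning step of this kind might look like the sketch below. The exact rules applied by `Cleaner.py` are not listed here, so the character filter, the function names, and the way the `_ANALYTICAL.txt` suffix is attached to the file name are all assumptions made for illustration.

```python
import re
from pathlib import Path


def clean_text(raw_text):
    """Drop special characters and collapse whitespace (assumed rules)."""
    # Replace anything that is not an ASCII letter, digit, or whitespace.
    without_specials = re.sub(r"[^A-Za-z0-9\s]", " ", raw_text)
    # Collapse runs of whitespace, including newlines, into single spaces.
    return re.sub(r"\s+", " ", without_specials).strip()


def analytical_path(corpus_path):
    """Return the sibling path ending in _ANALYTICAL.txt (assumed naming)."""
    corpus_path = Path(corpus_path)
    return corpus_path.with_name(corpus_path.stem + "_ANALYTICAL.txt")


def clean_corpus(corpus_path):
    """Write a cleaned copy of the corpus and return the new file's path."""
    target = analytical_path(corpus_path)
    raw_text = Path(corpus_path).read_text(encoding="utf-8")
    target.write_text(clean_text(raw_text), encoding="utf-8")
    return target
```

Writing to a separate file leaves the scraped corpus untouched, so a run can be re-cleaned with different rules without scraping again.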

- The `Analyzer.py` analyzes the frequency of different words used in the abstract, and stores it in the form of a pandas dataframe.

- It serves as an intermediary between the Scraper and the Visualizer, preparing the scraped data into a `.csv`.

- This `.csv` file is then passed on to the `Visualizer.py` to generate the "Trends" chart.

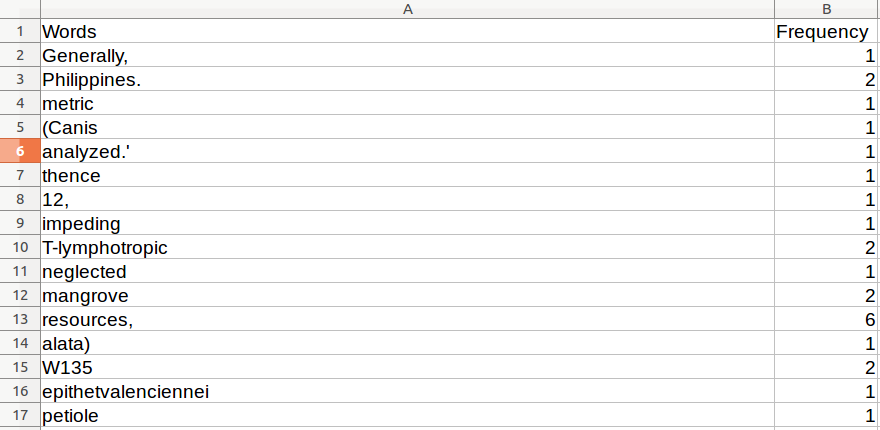

Figure 3.4 Analyzer.py script generates this .csv file for analysis by other parts of the pipeline.
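The frequency count behind the "Trends" chart can be illustrated with a short sketch. It is not the `Analyzer.py` implementation: it uses the standard-library `csv` module instead of pandas to stay dependency-free, and the `(year, abstract)` record shape, the column names, and the function names are assumptions.

```python
import csv
import io
from collections import Counter


def keyword_frequency_by_year(records, keyword):
    """Count occurrences of keyword per year in (year, abstract) pairs."""
    counts = Counter()
    needle = keyword.lower()
    for year, abstract in records:
        # Case-insensitive substring count, so "conservationist" also matches.
        counts[year] += abstract.lower().count(needle)
    return dict(sorted(counts.items()))


def to_csv(frequency_by_year):
    """Serialise the per-year counts as CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["year", "frequency"])
    for year, frequency in frequency_by_year.items():
        writer.writerow([year, frequency])
    return buffer.getvalue()
```

Years with no match still appear with a count of zero, which keeps gaps visible when the counts are plotted over time.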

- The NLP Engine is used to generate the topic modelling charts for the `Visualizer.py` script.

- The topic models are generated from the corpus using the gensim and spaCy packages and the latent Dirichlet allocation (LDA) method [2].

- The corpus and model generated are then passed to the `Visualizer.py` script.

- The topic modelling chart can be pulled from here.

  Note: The `.html` file linked above has to be downloaded and opened in a JavaScript-enabled browser to be viewed.
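Topic models such as LDA work on a bag-of-words view of the corpus, where each document becomes a list of `(token_id, count)` pairs. The sketch below shows that representation using only the standard library. It is an illustration of the idea, not the `NLP_Engine.py` code, which relies on gensim and spaCy; the function names are hypothetical and the documents are assumed to be tokenised already.

```python
from collections import Counter


def build_dictionary(tokenised_docs):
    """Map each distinct token to an integer id, in order of first use."""
    token_to_id = {}
    for doc in tokenised_docs:
        for token in doc:
            token_to_id.setdefault(token, len(token_to_id))
    return token_to_id


def to_bag_of_words(doc, token_to_id):
    """Return sorted (token_id, count) pairs for one tokenised document."""
    counts = Counter(token_to_id[t] for t in doc if t in token_to_id)
    return sorted(counts.items())


docs = [["forest", "bird", "forest"], ["bird", "river"]]
dictionary = build_dictionary(docs)
corpus = [to_bag_of_words(doc, dictionary) for doc in docs]
```

Word order is discarded in this representation; only how often each term appears in each abstract is kept.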

- The `Visualizer.py` code is responsible for generating the visualizations associated with a specific search, using gensim and spaCy for the research themes and the matplotlib library for the trends.

- The research theme visualizations are functional and are presented under the 5.0 Results section.

- The research themes data visualization is stored as a `.html` file in the LOGS directory and can be viewed in the browser.

Note: These instructions are common to both Ubuntu and Windows systems.

- Clone this repository:

  `E:\>git clone https://github.com/SarthakJShetty/Bias.git`

- Change directory to the 'Bias' directory:

  `E:\>cd Bias`

- Install `virtualenv` using `pip`:

  `user@Ubuntu: pip install virtualenv`

- Create a `virtualenv` environment called "Bias" in the directory of your project:

  `user@Ubuntu: virtualenv --no-site-packages Bias`

  Note: This step usually takes about 30 seconds to a minute.

- Activate the `virtualenv` environment:

  `user@Ubuntu: ~/Bias$ source Bias/bin/activate`

  You are now inside the `Bias` environment.

- Install the requirements from `ubuntu_requirements.txt`:

  `(Bias) user@Ubuntu: pip3 install -r ubuntu_requirements.txt`

  Note: This step usually takes a few minutes, depending on your network speed.

- Create a new `conda` environment:

  `E:\Bias conda create --name Bias python=3.5`

- Enter the new `Bias` environment created:

  `E:\Bias activate Bias`

- Install the required packages from `conda_requirements.txt`:

  `(Bias) E:\Bias conda install --yes --file conda_requirements.txt`

  Note: This step usually takes a few minutes, depending on your network speed.

To run the code and generate the topic distribution and trend of research graphs:

`(Bias) E:\Bias python Bias.py --keywords="Western Ghats" --trends="Conservation"`

- This command will scrape the abstracts from Springer that are related to "Western Ghats", and calculate the frequency with which the term "Conservation" appears in them.
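For readers curious how the two flags could be read, here is a minimal `argparse` sketch. It is a guess at the general shape, not the argument handling in `Bias.py`; whether each flag is required, the help strings, and the function name are assumptions.

```python
import argparse


def parse_arguments(argv=None):
    """Parse the --keywords and --trends flags (hypothetical sketch)."""
    parser = argparse.ArgumentParser(
        description="Scrape abstracts and chart keyword trends."
    )
    parser.add_argument(
        "--keywords",
        required=True,  # assumption: a search phrase is always needed
        help="Search phrase sent to the repository, e.g. 'Western Ghats'.",
    )
    parser.add_argument(
        "--trends",
        default=None,  # assumption: the trends chart is optional
        help="Term whose frequency is tracked, e.g. 'Conservation'.",
    )
    return parser.parse_args(argv)


if __name__ == "__main__":
    arguments = parse_arguments()
    print(arguments.keywords, arguments.trends)
```

Quoting the value on the command line, as in `--keywords="Western Ghats"`, keeps a multi-word phrase together as one argument.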

Currently, the results from the various biodiversity runs are stored as tarballs, in the LOGS folder, primarily to save space.

To view the logs, topic-modelling results & trends chart from the tarballs, run the following commands:

`tar zxvf <log_folder_to_be_unarchived>.tar.gz`

Example:

To view the logs & results generated from the run on "East Melanesian Islands":

`tar zxvf LOG_2019-04-24_19_35_East_Melanesian_Islands.tar.gz`
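Where the `tar` command is not available, the same archives can be unpacked from Python with the standard-library `tarfile` module. The helper below is a hypothetical convenience and not part of the package; as with `tar`, only unpack archives you trust.

```python
import tarfile
from pathlib import Path


def extract_log_archive(archive_path, destination="."):
    """Unpack a .tar.gz log archive and return the names of its members."""
    with tarfile.open(archive_path, "r:gz") as archive:
        names = archive.getnames()
        archive.extractall(Path(destination))
    return names
```

For example, `extract_log_archive("LOG_2019-04-24_19_35_East_Melanesian_Islands.tar.gz")` would unpack that run into the current directory.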

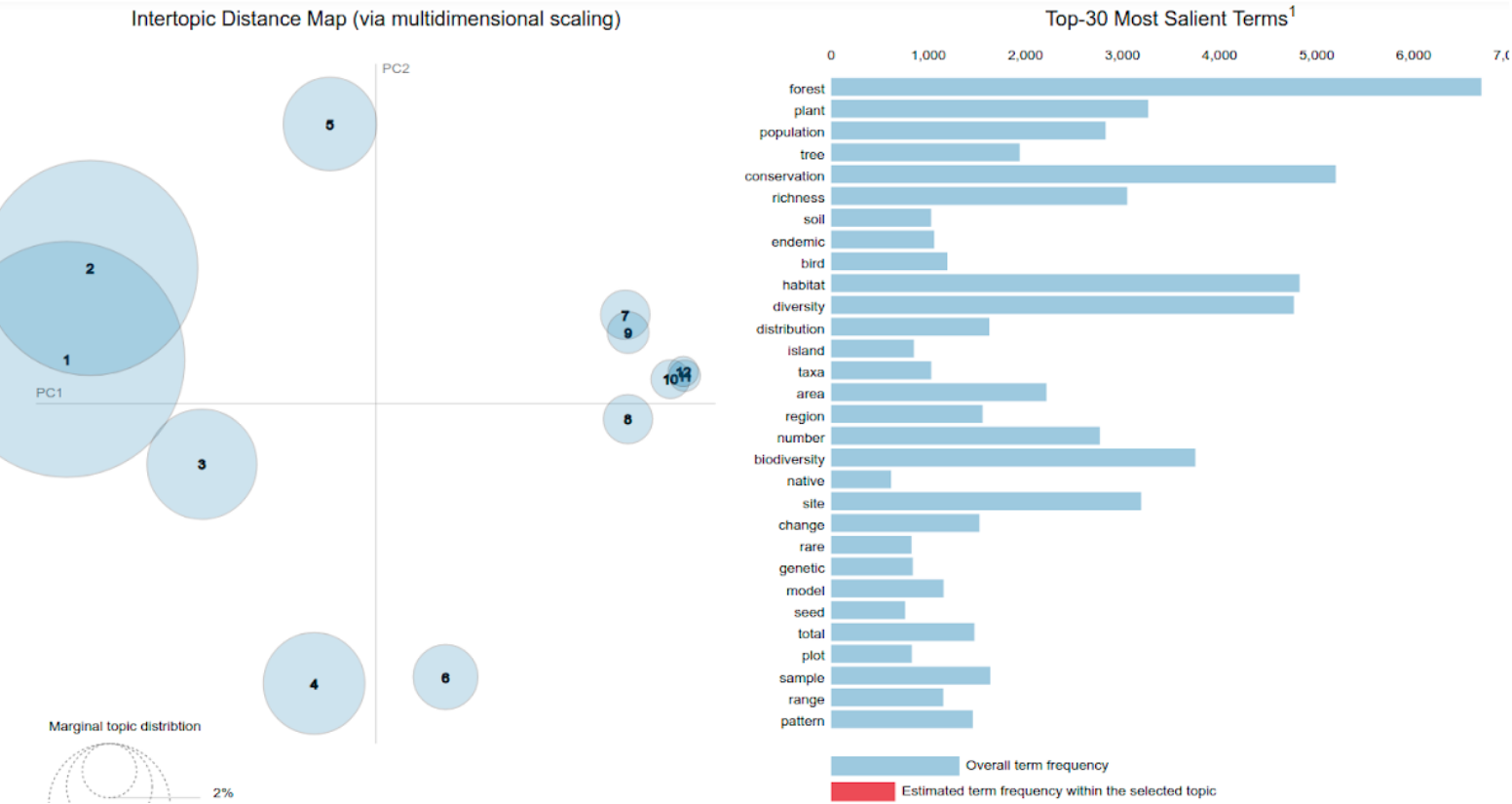

The NLP_Engine.py module creates topic modelling charts such as the one shown below.

Figure 5.1 Distribution of topics discussed in publications pulled from 8 conservation and ecology themed journals.

- Circles indicate topics generated from the `.txt` file supplied to the `NLP_Engine.py`, as part of the `Bias` pipeline.

- Each topic is made of a number of top keywords that are seen on the right, with an adjustable relevancy metric on top.

- More details regarding the visualizations and the underlying mechanics can be checked out here.
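The adjustable relevancy metric can be made concrete with a small sketch. It assumes the chart follows the LDAvis convention, in which a slider value `lam` between 0 and 1 blends a term's probability within a topic with its lift over the whole corpus. The plotting library is not named here, so treat both the formula and the function name as assumptions.

```python
import math


def relevance(p_word_given_topic, p_word, lam):
    """lam * log p(w|t) + (1 - lam) * log(p(w|t) / p(w))."""
    if not 0.0 <= lam <= 1.0:
        raise ValueError("lam must lie between 0 and 1")
    log_probability = math.log(p_word_given_topic)
    log_lift = math.log(p_word_given_topic / p_word)
    return lam * log_probability + (1.0 - lam) * log_lift
```

With `lam` at 1 the ranking is driven purely by how frequent a term is inside the topic, while lower values favour terms that are distinctive to that topic.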

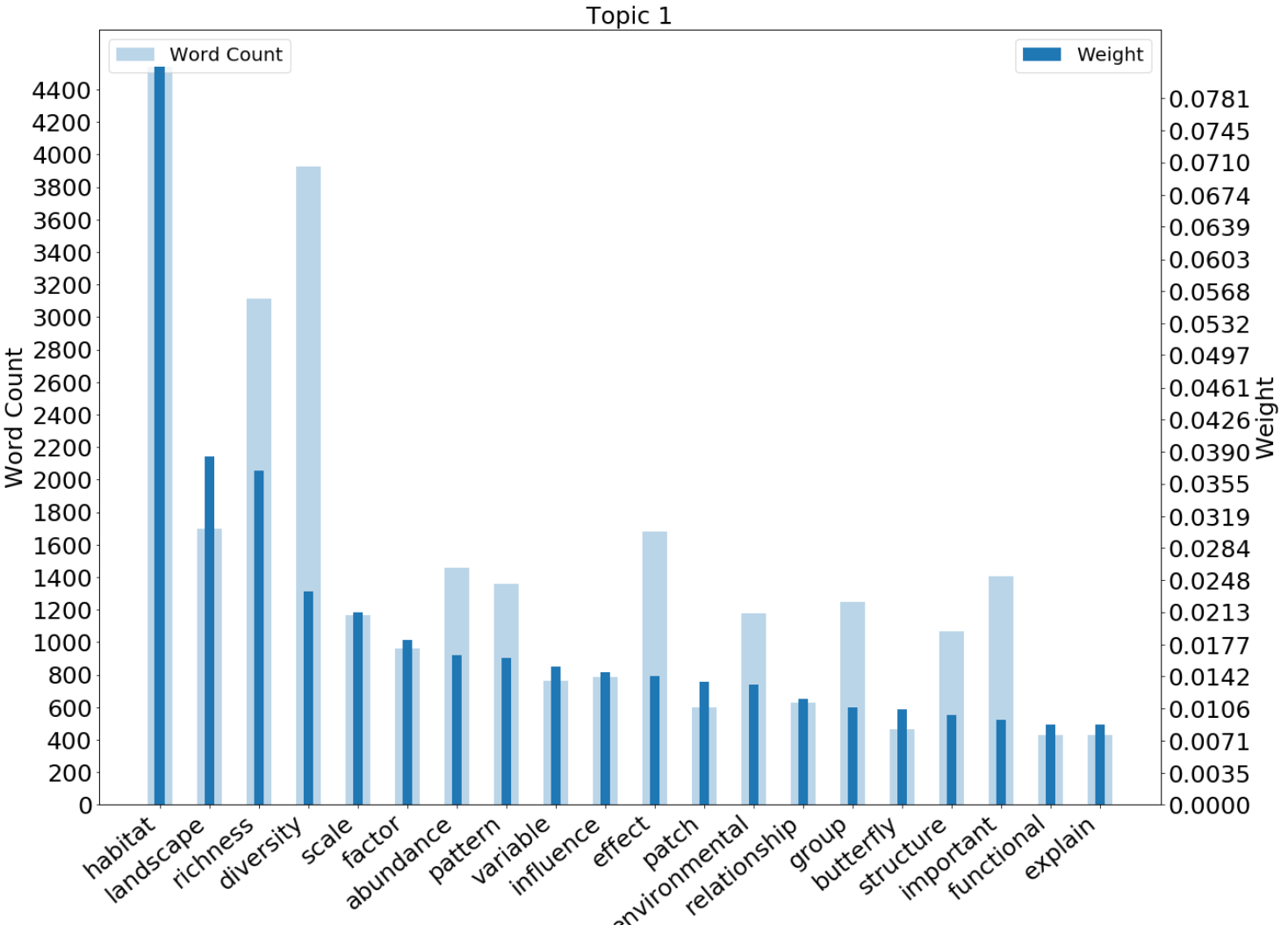

Figure 5.2 Here, we plot the variation in the weights and frequency of keywords falling under topic one from the chart above.

- Here, "weights" is a proxy for the importance of a specific keyword to a highlighted topic. The weight of a keyword is calculated from: i) its absolute frequency, and ii) its frequency of occurrence with other keywords in the same topic.

- Factors i) and ii) result in variable weights being assigned to different keywords and emphasize their importance in the topic.

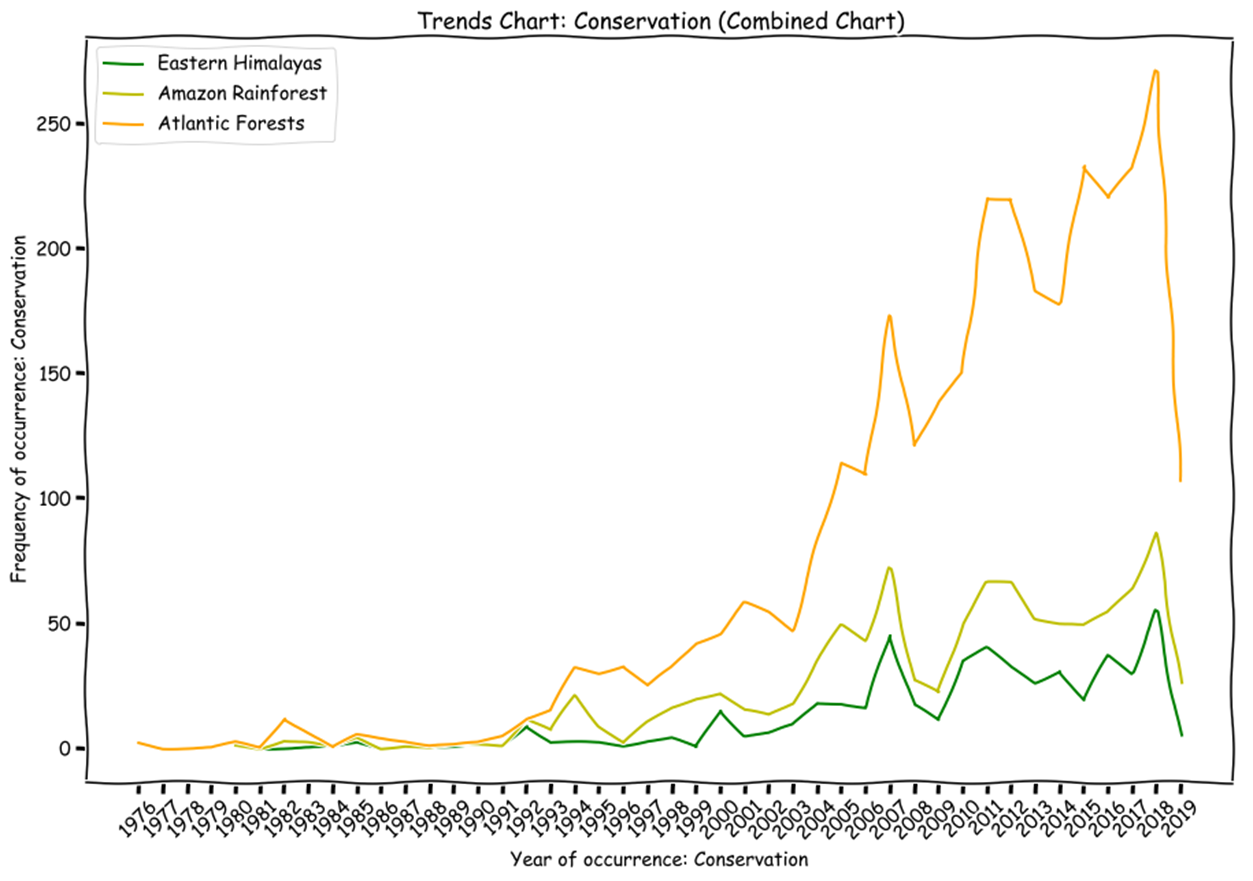

Figure 5.3 Variation in the frequency of the term "Conservation" over time in the corpus of text scraped.

- Here, abstracts pertaining to the Eastern Himalayas were scraped and the temporal trend in the occurrence of "Conservation" was checked.

- The frequency is presented alongside the bubble for each year on the chart.

- * We are still working on how to effectively present the trends and usage variations temporally. This feature is not part of the main package.

- [1] - Gabriela C. Nunez‐Mir, Basil V. Iannone III. Automated content analysis: addressing the big literature challenge in ecology and evolution. Methods in Ecology and Evolution. June 2016.

- [2] - David Blei, Andrew Y. Ng, Michael I. Jordan. Latent Dirichlet allocation. The Journal of Machine Learning Research. March 2003.