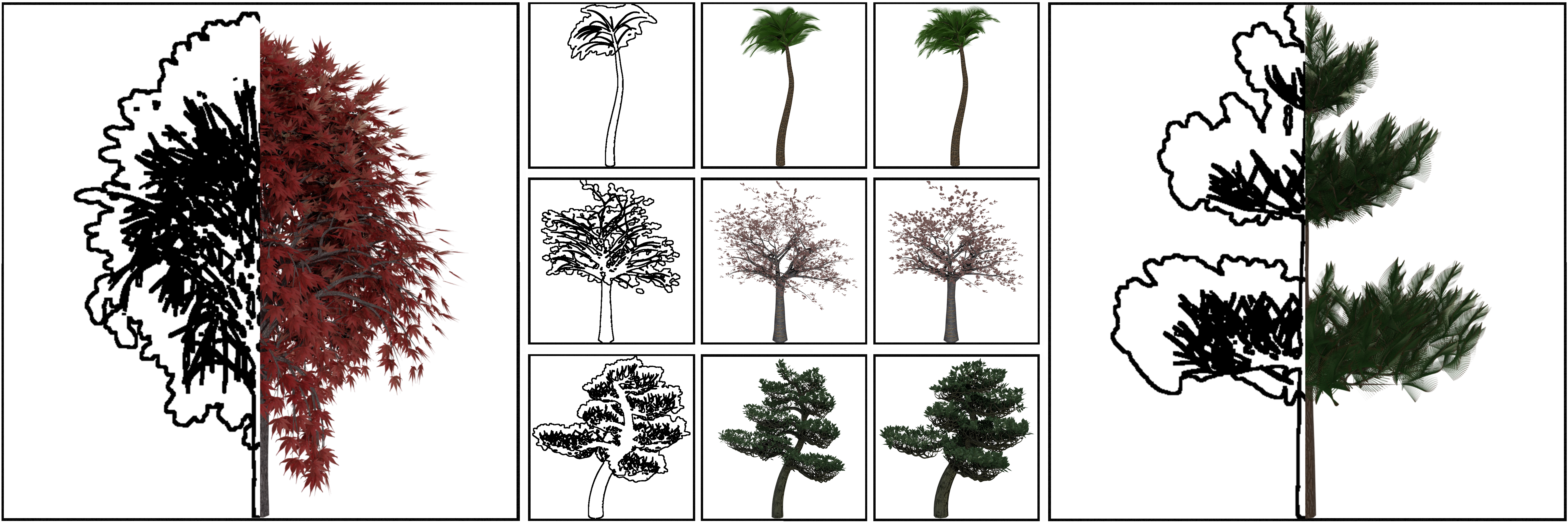

TreeSketchNet is an automated procedural modelling 1 system based on Deep convolutional Neural Network (DNN) which is described in our paper. This system allows to create 3D tree meshes by predicting the Weber and Penn2 parameters using simple hand drawn sketches as input of DNN. In particular this system can recognize and create 3D mesh of specific 5 trees such as Maple, Cherry, Pine, Bonsai, and Plam.

In this repository, we provide:

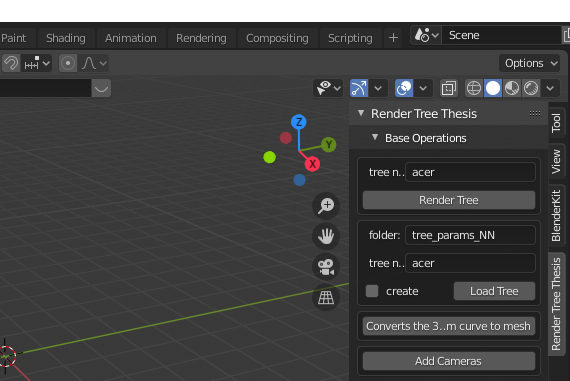

- Render Tree (RT) Blender add-on to generate synthetic hand-made (SHM) sketches defining Weber and Penn parameters

- Our training and validation datasets which cosist of SHM and parameters obtained through RT addon

- The code of our system from which you can choose one of the core model architectures that we tested for our purpose (AlexNet4, ResNet-505, VGG-166, Inception V37, and EfficientNet-B78)

- Our pretrained EfficientNet-B7 final architecture of which our weight are available

- Python 3.7.4

- Tensorflow 2.8.0, tensorflow-addons, Cuda 11.2

- Numpy

- Blender 2.82

For Blender installation click on this link.

For Tensorflow and Cuda installation follow this guide.

After installing tensorflow, you can install numpy and tensorflow-addons via the Python pip package manager, as follows:

$ pip install numpy tensorflow-addons

In NeuralNetwork folder you can find the code related to the neural network architecture.

-

You can get the dataset used in our work here. You need to unpack the .zip file and copy its content in the NeuralNetwork/train_validation_set folder.

You can create your own dataset using the Blender add-on Render Tree. -

To test our pre-trained network you can download the model form here, copy the entire folder in NeuralNetwork/logs_archive and execute the

test.pyfile. -

You can find all the network architectures tested in this paper in my_model.py file. To train one of these networks, in

train.pyyou need to set themodel_namevariable, choosing from the strings listed below:resnet50_multiple: ResNet50inception_multiple: InceptionNet V3vgg16_multiple_skip: VGG-16 with skip connectionsalexnet_multiple: AlexNetcoatnet_multiple: CoAtNetefficientnet_multiple: EfficientNet-B7

-

You can also change the directory in which to save the trained model by editing the

log_dirvariable in thetrain.pyfile. Remember that if you need to test your trained network you have to change the same variable in thetest.pyfile! -

You can early stop the training from terminal with

Ctrl + C. The procedure will save the model with best weights. -

To continue the training of an early stopped model, in the

train.pyfile you must- change the

load_dirvariable with the directory where the saved model is located; - set the

load_modelvariable to True.

Remember that the saved model architecture must be the same as that indicated by the

model_namevariable. - change the

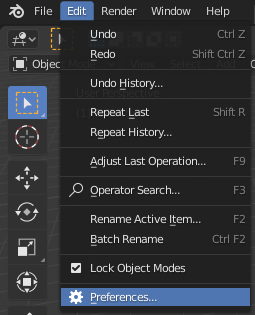

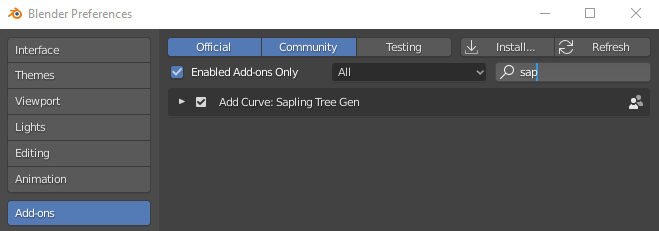

For the installation of the Render Tree add-on you need to follow these steps:

- In the installation folder of Blender 2.82 (for example

C:\Program Files\Blender Foundation\Blender 2.82\), openaddonsfolder following this pathBlender 2.82\2.82\scripts\addons\. Download the add_curve_sapling.zip file, unzip it and paste theadd_curve_saplingfolder in theaddonsdirectory replacing the existing files. - Enable the Sapling Tree Gen add-on as shown in the following images

- Download addon_render_tree.zip file.

- In

Blender Preferences -> Add-onsclick onInstall...and selected the .zip file downloaded in point 2. After the installation enable the Render Tree add-on by checking the box to the left of its name.

- After the installation, you can find the add-on in the right panel of the 3D Viewport.

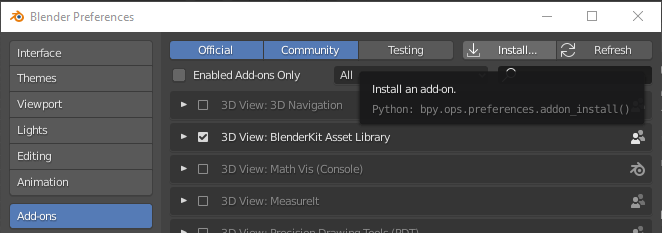

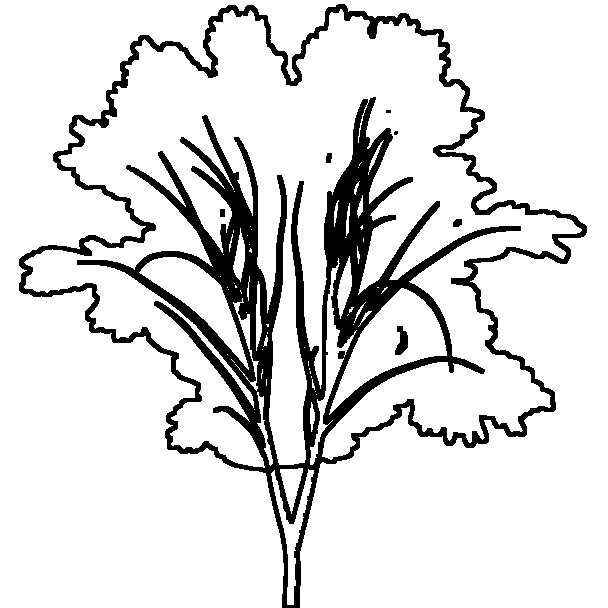

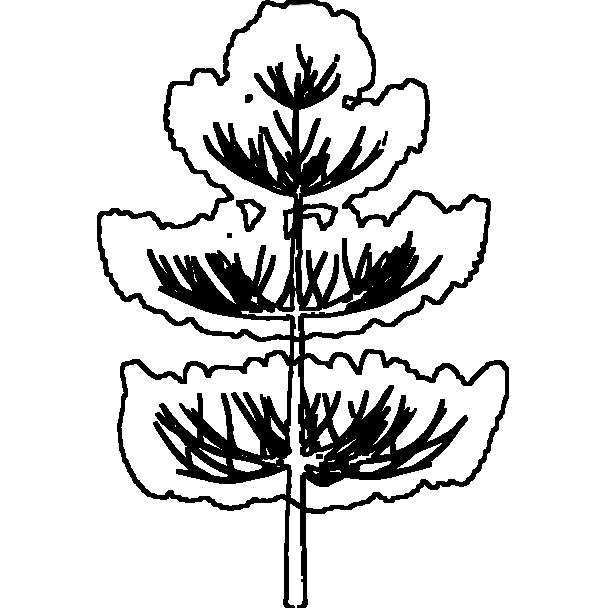

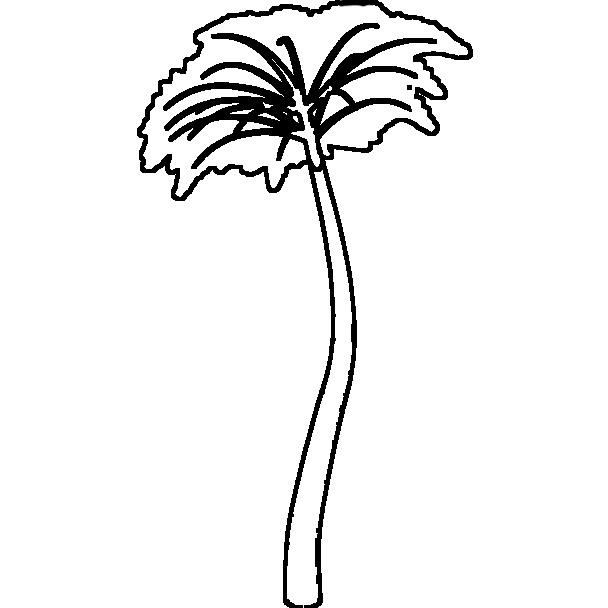

Here we report some examples of sketches. To drawn skecth you can use an image manipulation program such as Adobe Photoshop or GIMP. In particular stroke thinkess has to be 5px and brush hardness is 100% for both Photoshop and GIMP with dark as color. Alternatively you can use a light pen to draw the sketch. The input of our DNN must be in PNG format. The foliage shape must be as closed as possible and the sketch background has to be white.

Please be inspired by the examples provided if you want to get a good result. Try to draw the branches as lines and the secondary ones as lines starting from the primary ones, etc. Remember that only the 5 types of trees shown in the following images are recognized by our system

|

|

|

|

|

|---|---|---|---|---|

| Maple | Pine | Palm | Cherry | Bonsai |

Copyright (c) 2022, Gilda Manfredi, Nicola Capece, and Ugo Erra. All right reserved.

The code is distributed under a BSD license. See LICENSE for information.

@article{10.1145/3579831,

author = {Manfredi, Gilda and Capece, Nicola and Erra, Ugo and Gruosso, Monica},

title = {TreeSketchNet: From Sketch to 3D Tree Parameters Generation},

year = {2023},

publisher = {Association for Computing Machinery},

address = {New York, NY, USA},

issn = {2157-6904},

url = {https://doi.org/10.1145/3579831},

doi = {10.1145/3579831},

journal = {ACM Trans. Intell. Syst. Technol.},

month = {jan},

keywords = {3D trees, neural networks, deep learning, 3D generation, datasets, procedural modeling}

}

- Texturing & modeling: a procedural approach. Ebert, David S and Musgrave, F Kenton and Peachey, Darwyn and Perlin, Ken and Worley, Steven. Morgan Kaufmann. link

- Creation and Rendering of Realistic Trees. Jason Weber and Joseph Penn. 1995. InProceedings of the 22nd Annual Conference on Computer Graphics and InteractiveTechniques (SIGGRAPH ’95). Association for Computing Machinery, New York, NY,USA, 119–128. link

- PlantCV v2: Image analysis software for high-throughput plant phenotyping. Gehan, Malia A and Fahlgren, Noah and Abbasi, Arash and Berry, Jeffrey C and Callen, Steven T and Chavez, Leonardo and Doust, Andrew N and Feldman, Max J and Gilbert, Kerrigan B and Hodge, John G and others. PeerJ Inc. link

- Imagenet classification with deep convolutional neural networks. Krizhevsky, Alex and Sutskever, Ilya and Hinton, Geoffrey E. Advances in neural information processing systems. link

- Deep Residual Learning for Image Recognition. K. He and X. Zhang and S. Ren and J. Sun. 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR) link

- Very Deep Convolutional Networks for Large-Scale Image Recognition. Karen Simonyan and Andrew Zisserman. International Conference on Learning Representations. link

- Rethinking the inception architecture for computer vision. Szegedy, Christian and Vanhoucke, Vincent and Ioffe, Sergey and Shlens, Jon and Wojna, Zbigniew. Proceedings of the IEEE conference on computer vision and pattern recognition. link

- EfficientNet: Rethinking Model Scaling for Convolutional Neural Networks. Mingxing Tan, and Quoc Le. Proceedings of the 36th International Conference on Machine Learning, PMLR 97:6105-6114, 2019. link