Bin Xia, Shiyin Wang, Yingfan Tao, Yitong Wang, and Jiaya Jia

New Version (Accepted by ECCV2024):

- [2024.07.04] I am organizing and uploading the new version of the LLMGA code, dataset, and models, and will post a status update once this is complete; please allow a few days. Notably, in this new version we build LLMGA on different base LLMs, including Llama2 7b, Mistral 7b, Llama3 8b, Qwen2 0.5b, Qwen2 1.5b, Qwen2 7b, Phi3 3b, and Gemma 2b. They differ in performance, model size, and commercial license terms, so there should be one that fits your usage scenario.

Old Version:

- [2023.12.20] 🔥 We release LLMGA's training datasets.

- [2023.12.20] We release the Gradio code of LLMGA7b-SDXL-T2I.

- [2023.12.08] 🔥 We release LLMGA7b-SDXL-T2I demo.

- [2023.11.30] We have released the code for DiffRIR. It can effectively eliminate differences in brightness, contrast, and texture between generated and preserved regions in inpainting and outpainting. Considering its applicability to projects beyond LLMGA, we have open-sourced it on GitHub.

- [2023.11.29] 🔥 The models are released on Hugging Face.

- [2023.11.29] 🔥 The training and inference code is released.

- [2023.11.29] We will upload all models, code, and data within a week and further refine this project.

- [2023.11.28] 🔥 GitHub repo is created.

Abstract: In this paper, we introduce a Multimodal Large Language Model-based Generation Assistant (LLMGA), leveraging the vast reservoir of knowledge and proficiency in reasoning, comprehension, and response inherent in Large Language Models (LLMs) to assist users in image generation and editing. Diverging from existing approaches where Multimodal Large Language Models (MLLMs) generate fixed-size embeddings to control Stable Diffusion (SD), our LLMGA provides a detailed language generation prompt for precise control over SD. This not only augments LLM context understanding but also reduces noise in generation prompts, yields images with more intricate and precise content, and elevates the interpretability of the network. To this end, we curate a comprehensive dataset comprising prompt refinement, similar image generation, inpainting & outpainting, and visual question answering. Moreover, we propose a two-stage training scheme. In the first stage, we train the MLLM to grasp the properties of image generation and editing, enabling it to generate detailed prompts. In the second stage, we optimize SD to align with the MLLM's generation prompts. Additionally, we propose a reference-based restoration network to alleviate texture, brightness, and contrast disparities between generated and preserved regions during image editing. Extensive results show that LLMGA has promising generative capabilities and can enable wider applications in an interactive manner.

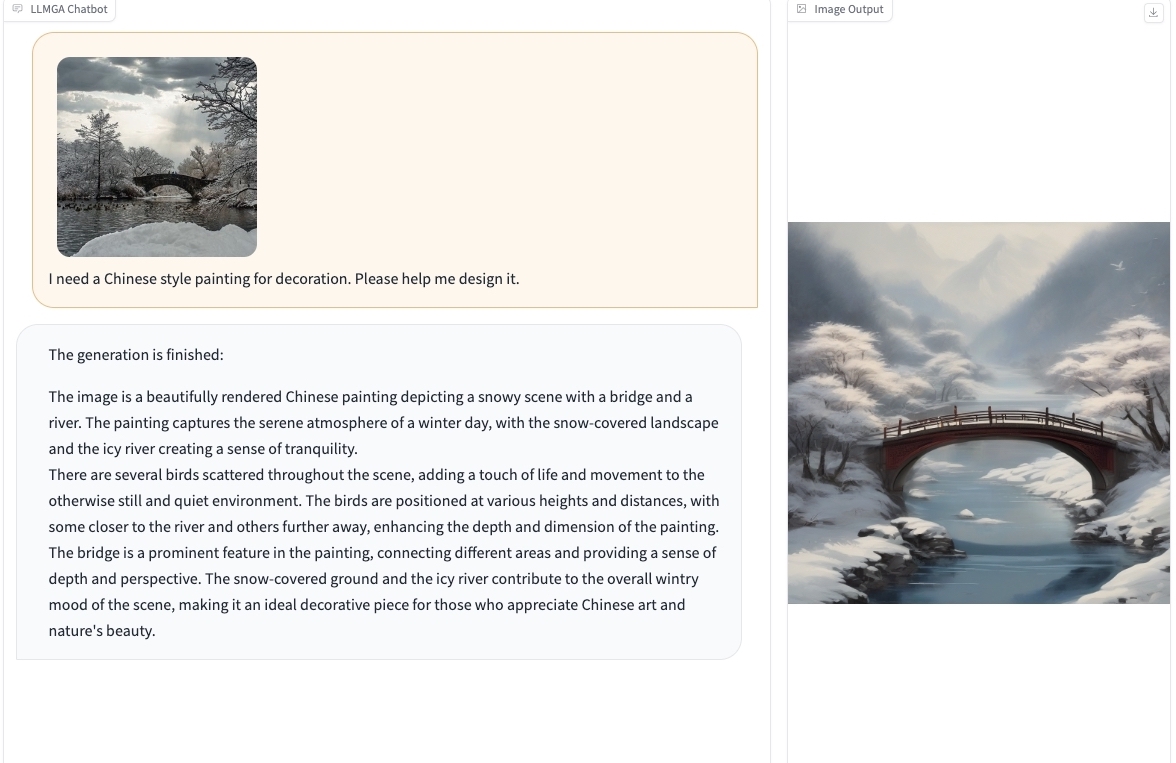

We provide some selected examples in this section. More examples can be found on our project page. Feel free to try our online demo!

Please follow the instructions below to install the required packages.

- Clone this repository

git clone https://github.com/dvlab-research/LLMGA.git

- Install Package

conda create -n llmga python=3.9 -y

conda activate llmga

cd LLMGA

pip install --upgrade pip # enable PEP 660 support

pip install -e .

cd ./llmga/diffusers

pip install .

- Install additional packages for training cases

pip install datasets

pip install albumentations

pip install ninja

pip install flash-attn --no-build-isolation

We provide the processed image-based data for LLMGA training. The data is organized in the LLaVA format; please organize your image-based training data the same way.
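As an illustration of the LLaVA-style layout mentioned above, the following is a minimal sketch of a record and a sanity check for it. It assumes the usual LLaVA convention: a JSON list of records, each with an `id`, an optional `image` path, and a `conversations` list that alternates `human` and `gpt` turns. The helper name `check_llava_record`, the example path, and the example text are hypothetical, and the actual LLMGA files may carry additional fields.

```python
def check_llava_record(record):
    """Return a list of problems found in one LLaVA-style record (empty if none)."""
    problems = []
    # The image path is optional (text-only samples), but must be a string if present.
    if "image" in record and not isinstance(record["image"], str):
        problems.append("'image' must be a string path")
    conversations = record.get("conversations")
    if not isinstance(conversations, list) or not conversations:
        problems.append("missing or empty 'conversations'")
        return problems
    for i, turn in enumerate(conversations):
        if not isinstance(turn, dict) or "from" not in turn or "value" not in turn:
            problems.append(f"turn {i} lacks 'from' or 'value'")
            continue
        # Assumed convention: turns alternate, starting with the human.
        expected = "human" if i % 2 == 0 else "gpt"
        if turn["from"] != expected:
            problems.append(f"turn {i} speaker is {turn['from']!r}, expected {expected!r}")
    return problems


# Hypothetical record, for illustration only.
example = {
    "id": "000000000001",
    "image": "COCO/train2017/000000000001.jpg",
    "conversations": [
        {"from": "human", "value": "<image>\nDescribe this image as a generation prompt."},
        {"from": "gpt", "value": "A detailed description of the scene."},
    ],
}

print(check_llava_record(example))  # prints []
```

Running a check like this over each record of an annotation file before training can catch malformed entries early.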

Please download the LAION-Aesthetic dataset, COCO2017 dataset, and LLMGA dataset and organize them as in Structure.

We recommend downloading the pretrained MLLM-7b or MLLM-13b weights, which use a training scheme similar to LLaVA. Then put them in checkpoints/Training following Structure.

| MLLM pretrained Model | Pretrained Models |
|---|---|
| MLLM7b | Download |
| MLLM13b | Download |

Please download MLLM Models and SD models from the following links. For example, you can download LLMGA-MLLM7b and LLMGA-SDXL-T2I to realize LLMGA7b-T2I functionality. Please organize them as in Structure.

| MLLM Model | Pretrained Models |
|---|---|
| LLMGA-MLLM7b | Download |
| LLMGA-MLLM13b | Download |

| SD Model | Pretrained Models |
|---|---|
| LLMGA-SD15-T2I | Download |
| LLMGA-SD15-Inpainting | Download |
| LLMGA-SDXL-T2I | Download |
| LLMGA-SDXL-Inpainting | Download |

The folder structure should be organized as follows before training.

LLMGA
├── llmga
├── scripts
├── work_dirs
├── checkpoints
│   ├── Training
│   │   ├── llmga-llama-2-7b-pretrain
│   │   ├── llmga-llama-2-13b-pretrain
│   ├── Inference
│   │   ├── llmga-llama-2-7b-chat-full-finetune
│   │   ├── llmga-llama-2-13b-chat-full-finetune
│   │   ├── llmga-sdxl-t2i
│   │   ├── llmga-sdxl-inpainting
│   │   ├── llmga-sd15-t2i
│   │   ├── llmga-sd15-inpainting
├── data
│   ├── LLMGA-dataset
│   ├── LAION-Aesthetic
│   ├── COCO
│   │   ├── train2017
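Before launching training, the layout above can be checked with a short script. The following is a minimal sketch and not part of the LLMGA code base; the helper name `missing_dirs` and the particular subset of directories checked are assumptions drawn from the tree above (here the 7b and SDXL-T2I setup), so adjust the list to the models you actually use.

```python
from pathlib import Path

# Directories taken from the folder structure above (assumed subset for a 7b + SDXL-T2I setup).
EXPECTED_DIRS = [
    "checkpoints/Training/llmga-llama-2-7b-pretrain",
    "checkpoints/Inference/llmga-llama-2-7b-chat-full-finetune",
    "checkpoints/Inference/llmga-sdxl-t2i",
    "data/LLMGA-dataset",
    "data/LAION-Aesthetic",
    "data/COCO/train2017",
]


def missing_dirs(root, expected=EXPECTED_DIRS):
    """Return the expected sub-directories that do not exist under root."""
    root = Path(root)
    return [rel for rel in expected if not (root / rel).is_dir()]


if __name__ == "__main__":
    # Run from the repository root (the LLMGA folder).
    for rel in missing_dirs("."):
        print(f"missing: {rel}")
```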

LLMGA is trained on 8 A100 GPUs with 80GB memory each. To train on fewer GPUs, reduce per_device_train_batch_size and increase gradient_accumulation_steps accordingly, so that the global batch size, per_device_train_batch_size x gradient_accumulation_steps x num_gpus, stays the same.
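The batch-size rule can be worked through with a small helper. This is a minimal sketch: the function name `accumulation_steps` is hypothetical, and the reference values (16 samples per device, 1 accumulation step, 8 GPUs) are assumed for illustration only, so read the real values from the training script you use.

```python
def accumulation_steps(global_batch_size, per_device_batch_size, num_gpus):
    """Return the gradient_accumulation_steps that keeps the global batch size fixed."""
    samples_per_step = per_device_batch_size * num_gpus
    if global_batch_size % samples_per_step != 0:
        raise ValueError(
            f"global batch size {global_batch_size} is not divisible by "
            f"{per_device_batch_size} x {num_gpus}"
        )
    return global_batch_size // samples_per_step


# Assumed reference setup: 16 per device x 1 accumulation step x 8 GPUs = 128.
reference_global_batch = 16 * 1 * 8

# Same per-device batch on 4 GPUs: accumulate over 2 steps.
print(accumulation_steps(reference_global_batch, 16, 4))  # prints 2

# Smaller per-device batch on 2 GPUs: accumulate over 8 steps.
print(accumulation_steps(reference_global_batch, 8, 2))  # prints 8
```

The helper raises an error when the target global batch size cannot be reached exactly, which is a prompt to pick a different per-device batch size.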

Please make sure you download and organize the data following Preparation before training.

Train LLMGA based on LLaMA2-7b:

bash train_LLMGA_7b_S1.sh

Or train LLMGA based on LLaMA2-13b:

bash train_LLMGA_13b_S1.sh

Train LLMGA based on SD1.5-T2I:

bash train_LLMGA_SD15_S2.sh

Train LLMGA based on SD1.5-Inpainting:

bash train_LLMGA_SD15_S2_inpaint.sh

Train LLMGA based on SDXL-T2I:

bash train_LLMGA_SDXL_S2.sh

Train LLMGA based on SDXL-Inpainting:

bash train_LLMGA_SDXL_S2_inpaint.sh

Use LLMGA without the need for the Gradio interface. It also supports multiple GPUs and 4-bit and 8-bit quantized inference. Please try the following for inference:

Test LLMGA7b-SDXL for T2I, starting with an image input. You can ask LLMGA to assist in T2I generation around your input image.

python3 -m llmga.serve.cli-sdxl \
--model-path binxia/llmga-llama-2-7b-chat-full-finetune \
--sdmodel_id binxia/llmga-sdxl-t2i \
--save_path ./res/t2i/llmga7b-sdxl \
--image-file /PATHtoIMG

Test LLMGA7b-SDXL for inpainting, starting with an image input. You can ask LLMGA to assist in inpainting or outpainting around your input image.

python3 -m llmga.serve.cli-sdxl-inpainting \
--model-path binxia/llmga-llama-2-7b-chat-full-finetune \
--sdmodel_id binxia/llmga-sdxl-inpainting \
--save_path ./res/inpainting/llmga7b-sdxl \
--image-file /PATHtoIMG \
--mask-file /PATHtomask

Test LLMGA7b-SDXL for T2I generation without an image input. You can ask LLMGA to assist in T2I generation through chat alone.

python3 -m llmga.serve.cli2-sdxl \
--model-path binxia/llmga-llama-2-7b-chat-full-finetune \
--sdmodel_id binxia/llmga-sdxl-t2i \
--save_path ./res/t2i/llmga7b-sdxl

To launch the Gradio web server:

python3 llmga.serve.gradio_web_server.py \
--model-path binxia/llmga-llama-2-7b-chat-full-finetune \
--sdmodel_id binxia/llmga-sdxl-inpainting \
--load-4bit \
--model-list-mode reload \
--port 8334

- Support Gradio demo.

If you find this repo useful for your research, please consider citing the paper

@article{xia2023llmga,
  title={LLMGA: Multimodal Large Language Model based Generation Assistant},
  author={Xia, Bin and Wang, Shiyin and Tao, Yingfan and Wang, Yitong and Jia, Jiaya},
  journal={arXiv preprint arXiv:2311.16500},
  year={2023}
}

We would like to thank the following repos for their great work: