LSD is an open source perception architecture for autonomous vehicles and robotics.

LSD currently supports many features:

- Support multiple LiDAR, camera, radar and INS/IMU sensors.

- Support user-friendly calibration for LiDAR, camera, etc.

- Support software time sync, data recording and playback.

- Support voxel 3D-CNN based point cloud object detection, tracking and prediction.

- Support GICP, FLOAM and FastLIO based frontend odometry and G2O based pose graph optimization.

- Support a web-based interactive map correction tool (editor).

- Support communication with ROS.

- Support traffic light detection.

- Support GIOU-based, two-stage association for object tracking.

- Support voxel 3D-CNN based freespace detection.

- Support Transformer based motion prediction.

[2023-10-08] Better 3DMOT (GIOU, Two-stage association).

| Performance (WOD val) | AMOTA ↑ | AMOTP ↓ | IDs(%) ↓ |
|---|---|---|---|
| AB3DMOT | 47.84 | 0.2584 | 0.67 |
| GIOU + Two-stage | 54.79 | 0.2492 | 0.19 |
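For readers unfamiliar with the two terms in the table, the sketch below illustrates GIoU and one common reading of "two-stage association" in plain Python. It is not taken from the LSD source. Boxes are simplified to axis-aligned 2D rectangles with positive area, matching is greedy rather than optimal, and the "two stages" are assumed to mean matching high-score detections first and low-score detections second. All names and thresholds (`giou`, `greedy_match`, `two_stage_associate`, `high_score`, `min_giou`) are hypothetical.

```python
from typing import Dict, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2), axis-aligned, positive area


def giou(a: Box, b: Box) -> float:
    """Generalized IoU in (-1, 1]: IoU minus the unused share of the enclosing box."""
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = area_a + area_b - inter
    # Smallest axis-aligned box enclosing both inputs.
    hull = (max(a[2], b[2]) - min(a[0], b[0])) * (max(a[3], b[3]) - min(a[1], b[1]))
    return inter / union - (hull - union) / hull


def greedy_match(tracks: Dict[int, Box], dets: Dict[int, Box],
                 min_giou: float) -> Dict[int, int]:
    """Pair tracks with detections greedily, highest GIoU first."""
    pairs = sorted(
        ((giou(t, d), tid, did) for tid, t in tracks.items() for did, d in dets.items()),
        reverse=True,
    )
    matches: Dict[int, int] = {}
    used = set()
    for score, tid, did in pairs:
        if score < min_giou:
            break  # all remaining pairs are worse
        if tid not in matches and did not in used:
            matches[tid] = did
            used.add(did)
    return matches


def two_stage_associate(tracks: Dict[int, Box], dets: Dict[int, Box],
                        scores: Dict[int, float], high_score: float = 0.5,
                        min_giou: float = -0.2) -> Dict[int, int]:
    """Stage 1: confident detections. Stage 2: leftover tracks vs. weak detections."""
    high = {i: b for i, b in dets.items() if scores[i] >= high_score}
    low = {i: b for i, b in dets.items() if scores[i] < high_score}
    matches = greedy_match(tracks, high, min_giou)
    remaining = {tid: b for tid, b in tracks.items() if tid not in matches}
    matches.update(greedy_match(remaining, low, min_giou))
    return matches
```

Unlike plain IoU, GIoU stays informative for non-overlapping boxes (it becomes negative and approaches -1 as they move apart), which is why a negative `min_giou` can still associate a track with a nearby detection that does not overlap it.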

[2023-07-06] A new detection model (CenterPoint-VoxelNet) is supported and runs in real time (30+ FPS).

| Performance (WOD val) | Vec_L1 | Vec_L2 | Ped_L1 | Ped_L2 | Cyc_L1 | Cyc_L2 |
|---|---|---|---|---|---|---|
| PointPillar | 73.71/73.12 | 65.71/65.17 | 71.70/60.90 | 63.52/53.78 | 65.30/63.77 | 63.12/61.64 |
| CenterPoint-VoxelNet (1 frame) | 74.75/74.24 | 66.09/65.63 | 77.66/71.54 | 68.57/63.02 | 72.03/70.93 | 69.63/68.57 |
| CenterPoint-VoxelNet (4 frame) | 77.55/77.03 | 69.65/69.17 | 80.72/77.80 | 72.91/70.15 | 72.63/71.72 | 70.55/69.67 |

Note: CenterPoint-VoxelNet is built on libspconv and requires a GPU with SM80+ (compute capability 8.0 or higher).
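To check the SM80+ requirement before building, something like the following sketch may help. It parses a compute capability string such as `8.6` and compares it with 8.0. The helper names are hypothetical and not part of LSD. The optional query uses `nvidia-smi --query-gpu=compute_cap`, which is only available with reasonably recent NVIDIA drivers, so treat that part as an assumption about your environment.

```python
import subprocess
from typing import List, Tuple


def parse_compute_cap(text: str) -> Tuple[int, int]:
    """Turn a string like '8.6' into (8, 6)."""
    major, _, minor = text.strip().partition(".")
    return int(major), int(minor or 0)


def meets_sm_requirement(cap: str, required: Tuple[int, int] = (8, 0)) -> bool:
    """True if the compute capability is at least `required` (SM80 by default)."""
    return parse_compute_cap(cap) >= required


def query_gpu_compute_caps() -> List[str]:
    """Ask nvidia-smi for each GPU's compute capability; empty list if unavailable."""
    try:
        out = subprocess.run(
            ["nvidia-smi", "--query-gpu=compute_cap", "--format=csv,noheader"],
            capture_output=True, text=True, check=True,
        ).stdout
    except (OSError, subprocess.CalledProcessError):
        return []  # no driver, no GPU, or a driver too old for this query
    return [line.strip() for line in out.splitlines() if line.strip()]


if __name__ == "__main__":
    for cap in query_gpu_compute_caps():
        print(cap, "OK" if meets_sm_requirement(cap) else "below SM80")
```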

[2023-06-01] The Web UI (JS code for preview, tviz and map editor) has been uploaded.

LSD runs on both x86 PCs (with a GPU, SM 80+) and NVIDIA Orin (older versions also run on Xavier NX/AGX).

Ubuntu20.04, Python3.8, Eigen 3.3.7, Ceres 1.14.0, Protobuf 3.8.0, NLOPT 2.4.2, G2O, OpenCV 4.5.5, PCL 1.9.1, GTSAM 4.0

nvidia-docker2 must be installed first (see Installation).

An x86_64 Docker image is provided for testing.

sudo docker pull 15liangwang/lsd-cuda # sudo docker pull 15liangwang/auto-ipu, if you don't have GPU

sudo docker run --gpus all -it -d --net=host --privileged --shm-size=4g --name="LSD" -v /media:/root/exchange 15liangwang/lsd-cuda

sudo docker exec -it LSD /bin/bash

Clone this repository and build the source code

cd /home/znqc/work/

git clone https://github.com/w111liang222/lidar-slam-detection.git

cd lidar-slam-detection/

unzip slam/data/ORBvoc.zip -d slam/data/

python setup.py install

bash sensor_inference/pytorch_model/export/generate_trt.sh

Run LSD

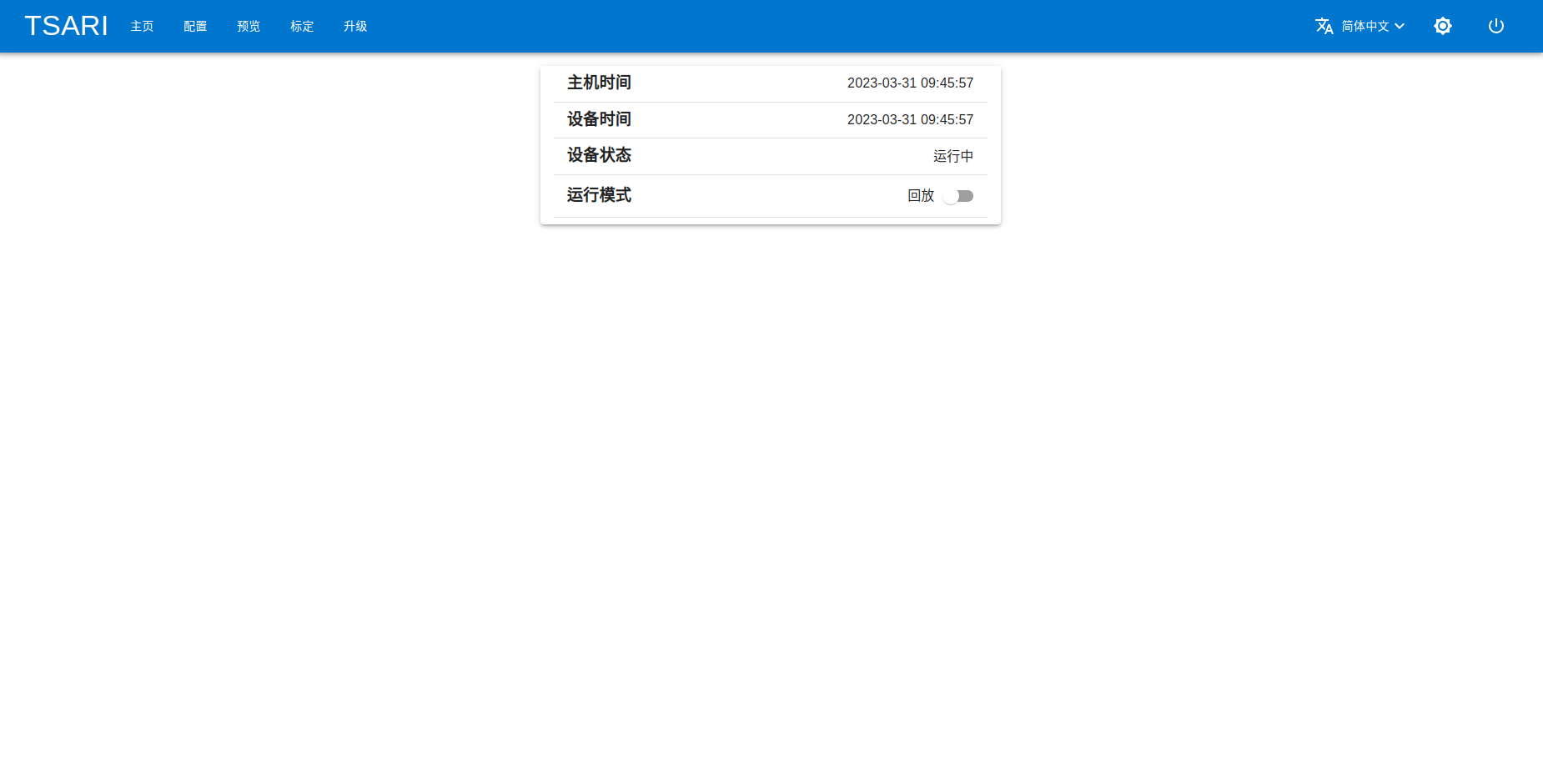

tools/scripts/start_system.shOpen http://localhost (or http://localhost:1234) in your browser, e.g. Chrome, and you can see this screen.

Download the demo data (Google Drive | Baidu Netdisk, password: sk5h) and unzip it

unzip demo_data.zip -d /home/znqc/work/

tools/scripts/start_system.sh # re-run LSD

More usage examples can be found here

LSD is NOT built on the Robot Operating System (ROS), but we provide some tools to bridge communication with ROS.

- rosbag proxy: a tool that sends ROS topic data to LSD.

- pickle to rosbag: a tool that converts the pickle files recorded by LSD to rosbag.
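Before converting a recording, it can be useful to look inside one. The sketch below loads a pickle file and summarizes its top-level structure. The layout of LSD's recorded files is not described here, so the code assumes nothing beyond "a pickled Python object"; the helper names and the file name in the usage line are hypothetical. Only unpickle files you trust, since `pickle.load` can execute arbitrary code.

```python
import pickle
from typing import Any, Dict


def summarize(obj: Any) -> Dict[str, str]:
    """Map each top-level key to the type name of its value."""
    if isinstance(obj, dict):
        return {str(k): type(v).__name__ for k, v in obj.items()}
    # Not a dict: report the type of the whole object instead.
    return {"<root>": type(obj).__name__}


def summarize_pickle(path: str) -> Dict[str, str]:
    """Load one pickle file and describe what it contains."""
    with open(path, "rb") as f:
        return summarize(pickle.load(f))
```

For example, `print(summarize_pickle("frame.pkl"))` on a recorded file would list the top-level fields and their types, which helps when deciding which of them to map to ROS topics.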

LSD is tested on Orin with JetPack 5.0.2 (see Installation).

LSD is released under the Apache 2.0 license.

In the development of LSD, we stand on the shoulders of the following repositories:

- lidar_align: A simple method for finding the extrinsic calibration between a 3D lidar and a 6-dof pose sensor.

- lidar_imu_calib: automatic calibration of 3D lidar and IMU extrinsics.

- OpenPCDet: OpenPCDet Toolbox for LiDAR-based 3D Object Detection.

- AB3DMOT: 3D Multi-Object Tracking: A Baseline and New Evaluation Metrics.

- FAST-LIO: A computationally efficient and robust LiDAR-inertial odometry package.

- R3LIVE: A Robust, Real-time, RGB-colored, LiDAR-Inertial-Visual tightly-coupled state Estimation and mapping package.

- FLOAM: Fast and Optimized Lidar Odometry And Mapping for indoor/outdoor localization.

- hdl_graph_slam: an open source ROS package for real-time 6DOF SLAM using a 3D LIDAR.

- hdl_localization: Real-time 3D localization using a (velodyne) 3D LIDAR.

- ORB_SLAM2: Real-Time SLAM for Monocular, Stereo and RGB-D Cameras, with Loop Detection and Relocalization Capabilities.

- scancontext: Global LiDAR descriptor for place recognition and long-term localization.

If you find this project useful in your research, please consider citing and starring this project:

@misc{LiDAR-SLAM-Detection,
  title={LiDAR SLAM & Detection: an open source perception architecture for autonomous vehicle and robotics},
  author={LiangWang},
  howpublished = {\url{https://github.com/w111liang222/lidar-slam-detection}},
  year={2023}
}

LiangWang 15lwang@alumni.tongji.edu.cn