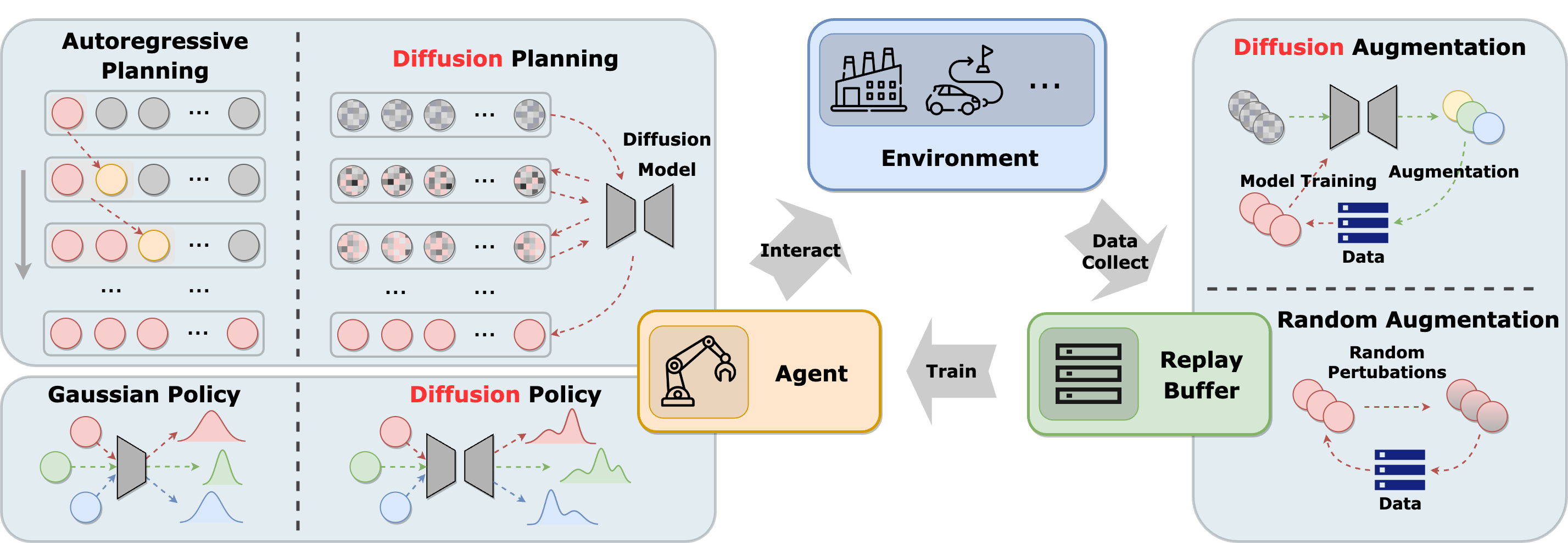

This repository contains a collection of resources and papers on Diffusion Models for RL.

🚀 Please check out our survey paper, Diffusion Models for Reinforcement Learning: A Survey.

-

Planning with Diffusion for Flexible Behavior Synthesis

Michael Janner, Yilun Du, Joshua B. Tenenbaum, Sergey Levine

ICML 2022

-

Diffusion Policies as an Expressive Policy Class for Offline Reinforcement Learning

Zhendong Wang, Jonathan J Hunt, Mingyuan Zhou

ICLR 2023

-

Offline Reinforcement Learning via High-fidelity Generative Behavior Modeling

Huayu Chen, Cheng Lu, Chengyang Ying, Hang Su, Jun Zhu

ICLR 2023

-

Is Conditional Generative Modeling all you need for Decision-Making?

Anurag Ajay, Yilun Du, Abhi Gupta, Joshua B. Tenenbaum, Tommi Jaakkola, Pulkit Agrawal

ICLR 2023

-

AdaptDiffuser: Diffusion Models as Adaptive Self-evolving Planners

Zhixuan Liang, Yao Mu, Mingyu Ding, Fei Ni, Masayoshi Tomizuka, Ping Luo

ICML 2023

-

MetaDiffuser: Diffusion Model as Conditional Planner for Offline Meta-RL

Fei Ni, Jianye Hao, Yao Mu, Yifu Yuan, Yan Zheng, Bin Wang, Zhixuan Liang

ICML 2023

-

Hierarchical Diffusion for Offline Decision Making

Wenhao Li, Xiangfeng Wang, Bo Jin, Hongyuan Zha

ICML 2023

-

Contrastive Energy Prediction for Exact Energy-guided Diffusion Sampling in Offline Reinforcement Learning

Cheng Lu, Huayu Chen, Jianfei Chen, Hang Su, Chongxuan Li, Jun Zhu

ICML 2023

-

Language Control Diffusion: Efficiently Scaling through Space, Time, and Tasks

Edwin Zhang, Yujie Lu, William Wang, Amy Zhang

arXiv 2023

-

IDQL: Implicit Q-Learning as an Actor-Critic Method with Diffusion Policies

Philippe Hansen-Estruch, Ilya Kostrikov, Michael Janner, Jakub Grudzien Kuba, Sergey Levine

arXiv 2023

-

Diffusion Model is an Effective Planner and Data Synthesizer for Multi-Task Reinforcement Learning

Haoran He, Chenjia Bai, Kang Xu, Zhuoran Yang, Weinan Zhang, Dong Wang, Bin Zhao, Xuelong Li

NeurIPS 2023

-

EDGI: Equivariant Diffusion for Planning with Embodied Agents

Johann Brehmer, Joey Bose, Pim de Haan, Taco Cohen

NeurIPS 2023

-

Extracting Reward Functions from Diffusion Models

Felipe Nuti, Tim Franzmeyer, João F. Henriques

NeurIPS 2023

-

Can Pre-Trained Text-to-Image Models Generate Visual Goals for Reinforcement Learning?

Jialu Gao, Kaizhe Hu, Guowei Xu, Huazhe Xu

NeurIPS 2023

-

Reward-Directed Conditional Diffusion: Provable Distribution Estimation and Reward Improvement

Hui Yuan, Kaixuan Huang, Chengzhuo Ni, Minshuo Chen, Mengdi Wang

NeurIPS 2023

-

Refining Diffusion Planner for Reliable Behavior Synthesis by Automatic Detection of Infeasible Plans

Kyowoon Lee, Seongun Kim, Jaesik Choi

NeurIPS 2023

-

SafeDiffuser: Safe Planning with Diffusion Probabilistic Models

Wei Xiao, Tsun-Hsuan Wang, Chuang Gan, Daniela Rus

arXiv 2023

-

Efficient Diffusion Policies for Offline Reinforcement Learning

Bingyi Kang, Xiao Ma, Chao Du, Tianyu Pang, Shuicheng Yan

arXiv 2023

-

MADiff: Offline Multi-agent Learning with Diffusion Models

Zhengbang Zhu, Minghuan Liu, Liyuan Mao, Bingyi Kang, Minkai Xu, Yong Yu, Stefano Ermon, Weinan Zhang

arXiv 2023

-

Beyond Conservatism: Diffusion Policies in Offline Multi-agent Reinforcement Learning

Zhuoran Li, Ling Pan, Longbo Huang

arXiv 2023

-

Fighting Uncertainty with Gradients: Offline Reinforcement Learning via Diffusion Score Matching

H.J. Terry Suh, Glen Chou, Hongkai Dai, Lujie Yang, Abhishek Gupta, Russ Tedrake

CoRL 2023

-

Value function estimation using conditional diffusion models for control

Bogdan Mazoure, Walter Talbott, Miguel Angel Bautista, Devon Hjelm, Alexander Toshev, Josh Susskind

arXiv 2023

-

Instructed Diffuser with Temporal Condition Guidance for Offline Reinforcement Learning

Jifeng Hu, Yanchao Sun, Sili Huang, SiYuan Guo, Hechang Chen, Li Shen, Lichao Sun, Yi Chang, Dacheng Tao

arXiv 2023

-

Diffusion Policies for Out-of-Distribution Generalization in Offline Reinforcement Learning

Suzan Ece Ada, Erhan Oztop, Emre Ugur

arXiv 2023

-

Diffusion Policies as Multi-Agent Reinforcement Learning Strategies

Jinkun Geng, Xiubo Liang, Hongzhi Wang, Yu Zhao

ICANN 2023

-

DiffCPS: Diffusion Model based Constrained Policy Search for Offline Reinforcement Learning

Longxiang He, Linrui Zhang, Junbo Tan, Xueqian Wang

arXiv 2023

-

Score Regularized Policy Optimization through Diffusion Behavior

Huayu Chen, Cheng Lu, Zhengyi Wang, Hang Su, Jun Zhu

ICLR 2024

-

Adaptive Online Replanning with Diffusion Models

Siyuan Zhou, Yilun Du, Shun Zhang, Mengdi Xu, Yikang Shen, Wei Xiao, Dit-Yan Yeung, Chuang Gan

arXiv 2023

-

AlignDiff: Aligning Diverse Human Preferences via Behavior-Customisable Diffusion Model

Zibin Dong, Yifu Yuan, Jianye Hao, Fei Ni, Yao Mu, Yan Zheng, Yujing Hu, Tangjie Lv, Changjie Fan, Zhipeng Hu

arXiv 2023

-

SkillDiffuser: Interpretable Hierarchical Planning via Skill Abstractions in Diffusion-Based Task Execution

Zhixuan Liang, Yao Mu, Hengbo Ma, Masayoshi Tomizuka, Mingyu Ding, Ping Luo

CVPR 2024

-

Learning a Diffusion Model Policy from Rewards via Q-Score Matching

Michael Psenka, Alejandro Escontrela, Pieter Abbeel, Yi Ma

arXiv 2023

-

Simple Hierarchical Planning with Diffusion

Chang Chen, Fei Deng, Kenji Kawaguchi, Caglar Gulcehre, Sungjin Ahn

ICLR 2024

-

Reasoning with Latent Diffusion in Offline Reinforcement Learning

Siddarth Venkatraman, Shivesh Khaitan, Ravi Tej Akella, John Dolan, Jeff Schneider, Glen Berseth

ICLR 2024

-

Efficient Planning with Latent Diffusion

Wenhao Li

ICLR 2024

-

Contrastive Diffuser: Planning Towards High Return States via Contrastive Learning

Yixiang Shan, Zhengbang Zhu, Ting Long, Qifan Liang, Yi Chang, Weinan Zhang, Liang Yin

arXiv 2024

-

DMBP: Diffusion model-based predictor for robust offline reinforcement learning against state observation perturbations

Zhihe Yang, Yunjian Xu

ICLR 2024

-

Entropy-regularized Diffusion Policy with Q-Ensembles for Offline Reinforcement Learning

Ruoqi Zhang, Ziwei Luo, Jens Sjölund, Thomas B. Schön, Per Mattsson

arXiv 2024

-

Diffusion World Model

Zihan Ding, Amy Zhang, Yuandong Tian, Qinqing Zheng

arXiv 2024

-

Diffusion World Models

Eloi Alonso, Adam Jelley, Anssi Kanervisto, Tim Pearce

OpenReview 2024

-

Policy-Guided Diffusion

Matthew Thomas Jackson, Michael Tryfan Matthews, Cong Lu, Benjamin Ellis, Shimon Whiteson, Jakob Foerster

arXiv 2024

-

Policy Representation via Diffusion Probability Model for Reinforcement Learning

Long Yang, Zhixiong Huang, Fenghao Lei, Yucun Zhong, Yiming Yang, Cong Fang, Shiting Wen, Binbin Zhou, Zhouchen Lin

arXiv 2023

-

Boosting Continuous Control with Consistency Policy

Yuhui Chen, Haoran Li, Dongbin Zhao

arXiv 2023

-

Diffusion Reward: Learning Rewards via Conditional Video Diffusion

Tao Huang, Guangqi Jiang, Yanjie Ze, Huazhe Xu

arXiv 2023

-

ATraDiff: Accelerating Online Reinforcement Learning with Imaginary Trajectories

Qianlan Yang, Yu-Xiong Wang

OpenReview 2024

-

Imitating Human Behaviour with Diffusion Models

Tim Pearce, Tabish Rashid, Anssi Kanervisto, Dave Bignell, Mingfei Sun, Raluca Georgescu, Sergio Valcarcel Macua, Shan Zheng Tan, Ida Momennejad, Katja Hofmann, Sam Devlin

ICLR 2023

-

Diffusion Policy: Visuomotor Policy Learning via Action Diffusion

Cheng Chi, Siyuan Feng, Yilun Du, Zhenjia Xu, Eric Cousineau, Benjamin Burchfiel, Shuran Song

RSS 2023

-

Goal-Conditioned Imitation Learning using Score-based Diffusion Policies

Moritz Reuss, Maximilian Li, Xiaogang Jia, Rudolf Lioutikov

RSS 2023

-

To the Noise and Back: Diffusion for Shared Autonomy

Takuma Yoneda, Luzhe Sun, Ge Yang, Bradly Stadie, Matthew Walter

RSS 2023

-

DALL-E-Bot: Introducing Web-Scale Diffusion Models to Robotics

Ivan Kapelyukh, Vitalis Vosylius, Edward Johns

RAL 2023

-

Scaling Up and Distilling Down: Language-Guided Robot Skill Acquisition

Huy Ha, Pete Florence, Shuran Song

CoRL 2023

-

XSkill: Cross Embodiment Skill Discovery

Mengda Xu, Zhenjia Xu, Cheng Chi, Manuela Veloso, Shuran Song

CoRL 2023

-

ChainedDiffuser: Unifying Trajectory Diffusion and Keypose Prediction for Robotic Manipulation

Zhou Xian, Nikolaos Gkanatsios, Theophile Gervet, Tsung-Wei Ke, Katerina Fragkiadaki

CoRL 2023

-

PlayFusion: Skill Acquisition via Diffusion from Language-Annotated Play

Lili Chen, Shikhar Bahl, Deepak Pathak

CoRL 2023

-

Generative Skill Chaining: Long-Horizon Skill Planning with Diffusion Models

Utkarsh A. Mishra, Shangjie Xue, Yongxin Chen, Danfei Xu

CoRL 2023

-

Multimodal Diffusion Transformer for Learning from Play

Moritz Reuss, Rudolf Lioutikov

CoRL 2023

-

GNFactor: Multi-Task Real Robot Learning with Generalizable Neural Feature Fields

Yanjie Ze, Ge Yan, Yueh-Hua Wu, Annabella Macaluso, Yuying Ge, Jianglong Ye, Nicklas Hansen, Li Erran Li, Xiaolong Wang

CoRL 2023

-

Crossway Diffusion: Improving Diffusion-based Visuomotor Policy via Self-supervised Learning

Xiang Li, Varun Belagali, Jinghuan Shang, Michael S. Ryoo

arXiv 2023

-

Diffusion Co-Policy for Synergistic Human-Robot Collaborative Tasks

Eley Ng, Ziang Liu, Monroe Kennedy III

arXiv 2023

-

Compositional Foundation Models for Hierarchical Planning

Anurag Ajay, Seungwook Han, Yilun Du, Shuang Li, Abhi Gupta, Tommi Jaakkola, Josh Tenenbaum, Leslie Kaelbling, Akash Srivastava, Pulkit Agrawal

NeurIPS 2023

-

Generating Behaviorally Diverse Policies with Latent Diffusion Models

Shashank Hegde, Sumeet Batra, K. R. Zentner, Gaurav S. Sukhatme

NeurIPS 2023

-

NoMaD: Goal Masking Diffusion Policies for Navigation and Exploration

Ajay Sridhar, Dhruv Shah, Catherine Glossop, Sergey Levine

arXiv 2023

-

Zero-Shot Robotic Manipulation with Pretrained Image-Editing Diffusion Models

Kevin Black, Mitsuhiko Nakamoto, Pranav Atreya, Homer Walke, Chelsea Finn, Aviral Kumar, Sergey Levine

arXiv 2023

-

Imitation Learning from Purified Demonstrations

Yunke Wang, Minjing Dong, Bo Du, Chang Xu

arXiv 2023

-

Planning as In-Painting: A Diffusion-Based Embodied Task Planning Framework for Environments under Uncertainty

Cheng-Fu Yang, Haoyang Xu, Te-Lin Wu, Xiaofeng Gao, Kai-Wei Chang, Feng Gao

arXiv 2023

-

Diffusion Meets DAgger: Supercharging Eye-in-hand Imitation Learning

Xiaoyu Zhang, Matthew Chang, Pranav Kumar, Saurabh Gupta

arXiv 2024

-

3D Diffusion Policy

Yanjie Ze, Gu Zhang, Kangning Zhang, Chenyuan Hu, Muhan Wang, Huazhe Xu

arXiv 2024

-

Large-Scale Actionless Video Pre-Training via Discrete Diffusion for Efficient Policy Learning

Haoran He, Chenjia Bai, Ling Pan, Weinan Zhang, Bin Zhao, Xuelong Li

arXiv 2024

-

SculptDiff: Learning Robotic Clay Sculpting from Humans with Goal Conditioned Diffusion Policy

Alison Bartsch, Arvind Car, Charlotte Avra, Amir Barati Farimani

arXiv 2024

-

Subgoal Diffuser: Coarse-to-fine Subgoal Generation to Guide Model Predictive Control for Robot Manipulation

Zixuan Huang, Yating Lin, Fan Yang, Dmitry Berenson

ICRA 2024

-

MotionDiffuse: Text-Driven Human Motion Generation with Diffusion Model

Mingyuan Zhang, Zhongang Cai, Liang Pan, Fangzhou Hong, Xinying Guo, Lei Yang, Ziwei Liu

arXiv 2022

-

Human Motion Diffusion Model

Guy Tevet, Sigal Raab, Brian Gordon, Yonatan Shafir, Daniel Cohen-Or, Amit H. Bermano

ICLR 2023

-

Executing your Commands via Motion Diffusion in Latent Space

Xin Chen, Biao Jiang, Wen Liu, Zilong Huang, Bin Fu, Tao Chen, Jingyi Yu, Gang Yu

CVPR 2023

-

MoFusion: A Framework for Denoising-Diffusion-based Motion Synthesis

Rishabh Dabral, Muhammad Hamza Mughal, Vladislav Golyanik, Christian Theobalt

CVPR 2023

-

ReMoDiffuse: Retrieval-Augmented Motion Diffusion Model

Mingyuan Zhang, Xinying Guo, Liang Pan, Zhongang Cai, Fangzhou Hong, Huirong Li, Lei Yang, Ziwei Liu

ICCV 2023

-

MotionDiffuser: Controllable Multi-Agent Motion Prediction using Diffusion

Chiyu Max Jiang, Andre Cornman, Cheolho Park, Ben Sapp, Yin Zhou, Dragomir Anguelov

CVPR 2023

-

Learning Universal Policies via Text-Guided Video Generation

Yilun Du, Mengjiao Yang, Bo Dai, Hanjun Dai, Ofir Nachum, Joshua B. Tenenbaum, Dale Schuurmans, Pieter Abbeel

NeurIPS 2023

-

EquiDiff: A Conditional Equivariant Diffusion Model For Trajectory Prediction

Kehua Chen, Xianda Chen, Zihan Yu, Meixin Zhu, Hai Yang

arXiv 2023

-

Motion Planning Diffusion: Learning and Planning of Robot Motions with Diffusion Models

Joao Carvalho, An T. Le, Mark Baierl, Dorothea Koert, Jan Peters

IROS 2023

-

EDMP: Ensemble-of-costs-guided Diffusion for Motion Planning

Kallol Saha, Vishal Mandadi, Jayaram Reddy, Ajit Srikanth, Aditya Agarwal, Bipasha Sen, Arun Singh, Madhava Krishna

arXiv 2023

-

Sampling Constrained Trajectories Using Composable Diffusion Models

Thomas Power, Rana Soltani-Zarrin, Soshi Iba, Dmitry Berenson

IROS 2023

-

DiMSam: Diffusion Models as Samplers for Task and Motion Planning under Partial Observability

Xiaolin Fang, Caelan Reed Garrett, Clemens Eppner, Tomás Lozano-Pérez, Leslie Pack Kaelbling, Dieter Fox

arXiv 2023

-

Conditioned Score-Based Models for Learning Collision-Free Trajectory Generation

Joao Carvalho, Mark Baierl, Julen Urain, Jan Peters

NeurIPSW 2022

-

Video Language Planning

Yilun Du, Mengjiao Yang, Pete Florence, Fei Xia, Ayzaan Wahid, Brian Ichter, Pierre Sermanet, Tianhe Yu, Pieter Abbeel, Joshua B. Tenenbaum, Leslie Kaelbling, Andy Zeng, Jonathan Tompson

arXiv 2023

-

Learning to Act from Actionless Video through Dense Correspondences

Po-Chen Ko, Jiayuan Mao, Yilun Du, Shao-Hua Sun, Joshua B. Tenenbaum

arXiv 2023

-

Learning Interactive Real-World Simulators

Mengjiao Yang, Yilun Du, Kamyar Ghasemipour, Jonathan Tompson, Dale Schuurmans, Pieter Abbeel

arXiv 2023

-

DNAct: Diffusion Guided Multi-Task 3D Policy Learning

Ge Yan, Yueh-Hua Wu, Xiaolong Wang

arXiv 2024

-

Scaling Robot Learning with Semantically Imagined Experience

Tianhe Yu, Ted Xiao, Austin Stone, Jonathan Tompson, Anthony Brohan, Su Wang, Jaspiar Singh, Clayton Tan, Dee M, Jodilyn Peralta, Brian Ichter, Karol Hausman, Fei Xia

RSS 2023

-

GenAug: Retargeting behaviors to unseen situations via Generative Augmentation

Zoey Chen, Sho Kiami, Abhishek Gupta, Vikash Kumar

RSS 2023

-

Synthetic Experience Replay

Cong Lu, Philip J. Ball, Yee Whye Teh, Jack Parker-Holder

NeurIPS 2023

-

World Models via Policy-Guided Trajectory Diffusion

Marc Rigter, Jun Yamada, Ingmar Posner

arXiv 2023

-

Distilling Conditional Diffusion Models for Offline Reinforcement Learning through Trajectory Stitching

Shangzhe Li, Xinhua Zhang

arXiv 2024

-

DiffStitch: Boosting Offline Reinforcement Learning with Diffusion-based Trajectory Stitching

Guanghe Li, Yixiang Shan, Zhengbang Zhu, Ting Long, Weinan Zhang

arXiv 2024

-

Flow to Better: Offline Preference-based Reinforcement Learning via Preferred Trajectory Generation

Zhilong Zhang, Yihao Sun, Junyin Ye, Tian-Shuo Liu, Jiaji Zhang, Yang Yu

ICLR 2024

    @article{zhu2023diffusion,
      title={Diffusion Models for Reinforcement Learning: A Survey},
      author={Zhu, Zhengbang and Zhao, Hanye and He, Haoran and Zhong, Yichao and Zhang, Shenyu and Yu, Yong and Zhang, Weinan},
      journal={arXiv preprint arXiv:2311.01223},
      year={2023}
    }