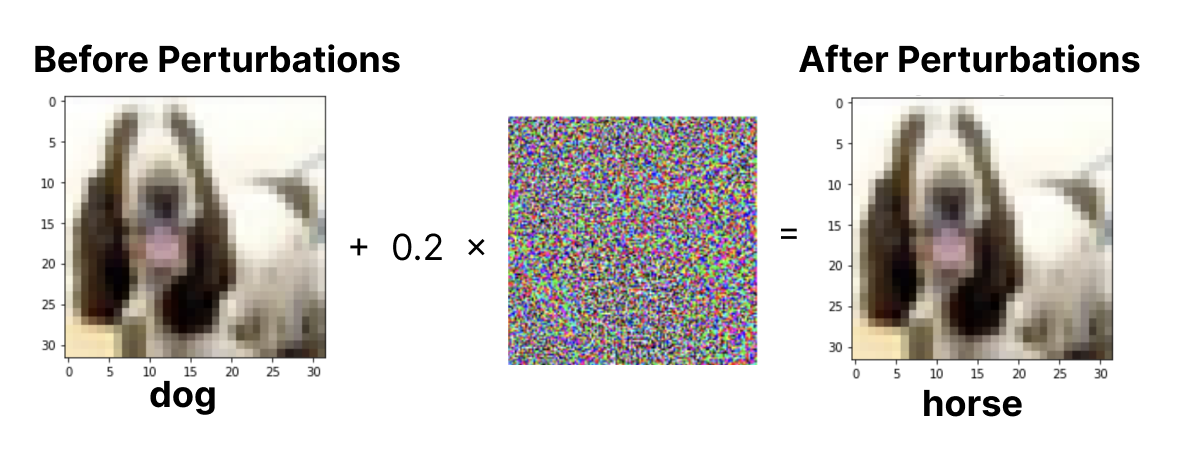

An ongoing area of research involves defenses against adversarial examples, which are inputs specifically designed to attack a model's inference. We explore the impact of adversarial examples on common deep learning classification architectures such as CNNs and RNNs, specifically testing a CNN and a CNN-LSTM hybrid, both trained on the CIFAR-10 dataset. We implemented an efficient adversarial attack known as the Fast Gradient Sign Method (FGSM) to generate perturbations against the baseline model. We then developed more robust networks to defend against adversarial attacks by augmenting the original dataset with adversarial examples and training our improved networks on the augmented dataset. Our baseline models had an accuracy of 80.374% for the CNN and 79.001% for the CNN-LSTM hybrid architecture, and when attacked with adversarial examples with

This repository contains the scripts, models, and data relevant to this project. The data used for this project comes from the CIFAR-10 dataset. For more information about the implementation and results, please refer to the project paper.

- `attacks/`: implementations of adversarial example generation with FGSM
- `data/`: data used for training and testing (included in the .gitignore)
- `models/`: saved checkpoints for the trained models
- `networks/`: implementations of the CNN and CNN-LSTM hybrid architectures
- `utils/`: supporting utility functions
- `analysis.ipynb`: notebook for performing cross architecture comparison
- `cnn.ipynb`: notebook for training and testing the CNN architecture with and without adversarial training
- `rnn.ipynb`: notebook for training and testing the CNN-LSTM hybrid architecture with and without adversarial training
- `main.ipynb`: notebook for an older CNN architecture used to show baseline adversarial example generation
- `(test/train)-cnn.py`: scripts for training and testing the baseline CNN architecture
- `(test/train)-cnn-lstm.py`: scripts for training and testing the baseline CNN-LSTM hybrid architecture
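For readers unfamiliar with FGSM, the following is a minimal PyTorch sketch of the attack and of augmenting a batch with its adversarial counterpart. It is not the code in `attacks/`; the function names `fgsm_attack` and `augment_with_adversarial`, the stand-in linear model, and the `epsilon` value are all illustrative assumptions, and inputs are assumed to be scaled to [0, 1].

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


def fgsm_attack(model, images, labels, epsilon):
    """Return FGSM adversarial examples: x + epsilon * sign(grad_x loss)."""
    # Work on a detached copy so the caller's tensor is left untouched.
    images = images.clone().detach().requires_grad_(True)
    loss = F.cross_entropy(model(images), labels)
    # Gradient of the loss with respect to the input pixels only;
    # model parameter gradients are not accumulated by this call.
    (grad,) = torch.autograd.grad(loss, images)
    adv = images + epsilon * grad.sign()
    # Assumption: pixels live in [0, 1]. Adjust the clamp if inputs are normalized.
    return adv.clamp(0.0, 1.0).detach()


def augment_with_adversarial(model, images, labels, epsilon):
    """Concatenate a clean batch with its FGSM counterpart (labels repeated)."""
    adv = fgsm_attack(model, images, labels, epsilon)
    return torch.cat([images, adv]), torch.cat([labels, labels])


if __name__ == "__main__":
    # Hypothetical stand-in classifier on random CIFAR-10-shaped inputs.
    torch.manual_seed(0)
    model = nn.Sequential(nn.Flatten(), nn.Linear(3 * 32 * 32, 10))
    x = torch.rand(4, 3, 32, 32)
    y = torch.randint(0, 10, (4,))
    x_aug, y_aug = augment_with_adversarial(model, x, y, epsilon=8 / 255)
    print(x_aug.shape, y_aug.shape)
```

For networks with dropout or batch normalization, it is usually sensible to call `model.eval()` before generating the perturbations so that the gradient reflects inference-time behavior.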

In order to run the code and notebooks in this repository, please set up a virtual environment using the following command.

    python3 -m virtualenv venv

In order to activate the virtual environment, run the following command.

    source venv/bin/activate

Once the virtual environment is activated, you can install all of the necessary dependencies using the following command.

    pip install -r requirements.txt

The dataset does not need to be downloaded manually. When any of the scripts or notebooks is run for the first time, the torchvision module should download the CIFAR-10 training and testing sets and create the data/ directory containing them.

This repository was created for "Developing Robust Networks to Defend Against Adversarial Examples" by Benson Liu & Isabella Qian for ECE C147: Neural Networks & Deep Learning at UCLA in Winter 2023. For any questions or additional information about this project, please contact the authors.