(for DETR3D, BEVFormer, BEVDet, BEVDepth and Semantic Occupancy Prediction)

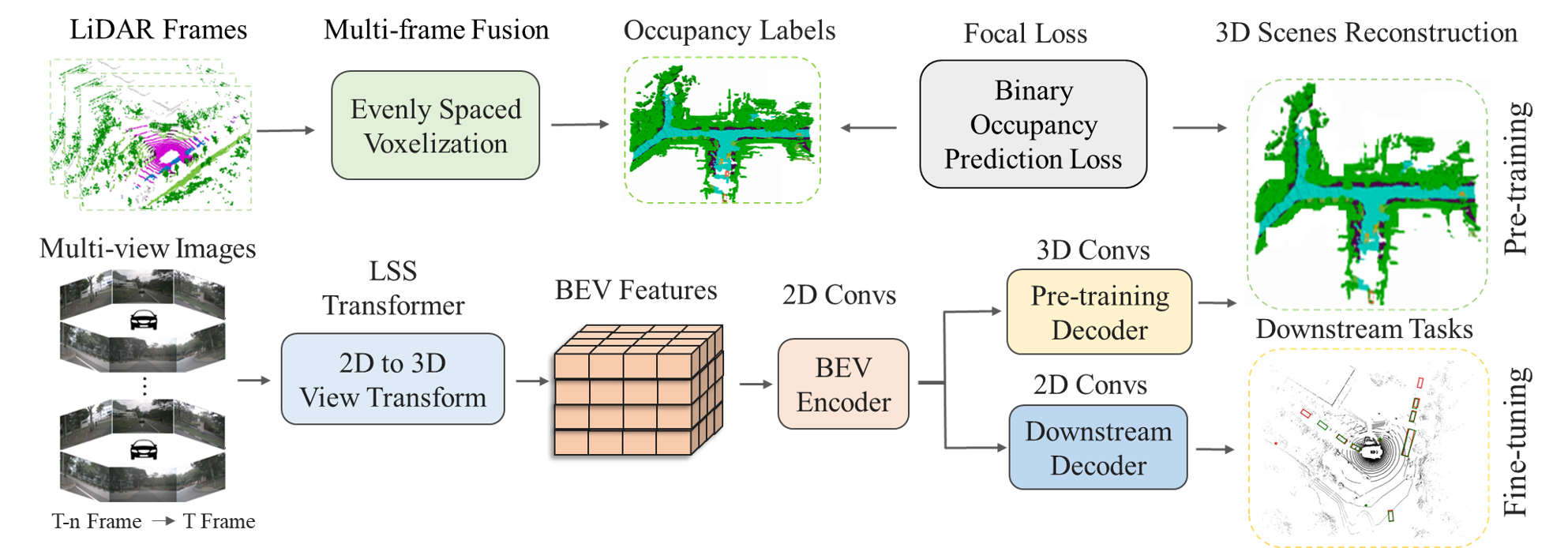

Multi-camera 3D perception has emerged as a prominent research field in autonomous driving, offering a viable and cost-effective alternative to LiDAR-based solutions. Existing multi-camera algorithms rely primarily on monocular 2D pre-training, which overlooks the spatial and temporal correlations within a multi-camera system. To address this limitation, we propose UniScene, the first multi-camera unified pre-training framework: the model first learns to reconstruct the 3D scene as a foundational stage and is then fine-tuned on downstream tasks. Specifically, we use occupancy as the general representation of the 3D scene, so that the model acquires geometric priors of the surrounding world during pre-training.
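To make the occupancy representation concrete, here is a minimal sketch of what a binary occupancy target and a reconstruction loss could look like. It is illustrative only and is not the UniScene implementation. It assumes the targets are built from 3D points (for example a LiDAR sweep) and that the loss is a plain binary cross-entropy, neither of which is stated in this section. The helper names `voxelize`, `grid_shape`, and `occupancy_bce` are hypothetical.

```python
import math


def voxelize(points, range_min, range_max, voxel_size):
    """Return the set of (i, j, k) voxel indices that contain at least one point.

    Assumption: points are (x, y, z) tuples in the same frame as the grid.
    """
    occupied = set()
    for point in points:
        # Discard points that fall outside the grid bounds.
        inside = all(lo <= c < hi for c, lo, hi in zip(point, range_min, range_max))
        if inside:
            occupied.add(
                tuple(int((c - lo) // voxel_size) for c, lo in zip(point, range_min))
            )
    return occupied


def grid_shape(range_min, range_max, voxel_size):
    """Number of voxels along each axis."""
    return tuple(
        math.ceil((hi - lo) / voxel_size) for lo, hi in zip(range_min, range_max)
    )


def occupancy_bce(pred, occupied, shape, eps=1e-7):
    """Mean binary cross-entropy between predicted probabilities and the target.

    `pred` maps voxel index -> predicted occupancy probability; missing
    voxels are treated as predicted empty (probability 0).
    """
    nx, ny, nz = shape
    total = 0.0
    for i in range(nx):
        for j in range(ny):
            for k in range(nz):
                p = min(max(pred.get((i, j, k), 0.0), eps), 1.0 - eps)
                y = 1.0 if (i, j, k) in occupied else 0.0
                total -= y * math.log(p) + (1.0 - y) * math.log(1.0 - p)
    return total / (nx * ny * nz)


# Toy example: a 2 m x 2 m x 1 m volume with 0.5 m voxels.
points = [(0.1, 0.1, 0.1), (0.2, 0.2, 0.2), (1.6, 0.4, 0.9), (5.0, 0.0, 0.0)]
range_min, range_max, voxel_size = (0.0, 0.0, 0.0), (2.0, 2.0, 1.0), 0.5

target = voxelize(points, range_min, range_max, voxel_size)
shape = grid_shape(range_min, range_max, voxel_size)
loss = occupancy_bce({v: 1.0 for v in target}, target, shape)
print(sorted(target), shape, loss)
```

In a real pipeline the predicted probabilities would come from a network head on top of the multi-camera features, and the dense loops would be replaced by tensor operations. The sketch only shows the shape of the supervision signal.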

| Backbone | Method | Pre-training | Lr Schd | NDS | mAP | Config |
|---|---|---|---|---|---|---|
| R101-DCN | BEVFormer | ImageNet | 24ep | 47.7 | 37.7 | config/[model] |
| R101-DCN | BEVFormer | ImageNet + UniScene | 24ep | 50.0 | 39.7 | config/model |
| R101-DCN | BEVFormer | FCOS3D | 24ep | 51.7 | 41.6 | config/model |
| R101-DCN | BEVFormer | FCOS3D + UniScene | 24ep | 53.4 | 43.8 | config/pre-trained model/fine-tune-model |
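The gain from adding UniScene pre-training can be read off the table by subtracting each baseline row from its UniScene counterpart. The short sketch below does that arithmetic. The numbers are copied from the table, while the `rows` layout and the `gains` helper are hypothetical names used only for illustration.

```python
# (pre-training, NDS, mAP) for R101-DCN BEVFormer at 24 epochs, copied from the table.
rows = [
    ("ImageNet", 47.7, 37.7),
    ("ImageNet + UniScene", 50.0, 39.7),
    ("FCOS3D", 51.7, 41.6),
    ("FCOS3D + UniScene", 53.4, 43.8),
]


def gains(rows, suffix=" + UniScene"):
    """Map each baseline name to its (NDS gain, mAP gain) from adding UniScene."""
    scores = {name: (nds, m_ap) for name, nds, m_ap in rows}
    result = {}
    for name, (nds, m_ap) in scores.items():
        if name.endswith(suffix):
            base = name[: -len(suffix)]
            base_nds, base_map = scores[base]
            # Round to one decimal to match the precision reported in the table.
            result[base] = (round(nds - base_nds, 1), round(m_ap - base_map, 1))
    return result


print(gains(rows))
# {'ImageNet': (2.3, 2.0), 'FCOS3D': (1.7, 2.2)}
```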

If this work is helpful for your research, please consider citing the following BibTeX entry.

    @article{UniScene,
      title={Multi-Camera Unified Pre-Training Via 3D Scene Reconstruction},
      author={Min, Chen and Xiao, Liang and Zhao, Dawei and Nie, Yiming and Dai, Bin},
      journal={IEEE Robotics and Automation Letters},
      year={2024},
      publisher={IEEE}
    }

Many thanks to these excellent open-source projects: