For this project, you will train an agent to navigate (and collect bananas!) in a large, square world.

| Random agent | Trained agent |
|---|---|

A reward of +1 is provided for collecting a yellow banana, and a reward of -1 is provided for collecting a blue banana. Thus, the goal of your agent is to collect as many yellow bananas as possible while avoiding blue bananas.

The state space has 37 dimensions and contains the agent's velocity, along with ray-based perception of objects around the agent's forward direction. Given this information, the agent has to learn how to best select actions. Four discrete actions are available, corresponding to:

- `0`: move forward
- `1`: move backward
- `2`: turn left
- `3`: turn right
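As an illustration of how an agent might pick among these four actions, here is a minimal sketch of epsilon-greedy selection. The source does not state which exploration strategy the agent uses, so epsilon-greedy is an assumption (it is a common choice for DQN-style agents), and `select_action` and `ACTIONS` are hypothetical names rather than code from this repository.

```python
import random

# Action indices as described above.
ACTIONS = {0: "move forward", 1: "move backward", 2: "turn left", 3: "turn right"}


def select_action(q_values, epsilon, rng=random):
    """Pick an action index with an epsilon-greedy rule (assumed, not from the repo).

    q_values: one estimated action value per action (length 4).
    epsilon:  probability of choosing a uniformly random action.
    """
    if len(q_values) != len(ACTIONS):
        raise ValueError("expected one Q-value per action")
    if rng.random() < epsilon:
        # Explore: any of the four actions with equal probability.
        return rng.randrange(len(ACTIONS))
    # Exploit: the action with the highest estimated value.
    return max(range(len(q_values)), key=lambda a: q_values[a])


if __name__ == "__main__":
    action = select_action([0.1, 0.5, 0.2, 0.0], epsilon=0.0)
    print(action, ACTIONS[action])
```

With `epsilon=0.0` the choice is purely greedy; during training, epsilon is typically decayed from a high value toward a small one.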

The task is episodic, and in order to solve the environment, your agent must get an average score of +13 over 100 consecutive episodes.
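The solving criterion can be checked with a rolling window over episode scores. The sketch below is one possible way to do it; `first_solved_episode` is a hypothetical helper, not a function from this repository, and it reports the episode at which the 100-episode window first reaches the target (some projects instead report that number minus 100).

```python
from collections import deque

SOLVED_SCORE = 13.0  # target average score from the task description
WINDOW = 100         # number of consecutive episodes to average over


def first_solved_episode(scores, target=SOLVED_SCORE, window=WINDOW):
    """Return the 1-based episode at which the rolling average first reaches target.

    Returns None if the criterion is never met.
    """
    recent = deque(maxlen=window)  # keeps only the last `window` scores
    for episode, score in enumerate(scores, start=1):
        recent.append(score)
        if len(recent) == window and sum(recent) / window >= target:
            return episode
    return None


if __name__ == "__main__":
    print(first_solved_episode([0.0] * 50 + [13.0] * 100))
```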

Download the environment from one of the links below. You need only select the environment that matches your operating system:

- Linux: click here

- Mac OSX: click here

- Windows (32-bit): click here

- Windows (64-bit): click here

(For Windows users) Check out this link if you need help with determining if your computer is running a 32-bit version or 64-bit version of the Windows operating system.

(For AWS) If you'd like to train the agent on AWS (and have not enabled a virtual screen), then please use this link to obtain the environment.

Place the file in this folder, unzip (or decompress) it, and then write the correct path in the argument for creating the environment in the notebook `Navigation_solution.ipynb`:

    env = UnityEnvironment(file_name="Banana.app")

The repository contains the following files:

- `dqn_agent.py`: code for the agent used in the environment
- `prioritised_double_dqn_agent.py`: code for the agent, which uses prioritised double DQN
- `model.py`: code containing the Q-Network used as the function approximator by the agent
- `dqn.pth`: saved model weights for the original DQN model
- `ddqn.pth`: saved model weights for the Double DQN model
- `dueling_dqn.pth`: saved model weights for the Dueling Double DQN model
- `Navigation.ipynb`: explore the Unity environment and provide the solution

Follow the instructions in Navigation.ipynb to get started with training your own agent!

To watch a trained smart agent, follow the instructions below:

- DQN: To run the original DQN algorithm, load the trained model from the checkpoint `dqn.pth`. When defining the agent, set the parameter `qnetwork` to `QNetwork` and the parameter `update_type` to `dqn`.

- Double DQN: To run the Double DQN algorithm, load the trained model from the checkpoint `double_dqn.pth`. When defining the agent, set the parameter `qnetwork` to `QNetwork` and the parameter `update_type` to `double_dqn`.

- Dueling Double DQN: To run the Dueling Double DQN algorithm, load the trained model from the checkpoint `dueling_dqn.pth`. When defining the agent, set the parameter `qnetwork` to `DuelingQNetwork` and the parameter `update_type` to `double_dqn`.

- Prioritised Double DQN: To run the Prioritised Double DQN algorithm, load the trained model from the checkpoint `prioritised_dqn.pth`.
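The combinations above can be summarised as a small lookup table. This is only an illustrative sketch: `AGENT_VARIANTS` and `variant_settings` are hypothetical names, the values are stored as plain strings rather than the actual classes, and the entries for the prioritised variant are left as `None` where the instructions do not specify them.

```python
# Settings per algorithm variant, transcribed from the instructions above.
# Dictionary keys and the helper below are hypothetical, not part of the repo.
AGENT_VARIANTS = {
    "dqn": {
        "checkpoint": "dqn.pth",
        "qnetwork": "QNetwork",
        "update_type": "dqn",
    },
    "double_dqn": {
        "checkpoint": "double_dqn.pth",
        "qnetwork": "QNetwork",
        "update_type": "double_dqn",
    },
    "dueling_double_dqn": {
        "checkpoint": "dueling_dqn.pth",
        "qnetwork": "DuelingQNetwork",
        "update_type": "double_dqn",
    },
    "prioritised_double_dqn": {
        "checkpoint": "prioritised_dqn.pth",
        "qnetwork": None,      # not stated in the instructions
        "update_type": None,   # not stated in the instructions
    },
}


def variant_settings(name):
    """Look up the checkpoint and agent parameters for a variant name."""
    try:
        return AGENT_VARIANTS[name]
    except KeyError:
        raise ValueError(f"unknown variant: {name!r}") from None


if __name__ == "__main__":
    print(variant_settings("dueling_double_dqn"))
```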

Several enhancements to the original DQN algorithm have also been incorporated:

- Double DQN [Paper] [Code]

- Prioritized Experience Replay [Paper] [Code] (WIP)

- Dueling DQN [Paper] [Code]
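To make the difference between these enhancements concrete, here is a minimal sketch of the core formula behind each one, written in plain Python for a single transition. It follows the standard formulations from the respective papers and is not taken from this repository's code; all function names are hypothetical.

```python
def dqn_target(reward, next_q_target, gamma, done):
    """Original DQN target: bootstrap from the max target-network value."""
    bootstrap = 0.0 if done else max(next_q_target)
    return reward + gamma * bootstrap


def double_dqn_target(reward, next_q_local, next_q_target, gamma, done):
    """Double DQN target: the local network selects the action,
    the target network evaluates it."""
    if done:
        return reward
    best_action = max(range(len(next_q_local)), key=lambda a: next_q_local[a])
    return reward + gamma * next_q_target[best_action]


def dueling_q_values(state_value, advantages):
    """Dueling aggregation: Q(s, a) = V(s) + A(s, a) - mean(A(s, .))."""
    mean_advantage = sum(advantages) / len(advantages)
    return [state_value + a - mean_advantage for a in advantages]


def sampling_probabilities(priorities, alpha):
    """Prioritized replay: P(i) = p_i^alpha / sum_k p_k^alpha."""
    scaled = [p ** alpha for p in priorities]
    total = sum(scaled)
    return [s / total for s in scaled]


if __name__ == "__main__":
    q_local = [0.0, 1.0, 5.0, 0.0]   # next-state values from the local network
    q_target = [1.0, 3.0, 2.0, 0.0]  # next-state values from the target network
    print(dqn_target(1.0, q_target, gamma=0.5, done=False))
    print(double_dqn_target(1.0, q_local, q_target, gamma=0.5, done=False))
```

In this example the two targets differ because the local network prefers action 2, whose target-network value is lower than the target network's own maximum; this decoupling is what reduces overestimation in Double DQN.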

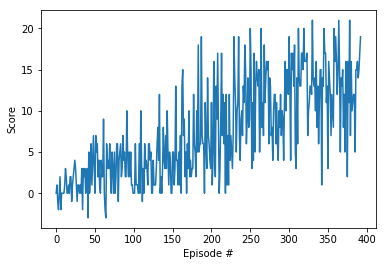

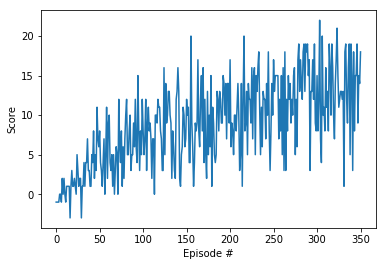

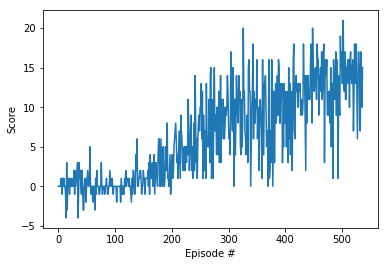

Plot showing the score per episode over all the episodes. The environment was solved in 377 episodes (currently).

| Double DQN | DQN | Dueling DQN |
|---|---|---|

Use the `requirements.txt` file to install the required dependencies via pip:

    pip install -r requirements.txt