Kerui Ren*, Lihan Jiang*, Tao Lu, Mulin Yu, Linning Xu, Zhangkai Ni, Bo Dai ✉️

[2024.09.25] 🎈 We propose Octree-AnyGS, a general anchor-based framework that supports explicit Gaussians (2D-GS, 3D-GS) and neural Gaussians (Scaffold-GS). Octree-GS has also been adapted to these Gaussian primitives, enabling Level-of-Detail representation for large-scale scenes. The framework could potentially be applied to other Gaussian-based methods, and the corresponding SIBR visualizations are forthcoming. (https://github.com/city-super/Octree-AnyGS)

[2024.05.30] 👀 We added new modes (depth, normal, Gaussian distribution, and LOD bias) to the viewer for Octree-GS.

[2024.05.30] 🎈 We release the checkpoints for the Mip-NeRF 360, Tanks&Temples, Deep Blending, and MatrixCity datasets.

[2024.04.08] 🎈We update the latest quantitative results on three datasets.

[2024.04.01] 🎈👀 The viewer for Octree-GS is available now.

[2024.04.01] We release the code.

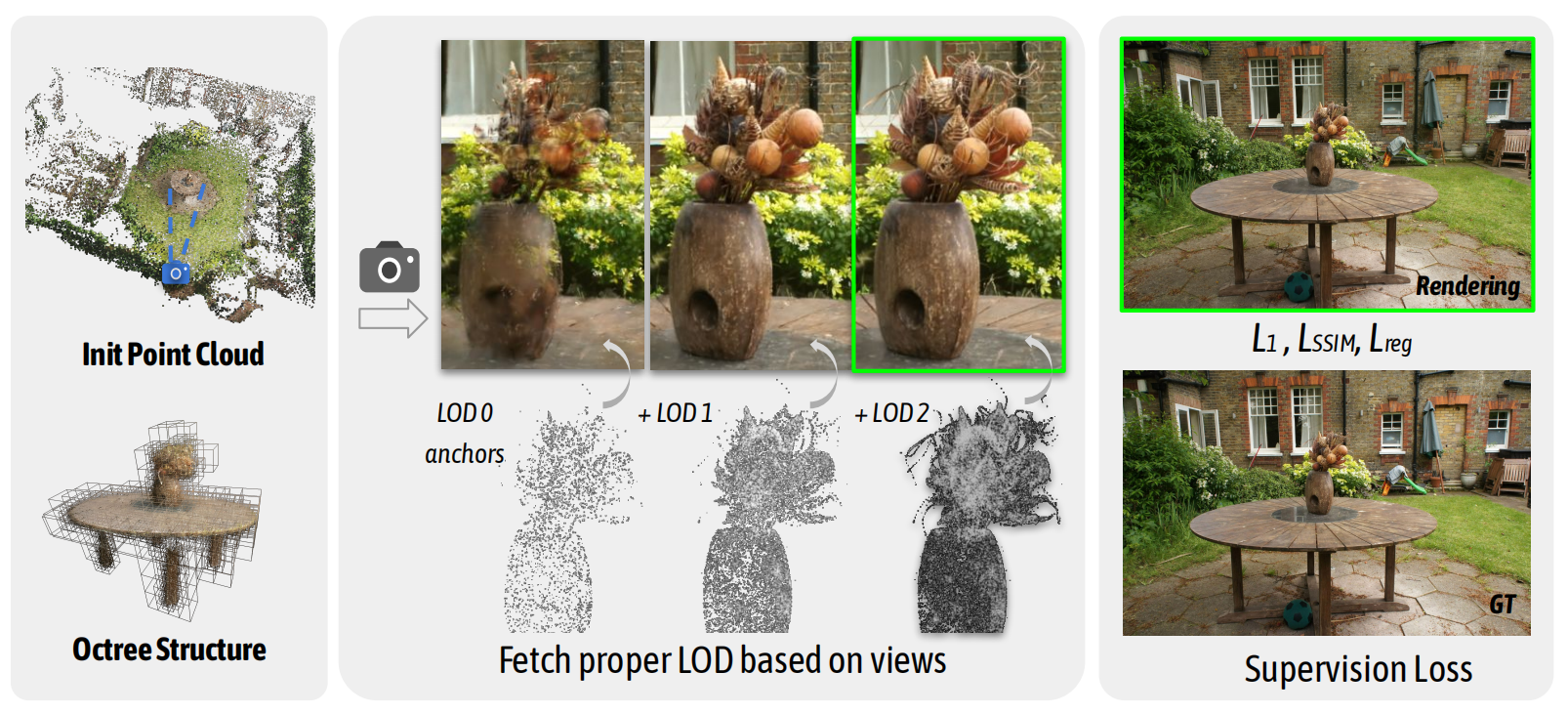

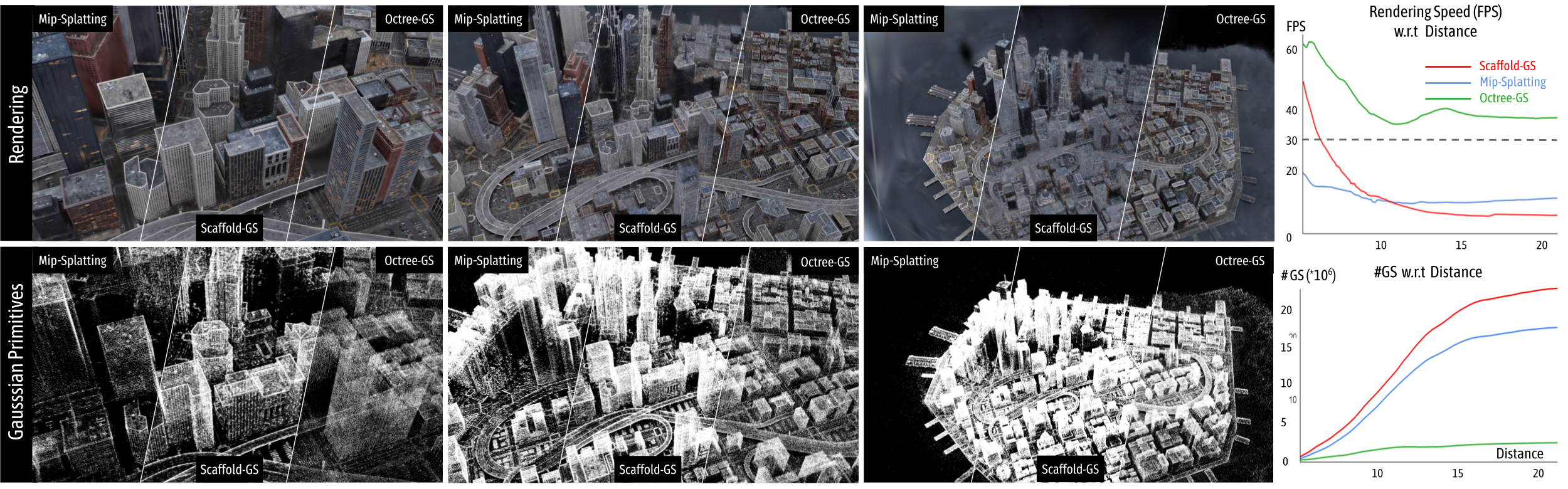

Inspired by Level-of-Detail (LOD) techniques, we introduce Octree-GS, an LOD-structured 3D Gaussian approach that supports level-of-detail decomposition for scene representation, with each level contributing to the final rendering result. Our model dynamically selects the appropriate level from a set of multi-resolution anchor points, ensuring consistent rendering performance with adaptive LOD adjustments while maintaining high-fidelity rendering results.

We tested on a server configured with Ubuntu 18.04, CUDA 11.6, and GCC 9.4.0. Other similar configurations should also work, but we have not verified each one individually.

- Clone this repo:

git clone https://github.com/city-super/Octree-GS --recursive

cd Octree-GS

- Install dependencies

SET DISTUTILS_USE_SDK=1 # Windows only

conda env create --file environment.yml

conda activate octree_gs

First, create a data/ folder inside the project path by

mkdir data

The data structure will be organised as follows:

data/

├── dataset_name

│ ├── scene1/

│ │ ├── images

│ │ │ ├── IMG_0.jpg

│ │ │ ├── IMG_1.jpg

│ │ │ ├── ...

│ │ ├── sparse/

│ │ └──0/

│ ├── scene2/

│ │ ├── images

│ │ │ ├── IMG_0.jpg

│ │ │ ├── IMG_1.jpg

│ │ │ ├── ...

│ │ ├── sparse/

│ │ └──0/

...

- The MipNeRF360 scenes are provided by the paper author here.

- The SfM data sets for Tanks&Temples and Deep Blending are hosted by 3D-Gaussian-Splatting here.

- The BungeeNeRF dataset is available on Google Drive/Baidu Netdisk [extraction code: 4whv].

- The MatrixCity dataset can be downloaded from Hugging Face/Openxlab/Baidu Netdisk [extraction code: hqnn]. Point clouds used for training in our paper: pcd

Download and uncompress them into the data/ folder.

For custom data, process the image sequences with Colmap to obtain the SfM points and camera poses. Then place the results in the data/ folder.
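Before training on custom data, it can help to confirm that a scene folder matches the layout shown above. The following is a minimal sketch, not part of the repository: the helper name `check_scene` and the set of accepted image extensions are assumptions made for illustration.

```python
from pathlib import Path

# Assumed set of image extensions; adjust to match your data.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def check_scene(scene_dir):
    """Return a list of layout problems for one scene folder (empty if none)."""
    scene = Path(scene_dir)
    problems = []

    images = scene / "images"
    if not images.is_dir():
        problems.append("missing images/ folder")
    elif not any(p.suffix.lower() in IMAGE_SUFFIXES for p in images.iterdir()):
        problems.append("images/ contains no image files")

    # Colmap output is expected under sparse/0/, as in the tree above.
    if not (scene / "sparse" / "0").is_dir():
        problems.append("missing sparse/0/ folder")

    return problems


# Example: report problems for one scene (path is a placeholder).
for problem in check_scene("data/dataset_name/scene1"):
    print(problem)
```

An empty result only means the folders exist; it does not validate the Colmap reconstruction itself.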

To train multiple scenes in parallel, we provide batch training scripts:

- MipNeRF360: train_mipnerf360.sh

- Tanks&Temples: train_tandt.sh

- Deep Blending: train_db.sh

- BungeeNeRF: train_bungeenerf.sh

- MatrixCity: train_matrix_city.sh

Run them with:

bash train_xxx.sh

Notice 1: Make sure you have enough GPUs and GPU memory to run these scenes at the same time.

Notice 2: Each process occupies many CPU cores, which may slow down training. Set torch.set_num_threads(32) accordingly in train.py to alleviate this.
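One way to pick the thread count is to divide the available CPU cores evenly among the parallel training processes. The sketch below is only an illustration of that idea; the helper `threads_per_process` is hypothetical and not part of the repository.

```python
import os


def threads_per_process(n_processes, total_cores=None):
    """Split the available CPU cores evenly across parallel training processes."""
    if n_processes < 1:
        raise ValueError("n_processes must be at least 1")
    if total_cores is None:
        total_cores = os.cpu_count() or 1  # os.cpu_count() may return None
    return max(1, total_cores // n_processes)


# Example: 9 scenes trained in parallel on a 64-core machine.
n_threads = threads_per_process(9, total_cores=64)
print(n_threads)  # 7

# In train.py, the value would then be passed to torch.set_num_threads(n_threads).
```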

For training a single scene, modify the path and configurations in single_train.sh accordingly and run it:

bash single_train.sh

- scene: scene name, in the format dataset_name/scene_name/ or scene_name/;

- exp_name: user-defined experiment name;

- gpu: specify the GPU id to run the code. '-1' denotes using the most idle GPU.

- ratio: sampling interval of the SfM point cloud at initialization

- appearance_dim: dimensions of appearance embedding

- fork: proportion of subdivisions between LOD levels

- base_layer: the coarsest layer of the octree, corresponding to LOD 0, '<0' means scene-based setting

- visible_threshold: the threshold ratio of anchor points with low training frequency

- dist2level: the way floating-point values map to integers when estimating the LOD level

- update_ratio: the threshold ratio of anchor growing

- progressive: whether to use progressive learning

- levels: the number of LOD levels, '<0' means scene-based setting

- init_level: initial level of progressive learning

- extra_ratio: the threshold ratio of LOD bias

- extra_up: increment of LOD bias per update
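To make the roles of fork, levels, and dist2level more concrete, here is a rough sketch of how a viewing distance could be mapped to an integer LOD level. This is an assumption based on the parameter descriptions above, not the repository's implementation: the function name, the `dist_max` and `lod_bias` arguments, and the exact formula are all hypothetical.

```python
import math


def estimate_lod_level(dist, dist_max, levels, fork=2, dist2level="round", lod_bias=0.0):
    """Map a camera-to-anchor distance to an integer LOD level in [0, levels - 1]."""
    # Continuous level: 0 at dist_max, +1 each time the distance shrinks by a factor of `fork`.
    level = math.log(dist_max / dist) / math.log(fork) + lod_bias

    # dist2level decides how the floating-point level becomes an integer.
    if dist2level == "floor":
        level = math.floor(level)
    elif dist2level == "round":
        level = round(level)
    else:
        raise ValueError(f"unsupported dist2level: {dist2level}")

    # Clamp to the valid range; level 0 is the coarsest layer.
    return int(min(max(level, 0), levels - 1))


print(estimate_lod_level(30, 100, levels=4, dist2level="floor"))  # 1
print(estimate_lod_level(30, 100, levels=4, dist2level="round"))  # 2
```

Under these assumptions, closer viewpoints select finer levels, and a larger fork spreads the levels over a wider range of distances.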

For these public datasets, refer to the batch training scripts above for the 'voxel_size' and 'fork' settings.

The script automatically stores the log (together with the code used at run time) in outputs/dataset_name/scene_name/exp_name/cur_time.

We have integrated rendering and metrics calculation into the training code, so when training completes, the rendering results, FPS, and quality metrics are printed automatically, and the rendering results are saved in the log directory. Note that the FPS is roughly estimated by

torch.cuda.synchronize();t_start=time.time()

rendering...

torch.cuda.synchronize();t_end=time.time()

which may differ somewhat from the original 3D-GS, but it does not affect the analysis.
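The timing pattern above can be wrapped in a small helper. The sketch below is an illustration rather than the repository's code: `estimate_fps`, `render_fn`, and `sync_fn` are hypothetical names, and it times the whole loop at once instead of each view separately. On a GPU, `sync_fn` would be `torch.cuda.synchronize`, so that pending kernels are finished before the clock is read.

```python
import time


def estimate_fps(render_fn, n_frames, sync_fn=None):
    """Average frames per second of render_fn over n_frames calls."""
    if sync_fn is not None:
        sync_fn()  # wait for pending GPU work before starting the clock
    t_start = time.perf_counter()

    for i in range(n_frames):
        render_fn(i)

    if sync_fn is not None:
        sync_fn()  # make sure the last frame has actually finished
    t_end = time.perf_counter()

    return n_frames / (t_end - t_start)


# Stand-in renderer so the sketch runs without a GPU.
fps = estimate_fps(lambda i: time.sleep(0.001), n_frames=20)
print(f"{fps:.1f} FPS")
```

Without the synchronization calls, asynchronous GPU execution would make the measured time cover only kernel launches, which overstates the frame rate.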

Meanwhile, we keep the manual rendering function, whose usage is similar to its counterpart in 3D-GS. Run it with:

python render.py -m <path to trained model> # Generate renderings

python metrics.py -m <path to trained model> # Compute error metrics on renderings

Mip-NeRF 360:

| scene | PSNR | SSIM | LPIPS | GS(k) | Mem(MB) |
|---|---|---|---|---|---|
| bicycle | 25.14 | 0.753 | 0.238 | 701 | 252.07 |
| garden | 27.69 | 0.86 | 0.119 | 1344 | 272.67 |
| stump | 26.61 | 0.763 | 0.265 | 467 | 145.50 |
| room | 32.53 | 0.937 | 0.171 | 377 | 118.00 |
| counter | 30.30 | 0.926 | 0.166 | 457 | 106.98 |
| kitchen | 31.76 | 0.933 | 0.115 | 793 | 105.16 |
| bonsai | 33.41 | 0.953 | 0.169 | 474 | 97.16 |
| flowers | 21.47 | 0.598 | 0.342 | 726 | 238.57 |
| treehill | 23.19 | 0.645 | 0.347 | 545 | 211.90 |
| avg | 28.01 | 0.819 | 0.215 | 654 | 172.00 |
| paper | 27.73 | 0.815 | 0.217 | 686 | 489.59 |
| | +0.28 | +0.004 | -0.002 | -4.66% | -64.87% |

Tanks&Temples:

| scene | PSNR | SSIM | LPIPS | GS(k) | Mem(MB) |
|---|---|---|---|---|---|
| truck | 26.17 | 0.892 | 0.127 | 401 | 84.42 |
| train | 23.04 | 0.837 | 0.184 | 446 | 84.45 |
| avg | 24.61 | 0.865 | 0.156 | 424 | 84.44 |
| paper | 24.52 | 0.866 | 0.153 | 481 | 410.48 |
| | +0.09 | -0.001 | +0.003 | -11.85% | -79.43% |

Deep Blending:

| scene | PSNR | SSIM | LPIPS | GS(k) | Mem(MB) |
|---|---|---|---|---|---|
| drjohnson | 29.89 | 0.911 | 0.234 | 132 | 132.43 |
| playroom | 31.08 | 0.914 | 0.246 | 93 | 53.94 |
| avg | 30.49 | 0.913 | 0.240 | 113 | 93.19 |
| paper | 30.41 | 0.913 | 0.238 | 144 | 254.87 |
| | +0.08 | - | +0.002 | -21.52% | -63.44% |

MatrixCity:

| scene | PSNR | SSIM | LPIPS | GS(k) | Mem(GB) |
|---|---|---|---|---|---|
| Block_All | 26.99 | 0.833 | 0.257 | 453 | 2.36 |
| paper | 26.41 | 0.814 | 0.282 | 665 | 3.70 |
| | +0.59 | +0.019 | -0.025 | -31.87% | -36.21% |
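The last row of each table reports the change relative to the paper: absolute differences for PSNR, SSIM, and LPIPS, and relative changes for GS(k) and memory. As a worked check, the relative changes can be recomputed as follows; the helper `relative_change` is introduced here only for illustration.

```python
def relative_change(ours, paper):
    """Percentage change of `ours` relative to `paper`, rounded to two decimals."""
    return round((ours - paper) / paper * 100, 2)


# Averages from the first table (scenes bicycle through treehill).
print(relative_change(654, 686))        # -4.66
print(relative_change(172.00, 489.59))  # -64.87
```

Some reported deltas differ from this recomputation in the last digit (for example, Block_All PSNR gives 26.99 - 26.41 = 0.58 against the listed +0.59), presumably because they were computed from unrounded values.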

The viewer for Octree-GS is available now. Please arrange the model files in one of the following formats:

<location>

|---point_cloud

| |---point_cloud.ply

| |---color_mlp.pt

| |---cov_mlp.pt

| |---opacity_mlp.pt

| (|---embedding_appearance.pt)

|---cameras.json

|---cfg_args

or

<location>

|---point_cloud

| |---iteration_{ITERATIONS}

| | |---point_cloud.ply

| | |---color_mlp.pt

| | |---cov_mlp.pt

| | |---opacity_mlp.pt

| | (|---embedding_appearance.pt)

|---cameras.json

|---cfg_args
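Since the viewer accepts two layouts, a quick check can locate the folder that actually holds the model files. The following is a minimal sketch, not part of the viewer: `find_checkpoint_dir` is a hypothetical helper, and preferring the highest iteration number when several iteration folders exist is an assumption. The optional embedding_appearance.pt file is not required by the check.

```python
from pathlib import Path

# Files that both layouts list as mandatory.
REQUIRED = ("point_cloud.ply", "color_mlp.pt", "cov_mlp.pt", "opacity_mlp.pt")


def _iteration_number(path):
    """Numeric suffix of an iteration_<N> folder, or -1 if it is not a number."""
    suffix = path.name.split("_", 1)[1]
    return int(suffix) if suffix.isdigit() else -1


def find_checkpoint_dir(location):
    """Return the folder holding the model files, or None if the layout is invalid."""
    root = Path(location)
    if not (root / "cameras.json").is_file() or not (root / "cfg_args").is_file():
        return None

    pc = root / "point_cloud"
    if not pc.is_dir():
        return None

    # Try the flat layout first, then iteration_* subfolders (highest iteration first).
    iterations = sorted(pc.glob("iteration_*"), key=_iteration_number, reverse=True)
    for candidate in [pc] + iterations:
        if all((candidate / name).is_file() for name in REQUIRED):
            return candidate
    return None
```

A return value of None means that either cameras.json, cfg_args, or one of the required model files is missing.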

- Kerui Ren: renkerui@pjlab.org.cn

- Lihan Jiang: mr.lhjiang@gmail.com

If you find our work helpful, please consider citing:

@article{ren2024octree,

title={Octree-gs: Towards consistent real-time rendering with lod-structured 3d gaussians},

author={Ren, Kerui and Jiang, Lihan and Lu, Tao and Yu, Mulin and Xu, Linning and Ni, Zhangkai and Dai, Bo},

journal={arXiv preprint arXiv:2403.17898},

year={2024}

}

Please follow the LICENSE of 3D-GS.

We thank all the authors of 3D-GS and Scaffold-GS for their excellent work.