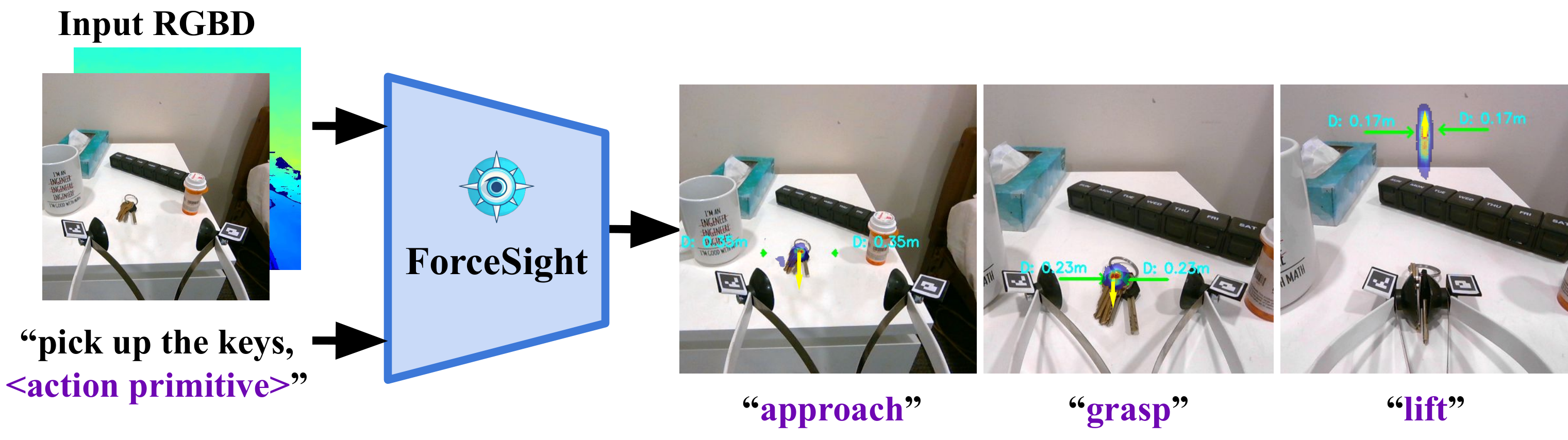

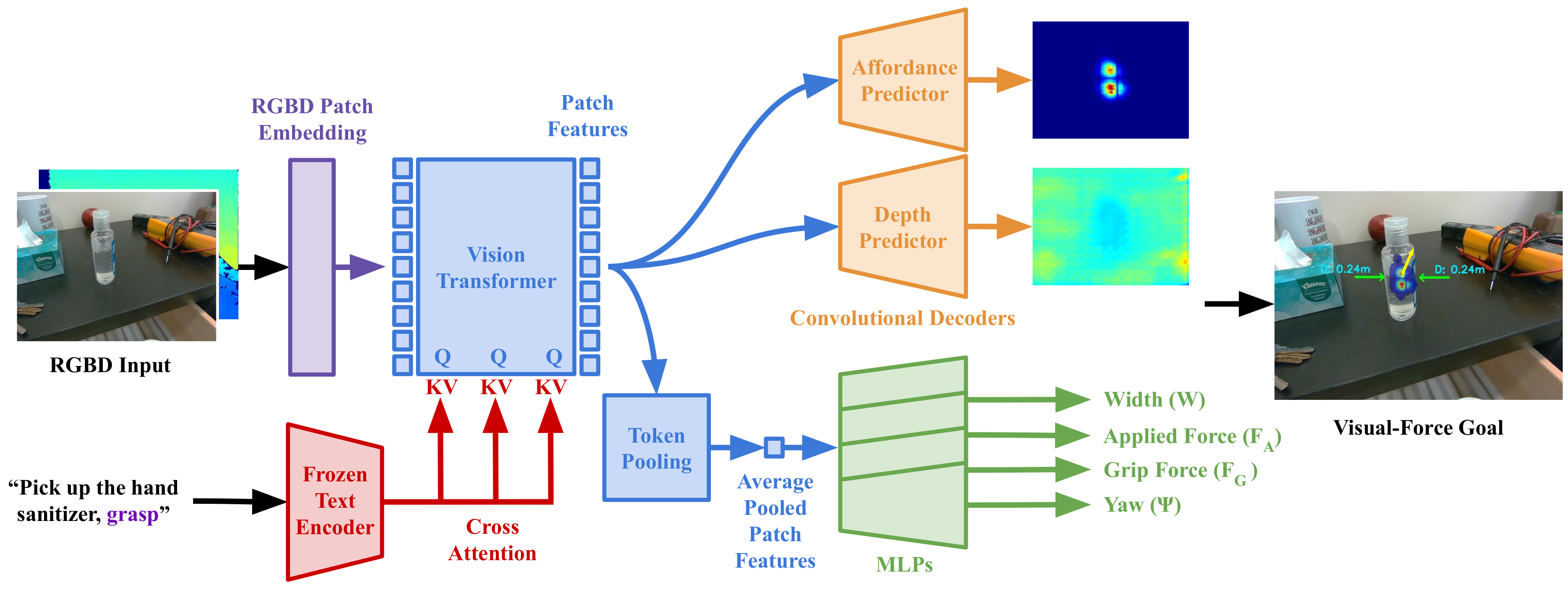

Given an RGBD image and a text prompt, ForceSight produces visual-force goals for a robot, enabling mobile manipulation in unseen environments with unseen object instances.

Install the conda environment forcesight

conda env create -f environment.yml

conda activate forcesight

OR

(Optional) Manually install the dependencies:

# First create a conda environment

conda create -n fs python=3.8

conda activate fs

If manually installing dependencies, install PyTorch from here, then:

conda install libffi

pip3 install -r requirements.txt

The following is a quick start guide for the project. The robot is not required for this part.

- Download the dataset, model, and hardware here. Place the model in checkpoints/forcesight_0/ and place the dataset in data/.

- Train a model

Skip this if using a trained checkpoint

python -m prediction.trainer --config forcesight

-

Evaluate the prediction

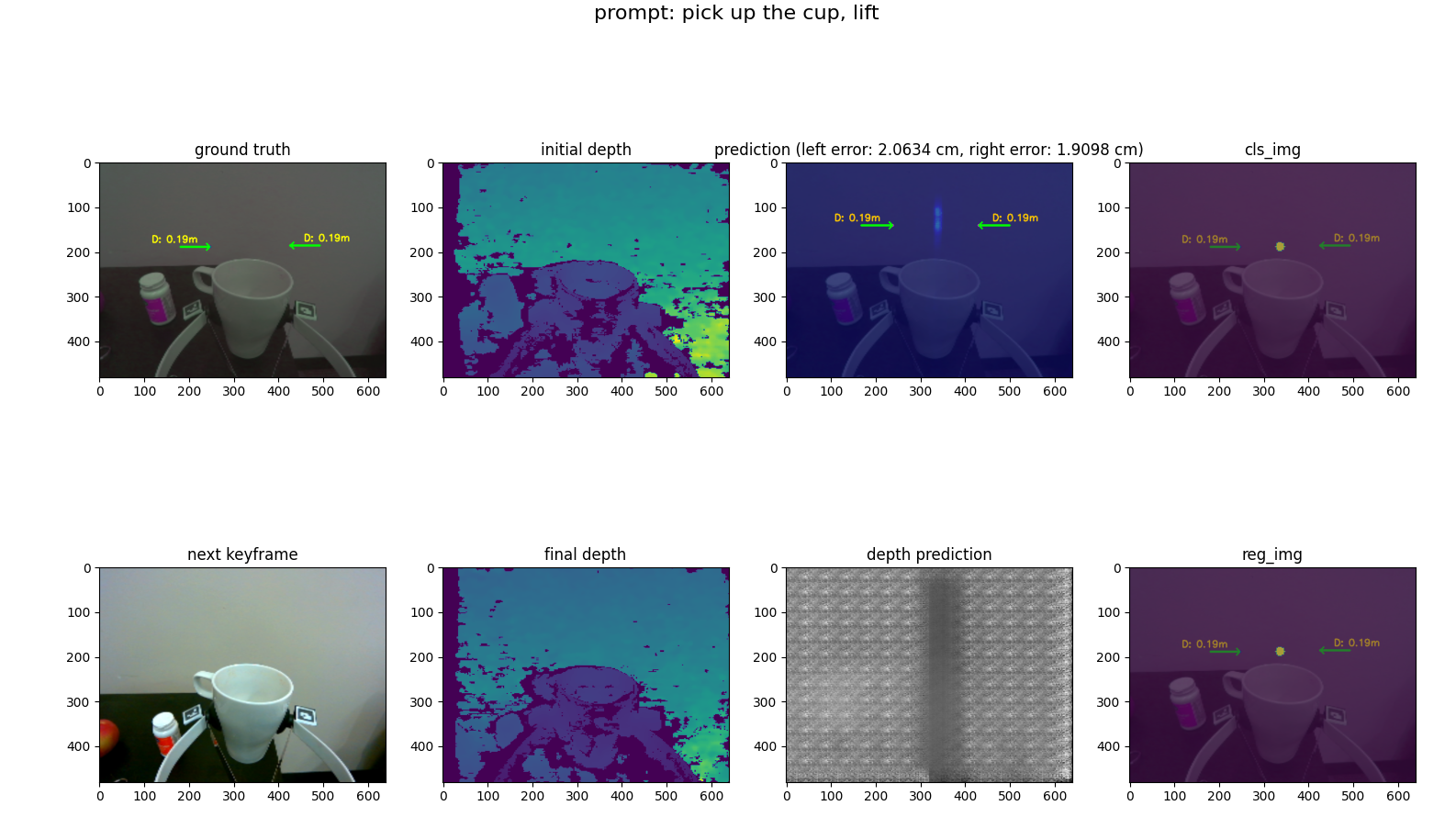

python -m prediction.view_preds \ --config forcesight \ --folder data/test_new_objects_and_env \ --index 0 --epoch best --ignore_prefilter # --ignore_prefilter is used to ignore the prefiltering step, for faster initYou will seen the output plot like this:

- Show live view

This requires a RealSense D405 camera.

python -m prediction.live_model --config forcesight --index 0 --epoch best --prompt "pick up the keys"

Press "p" to change the prompt. For more information about the key controls, refer to keyboard_teleop.

Beyond this point, the documentation covers the project in more detail, including use of the Stretch robot and the RealSense D405 camera.

We assume that you have a Stretch Robot and a force/torque sensor mounted on the wrist of the robot. Hardware to mount an ATI Mini45 force/torque sensor to the Stretch can be found here.

- Requires installation of stretch_remote:

git clone https://github.com/Healthcare-Robotics/stretch_remote.git

cd stretch_remote

pip install -e .

Run the stretch remote server on the robot:

python3 stretch_remote/stretch_remote/robot_server.py

conda activate forcesight

- Test data collection:

cd ~/forcesight

# first task

# OUTPUT Folder format: <TASK>_frame_<STAGE1>_<STAGE2>

python -m recording.capture_data --config <CONFIG> --stage train --folder <OUTPUT FOLDER> --prompt "pick up the apple" --realsense_id <ID>

# stage 1 -> 2

python -m recording.capture_data --config <CONFIG> --stage train --folder pick_up_the_apple_frame_1_2 --prompt "pick up the apple" --realsense_id <ID>

# stage 2 -> 3

python -m recording.capture_data --config <CONFIG> --stage train --folder pick_up_the_apple_frame_2_3 --prompt "pick up the apple" --realsense_id <ID>

# stage 3 -> 4

python -m recording.capture_data --config <CONFIG> --stage train --folder pick_up_the_apple_frame_3_4 --prompt "pick up the apple" --realsense_id <ID>

Key control:

- wasd keys: up, down, front, back
- [] keys: robot base
- ijkl keys: wrist
- h: home
- enter: switch step
- space: save frame
- backspace: delete
- /: randomize the position of the end effector

To quickly obtain varied data, we use a randomizer bound to the / key (see the if keycode == ord('/') branch in robot/robot_utils.py). This speeds up the data collection process.
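The folder names in the data collection commands above follow the `<TASK>_frame_<STAGE1>_<STAGE2>` pattern. As a minimal sketch, the helper below shows one way such a name could be derived from the prompt. The function `capture_folder_name` is hypothetical and is not part of the ForceSight codebase; it only assumes that the task part is the prompt with spaces replaced by underscores, as in `pick_up_the_apple_frame_1_2`.

```python
import re


def capture_folder_name(prompt: str, start_stage: int, end_stage: int) -> str:
    """Build '<TASK>_frame_<STAGE1>_<STAGE2>' from a text prompt (hypothetical helper)."""
    # Lowercase the prompt, then collapse each run of non-alphanumeric
    # characters into a single underscore and trim leading/trailing ones.
    task = re.sub(r"[^a-z0-9]+", "_", prompt.lower()).strip("_")
    return f"{task}_frame_{start_stage}_{end_stage}"


if __name__ == "__main__":
    # Print the folder names for the three stage transitions used above.
    for start in (1, 2, 3):
        print(capture_folder_name("pick up the apple", start, start + 1))
```

Generating the names this way keeps the `--folder` and `--prompt` arguments consistent across stages.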

Data collection for grip force model

python3 -m recording.capture_grip_data --bipartite 0 --config grip_force_5_21 --folder grip_force_5_25_frame_0_0 --stage train --ip 100.99.105.59

To check the newly collected raw data, load it with the data loader:

python -m prediction.loader --config <CONFIG> --folder data/raw

Set up a config for each model. The config used for ForceSight is provided in config/forcesight.yaml. For more details, please refer to the config files in the configs/ directory.

Start the training:

python -m prediction.trainer --config <CONFIG>

Since grip force measurement is not available from the robot, we train a grip force model to predict the grip force, given fingertip locations, motor effort, and motor position. A default model is provided in the grip_force_checkpoints/ directory.

Grip force data collection

python -m recording.capture_data --config <CONFIG> --stage raw --folder grip_force_5_25 --realsense_id <ID> --bipartite 0

Train the grip force prediction model

python -m prediction.grip_force_trainer --config <CONFIG> --bipartite 0

After training, we can run the model on the robot. We will use ForceSight to generate kinematic and force goals for the robot, and the low-level controller will then control the robot to reach the goals.

To run the robot, first run stretch_remote/stretch_remote/robot_server.py on the robot, and then run visual_servo.py. visual_servo.py can run on a different computer with a GPU; the robot to connect to is specified by the --ip argument.

Test model with live view and visual servoing

# Visual Servo: Press 'p' to insert prompt,

# Press 't' to switch between view model and visual servoing mode

# add --ros_viz arg to visualize the 3d scene on rviz

python -m robot.visual_servo --config forcesight --index 0 --epoch best --prompt "pick up the keys" --ip <ROBOT IP>

- t key to switch between view model and visual servoing mode
- p key to insert prompt
- `wasd[]ijkl` to control the robot (refer above)
- h: home
- c: switch between publishing and not publishing the point cloud (if using --ros_viz)
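As an illustration of the key bindings listed above, the following sketch maps keycodes to action descriptions with a lookup table. The names `KEY_ACTIONS` and `describe_key` are hypothetical and do not come from the ForceSight codebase; the only assumption carried over from the source is that keys arrive as integer keycodes compared against `ord(...)`.

```python
from typing import Dict

# Action descriptions are copied from the key lists in this README.
# KEY_ACTIONS and describe_key are hypothetical names used for illustration only.
KEY_ACTIONS: Dict[str, str] = {
    "t": "switch between view model and visual servoing mode",
    "p": "insert prompt",
    "h": "home",
    "c": "toggle point cloud publishing (with --ros_viz)",
}
for key in "wasd":
    KEY_ACTIONS[key] = "move up/down/front/back"
for key in "[]":
    KEY_ACTIONS[key] = "move robot base"
for key in "ijkl":
    KEY_ACTIONS[key] = "move wrist"


def describe_key(keycode: int) -> str:
    """Return the action bound to an integer keycode, or 'unbound'."""
    # Mask to the low byte so codes with modifier bits still resolve to a character.
    return KEY_ACTIONS.get(chr(keycode & 0xFF), "unbound")


if __name__ == "__main__":
    for ch in "tp[z":
        print(repr(ch), "->", describe_key(ord(ch)))
```

A table like this keeps the bindings in one place, which makes it easier to keep the documentation and the handler in sync.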

If you do not have a force/torque sensor, you can still run ForceSight on the robot by passing --use_ft 0 as an arg. If running visual_servo.py, set USE_FORCE_OBJECTIVE to False to ignore forces. Note that performance will suffer without the use of force goals, however.

Util scripts to run aruco detection and visualize the point cloud.

# Run realsense camera

python utils/realsense_utils.py

python utils/realsense_utils.py --cloud

# Run aruco detection with realsense

python -m utils.aruco_detect --rs

To install rospy in a conda env, run conda install -c conda-forge ros-rospy. Make sure you are using Python 3.8, or follow this: https://robostack.github.io/GettingStarted.html

Note: ROS tends to be unfriendly with conda env, so this installation will not be seamless.

roslaunch realsense2_camera rs_camera.launch enable_pointcloud:=1 infra_width:=640

# view the point cloud

rviz -d ros_scripts/ros_viz.rviz

# then run the marker pose estimation script

python3 -m ros_scripts.ros_aruco_detect

# ros viz to visualize the pointcloud and contact in rviz. --rs to use realsense --ft to use ft sensor

python -m ros_scripts.ros_viz --rs

Others

Use ROS to visualize the URDF. The URDF describes the robot model and is used to compute the forward and inverse kinematics of the robot.

roslaunch ros_scripts/urdf_viewer.launch model:=robot/stretch_robot.urdf

Test joints of the robot

roslaunch ros_scripts/urdf_viewer.launch model:=robot/stretch_robot.urdf joints_pub:=false

python3 joint_state_pub.py --joints 0 0 0 0 0 0

Extra: to convert the xacro from stretch_ros to a URDF, run:

rosrun xacro xacro src/stretch_ros/stretch_description/urdf/stretch_description.xacro -o output.urdf

We tested various methods of data augmentation during pilot experiments.

python -m utils.test_aug --no_gripper --data <PATH TO DATA FOLDER>

python -m utils.test_aug --translate_pic --data <PATH TO DATA FOLDER>

- There are some caveats when using the D405 camera with ROS. The current realsense driver doesn't support the D405, since development effort is focused on ROS 2. This fork is used: https://github.com/rjwb1/realsense-ros

- Make sure that the image resolution matches the one its intrinsic parameters were obtained for. Different image resolutions for the same camera will have different ppx, ppy, fx, fy values.

rs-enumerate-devices -c

- To teleop the Stretch robot, use: https://github.com/Healthcare-Robotics/stretch_remote

- If you get a MESA driver error when running the open3d visualization in a conda env, try

conda install -c conda-forge libstdcxx-ng

- There are 2 IK/FK solvers being used here: kdl and kinpy. Kinpy is a recent migration from KDL, since KDL depends on ROS, which is a headache for conda installation.
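On the intrinsics caveat in the notes above: the sketch below uses the standard pinhole model to show why ppx, ppy, fx, fy must match the image resolution. The `Intrinsics` class, the `rescale` and `deproject` functions, and all numeric values are illustrative assumptions, not values from a real D405 and not code from this repository. `rescale` also assumes the lower resolution is a pure resize with no cropping, which may not hold for actual camera modes, so in practice the values reported by rs-enumerate-devices -c should be used.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Intrinsics:
    """Pinhole intrinsics for one image resolution (illustrative container)."""
    width: int
    height: int
    fx: float
    fy: float
    ppx: float
    ppy: float


def rescale(intr: Intrinsics, width: int, height: int) -> Intrinsics:
    """Approximate intrinsics after a pure resize (assumes no cropping)."""
    sx, sy = width / intr.width, height / intr.height
    return Intrinsics(width, height, intr.fx * sx, intr.fy * sy,
                      intr.ppx * sx, intr.ppy * sy)


def deproject(intr: Intrinsics, u: float, v: float, depth: float) -> Tuple[float, float, float]:
    """Back-project pixel (u, v) at the given depth into a 3D camera-frame point."""
    x = (u - intr.ppx) / intr.fx * depth
    y = (v - intr.ppy) / intr.fy * depth
    return (x, y, depth)


if __name__ == "__main__":
    full = Intrinsics(1280, 720, 640.0, 640.0, 640.0, 360.0)  # made-up values
    half = rescale(full, 640, 360)
    # Correct pairing: full-resolution pixel with full-resolution intrinsics.
    print(deproject(full, 960, 360, 0.5))
    # Mismatched pairing: the same pixel with half-resolution intrinsics
    # yields a different (wrong) 3D point.
    print(deproject(half, 960, 360, 0.5))
```

Pairing a pixel with intrinsics from a different resolution shifts the recovered 3D point, which in turn would corrupt any goal expressed in the camera frame.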

@misc{collins2023forcesight,

title={ForceSight: Text-Guided Mobile Manipulation with Visual-Force Goals},

author={Jeremy A. Collins and Cody Houff and You Liang Tan and Charles C. Kemp},

year={2023},

eprint={2309.12312},

archivePrefix={arXiv},

primaryClass={cs.RO}

}