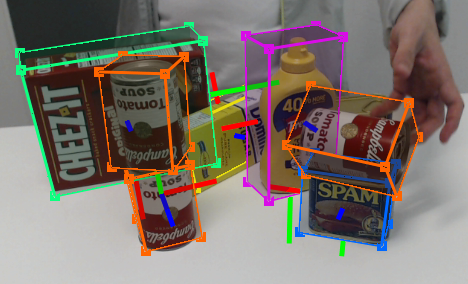

This is the official DOPE ROS package for detection and 6-DoF pose estimation of known objects from an RGB camera. The network has been trained on the following YCB objects: cracker box, sugar box, tomato soup can, mustard bottle, potted meat can, and gelatin box. For more details, see our CoRL 2018 paper and video.

Note: The instructions below refer to inference only. Training code is also provided but not supported.

03/07/2019 - ROS interface update (thanks to Martin Günther)

11/06/2019 - Added bleach YCB weights

We have tested on Ubuntu 16.04 with ROS Kinetic, an NVIDIA Titan X, and Python 2.7. The code may work on other systems.

The following steps describe the native installation. Alternatively, use the provided Docker image and skip to Step #7.

1. Install ROS

   Follow these instructions. You can select any of the default configurations in step 1.4; even the ROS-Base (Bare Bones) package (`ros-kinetic-ros-base`) is enough.

2. Create a catkin workspace (if you do not already have one). To create a catkin workspace, follow these instructions:

       $ mkdir -p ~/catkin_ws/src   # Replace `catkin_ws` with the name of your workspace
       $ cd ~/catkin_ws/
       $ catkin_make

3. Download the DOPE code

       $ cd ~/catkin_ws/src
       $ git clone https://github.com/NVlabs/Deep_Object_Pose.git dope

4. Install python dependencies

       $ cd ~/catkin_ws/src/dope
       $ pip install -r requirements.txt

5. Install ROS dependencies

       $ cd ~/catkin_ws
       $ rosdep install --from-paths src -i --rosdistro kinetic
       $ sudo apt-get install ros-kinetic-rosbash ros-kinetic-ros-comm

6. Build

       $ cd ~/catkin_ws
       $ catkin_make

7. Download the weights and save them to the `weights` folder, i.e., `~/catkin_ws/src/dope/weights/`.

- Start ROS master

      $ cd ~/catkin_ws
      $ source devel/setup.bash
      $ roscore

- Start camera node (or start your own camera node)

      $ roslaunch dope camera.launch  # Publishes RGB images to `/dope/webcam_rgb_raw`

  The camera must publish a correct `camera_info` topic to enable DOPE to compute the correct poses. Nearly all ROS drivers have a `camera_info_url` parameter where you can set the calibration info (but most ROS drivers include a reasonable default).

  For details on calibration and rectification of your camera see the camera tutorial.
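To illustrate why the calibration matters, here is a minimal sketch of the standard pinhole projection that links 3D points in the camera frame to pixels. It is not DOPE's own code. In `sensor_msgs/CameraInfo` the intrinsics are stored in the row-major 3x3 matrix `K`; the numeric values below, and the function name `project_point`, are made up for illustration.

```python
def project_point(K, point_xyz):
    """Project a 3D point in the camera frame (meters) to pixel coordinates.

    K is the 3x3 intrinsic matrix flattened in row-major order:
    [fx, 0, cx, 0, fy, cy, 0, 0, 1].
    """
    fx, cx = K[0], K[2]
    fy, cy = K[4], K[5]
    x, y, z = point_xyz
    if z <= 0:
        raise ValueError("point must be in front of the camera (z > 0)")
    u = fx * x / z + cx
    v = fy * y / z + cy
    return u, v


# Hypothetical intrinsics for a 640x480 camera (illustrative values only).
K_good = [600.0, 0.0, 320.0, 0.0, 600.0, 240.0, 0.0, 0.0, 1.0]
# The same camera described with a wrong focal length.
K_bad = [500.0, 0.0, 320.0, 0.0, 500.0, 240.0, 0.0, 0.0, 1.0]

point = (0.1, 0.0, 1.0)  # 10 cm right of the optical axis, 1 m away
print(project_point(K_good, point))
print(project_point(K_bad, point))
```

The same 3D point lands on different pixels under the two matrices, so a pose recovered from pixel measurements with the wrong `K` would be off by a corresponding amount.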

- Edit config info (if desired) in `~/catkin_ws/src/dope/config/config_pose.yaml`

  - `topic_camera`: RGB topic to listen to
  - `topic_camera_info`: camera info topic to listen to
  - `topic_publishing`: topic namespace for publishing
  - `input_is_rectified`: Whether the input images are rectified. It is strongly suggested to use a rectified input topic.
  - `downscale_height`: If the input image is larger than this, scale it down to this pixel height. Very large input images consume a lot of GPU memory and slow down inference. Also, DOPE works best when the object size (in pixels) has appeared in the training data (which is downscaled to 400 px). For these reasons, downscaling large input images to something reasonable (e.g., 400-500 px) improves memory consumption, inference speed and recognition results.
  - `weights`: dictionary of object names and their weights path names; comment out any line to disable detection/estimation of that object
  - `dimensions`: dictionary of dimensions for the objects (key values must match the `weights` names)
  - `class_ids`: dictionary of class ids to be used in the messages published on the `/dope/detected_objects` topic (key values must match the `weights` names)
  - `draw_colors`: dictionary of object colors (key values must match the `weights` names)
  - `model_transforms`: dictionary of transforms that are applied to the pose before publishing (key values must match the `weights` names)
  - `meshes`: dictionary of mesh filenames for visualization (key values must match the `weights` names)
  - `mesh_scales`: dictionary of scaling factors for the visualization meshes (key values must match the `weights` names)
  - `thresh_angle`: undocumented
  - `thresh_map`: undocumented
  - `sigma`: undocumented
  - `thresh_points`: Threshold on the confidence for object detection; increase this value if you see too many false positives, reduce it if objects are not detected.
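Two of the rules above can be checked before launching the node. The sketch below is illustrative only and does not reproduce DOPE's implementation: `downscaled_size` shows what `downscale_height` is described as doing (the exact rounding DOPE uses may differ), and `missing_entries` reports enabled objects in `weights` that lack a matching key in another dictionary. Both function names, the example paths, and all example values are hypothetical.

```python
def downscaled_size(width, height, downscale_height):
    """Return (width, height) after limiting the image height.

    Images no taller than `downscale_height` are left unchanged; taller
    images are scaled uniformly so that their height equals it.
    """
    if height <= downscale_height:
        return width, height
    scale = float(downscale_height) / height
    return int(round(width * scale)), downscale_height


def missing_entries(config, sections):
    """List (section, object_name) pairs where an object named in
    `weights` has no matching key in the given section."""
    problems = []
    for name in sorted(config.get("weights", {})):
        for section in sections:
            if name not in config.get(section, {}):
                problems.append((section, name))
    return problems


# Hypothetical config, written as the dict a YAML loader would return.
example_config = {
    "downscale_height": 500,
    "weights": {
        "cracker": "path/to/cracker_weights",  # placeholder path
        "soup": "path/to/soup_weights",        # placeholder path
    },
    "dimensions": {"cracker": [1.0, 2.0, 3.0]},  # "soup" is missing here
    "class_ids": {"cracker": 1, "soup": 2},
    "draw_colors": {"cracker": [255, 0, 0], "soup": [0, 255, 0]},
}

print(downscaled_size(1920, 1080, example_config["downscale_height"]))
print(missing_entries(example_config, ("dimensions", "class_ids", "draw_colors")))
```

Checking only the objects present in `weights` mirrors the note that commenting out a `weights` line disables an object, so leftover entries for that object in the other dictionaries are not reported.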

- Start DOPE node

      $ roslaunch dope dope.launch [config:=/path/to/my_config.yaml]  # Config file is optional; default is `config_pose.yaml`

- The following ROS topics are published (assuming `topic_publishing == 'dope'`):

      /dope/webcam_rgb_raw       # RGB images from camera
      /dope/dimension_[obj_name] # dimensions of object
      /dope/pose_[obj_name]      # timestamped pose of object
      /dope/rgb_points           # RGB images with detected cuboids overlaid
      /dope/detected_objects     # vision_msgs/Detection3DArray of all detected objects
      /dope/markers              # RViz visualization markers for all objects

  Note: `[obj_name]` is in {cracker, gelatin, meat, mustard, soup, sugar}
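The naming pattern in the topic list can be written down as a small helper, which is handy when setting up subscribers for several objects. This is a sketch of the pattern as listed above, not code from the package; `dope_topics` is a hypothetical name. The camera topic `/dope/webcam_rgb_raw` is left out because it is published by the camera node.

```python
def dope_topics(topic_publishing, object_names):
    """Build the topic names listed above for a publishing namespace."""
    base = "/" + topic_publishing.strip("/")
    # Topics shared by all objects.
    topics = [
        base + "/rgb_points",
        base + "/detected_objects",
        base + "/markers",
    ]
    # Per-object topics follow the dimension_[obj_name] / pose_[obj_name] pattern.
    for name in object_names:
        topics.append("{}/dimension_{}".format(base, name))
        topics.append("{}/pose_{}".format(base, name))
    return topics


for topic in dope_topics("dope", ["cracker", "soup"]):
    print(topic)
```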

- To debug in RViz, run `rviz`, then add one or more of the following displays:

  - `Add > Image` to view the raw RGB image or the image with cuboids overlaid
  - `Add > Pose` to view the object coordinate frame in 3D.
  - `Add > MarkerArray` to view the cuboids, meshes etc. in 3D.
  - `Add > Camera` to view the RGB Image with the poses and markers from above.

  If you do not have a coordinate frame set up, you can run this static transformation: `rosrun tf2_ros static_transform_publisher 0 0 0 0.7071 0 0 -0.7071 world <camera_frame_id>`, where `<camera_frame_id>` is the `frame_id` of your input camera messages. Make sure that in RViz's `Global Options`, the `Fixed Frame` is set to `world`. Alternatively, you can skip the `static_transform_publisher` step and directly set the `Fixed Frame` to your `<camera_frame_id>`.
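The numbers in the static transform can be read as a zero translation followed by a quaternion `(qx, qy, qz, qw) = (0.7071, 0, 0, -0.7071)`, which is the argument order `tf2_ros` uses when given seven numeric values. The sketch below converts that quaternion to a rotation matrix with the standard formula to show what it does. It assumes the camera messages use the usual ROS optical-frame convention (z forward, y down); the helper names are hypothetical.

```python
def quaternion_to_matrix(qx, qy, qz, qw):
    """Convert a quaternion (normalized internally) to a 3x3 rotation matrix."""
    norm = (qx * qx + qy * qy + qz * qz + qw * qw) ** 0.5
    x, y, z, w = qx / norm, qy / norm, qz / norm, qw / norm
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - z * w), 2 * (x * z + y * w)],
        [2 * (x * y + z * w), 1 - 2 * (x * x + z * z), 2 * (y * z - x * w)],
        [2 * (x * z - y * w), 2 * (y * z + x * w), 1 - 2 * (x * x + y * y)],
    ]


def rotate(matrix, vector):
    """Apply a 3x3 rotation matrix to a 3-vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


# Rotation of the camera frame expressed in the `world` frame.
R = quaternion_to_matrix(0.7071, 0.0, 0.0, -0.7071)

camera_forward = [0.0, 0.0, 1.0]  # optical axis (z) in the camera frame
camera_down = [0.0, 1.0, 0.0]     # image "down" (y) in the camera frame

print(rotate(R, camera_forward))  # approximately world +y
print(rotate(R, camera_down))     # approximately world -z
```

Under these assumptions the transform is a rotation of -90 degrees about the x axis: the camera looks along the world y axis and image "down" points along world -z, so detections appear upright in RViz.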

- If `rosrun` does not find the package (`[rospack] Error: package 'dope' not found`), be sure that you called `source devel/setup.bash` as mentioned above. To find the package, run `rospack find dope`.
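When the package is not found, it can help to check whether the current shell's `ROS_PACKAGE_PATH` covers the workspace at all. The sketch below is a rough stand-in for that check, not a reimplementation of `rospack`: it assumes the package directory is named after the package (as with the `dope` clone above) and looks for a `package.xml` inside it. `find_package` is a hypothetical helper name.

```python
import os


def find_package(name, package_path):
    """Search the entries of `package_path` (separated by os.pathsep) for a
    directory called `name` that contains a package.xml file."""
    for root in package_path.split(os.pathsep):
        if not root:
            continue
        for dirpath, dirnames, filenames in os.walk(root):
            if "package.xml" in filenames:
                if os.path.basename(dirpath) == name:
                    return dirpath
                # Assumption: packages are not nested, so stop descending.
                dirnames[:] = []
    return None


# An empty or missing ROS_PACKAGE_PATH usually means setup.bash was not sourced.
path = os.environ.get("ROS_PACKAGE_PATH", "")
print(find_package("dope", path))
```

If this prints `None` in a shell where `roslaunch` fails, sourcing `devel/setup.bash` in that same shell is the first thing to try.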

DOPE returns the poses of the objects in the camera coordinate frame. DOPE uses the aligned YCB models, which can be obtained using NVDU (see the nvdu_ycb command).

If you use this tool in a research project, please cite as follows:

    @inproceedings{tremblay2018corl:dope,
      author = {Jonathan Tremblay and Thang To and Balakumar Sundaralingam and Yu Xiang and Dieter Fox and Stan Birchfield},
      title = {Deep Object Pose Estimation for Semantic Robotic Grasping of Household Objects},
      booktitle = {Conference on Robot Learning (CoRL)},
      url = "https://arxiv.org/abs/1809.10790",
      year = 2018
    }

Copyright (C) 2018 NVIDIA Corporation. All rights reserved. Licensed under the CC BY-NC-SA 4.0 license.

Thanks to Jeffrey Smith (jeffreys@nvidia.com) for creating the Docker image.

Jonathan Tremblay (jtremblay@nvidia.com), Stan Birchfield (sbirchfield@nvidia.com)