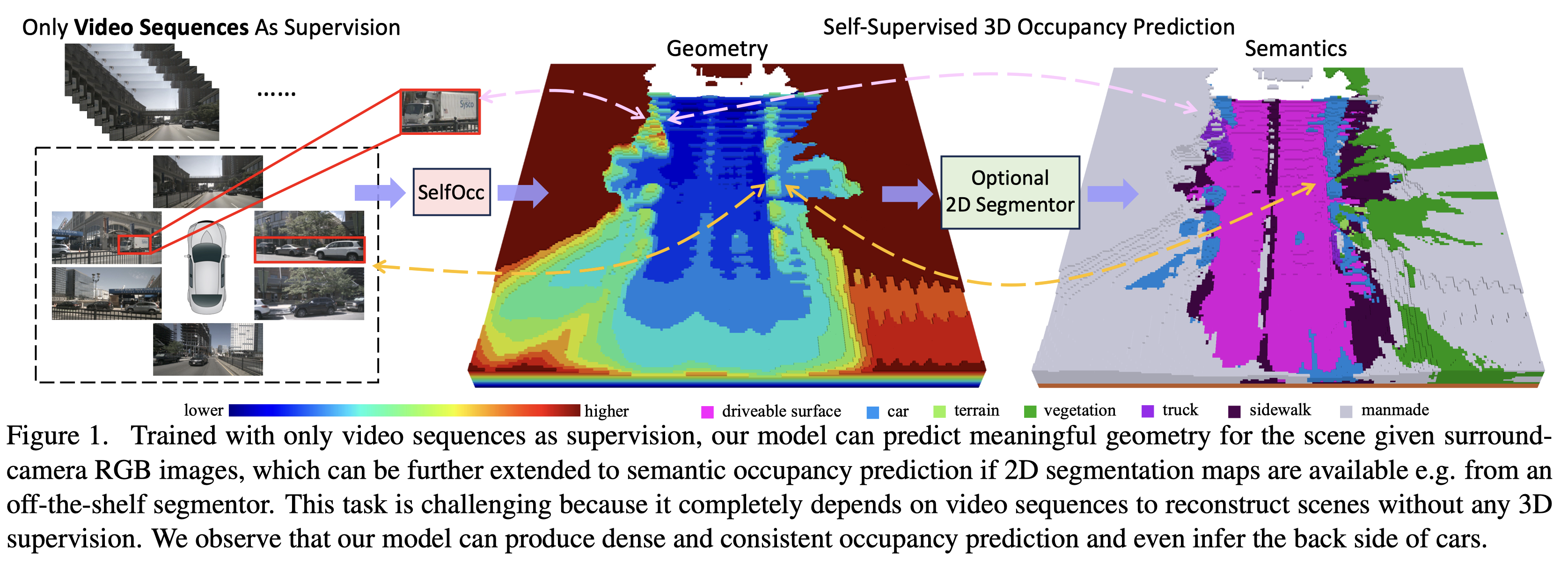

SelfOcc: Self-Supervised Vision-Based 3D Occupancy Prediction, CVPR 2024

Yuanhui Huang*, Wenzhao Zheng*$\dagger$, Borui Zhang, Jie Zhou, Jiwen Lu$\ddagger$

* Equal contribution

SelfOcc empowers 3D autonomous driving world models (e.g., OccWorld) with scalable 3D representations, paving the way for interpretable end-to-end large driving models.

- [2024/2/26] SelfOcc is accepted to CVPR 2024!

- [2023/12/16] Training code release.

- [2023/11/28] Evaluation code release.

- [2023/11/20] Paper released on arXiv.

- [2023/11/20] Demo release.

Trained using an additional off-the-shelf 2D segmentor (OpenSeeD):

More demo videos can be downloaded here.

- We first transform the images into 3D space (e.g., bird's eye view, tri-perspective view) to obtain a 3D representation of the scene. We then impose constraints directly on the 3D representations by treating them as signed distance fields, and render 2D images of previous and future frames as self-supervision signals to learn the 3D representations.

- Our SelfOcc outperforms the previous best method, SceneRF, by 58.7% using a single frame as input on SemanticKITTI, and is the first self-supervised work that produces reasonable 3D occupancy for surround cameras on nuScenes.

- SelfOcc produces high-quality depth and achieves state-of-the-art results on novel depth synthesis, monocular depth estimation, and surround-view depth estimation on SemanticKITTI, KITTI-2015, and nuScenes, respectively.
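The idea of rendering from a signed distance field, mentioned in the first point above, can be illustrated with a generic NeuS-style conversion from SDF samples to per-sample weights along one ray. This is a minimal sketch under stated assumptions, not SelfOcc's actual renderer: the function name `render_depth_from_sdf`, the `sharpness` parameter, and the logistic conversion are all hypothetical choices made for illustration.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def render_depth_from_sdf(sdf, depths, sharpness=10.0):
    """Render an expected depth from SDF samples along a single ray.

    sdf:    (N,) signed distances at the samples (positive in free space).
    depths: (N,) increasing sample depths along the ray.
    Assumption: NeuS-style discrete opacity,
        alpha_i = max((Phi(s_i) - Phi(s_{i+1})) / Phi(s_i), 0),
    where Phi is a logistic function scaled by the hypothetical `sharpness`.
    """
    cdf = sigmoid(sharpness * sdf)                                  # (N,)
    alpha = np.clip((cdf[:-1] - cdf[1:]) / (cdf[:-1] + 1e-8), 0.0, 1.0)
    # Transmittance: probability that the ray reaches each interval unoccluded.
    trans = np.concatenate([[1.0], np.cumprod(1.0 - alpha)[:-1]])
    weights = trans * alpha                                         # (N-1,)
    midpoints = 0.5 * (depths[:-1] + depths[1:])
    depth = np.sum(weights * midpoints) / (np.sum(weights) + 1e-8)
    return depth, weights

# Toy ray that hits a planar surface 5 m away from the camera.
depths = np.linspace(0.0, 10.0, 101)
sdf = 5.0 - depths
depth, weights = render_depth_from_sdf(sdf, depths)
print(depth, weights.sum())
```

In this toy case the weights concentrate around the zero crossing of the SDF, so the rendered depth lands close to the surface at 5 m. A self-supervised pipeline would compare quantities rendered this way against neighbouring frames rather than against ground-truth depth.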

Follow detailed instructions in Installation.

Follow detailed instructions in Prepare Dataset.

[23/12/16 Update] Please update the timm package to 0.9.2 to run the training script.
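To confirm that the environment has the required timm version before launching training, a small check such as the following may help. It is only a sketch: the helper name `installed_version_matches` is hypothetical, and requiring an exact match with 0.9.2 is an assumption based on the note above.

```python
from importlib import metadata

def installed_version_matches(package, required):
    """Return True if `package` is installed with exactly version `required`."""
    try:
        return metadata.version(package) == required
    except metadata.PackageNotFoundError:
        # The package is not installed in the current environment.
        return False

if not installed_version_matches("timm", "0.9.2"):
    print("timm 0.9.2 not found; update timm before running train.py")
```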

Download model weights HERE and put them under out/nuscenes/occ/

# train

python train.py --py-config config/nuscenes/nuscenes_occ.py --work-dir out/nuscenes/occ_train --depth-metric

# eval

python eval_iou.py --py-config config/nuscenes/nuscenes_occ.py --work-dir out/nuscenes/occ --resume-from out/nuscenes/occ/model_state_dict.pth --occ3d --resolution 0.4 --sem --use-mask --scene-size 4

Download model weights HERE and put them under out/nuscenes/novel_depth/

# train

python train.py --py-config config/nuscenes/nuscenes_novel_depth.py --work-dir out/nuscenes/novel_depth_train --depth-metric

# eval

python eval_novel_depth.py --py-config config/nuscenes/nuscenes_novel_depth.py --work-dir out/nuscenes/novel_depth --resume-from out/nuscenes/novel_depth/model_state_dict.pth

Download model weights HERE and put them under out/nuscenes/depth/

# train

python train.py --py-config config/nuscenes/nuscenes_depth.py --work-dir out/nuscenes/depth_train --depth-metric

# eval

python eval_depth.py --py-config config/nuscenes/nuscenes_depth.py --work-dir out/nuscenes/depth --resume-from out/nuscenes/depth/model_state_dict.pth --depth-metric --batch 90000

Note that evaluating at 450*800, i.e., a 1:2 scale of the raw image (900*1600), takes about 90 minutes, because rays are rendered in batches to stay within the GPU memory limit. You can change the rendering resolution through the variable NUM_RAYS in utils/config_tools.py.
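The batched rendering mentioned in the note can be pictured with the following sketch, which splits the rays of one image into fixed-size chunks so that only one chunk is rendered at a time. The names `render_in_chunks` and `dummy_render` and the `(N, 6)` ray layout are hypothetical. Using a chunk size of 90000 to mirror the `--batch 90000` flag is also an assumption about what that flag controls.

```python
import numpy as np

def render_in_chunks(rays, render_fn, chunk_size):
    """Apply render_fn to rays in slices of at most chunk_size rows."""
    outputs = []
    for start in range(0, rays.shape[0], chunk_size):
        outputs.append(render_fn(rays[start:start + chunk_size]))
    return np.concatenate(outputs, axis=0)

# Half-resolution image from the note: 450 x 800 = 360,000 rays.
height, width = 450, 800
rays = np.zeros((height * width, 6), dtype=np.float32)  # hypothetical (origin, direction) layout

chunk_sizes = []

def dummy_render(chunk):
    # Stand-in for a real renderer: records the chunk size, returns one value per ray.
    chunk_sizes.append(chunk.shape[0])
    return np.zeros(chunk.shape[0], dtype=np.float32)

depth = render_in_chunks(rays, dummy_render, chunk_size=90000)
print(len(chunk_sizes), depth.shape)
```

With 360,000 rays and a chunk size of 90,000, the renderer is called four times per image. Peak memory then scales with the chunk size rather than the image size, at the cost of more sequential calls.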

More details for other datasets are given in Run and Eval.
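For readers unfamiliar with the occupancy metric, the intersection-over-union reported by occupancy evaluation can be sketched as below. This is a generic illustration, not the code in `eval_iou.py`: the function name `masked_occupancy_iou` is hypothetical, and treating a visibility mask as a filter over voxels is an assumption about how a flag such as `--use-mask` might behave.

```python
import numpy as np

def masked_occupancy_iou(pred, gt, mask=None):
    """IoU between binary occupancy grids, optionally restricted to masked voxels."""
    pred = np.asarray(pred, dtype=bool)
    gt = np.asarray(gt, dtype=bool)
    if mask is not None:
        mask = np.asarray(mask, dtype=bool)
        pred, gt = pred[mask], gt[mask]
    intersection = np.logical_and(pred, gt).sum()
    union = np.logical_or(pred, gt).sum()
    # IoU is undefined when neither grid contains an occupied voxel.
    return float(intersection) / float(union) if union > 0 else float("nan")

pred = np.array([1, 1, 0, 0])
gt = np.array([1, 0, 1, 0])
mask = np.array([1, 1, 0, 1])
print(masked_occupancy_iou(pred, gt), masked_occupancy_iou(pred, gt, mask))
```

A semantic variant would compute this per class and average the results to obtain mIoU.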

Our code is based on TPVFormer and PointOcc.

Thanks also to these excellent open-source repos: SurroundOcc, OccFormer, and BEVFormer.

If you find this project helpful, please consider citing the following paper:

@article{huang2023self,
    title={SelfOcc: Self-Supervised Vision-Based 3D Occupancy Prediction},
    author={Huang, Yuanhui and Zheng, Wenzhao and Zhang, Borui and Zhou, Jie and Lu, Jiwen},
    journal={arXiv preprint arXiv:2311.12754},
    year={2023}
}