Website | Huggingface | arXiv | Model | Discord

- [2024/06/05] Bench2Drive releases the Full dataset (10000 clips), evaluation tools, baseline code, and benchmark results.

- [2024/04/27] Bench2Drive releases the Mini (10 clips) and Base (1000 clips) splits of the official training data.

- The dataset has three subsets, namely Mini (10 clips), Base (1000 clips), and Full (10000 clips), to accommodate different levels of computational resources.

- Detailed explanation of dataset structure, annotation information, and visualization of data.

| Subset | Hugging Face | Baidu Cloud | Approx. Size |
|---|---|---|---|
| Mini | Download script | Download script | 4 GB |
| Base | Hugging Face Link | Baidu Cloud Link | 400 GB |
| Full | Hugging Face Link | Uploading | 4 TB |
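As a rough aid for choosing a subset, the sketch below picks the largest subset that fits in the free space of the current directory. It reads the approximate sizes in the table as decimal gigabytes, and both the helper name `largest_subset_that_fits` and the 20% headroom factor are assumptions made for illustration, not part of Bench2Drive.

```python
import shutil

# Approximate sizes from the table above, read as decimal gigabytes (an assumption).
SUBSET_SIZE_GB = {"Mini": 4, "Base": 400, "Full": 4000}


def largest_subset_that_fits(free_bytes, headroom=1.2):
    """Return the largest subset whose size times `headroom` fits in `free_bytes`.

    Returns None when even the Mini subset does not fit.
    """
    best = None
    # Walk the subsets from smallest to largest and keep the last one that fits.
    for name, size_gb in sorted(SUBSET_SIZE_GB.items(), key=lambda kv: kv[1]):
        if size_gb * 1e9 * headroom <= free_bytes:
            best = name
    return best


if __name__ == "__main__":
    free = shutil.disk_usage(".").free
    print("Largest subset that fits here:", largest_subset_that_fits(free))
```

Unpacking the archives may need additional temporary space, so treat the result as a lower bound on what to provision.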

- Download and set up CARLA 0.9.15.

      mkdir carla
      cd carla
      wget https://carla-releases.s3.us-east-005.backblazeb2.com/Linux/CARLA_0.9.15.tar.gz
      tar -xvf CARLA_0.9.15.tar.gz
      cd Import && wget https://carla-releases.s3.us-east-005.backblazeb2.com/Linux/AdditionalMaps_0.9.15.tar.gz
      cd .. && bash ImportAssets.sh
      export CARLA_ROOT=YOUR_CARLA_PATH
      # Python 3.8 also works well. Replace YOUR_CONDA_PATH and YOUR_CONDA_ENV_NAME with your own values.
      echo "$CARLA_ROOT/PythonAPI/carla/dist/carla-0.9.15-py3.7-linux-x86_64.egg" >> YOUR_CONDA_PATH/envs/YOUR_CONDA_ENV_NAME/lib/python3.7/site-packages/carla.pth

- Add your agent to leaderboard/team_code/your_agent.py and link your model folder under the Bench2Drive directory.

      Bench2Drive\
        assets\
        docs\
        leaderboard\
          team_code\
            --> Please add your agent HERE
        scenario_runner\
        tools\
        --> Please link your model folder HERE
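The `carla.pth` step in the CARLA setup appends a line every time it is run, so repeating it leaves duplicate entries. The sketch below is one way to do the same registration idempotently from Python. The helper names `carla_paths` and `register_carla` are hypothetical, the egg file name is copied from the setup command, and the `py_version` parameter only selects the `lib/pythonX.Y` directory of the conda environment.

```python
from pathlib import Path

# Egg file name copied from the CARLA setup command.
EGG_NAME = "carla-0.9.15-py3.7-linux-x86_64.egg"


def carla_paths(carla_root, conda_path, env_name, py_version="3.7"):
    """Return (egg, pth): the CARLA egg and the carla.pth file of the conda env."""
    egg = Path(carla_root) / "PythonAPI" / "carla" / "dist" / EGG_NAME
    pth = (Path(conda_path) / "envs" / env_name / "lib"
           / f"python{py_version}" / "site-packages" / "carla.pth")
    return egg, pth


def register_carla(carla_root, conda_path, env_name, py_version="3.7"):
    """Add the egg path to carla.pth once; calling it again changes nothing."""
    egg, pth = carla_paths(carla_root, conda_path, env_name, py_version)
    if not egg.is_file():
        raise FileNotFoundError(f"CARLA egg not found: {egg}")
    lines = pth.read_text().splitlines() if pth.exists() else []
    if str(egg) not in lines:
        lines.append(str(egg))
        pth.write_text("\n".join(lines) + "\n")
    return pth
```

For example, `register_carla("YOUR_CARLA_PATH", "YOUR_CONDA_PATH", "YOUR_CONDA_ENV_NAME", "3.8")` would target the Python 3.8 `site-packages` directory of that environment.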

- Debug Mode

      # Verify the correctness of the team agent. Set GPU_RANK, TEAM_AGENT, and TEAM_CONFIG first.
      bash leaderboard/scripts/run_evaluation_debug.sh

- Multi-Process Multi-GPU Parallel Eval. If your team_agent saves images for debugging, they can take up a lot of disk space.

      # Set TASK_NUM, GPU_RANK_LIST, TASK_LIST, TEAM_AGENT, and TEAM_CONFIG. Recommended GPU:Task ratio is 1:2.
      # It is normal for some models to be unable to finish certain routes, no matter how many times the evaluation is restarted.
      # Treat these routes as failures, since this usually happens on routes where the agent behaves badly.
      bash leaderboard/scripts/run_evaluation_multi.sh

- Visualization: make a video for debugging, with CAN bus info printed on the sequential images.

      python tools/generate_video.py -f your_rgb_folder/

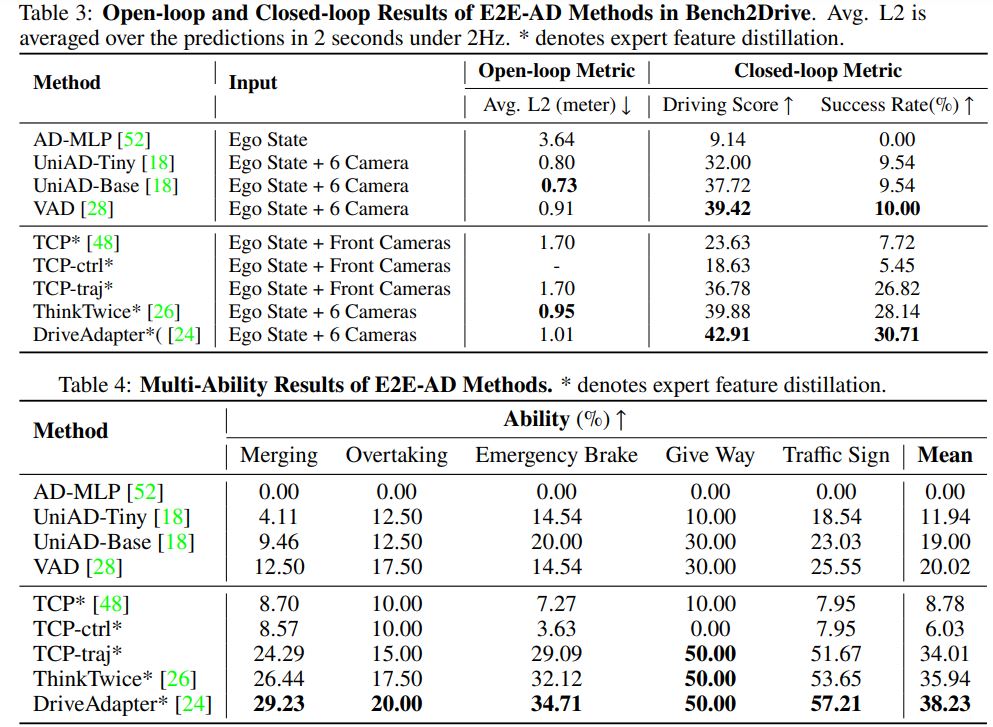
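The multi-process evaluation recommends a GPU:Task ratio of 1:2. The sketch below illustrates that ratio by assigning two tasks to each GPU and splitting the 220 routes into nearly equal contiguous shards. The helper names and the contiguous sharding are assumptions made for illustration; `run_evaluation_multi.sh` may divide the routes differently, and the exact format it expects for `GPU_RANK_LIST` and `TASK_LIST` is not shown here.

```python
def assign_tasks(gpu_ids, tasks_per_gpu=2):
    """Return the GPU id for each task, with `tasks_per_gpu` consecutive tasks per GPU."""
    return [gpu for gpu in gpu_ids for _ in range(tasks_per_gpu)]


def shard_routes(num_routes, task_num):
    """Split route indices 0..num_routes-1 into `task_num` nearly equal contiguous shards."""
    base, extra = divmod(num_routes, task_num)
    shards, start = [], 0
    for i in range(task_num):
        # The first `extra` shards take one additional route each.
        size = base + (1 if i < extra else 0)
        shards.append(range(start, start + size))
        start += size
    return shards


if __name__ == "__main__":
    gpu_for_task = assign_tasks([0, 1, 2, 3])        # 4 GPUs, 8 tasks
    shards = shard_routes(220, len(gpu_for_task))    # 220 routes in total
    for task, (gpu, shard) in enumerate(zip(gpu_for_task, shards)):
        print(f"task {task}: GPU {gpu}, routes {shard.start}..{shard.stop - 1}")
```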

- Metric

      # Merge the eval JSON files and get the driving score and success rate.
      # This script assumes that the total number of routes with results is 220. Any missing routes are scored as 0.
      python tools/merge_route_json.py -f your_json_folder/
      # Get multi-ability results
      python tools/ability_benchmark.py -r merge.json
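To make the effect of missing routes concrete, the sketch below averages over all 220 routes and counts any route without a result as a zero score and a failure. The input format (a dict mapping a route id to a `(driving_score, success)` pair) and the function name `summarize` are hypothetical; the real merge script reads the evaluation JSON files, whose schema is not reproduced here.

```python
TOTAL_ROUTES = 220  # number of routes the merge step expects


def summarize(results, total_routes=TOTAL_ROUTES):
    """Return (mean driving score, success rate) over `total_routes` routes.

    `results` maps route id -> (driving_score, success). Routes absent from
    `results` contribute a score of 0 and count as failures, because the sums
    are divided by `total_routes` rather than by len(results).
    """
    if len(results) > total_routes:
        raise ValueError("more results than routes")
    driving_score = sum(score for score, _ in results.values()) / total_routes
    success_rate = sum(1 for _, ok in results.values() if ok) / total_routes
    return driving_score, success_rate


if __name__ == "__main__":
    # Only half of the routes finished, each with a perfect score.
    partial = {route_id: (100.0, True) for route_id in range(110)}
    print(summarize(partial))
```

In this example the half-finished run gets half the driving score and half the success rate of a complete, perfect run, which is why unfinished routes should be re-run or accepted as failures before merging.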

All assets and code are under the Apache 2.0 license unless specified otherwise.

If this project helps your research, please consider citing our papers with the following BibTeX:

    @article{jia2024bench,
      title={Bench2Drive: Towards Multi-Ability Benchmarking of Closed-Loop End-To-End Autonomous Driving},
      author={Xiaosong Jia and Zhenjie Yang and Qifeng Li and Zhiyuan Zhang and Junchi Yan},
      journal={arXiv preprint arXiv:2406.03877},
      year={2024}
    }

    @article{li2024think,
      title={Think2Drive: Efficient Reinforcement Learning by Thinking in Latent World Model for Quasi-Realistic Autonomous Driving (in CARLA-v2)},
      author={Qifeng Li and Xiaosong Jia and Shaobo Wang and Junchi Yan},
      journal={arXiv preprint arXiv:2402.16720},
      year={2024}
    }