Here is a preview of the README for the code. I'm doing my best to keep all the code and datasets updated.

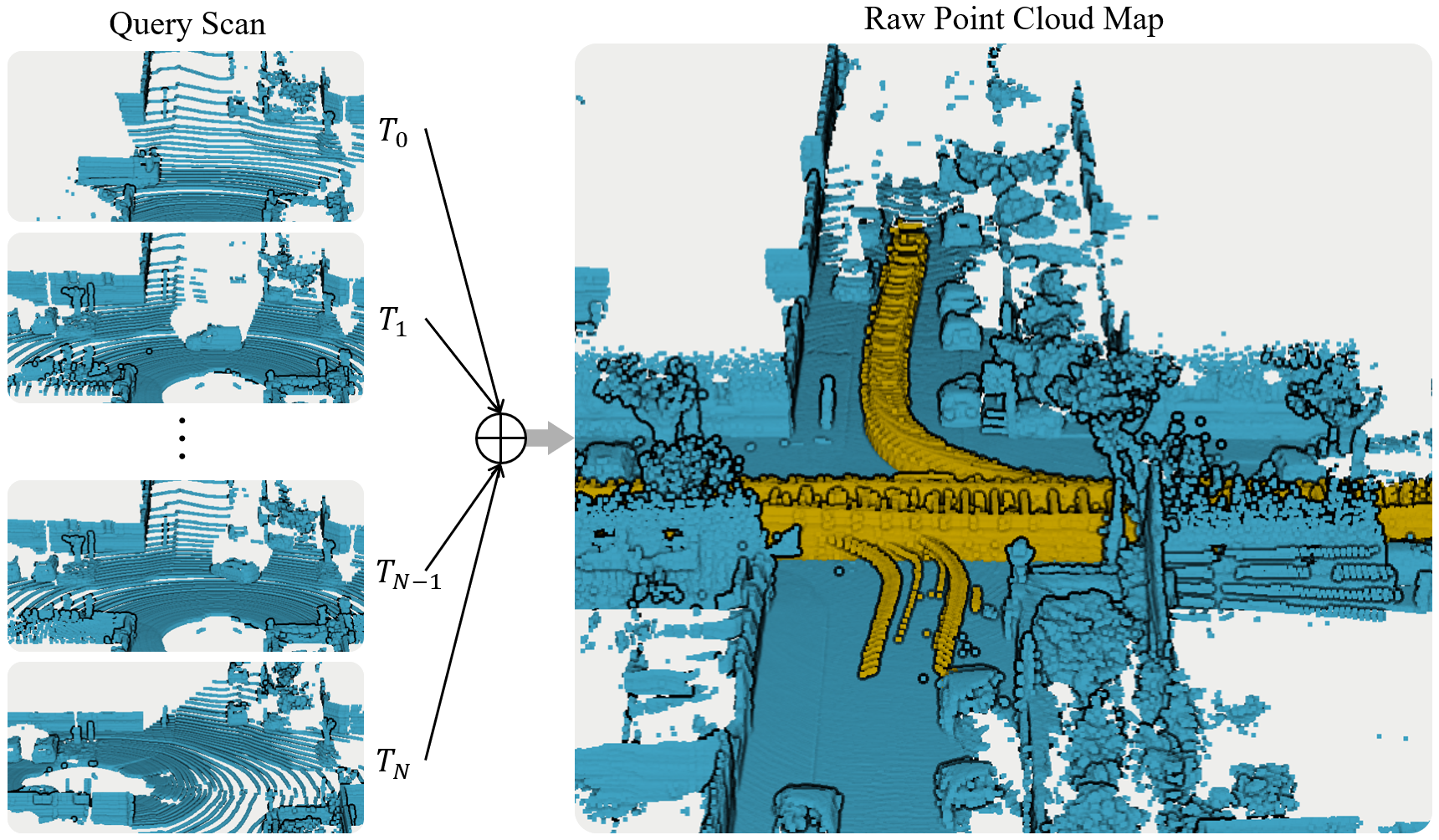

Task: detect dynamic points in maps and remove them to enhance the maps.

Folder quick view:

- methods: contains all the methods in the benchmark.

- scripts/py/eval: evaluates the result pcd against the ground truth and produces the quantitative table.

- scripts/py/data: pre-processes data before running the benchmark. We also directly provide all the datasets we tested. Because we run this benchmark offline on a computer, we extract only pcd files from custom rosbags or other data formats [KITTI, Argoverse2].
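To illustrate what "extract only pcd files" can look like, here is a minimal sketch that serializes XYZ points into an ASCII PCD (v0.7) file using only the Python standard library. The names `pcd_text` and `write_ascii_pcd` are hypothetical and not part of this repo. The actual scripts in scripts/py/data may store additional fields or use a binary encoding.

```python
from pathlib import Path
from typing import Iterable, Tuple

Point = Tuple[float, float, float]


def pcd_text(points: Iterable[Point]) -> str:
    """Return the content of an ASCII PCD v0.7 file holding XYZ points."""
    pts = list(points)
    header = [
        "# .PCD v0.7 - Point Cloud Data file format",
        "VERSION 0.7",
        "FIELDS x y z",
        "SIZE 4 4 4",
        "TYPE F F F",
        "COUNT 1 1 1",
        f"WIDTH {len(pts)}",   # unorganized cloud: width equals point count
        "HEIGHT 1",
        "VIEWPOINT 0 0 0 1 0 0 0",  # identity pose (assumption for this sketch)
        f"POINTS {len(pts)}",
        "DATA ascii",
    ]
    body = [f"{x:.6f} {y:.6f} {z:.6f}" for x, y, z in pts]
    return "\n".join(header + body) + "\n"


def write_ascii_pcd(path: str, points: Iterable[Point]) -> None:
    """Write the points to `path` as an ASCII PCD file."""
    Path(path).write_text(pcd_text(points))
```

A call such as `write_ascii_pcd("000000.pcd", [(1.0, 2.0, 3.0)])` would produce a file that CloudCompare or PCL can open; the file name here is only an example.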

Quick try:

- Teaser data: KITTI sequence 00 only, 530 MB, available for download through a personal OneDrive.

- Go to the methods folder, then build and run with:

./build/${methods_name}_run ${data_path, e.g. /data/00} ${config.yaml} -1

- Clone our repo:

git clone --recurse-submodules https://github.com/KTH-RPL/DynamicMap_Benchmark.git
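If you want to launch several runs from a script, the run command above can be assembled in Python. This is only a sketch: the helper names `build_run_command` and `run_method`, the lowercase method name `removert`, and the file name `config.yaml` are assumptions for illustration, and the trailing `-1` is copied verbatim from the command above.

```python
import shlex
import subprocess
from typing import List


def build_run_command(method_name: str, data_path: str,
                      config_path: str, last_arg: str = "-1") -> List[str]:
    """Mirror `./build/${methods_name}_run ${data_path} ${config.yaml} -1`."""
    return [f"./build/{method_name}_run", data_path, config_path, last_arg]


def run_method(method_name: str, data_path: str, config_path: str,
               method_dir: str = ".", dry_run: bool = True) -> List[str]:
    """Print the command (dry run) or execute it inside `method_dir`."""
    cmd = build_run_command(method_name, data_path, config_path)
    if dry_run:
        print(shlex.join(cmd))  # show the command without executing it
    else:
        # check=True raises CalledProcessError if the binary exits non-zero
        subprocess.run(cmd, cwd=method_dir, check=True)
    return cmd


if __name__ == "__main__":
    # Hypothetical method name and paths; replace them with your own.
    run_method("removert", "/data/00", "config.yaml")
```

The dry-run default lets you verify the paths before anything is executed; pass `dry_run=False` once the binary has been built.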

Please check the methods folder.

- ERASOR: RAL 2021 official link, benchmark implementation

- Removert: IROS 2020 official link, benchmark implementation

- Octomap w GF: benchmark improvement ITSC 2023, original mapping from ICRA 2010 & AR 2013 official link

- DUFOMap: Under review official link

- dynablox: ETH Arxiv official link, Benchmark Adaptation

Please note that we also provide the comparison methods, lightly modified so that we can run the experiments quickly; the core of each method is unchanged. Please check the LICENSE of each method at its official link before using it.

You will find all methods in this benchmark under the methods folder, so you can easily reproduce the experiments. We will also directly provide the result data so that you don't need to run the experiments yourself.

Last but not least, feel free to open a pull request if you want to add more methods. Contributions are welcome!

Download all these datasets from the Zenodo online drive, or create them yourself with the scripts we provide.

- Semantic-Kitti, outdoor small town VLP-64

- Argoverse2.0, outdoor US cities VLP-32

- [UDI-Plane] Our own dataset, collected by VLP-16 in a small vehicle.

- [KTH-Campus] Our multi-campus dataset, collected by Leica RTC360 Total Station.

- [KTH-Indoor] Our own dataset, collected by VLP-16/Mid-70 on a kobuki.

You are welcome to contribute your dataset with ground truth to the community through a pull request.

First, all the methods output a clean map. If you only need map cleaning, that is enough. For evaluation, however, we need to extract the ground truth labels for the points in the clean map. Why is this needed? Some methods downsample the point cloud in their pipeline, so the ground truth labels have to be extracted for the downsampled map.
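One way to picture this label extraction is a nearest-neighbor lookup: each point of the (possibly downsampled) clean map takes the label of the closest ground truth point. The sketch below is an assumption about how such a step could work, not the benchmark's actual implementation. The function name `transfer_labels`, the `max_dist` threshold, and the label convention (0 = static, 1 = dynamic, -1 = no match) are all hypothetical.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def transfer_labels(clean_points: Sequence[Point],
                    gt_points: Sequence[Point],
                    gt_labels: Sequence[int],
                    max_dist: float = 0.1,
                    unknown: int = -1) -> List[int]:
    """Give each clean-map point the label of its nearest GT point.

    Points with no GT neighbor within `max_dist` get the `unknown` label.
    Brute force, O(N * M): fine for a demo, too slow for full maps.
    """
    labels = []
    for p in clean_points:
        best_label, best_d2 = unknown, max_dist ** 2
        for q, label in zip(gt_points, gt_labels):
            d2 = sum((a - b) ** 2 for a, b in zip(p, q))
            if d2 <= best_d2:
                best_label, best_d2 = label, d2
        labels.append(best_label)
    return labels


# Toy data: assumed convention 0 = static, 1 = dynamic.
gt_points = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (5.0, 5.0, 5.0)]
gt_labels = [0, 1, 0]
clean_map = [(0.02, 0.0, 0.0), (0.98, 0.01, 0.0), (9.0, 9.0, 9.0)]
clean_labels = transfer_labels(clean_map, gt_points, gt_labels)
```

For real maps with millions of points, a KD-tree or voxel hash would replace the inner loop; the brute-force version is kept here only to make the idea easy to follow.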

Check the dataset creation section of the README in the scripts folder for more information. Alternatively, you can directly download the dataset through the link we provide; in that case there is no need to read about the creation process, just use the downloaded data.


Visualize the result pcd files in CloudCompare or with the script we provide. One click produces all evaluation benchmarks and comparison images like those in the paper; check scripts/py/eval.


All color bars are also provided in CloudCompare. Here is a tutorial on how we make the animation video.

This benchmark implementation is based on code from several repositories, as mentioned at the beginning. Thanks to the authors who kindly open-sourced their work for the community. Please see the reference section of our paper for more information.

This work was partially supported by the Wallenberg AI, Autonomous Systems and Software Program (WASP) funded by the Knut and Alice Wallenberg Foundation.

Please cite our work if you find it useful for your research.

Benchmark:

@article{zhang2023benchmark,

author={Qingwen Zhang and Daniel Duberg and Ruoyu Geng and Mingkai Jia and Lujia Wang and Patric Jensfelt},

title={A Dynamic Points Removal Benchmark in Point Cloud Maps},

journal={arXiv preprint arXiv:2307.07260},

year={2023}

}

DUFOMap:

@article{duberg2023dufomap,

author={Daniel Duberg* and Qingwen Zhang* and Mingkai Jia and Patric Jensfelt},

title={{DUFOMap}: TBD},

}