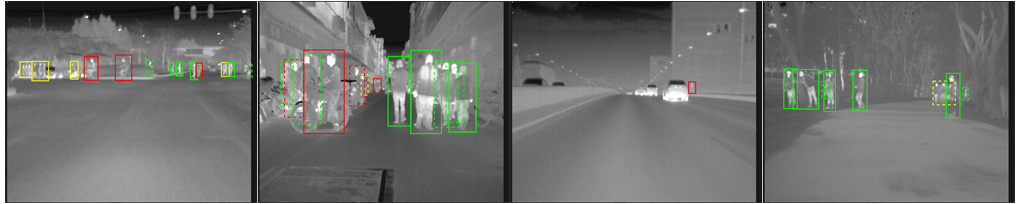

A new benchmark dataset and baseline for on-road FIR pedestrian detection

The SCUT FIR Pedestrian Datasets is a large far-infrared (FIR) pedestrian detection dataset. It consists of about 11 hours of image sequences (


For more details, see statistic.xlsx.

- S0-S10

| Label | Frames with anno. | Bounding boxes | Occluded BB | Unique | Avg. frames per obj. |
|---|---|---|---|---|---|
| walk person | 48857 | 103239 | 31630 | 1613 | 64.00 |
| ride person | 40519 | 71793 | 8697 | 807 | 88.96 |
| squat person | 1520 | 1608 | 324 | 22 | 73.09 |
| people | 26385 | 38358 | 9805 | 612 | 62.68 |
| person? | 13059 | 16820 | 6943 | 496 | 33.91 |
| people? | 6855 | 8479 | 2457 | 153 | 55.42 |
| Summary | 70517 | 240297 | 59856 | 3703 | 64.89 |

- S11-S20

| Label | Frames with anno. | Bounding boxes | Occluded BB | Unique | Avg. frames per obj. |
|---|---|---|---|---|---|
| walk person | 43421 | 90526 | 26185 | 1523 | 59.44 |
| ride person | 43153 | 86201 | 8689 | 1017 | 84.76 |
| squat person | 801 | 837 | 184 | 14 | 59.79 |
| people | 24098 | 33572 | 8903 | 647 | 51.89 |
| person? | 17208 | 22483 | 8759 | 642 | 35.02 |
| people? | 3399 | 3991 | 1604 | 97 | 41.14 |
| Summary | 73115 | 237610 | 54324 | 3940 | 60.31 |
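The "Avg. frames per obj." column appears to be the number of bounding boxes divided by the number of unique objects. The sketch below checks that reading against the S11-S20 figures; the helper name `avg_frames_per_object` is hypothetical and is not part of the dataset tools.

```python
# Rows copied from the S11-S20 table:
# label -> (bounding boxes, unique objects, reported average)
ROWS = {
    "walk person": (90526, 1523, 59.44),
    "ride person": (86201, 1017, 84.76),
    "squat person": (837, 14, 59.79),
    "people": (33572, 647, 51.89),
    "person?": (22483, 642, 35.02),
    "people?": (3991, 97, 41.14),
    "Summary": (237610, 3940, 60.31),
}


def avg_frames_per_object(bounding_boxes: int, unique_objects: int) -> float:
    """Assumed definition: each box is one frame of one tracked object."""
    return bounding_boxes / unique_objects


for label, (boxes, unique, reported) in ROWS.items():
    computed = avg_frames_per_object(boxes, unique)
    print(f"{label:13s} computed={computed:6.2f} reported={reported:6.2f}")
```

Under this assumption the computed values match the reported column to two decimal places.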

Videos: GoogleDrive, BaiduYun

Annotations: GoogleDrive, BaiduYun (please contact xzhewei@gmail.com)

- Github

Videos are in Seq format. The data format is compatible with the Caltech Pedestrian Dataset format.

- datatool. Evaluation/labeling code for our dataset, based on the Caltech Dataset code.

- toolbox. The tool that datatool depends on, based on Piotr's Matlab Toolbox.

- Thermal Faster R-CNN (TFRCN), released in xzhewei/TFRCN

| Method | Reasonable All | Overall |
|---|---|---|
| RPN+BF | 8.28 | 25.19 |
| TFRCN | 9.98 | 32.32 |
| RPN (RPN+BF) | 12.07 | 32.94 |
| Faster R-CNN-vanilla | 19.75 | 52.00 |
| RPN-vanilla | 34.87 | 61.20 |
| ACF-T+THOG | 43.70 | 62.11 |

The metric is the log-average miss rate over the range of [$10^{-2}$,

- Reasonable All

- Reasonable Walk Person

- Reasonable Ride Person

- Scale=Near

- Scale=Medium

- Scale=Far

- No Occlusion

- Occlusion

- Overall
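As a rough illustration of the log-average miss rate reported for the subsets above, the sketch below samples a miss-rate curve at reference points evenly spaced in log space over false positives per image (FPPI) and takes the geometric mean. The lower bound of 10^-2 comes from the text. The upper bound of 10^0, the nine sample points, and the function name `log_average_miss_rate` are assumptions borrowed from the Caltech benchmark convention, not taken from this dataset's datatool.

```python
import math
from bisect import bisect_right


def log_average_miss_rate(fppi, miss_rate, lo=1e-2, hi=1.0, num_points=9):
    """Geometric mean of miss rates sampled at log-spaced FPPI references.

    fppi:      FPPI values of the curve, sorted in ascending order.
    miss_rate: miss rate at each FPPI value (same length as fppi).
    lo, hi:    sampling range; hi=1.0 is an ASSUMED upper bound.
    """
    if len(fppi) != len(miss_rate):
        raise ValueError("fppi and miss_rate must have the same length")
    log_lo, log_hi = math.log10(lo), math.log10(hi)
    step = (log_hi - log_lo) / (num_points - 1)
    references = [10 ** (log_lo + i * step) for i in range(num_points)]

    sampled = []
    for ref in references:
        # Use the last curve point whose FPPI does not exceed the reference.
        idx = bisect_right(fppi, ref) - 1
        # If the curve starts above the reference, count it as a full miss.
        value = miss_rate[idx] if idx >= 0 else 1.0
        sampled.append(max(value, 1e-10))  # avoid log(0)
    return math.exp(sum(math.log(v) for v in sampled) / len(sampled))


# Illustrative curve only; these are not results from the dataset.
example_fppi = [0.005, 0.02, 0.08, 0.3, 0.9]
example_miss = [0.60, 0.45, 0.30, 0.20, 0.12]
print(f"log-average miss rate: {log_average_miss_rate(example_fppi, example_miss):.4f}")
```

Averaging in log space keeps a single low-FPPI point from being swamped by the high-FPPI end of the curve, which is why a geometric rather than arithmetic mean is used here.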

Please contact Zhewei Xu [xzhewei at gmail.com] with questions.