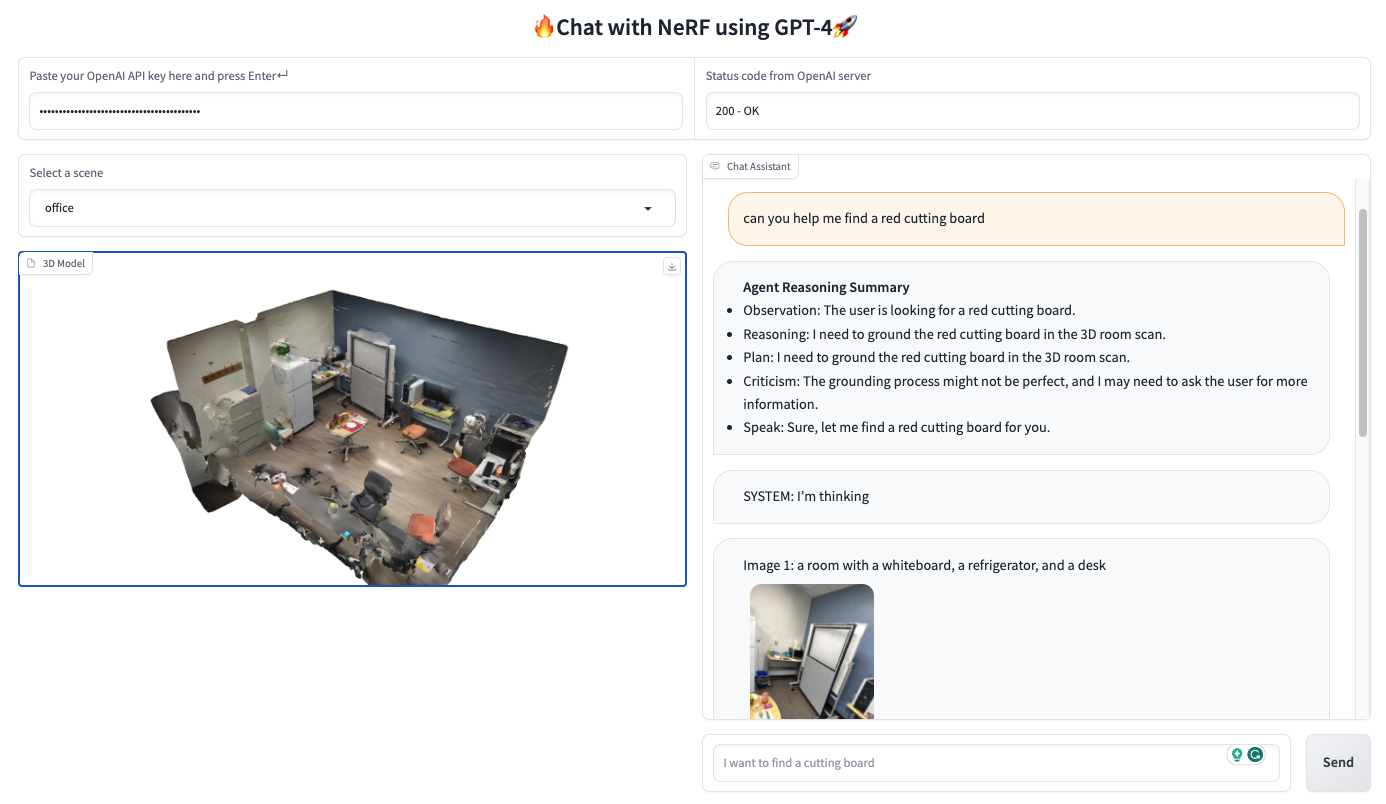

- Open-Vocabulary 3D Localization. Locate anything with natural language dialog!

- Dynamic Grounding. Humans can chat with the agent to localize novel objects.

[2023-05-15] The first version of chat-with-nerf is available now! Please try out the demo!

- Use LLaVA to replace GPT-4-text-only + BLIP for an end-to-end multimodal grounding pipeline.

To install the dependencies we provide a Dockerfile:

docker build -t chat-with-nerf:latest .

Or, to save significant time, pull the prebuilt image from Docker Hub:

docker pull jedyang97/chat-with-nerf:latest

Otherwise, if you prefer to build it locally:

conda create --name nerfstudio -y python=3.8

conda activate nerfstudio

pip install torch==1.13.1 torchvision functorch --extra-index-url https://download.pytorch.org/whl/cu117

pip install ninja git+https://github.com/NVlabs/tiny-cuda-nn/#subdirectory=bindings/torch

pip install nerfstudio

git clone https://github.com/kerrj/lerf

cd lerf

python -m pip install -e .

ns-train -h

Note that CUDA 11.3 specifically is required. For further information, please check the nerfstudio installation guide.

Then, on your local machine, run:

git clone https://github.com/sled-group/chat-with-nerf.git

If using Docker, you can use the following command to spin up a Docker container with chat-with-nerf mounted under workspace:

docker run --gpus "device=0" -v /<parent_path_chat-with-nerf>/:/workspace/ -v /home/<your_username>/.cache/:/home/user/.cache/ --rm -it --shm-size=12gb chat-with-nerf:latest

Then install the Chat with NeRF dependencies:

cd /workspace/chat-with-nerf

pip install -e .

pip install -e .[dev]

(Or use your favorite virtual environment manager.)
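The `docker run` command above packs several placeholders into one line. As a minimal sketch, it could be wrapped in a small shell function so the parent directory, GPU index, and image name become arguments. The function name `run_chat_with_nerf_container` and the `DRY_RUN` variable are hypothetical and not part of this repository. The sketch also assumes that `$HOME` corresponds to `/home/<your_username>` on your machine.

```shell
# Hypothetical wrapper around the `docker run` command from this README.
#   $1: parent directory that contains the chat-with-nerf checkout (required)
#   $2: GPU index passed to --gpus (default: 0)
#   $3: image name (default: chat-with-nerf:latest, the locally built tag)
# Set DRY_RUN=1 to print the command instead of executing it.
run_chat_with_nerf_container() {
    parent_dir="$1"
    gpu="${2:-0}"
    image="${3:-chat-with-nerf:latest}"

    if [ -z "$parent_dir" ]; then
        echo "usage: run_chat_with_nerf_container <parent_dir> [gpu] [image]" >&2
        return 1
    fi

    # ${DRY_RUN:+echo} expands to `echo` only when DRY_RUN is set and non-empty.
    ${DRY_RUN:+echo} docker run --gpus "device=${gpu}" \
        -v "${parent_dir}/:/workspace/" \
        -v "${HOME}/.cache/:/home/user/.cache/" \
        --rm -it --shm-size=12gb \
        "${image}"
}
```

If you pulled the image from Docker Hub instead of building it, pass `jedyang97/chat-with-nerf:latest` as the third argument, since that is the name the pulled image carries.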

To run the demo:

cd /workspace/chat-with-nerf

export $(cat .env | xargs); gradio chat_with_nerf/app.py
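The `export $(cat .env | xargs)` idiom above exports each `KEY=VALUE` pair from `.env` into the shell before launching Gradio. It can misbehave when the file contains comment lines or values with spaces. A minimal alternative sketch, assuming `.env` uses plain shell-compatible `KEY=VALUE` syntax, is shown below. The helper name `load_env_file` is hypothetical and not part of this repository.

```shell
# Hypothetical helper: export every KEY=VALUE pair from a dotenv-style file
# into the current shell. Defaults to ./.env when no path is given.
load_env_file() {
    env_file="${1:-.env}"

    if [ ! -f "$env_file" ]; then
        echo "missing env file: $env_file" >&2
        return 1
    fi

    # A bare file name would be looked up on PATH by `.`, so make it relative.
    case "$env_file" in
        */*) ;;
        *) env_file="./$env_file" ;;
    esac

    set -a            # auto-export every variable assigned while sourcing
    . "$env_file"     # the file runs as shell code: only source trusted files
    set +a
}
```

With this helper defined, the demo could be started with `load_env_file .env && gradio chat_with_nerf/app.py`. Because the file is sourced, quoted values and `#` comment lines are handled by the shell itself.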

@misc{chat-with-nerf-2023,

title = {Chat with NeRF: Grounding 3D Objects in Neural Radiance Field through Dialog},

url = {\url{https://github.com/sled-group/chat-with-nerf}},

author = {Yang, Jianing and Chen, Xuweiyi and Qian, Shengyi and Fouhey, David and Chai, Joyce},

month = {May},

year = {2023}

}