Demo • Introduction • Framework • Features • Docs • Tutorials • Contributions

FedEm is an open-source library empowering community members to actively participate in the training and fine-tuning of foundational models, fostering transparency and equity in AI development. It aims to democratize the process, ensuring inclusivity and collective ownership in model training.

See this presentation

$ pip install fedemThe emergence of ChatGPT captured widespread attention, marking the first instance where individuals outside of technical circles could engage with Generative AI. This watershed moment sparked a surge of interest in cultivating secure applications of foundational models, alongside the exploration of domain-specific or community-driven alternatives to ChatGPT. Notably, the unveiling of LLaMA 2, an LLM generously open-sourced by Meta, catalyzed a plethora of advancements. This release fostered the creation of diverse tasks, tools, and resources, spanning from datasets to novel models and applications. Additionally, the introduction of Phi 2, an SLM by Microsoft, demonstrated that modestly-sized models could rival their larger counterparts, offering a compelling alternative that significantly reduces both training and operational costs.

Yet, amid these strides, challenges persist. The training of foundational models within current paradigms demands substantial GPU resources, presenting a barrier to entry for many eager contributors from the broader community. In light of these obstacles, we advocate for FedEm.

FedEm (Federated Emergence) stands as an open-source library dedicated to decentralizing the training process of foundational models, with a commitment to transparency, responsibility, and equity. By empowering every member of the community to participate in the training and fine-tuning of foundational models, FedEm mitigates the overall computational burden per individual, fostering a more democratic approach to model development. In essence, FedEm epitomizes a paradigm shift, where foundational models are crafted not just for the people, but by the people, ensuring inclusivity and collective ownership throughout the training journey.

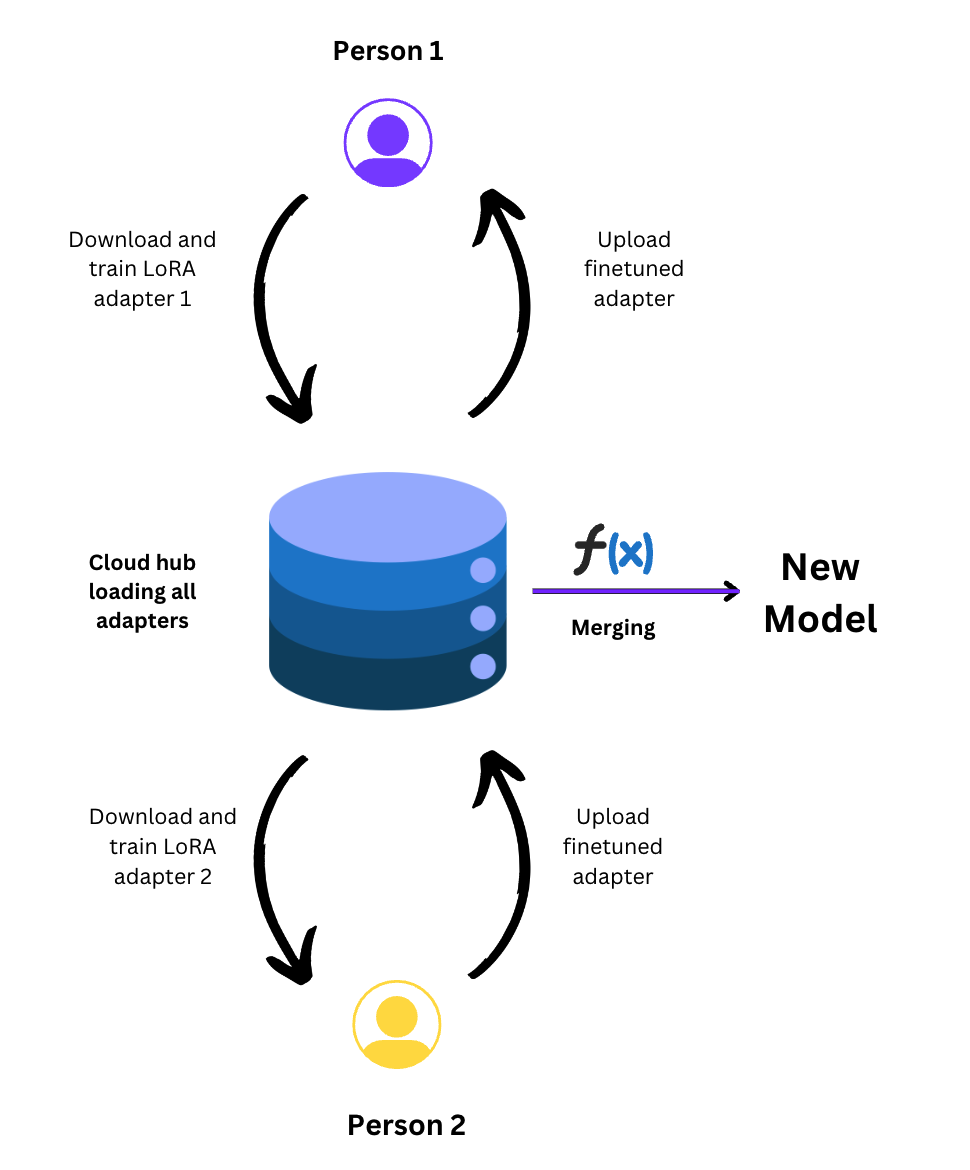

FedEm proposes a methodology to train a foundational model continuously, utilizing adapters. FedEm can be elaborated in mainly two sections. Decentralization of adapter training using CRFs and large scale updation using continuous pretraining checkpoints. We introduce the concept of continuous relay finetuning (CRF), which employs parameter-efficient LoRA adapters in a relay-like fashion for training foundational models. In this method, a client conducts local training of an adapter on a specified dataset, followed by its transmission to a cloud server for subsequent download by another client for further finetuning. FedEm ensures the continuous training of adapters, which are subsequently merged with a foundational model to create an updated model. CRF facilitates community engagement throughout the training process and offers a transparent framework for tracking and continuously updating adapters as new data becomes available, thereby enhancing the adaptability and inclusivity of AI development efforts.The server-side cloud hub exhibits the capability for perpetual training and deployment of refreshed foundational models at specified intervals, such as monthly or daily cycles. Simultaneously, the CRF adapters engage in iterative refinement against these newly updated models, fostering continual adaptation in response to evolving datasets.

For continuous relay finetuning, It is important to schedule the adapter training in a fashion that no two clients have the same adapter for training at one point of time. To ensure this access control, we use a time-dependent adapter scheduling. A client downloads an adapter at time T. The adapter will get locked for any other client i.e. cannot be finetuned till one client does not stop finetuning of that adapter. The hub checks periodically in every 5 minutes for access control of adapters. The adapter gets unlocked if any of the following conditions are met:

- time elapsed for finetuning adapter A > 3 hours.

- client pushes the finetuned adapters before 3 hours.

Further, majority, with the exception of some Chinese LLMs, are English-centric and other languages have a token representation (no pun intended). Often, LLMs have a particulalr tokenizer -- which makes extension to other languages/ domains hard. Vocabulary size and Transfomers Computational Efficiency have an uneasy relationship. Developing SLMs or LLMs is still a compute heavy problem. Therefore, only large consortia with deep pockets, massive talent concentration and GPU farms can afford to build such models.

- has GPU, registers on HuggingFace/mlsquare for write access

- familair with HuggingFace ecosystem (transfomers, peft, datasets, hub)

- [optional] can donate time or data or both

Runs client side script which

- downloads data, pretrains model

- SFTs via LoRA

- pushes the adapter to HuggingFace model hub

- has (big) GPU(s)

- is familair with HuggingFace ecosystem (transfomers, peft, datasets, hub), databases, ML Enginneering in general

- [optional] can donate time or data or both

- Pretrains a multi-lingual Mamba model, publishes a checkpoint

- Evaluated the community contributed adapters in a single-blind fashion, and merges them into the pretrained model

- Does continous pretrainning, and releases checkpoints periodically

- experiment and identify good federating learning policies

- figure out effective training configurations to PT, CPT, SFT, FedT SLMs and LLMs

- develop new task specific adapters

- contribute your local, vernacular data

- curate datasets

Fedem is an open-source project, and contributions are welcome. If you want to contribute, you can create new features, fix bugs, or improve the infrastructure. Please refer to the CONTRIBUTING.md file in the repository for more information on how to contribute.

The views expressed or approach being taken - is of the individuals, and they do not represent any organization explicitly or implicitly. Likewise, anyone who wants to contribute their time, compute or data must understand that, this is a community experiment to develop LLMs by the community, and may not result in any significant outcome. On the contrary, this may end up in total failure. The contributors must take this risk on their own.

To see how to contribute, visit Contribution guidelines

Initial Contributors: @dhavala, @yashwardhanchaudhuri, & @SaiNikhileshReddy

Make Mamba compatiable with Transformer classTest LoRA adapters (adding, training, merging)Pretrain an SLM, SFT on LoRA, Merge, Push

Outcome: A working end-to-end Pretraining and SFT-ing pipeline[DONE]

Develop client-side codeOn multi-lingual indic dataset such as samantar, pretrain a model

Outcome: Release a checkpoint[DONE]

Drive SFT via community (at least two users)Run Federated SFT-ing

- Benchmark and eval on test set (against other OSS LLMS)

- Perplexity vs Epochs (and how Seshu is maturing)

- Mamba: Linear-Time Sequence Modeling with Selective State Spaces, paper, 1st, Dec, 2023

- MambaByte: Token-free Selective State Space Model paper, 24th, Jan, 2024

- BlackMamba - Mixture of Experts of State Space Models paper, code

- ByT5: Towards a token-free future with pre-trained byte-to-byte models paper, 28th, May, 2023

- RomanSetu: Efficiently unlocking multilingual capabilities of Large Language Models models via Romanization paper, 25th, Jan, 2024

- Open Hathi - blog from sarvam.ai, 12th Dec, 2023

- MaLA-500: Massive Language Adaptation of Large Language Models paper

- Model soups: averaging weights of multiple fine-tuned models improves accuracy without increasing inference time paper

- Editing Models with Task Arithmetic paper

- TIES-Merging: Resolving Interference When Merging Models paper

- Language Models are Super Mario: Absorbing Abilities from Homologous Models as a Free Lunch paper

- Rethinking Alignment via In-Context Learning (implments token distribution shift) blog

- PhatGoose: The Challenge of Recycling PEFT Modules for Zero-Shot Generalization blog

- LoRA Hub: Efficient Cross-Task Generalization via Dynamic LoRA Composition (https://arxiv.org/abs/2307.13269)

- samantar here

- Mamba-HF: Mamba model compatible with HuggingFace transformers here

- Mamba pretrained model collection here

- Mamba-minimal: a minimal implementaiton of Mamba architecture here

- Mamba: original implementation by Mama authors here

- OLMo: A truly open LLM blog

- Petals: decentralized inference and finetuning of large language models blog, paper, git repo

- position blog on Petals: a shift in training LLMs with Petals network techcrunch blog

- Shepherd: A Platform Supporting Federated Instruction Tuning here

- FATE-LM is a framework to support federated learning for large language models(LLMs) here

- FEDML Open Source: A Unified and Scalable Machine Learning Library for Running Training and Deployment Anywhere at Any Scale here

- mergekit for model merign to implement multiple model merging techiques here