By Anh Nguyen, Quang D. Tran, Thanh-Toan Do, Ian Reid, Darwin G. Caldwell, Nikos G. Tsagarakis

- TensorFlow (version > 1.0)

- Hardware

  - A GPU with ~6GB of memory
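The version requirement above can be checked before building anything. The following is a minimal sketch, not part of the repo: the helper names `parse_version` and `is_newer_than` are hypothetical, and it only compares the numeric parts of a version string against the stated minimum of 1.0.

```python
import re


def parse_version(version):
    """Turn a string such as '1.12.0-rc1' into (1, 12, 0).

    Only the leading digits of each dot-separated piece are kept.
    """
    parts = []
    for piece in version.split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break
        parts.append(int(match.group()))
    return tuple(parts)


def is_newer_than(version, minimum="1.0"):
    """Return True if `version` is strictly greater than `minimum`."""
    a, b = parse_version(version), parse_version(minimum)
    # Pad with zeros so that '1.0.0' and '1.0' compare as equal.
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a > b
```

With TensorFlow installed, `is_newer_than(tf.__version__)` after `import tensorflow as tf` would report whether the installed version satisfies the requirement.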

- Clone the repo to your

$PROJECT_PATHfolder - Download pretrained weight from this link, and put it under your

$PROJECT_PATH\trained_weightfolder - Download the Flickr5k dataset, and put it under your

$PROJECT_PATH\data\VOCdevkit2007folder - Change the project path in file

lib/model/config.py:__C.root_folder_path = '$PROJECT_PATH' - Build the lib module:

cd $PROJECT_PATH/libthenmake - Run the demo:

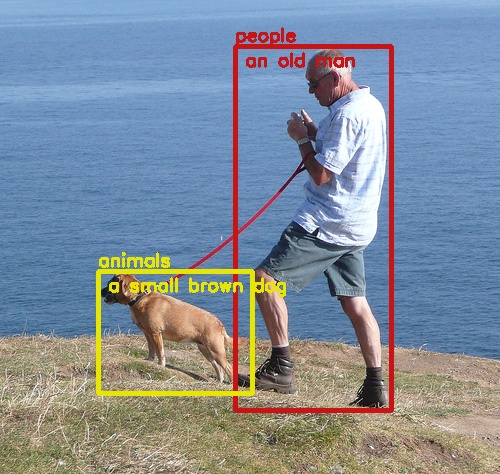

cd $PROJECT_PATH/toolthenpython demo_caption.pyto generate captions for your images
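Before running the demo, it can help to confirm that the folders named in the steps above are in place. This is a small sketch under the assumption that the four subfolders mentioned in this README (`lib`, `tool`, `trained_weight`, `data/VOCdevkit2007`) sit directly under the project path; the helper `missing_folders` is hypothetical and not part of the repo.

```python
import os

# Subfolders referenced by the setup steps in this README.
EXPECTED_SUBFOLDERS = (
    "lib",
    "tool",
    "trained_weight",
    os.path.join("data", "VOCdevkit2007"),
)


def missing_folders(project_path):
    """Return the expected subfolders that do not exist under project_path."""
    return [
        sub
        for sub in EXPECTED_SUBFOLDERS
        if not os.path.isdir(os.path.join(project_path, sub))
    ]
```

Calling `missing_folders(os.environ["PROJECT_PATH"])` would return an empty list when every folder is present, assuming the `PROJECT_PATH` environment variable is set.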

- We train the network on the Flickr5k dataset.

  - We need to format the Flickr5k dataset as in the Pascal-VOC dataset for training.

  - For your convenience, we did it for you. Just download this file (Google Drive) and extract it into your `$PROJECT_PATH/data/VOCdevkit2007` folder.

- Train the network: `python $PROJECT_PATH/tool/trainval_net.py`
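For readers who want to prepare their own data, the Pascal-VOC formatting mentioned above can be sketched as follows. This only illustrates the general Pascal-VOC annotation layout (one XML file per image, with one `<object>` entry per bounding box). Storing the caption in the `<name>` field is an assumption made for illustration; the provided archive may organize captions differently. The function `voc_annotation`, the file name, and the example box are all hypothetical.

```python
import xml.etree.ElementTree as ET


def voc_annotation(filename, width, height, objects):
    """Build a Pascal-VOC-style <annotation> element.

    `objects` is a list of (label, (xmin, ymin, xmax, ymax)) tuples.
    """
    root = ET.Element("annotation")
    ET.SubElement(root, "folder").text = "VOC2007"
    ET.SubElement(root, "filename").text = filename
    size = ET.SubElement(root, "size")
    for tag, value in (("width", width), ("height", height), ("depth", 3)):
        ET.SubElement(size, tag).text = str(value)
    for label, box in objects:
        obj = ET.SubElement(root, "object")
        # Assumption: the caption is stored where VOC keeps the class name.
        ET.SubElement(obj, "name").text = label
        ET.SubElement(obj, "difficult").text = "0"
        bndbox = ET.SubElement(obj, "bndbox")
        for tag, value in zip(("xmin", "ymin", "xmax", "ymax"), box):
            ET.SubElement(bndbox, tag).text = str(value)
    return root


# Hypothetical example: one image with one captioned object.
example = voc_annotation(
    "000001.jpg", 500, 375, [("a brown dog", (48, 240, 195, 371))]
)
xml_text = ET.tostring(example, encoding="unicode")
```

The resulting `xml_text` could then be written to an `Annotations` folder next to the images, as in the standard VOCdevkit layout.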

If you find this source code useful in your research, please consider citing:

@article{Nguyen_objcaption,
  author = {Anh Nguyen and
            Duy Q. Tran and
            Thanh{-}Toan Do and
            Ian D. Reid and
            Darwin G. Caldwell and
            Nikos G. Tsagarakis},
  title = {Object Captioning and Retrieval with Natural Language},
  journal = {International Conference on Computer Vision Workshop},
  year = {2019},
}

MIT License

This repo uses a lot of source code from Faster-RCNN and AffordanceNet.

If you have any questions or comments, please send an email to: a.nguyen@ic.ac.uk