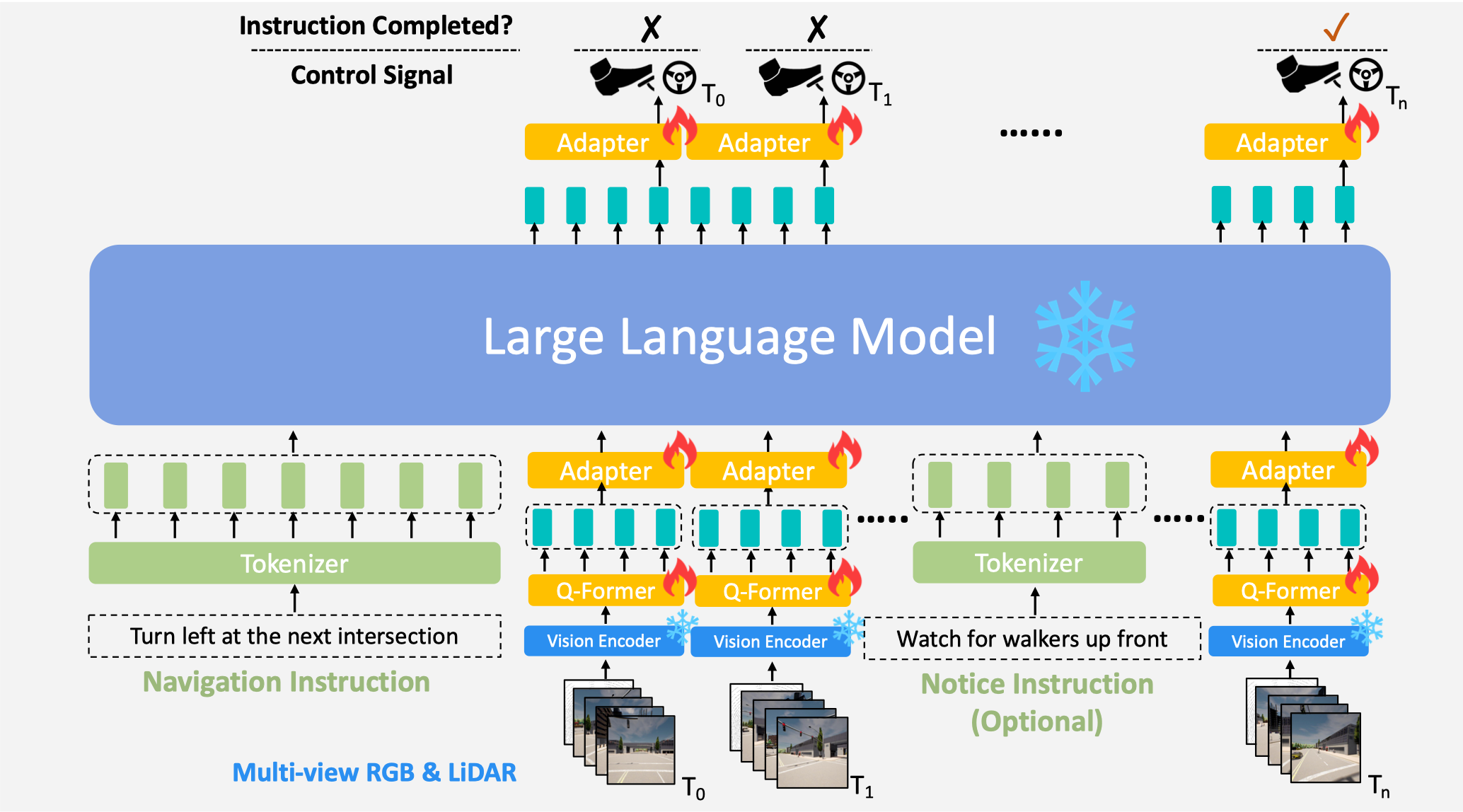

An end-to-end, closed-loop, language-based autonomous driving framework, which interacts with the dynamic environment via multi-modal multi-view sensor data and natural language instructions.

[Project Page] [Paper] [Dataset(hugging face)] [Model Zoo]

[Dataset(OpenXlab)] [Model Zoo(OpenXLab)]

[02/27]LMDrive is accepted by CVPR 2024 🎉🎉🎉[01/25]We uploaded our models to OpenXLab[01/23]We gave a talk at ZhiDongXi (智东西)[01/20]We uploaded our dataset to OpenXLab[12/21]We released our project website here

Hao Shao, Yuxuan Hu, Letian Wang, Steven L. Waslander, Yu Liu, Hongsheng Li.

This repository contains code for the paper LMDrive: Closed-Loop End-to-End Driving with Large Language Models. This work proposes a novel language-guided, end-to-end, closed-loop autonomous driving framework.

demo_video.mp4

Our project is built on three parts: (1) vision encoder (corresponding repo: timm); (2) vision LLM (corresponding repo: LAVIS); (3) data collection, agent controller (corresponding repo: InterFuser, Leaderboard, ScenarioRunner).

Install anaconda

wget https://repo.anaconda.com/archive/Anaconda3-2020.11-Linux-x86_64.sh

bash Anaconda3-2020.11-Linux-x86_64.sh

source ~/.bashrcClone the repo and build the environment

git clone https://github.com/opendilab/LMDrive.git

cd LMDrive

conda create -n lmdrive python=3.8

conda activate lmdrive

cd vision_encoder

pip3 install -r requirements.txt

python setup.py develop # if you have installed timm before, please uninstall it

cd ../LAVIS

pip3 install -r requirements.txt

python setup.py develop # if you have installed LAVIS before, please uninstall it

pip install flash-attn --no-build-isolation # optionalDownload and setup CARLA 0.9.10.1

chmod +x setup_carla.sh

./setup_carla.sh

pip install carlaIf you encounter some problems related to Carla, please refer to Carla Issues and InterFuser Issues first.

If you are interested in including any other details in Model Zoo, please open an issue :)

| Version | Size | Checkpoint | VisionEncoder | LLM-base | DS (LangAuto) | DS (LangAuto-short) |

|---|---|---|---|---|---|---|

| LMDrive-1.0 (LLaVA-v1.5-7B) | 7B | LMDrive-llava-v1.5-7b-v1.0 | R50 | LLaVA-v1.5-7B | 36.2 | 50.6 |

| LMDrive-1.0 (Vicuna-v1.5-7B) | 7B | LMDrive-vicuna-v1.5-7b-v1.0 | R50 | Vicuna-v1.5-7B | 33.5 | 45.3 |

| LMDrive-1.0 (LLaMA-7B) | 7B | LMDrive-llama-7b-v1.0 | R50 | LLaMA-7B | 31.3 | 42.8 |

DS denotes the driving score

We aim to develop an intelligent driving agent that can generate driving actions based on three sources of input: 1) sensor data (multi-view camera and LiDAR), so that the agent can generate actions that are aware of and compliant with the current scene; 2) navigation instructions (e.g. lane changing, turning), so that the agent can drive to meet the requirement in natural language (instruction from humans or navigation software); and 3) human notice instruction, so that the agent can interact with humans and adapt to human's suggestions and preferences (e.g. pay attention to adversarial events, deal with long-tail events, etc).

We provide a dataset with about 64K data clips, where each clip includes one navigation instruction, several notice instructions, a sequence of multi-modal multi-view sensor data, and control signals. The duration of the clip spans from 2 to 20 seconds. The dataset used in our paper can be downloaded here. If you want to create your own dataset, please follow the steps we've outlined below.

The data is generated with leaderboard/team_code/auto_pilot.py in 8 CARLA towns using the routes and scenarios files provided at leaderboard/data on CARLA 0.9.10.1 . The dataset is collected at a high frequency (~10Hz).

Once you have downloaded our dataset or collected your own dataset, it's necessary to systematically organize the data as follows. DATASET_ROOT is the root directory where your dataset is stored.

├── $DATASET_ROOT

│ └── dataset_index.txt # for vision encoder pretraining

│ └── navigation_instruction_list.txt # for instruction finetuning

│ └── notice_instruction_list.json # for instruction finetuning

│ └── routes_town06_long_w7_11_28_18_28_35 # data folder

│ └── routes_town01_short_w2_11_16_08_27_10

│ └── routes_town02_short_w2_11_16_22_55_25

│ └── routes_town01_short_w2_11_16_11_44_08

├── rgb_full

├── lidar

└── ...

The navigation_instruction_list.txt and notice_instruction_list.txt can be generated with our scripts by the data parsing scripts.

Each subfolder in the dataset you've collected should be structured as follows:

- routes_town(town_id)_{tiny,short,long}_w(weather_id)_timestamp: corresponding to different towns and routes files

- routes_X: contains data for an individual route

- rgb_full: a big multi-view camera image at 400x1200 resolution, which can be split into four images (left, center, right, rear)

- lidar: 3d point cloud in .npy format. It only includes the LiDAR points captured in 1/20 second, covering 180 degrees of horizontal view. So if you want to utilize 360 degrees of view, you need to merge it with the data from lidar_odd.

- lidar_odd: 3d point cloud in .npy format.

- birdview: topdown segmentation images, LAV and LBC used this type of data for training

- topdown: similar to birdview but it's captured by the down-facing camera

- 3d_bbs: 3d bounding boxes for different agents

- affordances: different types of affordances

- actors_data: contains the positions, velocities and other metadata of surrounding vehicles and the traffic lights

- measurements: contains ego agent's position, velocity, future waypoints, and other metadata

- measurements_full: merges measurement and actors_data

- measurements_all.json: merges the files in measurement_full into a single file

The $DATASET_ROOT directory must contain a file named dataset_index.txt, which can be generated by our data pre-processing script. It should list the training and evaluation data in the following format:

<relative_route_path_dir> <num_data_frames_in_this_dir>

routes_town06_long_w7_11_28_18_28_35/ 1062

routes_town01_short_w2_11_16_08_27_10/ 1785

routes_town01_short_w2_11_16_09_55_05/ 918

routes_town02_short_w2_11_16_22_55_25/ 134

routes_town01_short_w2_11_16_11_44_08/ 569

Here, <relative_route_path_dir> should be a relative path to the $DATASET_ROOT. The training code will concatenate the $DATASET_ROOT and <relative_route_path_dir> to create the full path for loading the data.

In this format, 1062 represents the number of frames in the routes_town06_long_w7_11_28_18_28_35/rgb_full directory or routes_town06_long_w7_11_28_18_28_35/lidar, etc.

In addition to the dataset, we have also provided all the scripts used for generating data and these can be modified as required for different CARLA versions. The dataset is collected by a rule-based expert agent in different weathers and towns.

# Start 4 carla servers: ip [localhost], port [2000, 2002, 2004, 2006]. You can adjust the number of CARLA servers according to your situation and more servers can collect more data. If you use N servers to collect data, it means you have collected data N times on each route, except that the weather and traffic scenarios are random each time.

cd carla

CUDA_VISIBLE_DEVICES=0 ./CarlaUE4.sh --world-port=2000 -opengl &

CUDA_VISIBLE_DEVICES=1 ./CarlaUE4.sh --world-port=2002 -opengl &

CUDA_VISIBLE_DEVICES=2 ./CarlaUE4.sh --world-port=2004 -opengl &

CUDA_VISIBLE_DEVICES=3 ./CarlaUE4.sh --world-port=2006 -opengl &Instructions for setting up docker are available here. Pull the docker image of CARLA 0.9.10.1 docker pull carlasim/carla:0.9.10.1.

Docker 18:

docker run -it --rm -p 2000-2002:2000-2002 --runtime=nvidia -e NVIDIA_VISIBLE_DEVICES=0 carlasim/carla:0.9.10.1 ./CarlaUE4.sh --world-port=2000 -opengl

Docker 19:

docker run -it --rm --net=host --gpus '"device=0"' carlasim/carla:0.9.10.1 ./CarlaUE4.sh --world-port=2000 -openglIf the docker container doesn't start properly then add another environment variable -e SDL_AUDIODRIVER=dsp.

Generate scripts for collecting data in batches.

cd dataset

python init_dir.py

cd ..

cd data_collection

# You can modify FPS, waypoints distribution strength in auto_agent.yaml ...

# If you do not use 4 servers, the following Python scripts are needed to modify

python generate_bashs.py

python generate_batch_collect.py

cd ..Run batch-run scripts of the town and route type that you need to collect.

bash data_collection/batch_run/run_route_routes_town01_long.sh

bash data_collection/batch_run/run_route_routes_town01_short.sh

...

bash data_collection/batch_run/run_route_routes_town07_tiny.sh

...

bash data_collection/batch_run/run_route_routes_town10_tiny.shNote: Our scripts will use a random weather condition for data collection

With a single CARLA server, roll out the autopilot to start data generation.

carla/CarlaUE4.sh --world-port=2000 -opengl

./leaderboard/scripts/run_evaluation.shThe expert agent used for data generation is defined in leaderboard/team_code/auto_pilot.py. Different variables which need to be set are specified in leaderboard/scripts/run_evaluation.sh.

We provide some Python scripts for pre-processing the collected data in tools/data_preprocessing, some of them are optional. Please execute them in the order:

python get_list_file.py $DATASET_ROOT: obtain the dataset_list.txt.python batch_merge_data.py $DATASET_ROOT: merge several scattered data files into one file to reduce IO time when training. [Optional]python batch_rm_rgb_data.py $DATASET_ROOT: delete redundant files after we have merged them into new files. [Optional]python batch_stat_blocked_data.py $DATASET_ROOT: find the frames that the ego-vehicle is blocked for a long time. By removing them, we can enhance data distribution and decrease the overall data size.python batch_rm_blocked_data.py $DATASET_ROOT: delete the blocked frames.python batch_recollect_data.py $DATASET_ROOT: since we have removed some frames, we need to reorganize them to ensure that the frame ids are continuous.python batch_merge_measurements.py $DATASET_ROOT: merge the measurement files from all frames in one route folder to reduce IO time

After collecting and pre-processing the data, we need to parse the navigation instructions and notice instructions data with some Python scripts in tools/data_parsing.

The script for parsing navigation instructions:

python3 parse_instruction.py $DATSET_ROOT The parsed navigation clips will be saved in $DATSET_ROOT/navigation_instruction_list.txt, under the root directory of the dataset.

The script for parsing notice instructions:

python3 parse_notice.py $DATSET_ROOT The parsed notice clips will be saved in $DATSET_ROOT/notice_instruction_list.txt.

The script for parsing misleading instructions:

python3 parse_misleading.py $DATSET_ROOT The parsed misleading clips will be saved in $DATSET_ROOT/misleading_data.txt.

LMDrive's training consists of two stages: 1) the vision encoder pre-training stage, to generate visual tokens from sensor inputs; and 2) the instruction-finetuning stage, to align the instruction/vision and control signal.

LMDrive is trained on 8 A100 GPUs with 80GB memory (the first stage can be trained on GPUS with 32G memory). To train on fewer GPUs, you can reduce the batch-size and the learning-rate while maintaining their proportion.

Please download the multi-modal dataset with instructions collected in the CARLA simulator we use in the paper here or openxlab (uploading)], if you do not collect the dataset by yourself. You can only download part of them to verify our framework or your improvement.

Pretrain takes around 2~3 days for the visual encoder on 8x A100 (80G). Once the training is completed, you can locate the checkpoint of the vision encoder in the output/ directory.

cd vision_encoder

bash scripts/train.shSome options to note:

GPU_NUM: the number of GPUs you want to use. By default, it is set to 8.DATASET_ROOT: the root directory for storing the dataset.--model: the structure of visual model. You can choose memfuser_baseline_e1d3_r26 which replaces ResNet50 with ResNet26. It's also possible to create new model variants invisual_encoder/timm/models/memfuser.py--train-towns/train-weathers: the data filter for the training dataset. Similarly, there are corresponding options,val-towns/val-weathersto filter the validation dataset accordingly.

Instruction finetuning takes around 2~3 days for the visual encoder on 8x A100 (80G). Once the training is completed, you can locate the checkpoint of the adapter and qformer in the lavis/output/ directory.

cd LAVIS

bash run.sh 8 lavis/projects/lmdrive/notice_llava15_visual_encoder_r50_seq40.yaml # 8 is the GPU numberSome options in the config.yaml to note:

preception_model: the model architecture of the vision encoder.preception_model_ckpt: the checkpoint path of the vision encoder.llm_model: the checkpoint path of the llm (Vicuna/LLaVA).use_notice_prompt: whether to use notice instruction data when training.split_section_num_for_visual_encoder: the number of sections the frames are divided into during the forward encoding of visual features. Higher values can save more memory, and it needs to be a factor oftoken_max_length.- datasets:

storage: the root directory for storing the dataset.towns/weathers: the data filter for training/evaluating.token_max_length: the maximum number of frames, if the number of frames exceeds this value, they will be truncated.sample_interval: the interval at which frames are sampled.

Start a CARLA server (described above) and run the required agent. The adequate routes and scenarios files are provided in leaderboard/data and the required variables need to be set in leaderboard/scripts/run_evaluation.sh.

Some options need to be updated in the leaderboard/team_code/lmdrive_config.py:

preception_model: the model architecture of the vision encoder.preception_model_ckpt: the checkpoint path of the vision encoder (obtained in the vision encoder pretraining stage).llm_model: the checkpoint path of the llm (LLaMA/Vicuna/LLaVA).lmdrive_ckpt: the checkpoint path of the lmdrive (obtained in the instruction finetuing stage).

Update leaderboard/scripts/run_evaluation.sh to include the following code for evaluating the model on Town05 Long Benchmark.

export CARLA_ROOT=/path/to/carla/root

export TEAM_AGENT=leaderboard/team_code/lmdrive_agent.py

export TEAM_CONFIG=leaderboard/team_code/lmdrive_config.py

export CHECKPOINT_ENDPOINT=results/lmdrive_result.json

export SCENARIOS=leaderboard/data/official/all_towns_traffic_scenarios_public.json

export ROUTES=leaderboard/data/LangAuto/long.xmlCUDA_VISIBLE_DEVICES=0 ./leaderboard/scripts/run_evaluation.shHere, the long.json and long.xml files are replaced with short.json and short.xml for the evaluation of the agent in the LangAuto-Short benchmark.

For LangAuto-Tiny benchmark evaluation, replace the long.json and long.xml files with tiny.json and tiny.xml:

export SCENARIOS=leaderboard/data/LangAuto/tiny.json

export ROUTES=leaderboard/data/LangAuto/tiny.xmlSet the agent_use_notice as True in the lmdriver_config.py.

If you find our repo, dataset or paper useful, please cite us as

@misc{shao2023lmdrive,

title={LMDrive: Closed-Loop End-to-End Driving with Large Language Models},

author={Hao Shao and Yuxuan Hu and Letian Wang and Steven L. Waslander and Yu Liu and Hongsheng Li},

year={2023},

eprint={2312.07488},

archivePrefix={arXiv},

primaryClass={cs.CV}

}This implementation is based on code from several repositories.

- InterFuser

- Transfuser

- 2020_CARLA_challenge

- CARLA Leaderboard

- Scenario Runner

- LAVIS

- pytorch-image-models

All code within this repository is under Apache License 2.0.