
- Highlights

- News

- Getting Started

- Results and Models

- TODO List

- License

- Citation

- 🔥 See Also: GenAD & Vista

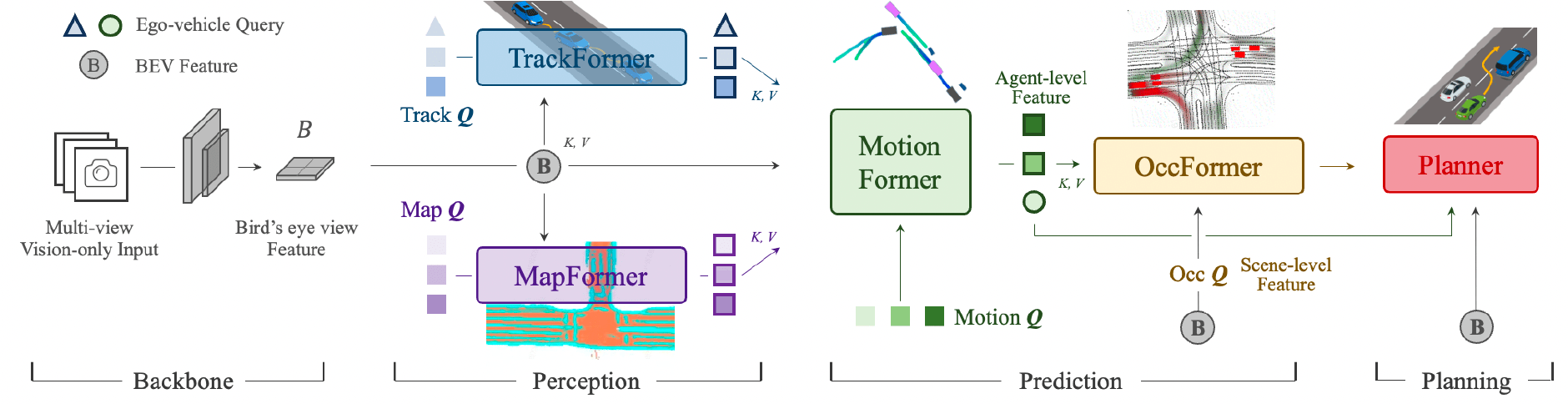

- 🚘 Planning-oriented philosophy: UniAD is a Unified Autonomous Driving algorithm framework following a planning-oriented philosophy. Instead of standalone modular design and multi-task learning, we cast a series of tasks, including perception, prediction and planning, into a hierarchy.

- 🏆 SOTA performance: All tasks within UniAD achieve SOTA performance, especially prediction and planning (motion: 0.71m minADE, occ: 63.4% IoU, planning: 0.31% avg.Col)

- Paper Title Change: To avoid confusion with "goal-point" navigation in Robotics, we changed the title from "Goal-oriented" to "Planning-oriented", as suggested by reviewers. Thank you!

- Planning Metric: Discussion [Ref: OpenDriveLab#29]: Clarification and notice regarding open-loop planning results comparison.

- 2024/08/27 New feature: An implementation for CARLA and closed-loop evaluation on CARLA Leaderboard 2.0 scenarios are available in Bench2Drive.

- 2023/08/03 Bugfix [Commit]: Previously, the visualized planning results were mirrored along the x axis relative to the ground truth. This is now fixed.

- 2023/06/12 Bugfix [Ref: OpenDriveLab#21]: Previously, the performance of the stage1 model (track_map) could not be replicated when trained from scratch, due to mistakenly adding `loss_past_traj` and freezing `img_neck` and `BN`. By removing `loss_past_traj` and unfreezing `img_neck` and `BN` in training, the reported results could be reproduced (AMOTA: 0.393, stage1_train_log).

- 2023/04/18 New feature: You can replace BEVFormer with other BEV encoding methods, e.g., LSS, as long as you provide the `bev_embed` and `bev_pos` in track_train and track_inference. Make sure your BEV features have the same shape as ours.

- 2023/04/18 Base-model checkpoints are released.

- 2023/03/29 Code & model initial release `v1.0`.

- 2023/03/21 🌟🌟 UniAD is accepted by CVPR 2023, as an Award Candidate (12 out of 2360 accepted papers)!

- 2022/12/21 UniAD paper is available on arXiv.

UniAD is trained in two stages. Pretrained checkpoints of both stages will be released and the results of each model are listed in the following tables.

We first train the perception modules (i.e., track and map) to obtain a stable weight initialization for the next stage. BEV features are aggregated with 5 frames (queue_length = 5).

| Method | Encoder | Tracking AMOTA | Mapping IoU-lane | config | Download |
|---|---|---|---|---|---|
| UniAD-B | R101 | 0.390 | 0.297 | base-stage1 | base-stage1 |

We optimize all task modules together, including track, map, motion, occupancy and planning. BEV features are aggregated with 3 frames (queue_length = 3).

| Method | Encoder | Tracking AMOTA | Mapping IoU-lane | Motion minADE | Occupancy IoU-n. | Planning avg.Col. | config | Download |
|---|---|---|---|---|---|---|---|---|
| UniAD-B | R101 | 0.363 | 0.313 | 0.705 | 63.7 | 0.29 | base-stage2 | base-stage2 |
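For readers unfamiliar with the motion column, minADE is commonly defined as the smallest average L2 displacement between the ground-truth trajectory and any of the predicted candidate trajectories. The sketch below illustrates that general definition only; it is not UniAD's evaluation code, and the function names and toy trajectories are made up for illustration. The exact protocol behind the reported numbers (which agents, how many modes, what horizon) is described in the paper and evaluation scripts, not here.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def average_displacement_error(pred: Sequence[Point], gt: Sequence[Point]) -> float:
    """Mean L2 distance between predicted and ground-truth waypoints."""
    if len(pred) != len(gt) or len(gt) == 0:
        raise ValueError("pred and gt must be non-empty and of equal length")
    return sum(math.dist(p, g) for p, g in zip(pred, gt)) / len(gt)


def min_ade(modes: Sequence[Sequence[Point]], gt: Sequence[Point]) -> float:
    """Smallest average displacement error over all predicted modes."""
    return min(average_displacement_error(mode, gt) for mode in modes)


# Toy data (made up for illustration): two candidate trajectories, three timesteps.
ground_truth = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
candidates = [
    [(0.0, 1.0), (1.0, 1.0), (2.0, 1.0)],  # offset by 1 m at every step
    [(0.0, 0.0), (1.0, 0.0), (2.0, 0.3)],  # only the last step is off
]
print(round(min_ade(candidates, ground_truth), 3))  # 0.1
```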

- Download the checkpoints you need into the `UniAD/ckpts/` directory.

- You can evaluate these checkpoints to reproduce the results, following the `evaluation` section in TRAIN_EVAL.md.

- You can also initialize your own model with the provided weights. Change the `load_from` field to `path/of/ckpt` in the config and follow the `train` section in TRAIN_EVAL.md to start training.
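As a rough illustration of the last step, the `load_from` change might look like the fragment below, assuming the config is a plain Python file and that training is launched from the repository root. The filename is a placeholder, not the name of a released checkpoint; substitute the file you actually downloaded.

```python
# Fragment of a config file, shown in isolation.
# "your_checkpoint.pth" is a placeholder (assumption), and the relative path
# assumes commands are run from the UniAD repository root.
load_from = "ckpts/your_checkpoint.pth"
```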

The overall pipeline of UniAD is controlled by uniad_e2e.py which coordinates all the task modules in UniAD/projects/mmdet3d_plugin/uniad/dense_heads. If you are interested in the implementation of a specific task module, please refer to its corresponding file, e.g., motion_head.
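To make the idea of one coordinator driving several task modules concrete, here is a deliberately simplified sketch. It is not the code in uniad_e2e.py: the stage order follows the task list given earlier (track, map, motion, occupancy, planning), while `run_pipeline`, `make_dummy_stage`, and the dict-based outputs are hypothetical stand-ins. Letting every stage see all upstream outputs is also a simplifying assumption.

```python
from typing import Callable, Dict, List, Tuple

# A stage takes the shared BEV features plus upstream outputs (hypothetical signature).
Stage = Callable[[object, Dict[str, object]], object]


def run_pipeline(bev_features: object, stages: List[Tuple[str, Stage]]) -> Dict[str, object]:
    """Run stages in order; each stage receives a copy of all earlier outputs."""
    outputs: Dict[str, object] = {}
    for name, stage in stages:
        outputs[name] = stage(bev_features, dict(outputs))
    return outputs


def make_dummy_stage(name: str) -> Stage:
    """Stand-in for a real task head: records which upstream results it was given."""
    def stage(bev_features: object, upstream: Dict[str, object]) -> object:
        return {"stage": name, "saw": sorted(upstream)}
    return stage


STAGE_ORDER = ["track", "map", "motion", "occupancy", "planning"]
stages = [(name, make_dummy_stage(name)) for name in STAGE_ORDER]
results = run_pipeline(bev_features=None, stages=stages)
print(results["planning"]["saw"])  # ['map', 'motion', 'occupancy', 'track']
```

The point of the sketch is only the data flow: planning sits at the end of the chain and consumes what perception and prediction produced, rather than each task running independently.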

All assets and code are under the Apache 2.0 license unless specified otherwise.

If you find our project useful for your research, please consider citing our paper and codebase with the following BibTeX:

@inproceedings{hu2023_uniad,

title={Planning-oriented Autonomous Driving},

author={Yihan Hu and Jiazhi Yang and Li Chen and Keyu Li and Chonghao Sima and Xizhou Zhu and Siqi Chai and Senyao Du and Tianwei Lin and Wenhai Wang and Lewei Lu and Xiaosong Jia and Qiang Liu and Jifeng Dai and Yu Qiao and Hongyang Li},

booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},

year={2023},

}@misc{contributors2023_uniadrepo,

title={Planning-oriented Autonomous Driving},

author={UniAD contributors},

howpublished={\url{https://github.com/OpenDriveLab/UniAD}},

year={2023}

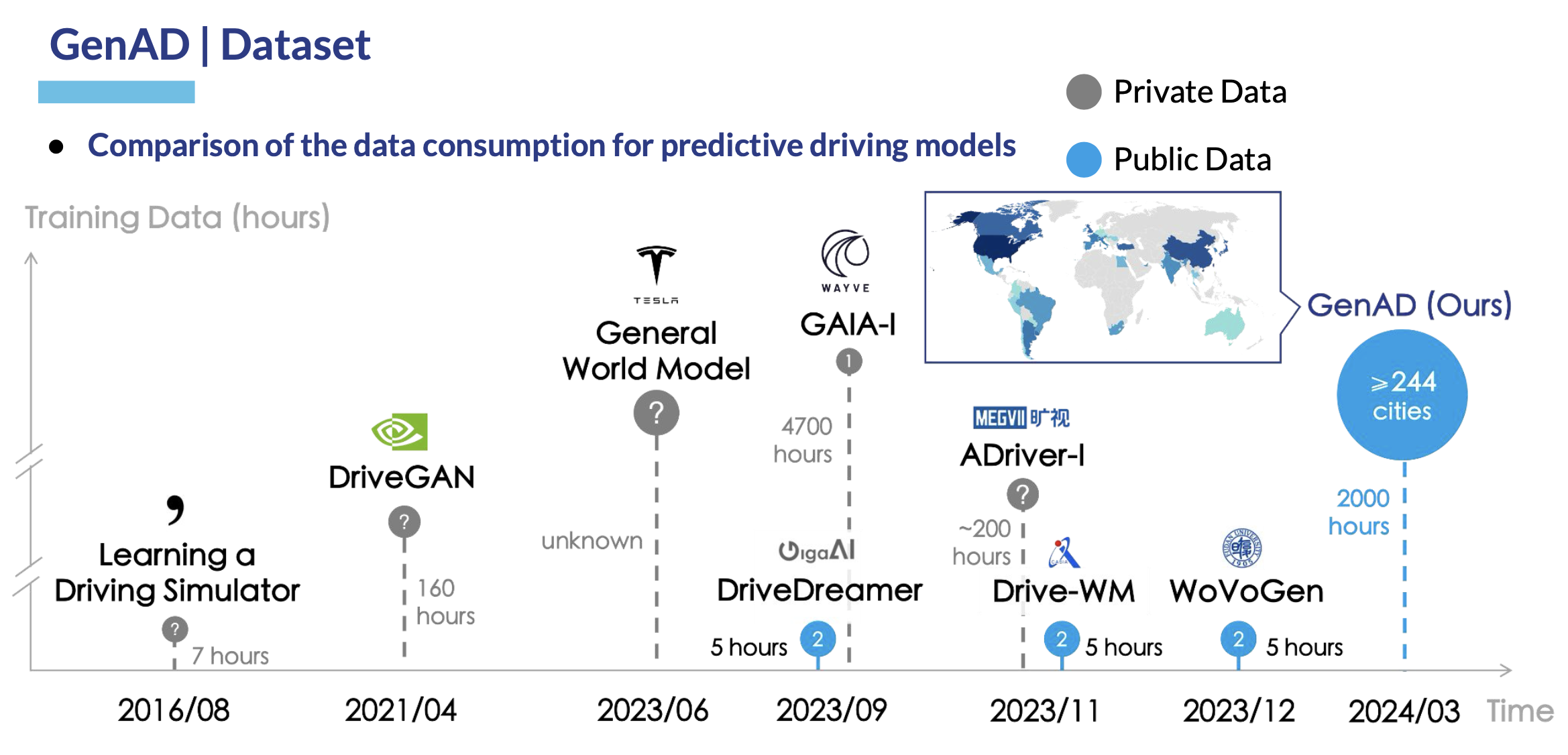

}We are thrilled to launch our recent line of works: GenAD and Vista, to advance driving world models with the largest driving video dataset collected from the web - OpenDV.

GenAD: Generalized Predictive Model for Autonomous Driving (CVPR'24, Highlight ⭐)

Vista: A Generalizable Driving World Model with High Fidelity and Versatile Controllability 🌏