
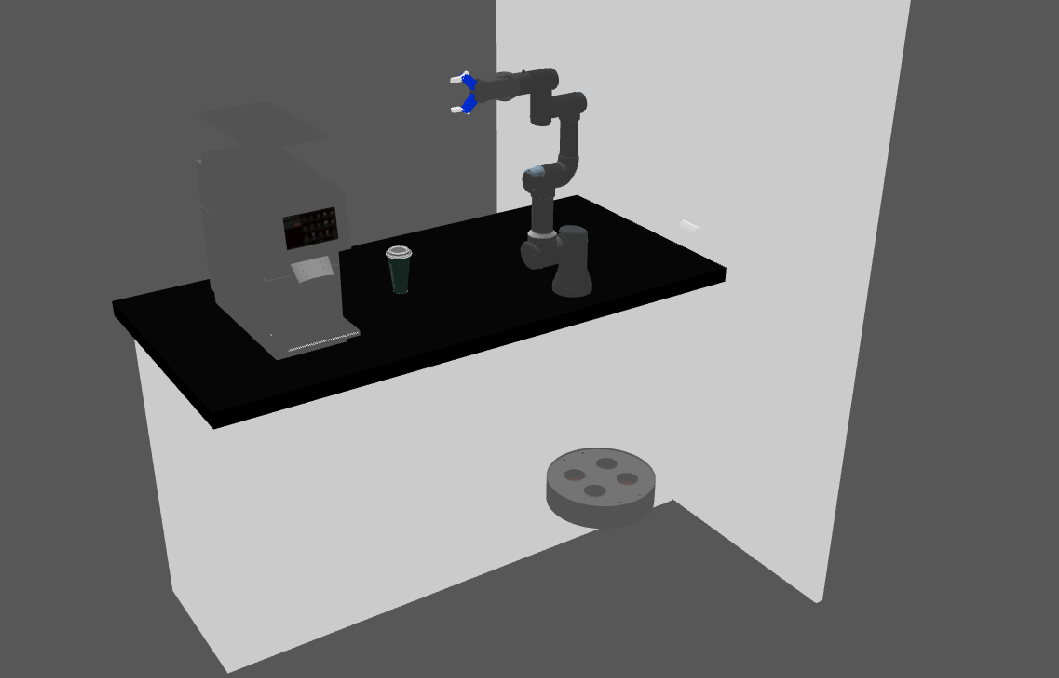

Reinforcement learning project for a robotic arm. The main goal is to train the arm to pick up a coffee cup and place it on a delivery robot platform.

- Ubuntu 22.04

- ROS2 Humble

- Docker

- Docker-compose

- Clone the repo:

cd ~ && \
git clone https://github.com/pvela2017/The-Construct-Starbots-Coffee-Shop

- Compile the image:

cd masterclass-project/docker && \
docker build -t mefistocl/masterclassproject:latest .

- Set up the docker-compose file:

environment:
  - DISPLAY=:0  # Select the display to be shared; can be replaced by $DISPLAY
  - GAZEBO_MODEL_PATH=/root/ros2_ws/src/the_construct_office_gazebo/models:/root/ros2_ws/src/the_construct_office_gazebo/barista_ros2/barista_description:/root/ros2_ws/src/ur_arm:$${GAZEBO_MODEL_PATH}  # No need to change
volumes:
  - /tmp/.X11-unix:/tmp/.X11-unix  # No need to change
  - /dev/shm:/dev/shm  # No need to change
  - /home/mefisto/masterclass/ros2_ws:/root/ros2_ws  # Change the first part to your ros2_ws path
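Once inside the container, a quick way to check that the GAZEBO_MODEL_PATH entries from the compose file were picked up is to print them one per line. This is a minimal bash sketch; the function name list_gazebo_model_paths is hypothetical and not part of the project.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print each GAZEBO_MODEL_PATH entry on its own line.
# Uses the first argument if given, otherwise the GAZEBO_MODEL_PATH variable.
list_gazebo_model_paths() {
  local path="${1:-${GAZEBO_MODEL_PATH:-}}"
  if [ -z "$path" ]; then
    echo "GAZEBO_MODEL_PATH is empty" >&2
    return 1
  fi
  # Split on ':' and drop empty entries (e.g. from '::' or a trailing ':').
  printf '%s\n' "$path" | tr ':' '\n' | grep -v '^$'
}
```

Run list_gazebo_model_paths with no argument inside the container, or pass a colon-separated string to inspect it.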

- Allow the container to use the screen:

xhost +

- Start the container:

docker-compose run masterclass_project /bin/bash

- Compile and launch the simulation:

cd /root/ros2_ws && \
colcon build && \
source /root/ros2_ws/install/setup.bash && \
ros2 launch the_construct_office_gazebo starbots_ur3e.launch.xml

- MoveIt (run each command in its own terminal):

source /root/ros2_ws/install/setup.bash && \
ros2 launch my_moveit_config move_group.launch.py

source /root/ros2_ws/install/setup.bash && \
ros2 launch my_moveit_config moveit_rviz.launch.py
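Because ros2 launch keeps running until it is stopped, the second of two launch commands chained with && does not start until the first one exits. If a single terminal is preferred, one option is to start move_group in the background and RViz in the foreground. This bash sketch assumes the simulation workspace path used above; the function name launch_moveit_sim and its optional setup-file argument are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: run move_group in the background and RViz in the
# foreground, then stop move_group once the RViz launch returns.
launch_moveit_sim() {
  local setup="${1:-/root/ros2_ws/install/setup.bash}"
  # shellcheck source=/dev/null
  source "$setup" || return 1

  # Start move_group in the background and remember its PID.
  ros2 launch my_moveit_config move_group.launch.py &
  local move_group_pid=$!

  # Blocks until the RViz launch exits.
  ros2 launch my_moveit_config moveit_rviz.launch.py
  local rviz_status=$?

  # Send SIGTERM to the background move_group launch and wait for it.
  kill "$move_group_pid" 2>/dev/null
  wait "$move_group_pid" 2>/dev/null
  return "$rviz_status"
}
```

When the foreground RViz launch exits, the function stops the background move_group launch and returns the RViz launch's exit status.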

- Barista robot detector:

source /root/ros2_ws/install/setup.bash && \
ros2 launch hole_detector hole_detector_sim.launch.py

- Pick and Place:

source /root/ros2_ws/install/setup.bash && \
ros2 launch pick_and_place pick_and_place_perception_sim.launch.py

- Start the web application:

cd /root/webpage_ws && \
http-server --port 7000  # Locally

- Launch the rosbridge node:

ros2 launch rosbridge_server rosbridge_websocket_launch.xml

- Launch the web video server node:

ros2 run web_video_server web_video_server

- Connect to the website:

https://ip/webpage/

- Install packages (real robot):

sudo apt update && \
sudo apt install -y ros-humble-async-web-server-cpp

- Compile:

cd ~/ros2_ws && \
rm -rf ./src/gazebo_ros_pkgs && \
colcon build && \
source ~/ros2_ws/install/setup.bash

- Check that the hardware is working properly (enable the D415 color and depth streams, set their profiles, enable depth alignment, and list the controllers):

ros2 param set /D415/D415 enable_color True && \
ros2 param set /D415/D415 enable_depth True && \
ros2 param set /D415/D415 rgb_camera.profile 480x270x6 && \
ros2 param set /D415/D415 depth_module.profile 480x270x6 && \
ros2 param set /D415/D415 align_depth.enable True && \
ros2 control list_controllers
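The chain of ros2 param set calls above can also be written as a loop over name/value pairs, which keeps each camera setting in one place. This is a minimal bash sketch: the node and parameter names are copied from the commands above, while the function name configure_d415 is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: apply the D415 settings one by one, stop at the first
# failing `ros2 param set`, then list the ros2_control controllers.
configure_d415() {
  local node="/D415/D415"
  local settings=(
    "enable_color True"
    "enable_depth True"
    "rgb_camera.profile 480x270x6"
    "depth_module.profile 480x270x6"
    "align_depth.enable True"
  )
  local entry name value
  for entry in "${settings[@]}"; do
    # Split "name value" into its two fields.
    read -r name value <<< "$entry"
    echo "Setting ${name}=${value}"
    ros2 param set "$node" "$name" "$value" || return 1
  done
  ros2 control list_controllers
}
```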

- MoveIt (run each command in its own terminal):

ros2 launch real_my_moveit_config move_group.launch.py

ros2 launch real_my_moveit_config moveit_rviz.launch.py

- Barista robot detector:

source ~/ros2_ws/install/setup.bash && \
ros2 launch hole_detector hole_detector_real.launch.py

- Pick and Place:

source ~/ros2_ws/install/setup.bash && \
ros2 launch pick_and_place pick_and_place_perception_real.launch.py

- Test without the website:

ros2 topic pub /webpage std_msgs/msg/Int16 data:\ 1
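By default, ros2 topic pub keeps republishing the message (at 1 Hz) until it is stopped. To send a single message, the --once option can be used. The helper below is a hypothetical bash convenience wrapper; values other than 1 are simply passed through, since their meaning is not described here.

```shell
#!/usr/bin/env bash
# Hypothetical helper: publish one std_msgs/msg/Int16 message on /webpage.
# Defaults to 1, the value used in the command above.
send_webpage_command() {
  local value="${1:-1}"
  # Reject anything that is not an integer before calling ros2.
  if ! [[ "$value" =~ ^-?[0-9]+$ ]]; then
    echo "Expected an integer, got '$value'" >&2
    return 1
  fi
  ros2 topic pub --once /webpage std_msgs/msg/Int16 "{data: ${value}}"
}
```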

- Start the web application:

cd ~/webpage_ws && \
python3 -m http.server 7000  # The Construct website

- Launch the rosbridge node:

ros2 launch rosbridge_server rosbridge_websocket_launch.xml

- Launch the web video server:

source ~/ros2_ws/install/setup.bash && \
ros2 run web_video_server web_video_server --ros-args -p port:=11315
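To check the video server from a browser, web_video_server serves a viewer page for each image topic at /stream_viewer?topic=<topic>. The bash sketch below only builds that URL. The default port 11315 matches the command above, while the function name video_stream_url and any topic you pass in are placeholders (ros2 topic list shows the actual D415 image topics).

```shell
#!/usr/bin/env bash
# Hypothetical helper: build the web_video_server viewer URL for an image topic.
video_stream_url() {
  local host="${1:-localhost}"
  local port="${2:-11315}"
  local topic="$3"
  if [ -z "$topic" ]; then
    echo "usage: video_stream_url HOST PORT IMAGE_TOPIC" >&2
    return 1
  fi
  echo "http://${host}:${port}/stream_viewer?topic=${topic}"
}
```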

- Check the rosbridge URL in The Construct (when running locally, the address is displayed in the terminal):

rosbridge_address

- Replace the rosbridge_address field in the app.js file:

rosbridge_address: 'wss://i-00cbdc40fcccd3514.robotigniteacademy.com/7e4d6577-22bd-40b2-b93e-1dab1f84d000/rosbridge/',

- Connect to the website:

https://i-072786a1118392265.robotigniteacademy.com/5aa33093-8141-45ca-9477-52ba0c8be6e5/webpage/
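The app.js edit above can also be scripted with sed. This is a minimal bash sketch that assumes rosbridge_address is written on a single line with single quotes, in the form shown above, and that the new address contains no | or & characters. The function name set_rosbridge_address is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: replace the rosbridge_address value in app.js in place.
# Assumes: rosbridge_address: '...' on one line; address has no '|' or '&'.
set_rosbridge_address() {
  local file="$1" address="$2"
  if [ ! -f "$file" ] || [ -z "$address" ]; then
    echo "usage: set_rosbridge_address APP_JS NEW_ADDRESS" >&2
    return 1
  fi
  # '|' is the sed delimiter because the address contains '/'.
  # -i.bak edits in place and keeps the original as app.js.bak.
  sed -i.bak "s|rosbridge_address: '[^']*'|rosbridge_address: '${address}'|" "$file"
}
```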

- The gazebo_ros_pkgs package cloned from the ros2 branch fixes the unordered point cloud produced in simulation.
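For context, a sensor_msgs/msg/PointCloud2 message is organized when height > 1 (one row per image row, width points per row) and unordered when height = 1. In an organized cloud, the bytes of the point that corresponds to pixel (u, v) can be located directly from the message fields, assuming rows carry no padding:

```latex
\mathrm{offset}(u, v) = v \cdot \mathrm{row\_step} + u \cdot \mathrm{point\_step},
\qquad
\mathrm{row\_step} = \mathrm{width} \cdot \mathrm{point\_step}
```

For example, with width = 480 and an illustrative point_step of 16 bytes, row_step = 7680 and pixel (u, v) = (10, 2) starts at byte 2 · 7680 + 10 · 16 = 15520. An unordered cloud offers no such pixel-to-point mapping.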

- https://roboticsbackend.com/ros2-package-for-both-python-and-cpp-nodes/

- https://ros2-tutorial.readthedocs.io/en/latest/using_python_library.html

- https://moveit.picknik.ai/humble/doc/examples/move_group_interface/move_group_interface_tutorial.html

- MoveIt - planning with orientation constraints.

- MoveIt - attaching an object to the arm for planning.

- Perception - using aligned RGB and depth images to get object coordinates.
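The perception idea in the last item can be summarized with the standard pinhole camera model; this is a generic sketch, not necessarily how the project computes it. Assuming an undistorted camera and a depth image aligned to the color image (as requested by align_depth.enable above), a pixel (u, v) with depth Z maps to a 3D point in the camera optical frame using the color camera intrinsics f_x, f_y, c_x, c_y from the camera_info matrix K:

```latex
X = \frac{(u - c_x)\,Z}{f_x}, \qquad
Y = \frac{(v - c_y)\,Z}{f_y}, \qquad
Z = d(u, v),
\qquad
K = \begin{bmatrix} f_x & 0 & c_x \\ 0 & f_y & c_y \\ 0 & 0 & 1 \end{bmatrix}
```

A 16UC1 depth image stores millimeters (REP 118), so d(u, v) must be divided by 1000 to get Z in meters. With hypothetical intrinsics f_x = f_y = 300, c_x = 240, c_y = 135, the pixel (300, 135) at Z = 0.5 m gives X = 0.1 m and Y = 0 m. The resulting point is still in the camera frame and would need to be transformed (for example with tf2) into the robot base frame before planning.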