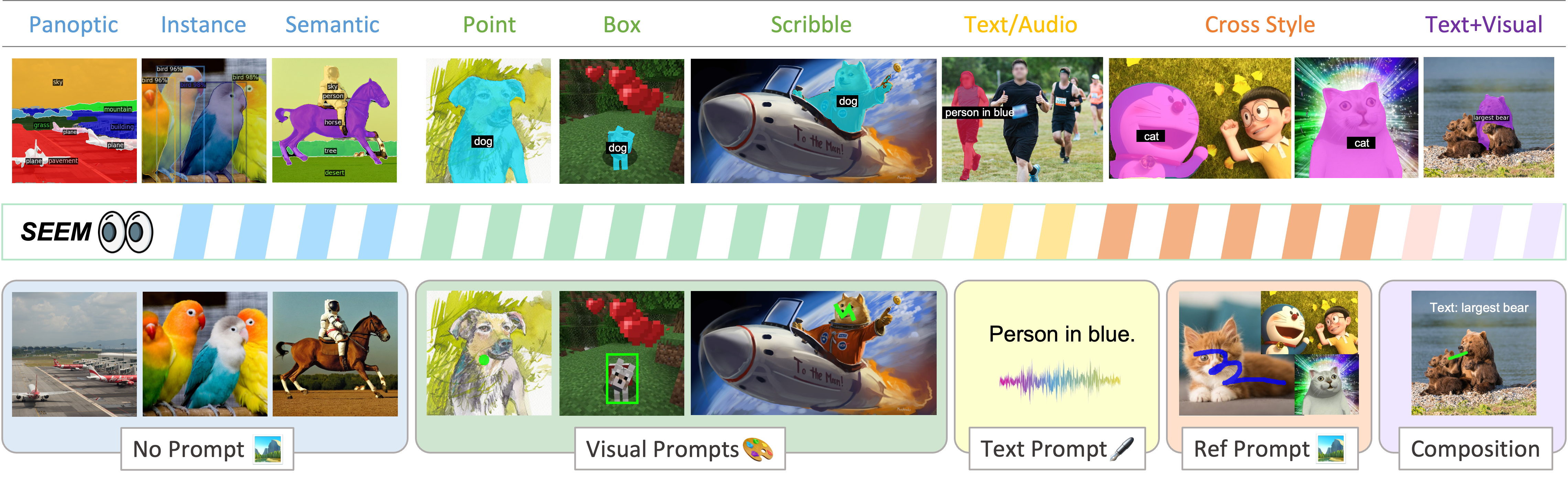

We introduce SEEM, which can Segment Everything Everywhere with Multi-modal prompts all at once. SEEM lets users segment an image with prompts of different types, including visual prompts (points, marks, boxes, scribbles, and image segments) and language prompts (text and audio). It also works with any combination of prompts and can generalize to custom prompts!

🍇 [Read our arXiv Paper] 🍎 [Try Hugging Face Demo]

One-Line Getting Started with Linux:

`git clone git@github.com:UX-Decoder/Segment-Everything-Everywhere-All-At-Once.git && cd Segment-Everything-Everywhere-All-At-Once/demo_code && sh run_demo.sh`

👉 [New] Latest Checkpoints and Numbers:

| Method | Checkpoint | Backbone | COCO PQ | COCO mAP | COCO mIoU | Ref-COCOg cIoU | Ref-COCOg mIoU | Ref-COCOg AP50 | VOC NoC85 | VOC NoC90 | SBD NoC85 | SBD NoC90 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| X-Decoder | ckpt | Focal-T | 50.8 | 39.5 | 62.4 | 57.6 | 63.2 | 71.6 | - | - | - | - |
| X-Decoder-oq201 | ckpt | Focal-L | 56.5 | 46.7 | 67.2 | 62.8 | 67.5 | 76.3 | - | - | - | - |
| SEEM | ckpt | Focal-T | 50.6 | 39.4 | 60.9 | 58.5 | 63.5 | 71.6 | 3.54 | 4.59 | * | * |
| SEEM | - | Davit-d3 | 56.2 | 46.8 | 65.3 | 63.2 | 68.3 | 76.6 | 2.99 | 3.89 | 5.93 | 9.23 |
| SEEM-oq101 | ckpt | Focal-L | 56.2 | 46.4 | 65.5 | 62.8 | 67.7 | 76.2 | 3.04 | 3.85 | * | * |
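For readers unfamiliar with the NoC columns: NoC85 and NoC90 conventionally denote the average number of clicks an interactive segmentation model needs to reach 85% or 90% IoU (lower is better). Below is a minimal sketch of how such a number could be computed from per-click IoU traces. The function names, the click cap of 20, and the sample IoU values are assumptions for illustration; this is not the evaluation code of this repository.

```python
from typing import Sequence


def clicks_to_reach(ious: Sequence[float], threshold: float, max_clicks: int = 20) -> int:
    """Return the 1-based index of the first click whose IoU meets the threshold.

    If the threshold is never reached within max_clicks, return max_clicks
    (an assumed convention for this sketch).
    """
    for click, iou in enumerate(ious[:max_clicks], start=1):
        if iou >= threshold:
            return click
    return max_clicks


def noc(traces: Sequence[Sequence[float]], threshold: float, max_clicks: int = 20) -> float:
    """Average clicks-to-threshold over all instances."""
    if not traces:
        raise ValueError("need at least one IoU trace")
    return sum(clicks_to_reach(t, threshold, max_clicks) for t in traces) / len(traces)


# Made-up IoU values after each successive click, for three instances.
example_traces = [
    [0.70, 0.86, 0.91],
    [0.88, 0.92],
    [0.50, 0.60, 0.80, 0.87, 0.89],
]
noc85 = noc(example_traces, 0.85)
noc90 = noc(example_traces, 0.90)
```

In this toy data the third instance never reaches 90% IoU, so it contributes the full click cap to `noc90`.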

🔥 Related projects:

- FocalNet : Focal Modulation Networks; We used FocalNet as the vision backbone.

- UniCL : Unified Contrastive Learning; We used this technique for image-text contrastive learning.

- X-Decoder : Generic decoder that can do multiple tasks with one model only; We built SEEM based on X-Decoder.

🔥 Other projects you may find interesting:

- OpenSeed : Strong open-set segmentation methods.

- Grounding SAM : Combining Grounding DINO and Segment Anything; Grounding DINO: A strong open-set detection model.

- X-GPT : Conversational Visual Agent supported by X-Decoder.

- LLaVA : Large Language and Vision Assistant.

- [2023.05.02] We have released the SEEM Focal-L and X-Decoder Focal-L checkpoints and configs!

- [2023.04.28] We have updated the arXiv paper, which now shows better interactive segmentation results than SAM, which was trained on 50x more data than ours!

- [2023.04.26] We have released the Demo Code and SEEM-Tiny Checkpoint! Please try the One-Line Getting Started!

- [2023.04.20] SEEM Referring Video Segmentation is out! Please try the Video Demo and take a look at the NeRF examples.

Inspired by the appealing universal interface in LLMs, we are advocating a universal, interactive multi-modal interface for any type of segmentation with ONE SINGLE MODEL. We emphasize 4 important features of SEEM below.

- Versatility: work with various types of prompts, for example, clicks, boxes, polygons, scribbles, texts, and referring images;

- Compositionality: deal with any composition of prompts;

- Interactivity: interact with the user over multiple rounds, thanks to SEEM's memory prompt, which stores the session history;

- Semantic awareness: give a semantic label to any predicted mask.

A brief introduction of all the generic and interactive segmentation tasks we can do.

- Try our default examples first;

- Upload an image;

- Select at least one type of prompt (if you want to use a referred region of another image, check "Example" and upload that image in the referring image panel);

- Remember to provide the actual prompt for each prompt type you select, otherwise you will get an error (e.g., remember to draw on the referring image);

- By default, our model supports the vocabulary of the 80 COCO categories; anything else will be classified as 'others' or misclassified. If you want to segment using open-vocabulary labels, enter the text label via the 'text' button after drawing scribbles.

- Click "Submit" and wait for a few seconds.
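The rule in the steps above (every selected prompt type needs an actual prompt) can be illustrated with a small pre-submit check. This is a hypothetical sketch, not the demo's code: the prompt type names in `PROMPT_TYPES` and the helper `missing_prompts` are assumptions made for illustration.

```python
from typing import Dict, List, Optional

# Hypothetical prompt type names for this sketch; the demo's real identifiers may differ.
PROMPT_TYPES = ("stroke", "text", "audio", "example")


def _is_empty(value: Optional[object]) -> bool:
    """A prompt counts as missing if it is None or a blank string."""
    return value is None or (isinstance(value, str) and not value.strip())


def missing_prompts(selected: List[str], provided: Dict[str, Optional[object]]) -> List[str]:
    """Return the selected prompt types that have no actual prompt attached."""
    if not selected:
        raise ValueError("select at least one prompt type")
    unknown = [p for p in selected if p not in PROMPT_TYPES]
    if unknown:
        raise ValueError(f"unknown prompt types: {unknown}")
    return [p for p in selected if _is_empty(provided.get(p))]


# "example" is selected, but nothing was drawn on the referring image.
selected = ["stroke", "example"]
provided = {"stroke": [(10, 20), (15, 25)], "example": None}
still_missing = missing_prompts(selected, provided)
```

Here `still_missing` lists the prompt types that would trigger the error mentioned above, so the user can fill them in before clicking "Submit".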

An example from Transformers. The referred image is the truck form of Optimus Prime. In these examples, our model segments Optimus Prime in the target images no matter which form it is in. Thanks to Hongyang Li for this fun example.

- Inspired by the example in SA3D, we tried SEEM on NeRF examples and it works well :)

With a simple click or stroke from the user, we can generate the masks and their corresponding category labels.

SEEM can generate the mask from the user's text input, providing multi-modal interaction with humans.

With a simple click or stroke on the referring image, the model is able to segment the objects with similar semantics on the target images.

SEEM understands spatial relationships very well. Look at the three zebras! The segmented zebras have positions similar to those of the referred zebras. For example, when the leftmost zebra is referred to in the upper row, the leftmost zebra in the bottom row is segmented.

Without any training on video data, SEEM can segment videos with whatever queries you specify!

We use Whisper to turn audio into a text prompt for segmenting the object. Try it in our demo!
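The audio-to-text step could look roughly like the sketch below, assuming the open-source `openai-whisper` package (`whisper.load_model` and `model.transcribe`). The helper `audio_to_text_prompt`, the `"base"` model size, and the lowercasing and punctuation stripping are assumptions for illustration, not necessarily what the demo does.

```python
from typing import Callable, Optional


def audio_to_text_prompt(
    audio_path: str,
    transcribe: Optional[Callable[[str], str]] = None,
    model_name: str = "base",
) -> str:
    """Turn a spoken request into a cleaned text prompt.

    `transcribe` can be injected (e.g. for testing); by default Whisper is used.
    """
    if transcribe is None:
        import whisper  # pip install openai-whisper; imported lazily

        model = whisper.load_model(model_name)

        def transcribe(path: str) -> str:
            # Whisper returns a dict; the full transcript is under "text".
            return model.transcribe(path)["text"]

    text = transcribe(audio_path)
    # Assumed normalization: trim whitespace, drop trailing punctuation, lowercase.
    return text.strip().rstrip(".!?").lower()
```

The returned string would then be passed to the model as an ordinary text prompt.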

An example of segmenting a meme.

An example of segmenting trees in cartoon style.

An example of segmenting a Minecraft image.

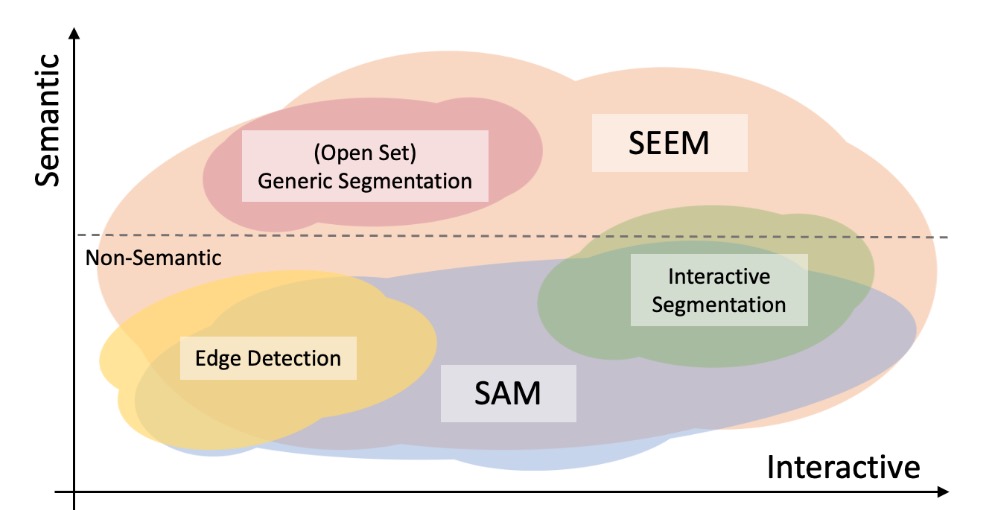

An example of using a referring image on a popular teddy bear.

In the following figure, we compare the levels of interaction and semantics of three segmentation tasks (edge detection, open-set segmentation, and interactive segmentation). Open-set segmentation usually requires a high level of semantics and does not require interaction. Compared with SAM, SEEM covers a wider range of interaction and semantics levels. For example, SAM supports only limited interaction types, such as points and boxes, and misses high-semantic tasks because it does not output semantic labels itself. There are two reasons for SEEM's wider coverage. First, SEEM has a unified prompt encoder that encodes all visual and language prompts into a joint representation space. As a consequence, SEEM supports more general usage and has the potential to extend to custom prompts. Second, SEEM works very well on text-to-mask (grounding segmentation) and outputs semantic-aware predictions.

- SEEM Demo

- Inference and Installation Code

- (Soon) Evaluation Code

- (TBD When) Training Code

- We thank Hugging Face for the GPU support for the demo!