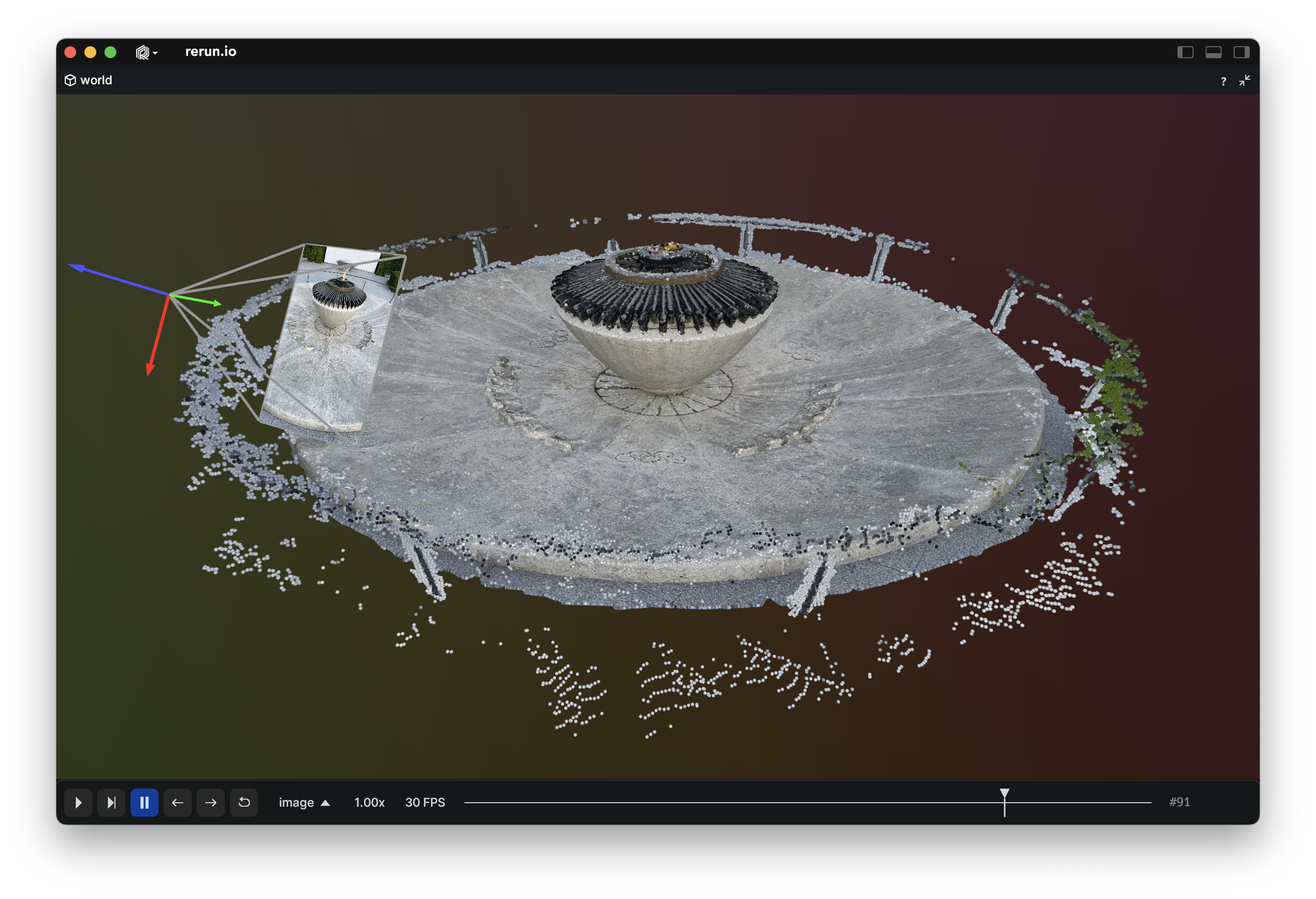

Use the Rerun SDK (available for C++, Python and Rust) to log data like images, tensors, point clouds, and text. Logs are streamed to the Rerun Viewer for live visualization or to file for later use.

```python
import rerun as rr  # pip install rerun-sdk

rr.init("rerun_example_app")

rr.connect()  # Connect to a remote viewer
# rr.spawn()  # Spawn a child process with a viewer and connect
# rr.save("recording.rrd")  # Stream all logs to disk

# Associate subsequent data with 42 on the "frame" timeline
rr.set_time_sequence("frame", 42)

# Log colored 3D points to the entity at `path/to/points`
rr.log("path/to/points", rr.Points3D(positions, colors=colors))
…
```
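The snippet above leaves `positions` and `colors` undefined. Here is a minimal self-contained sketch that fills them in with generated data. It assumes the same SDK calls as the snippet (`rr.init`, `rr.save`, `rr.set_time_sequence`, `rr.log`, `rr.Points3D`) and that `rr.Points3D` accepts plain nested lists. The helper names `make_spiral` and `log_spiral` are made up for illustration, and newer SDK releases may rename some of these calls.

```python
import math


def make_spiral(n=100):
    """Return (positions, colors) for n points on a 3D spiral (illustrative data)."""
    positions, colors = [], []
    for i in range(n):
        t = i / max(n - 1, 1)      # 0.0 .. 1.0 along the spiral
        angle = 4.0 * math.pi * t  # two full turns
        positions.append([math.cos(angle), math.sin(angle), t])
        colors.append([int(255 * t), 64, int(255 * (1.0 - t))])  # RGB, 0..255
    return positions, colors


def log_spiral(rrd_path="recording.rrd"):
    """Log the spiral to an .rrd file. Requires `pip install rerun-sdk`."""
    import rerun as rr  # imported lazily so make_spiral works without the SDK

    positions, colors = make_spiral()
    rr.init("rerun_example_app")
    rr.save(rrd_path)                  # stream all logs to disk
    rr.set_time_sequence("frame", 42)  # same timeline call as in the snippet
    rr.log("path/to/points", rr.Points3D(positions, colors=colors))
```

Calling `log_spiral()` and then opening the resulting file with `rerun recording.rrd` should show the points in the Viewer.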

To stream log data over the network or load our `.rrd` data files you also need the `rerun` binary.

It can be installed with `pip install rerun-sdk` or with `cargo install rerun-cli --locked`.

Note that only the Python SDK comes bundled with the Viewer whereas C++ & Rust always rely on a separate install.

You should now be able to run `rerun --help` in any terminal.

- 📚 High-level docs

- ⏃ Loggable Types

- ⚙️ Examples

- 🌊 C++ API docs

- 🐍 Python API docs

- 🦀 Rust API docs

- ⁉️ Troubleshooting

We are in active development. There are many features we want to add, and the API is still evolving. Expect breaking changes!

Some shortcomings:

- Multi-million point clouds are slow

- The viewer slows down when there are too many entities

- The data you want to visualize must fit in RAM

  - See https://www.rerun.io/docs/howto/limit-ram for how to bound memory use.

  - We plan on having a disk-based data store some time in the future.
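For a rough feel of the RAM limit above, here is a back-of-the-envelope sketch. It assumes each point stores a position as three 32-bit floats and a color as four bytes of RGBA, and it ignores all per-entity, timeline, and Viewer overhead. The function name and the per-point byte counts are illustrative assumptions, not measured Rerun numbers.

```python
BYTES_PER_POSITION = 3 * 4  # assumed: x, y, z as 32-bit floats
BYTES_PER_COLOR = 4         # assumed: one RGBA value packed into 4 bytes


def estimate_point_cloud_bytes(points_per_frame, num_frames, with_colors=True):
    """Lower-bound estimate of the raw payload size for logged point clouds."""
    per_point = BYTES_PER_POSITION + (BYTES_PER_COLOR if with_colors else 0)
    return points_per_frame * num_frames * per_point


if __name__ == "__main__":
    total = estimate_point_cloud_bytes(1_000_000, 600)
    print(f"{total / 1e9:.1f} GB")  # 1M points x 600 frames x 16 B = 9.6 GB
```

If the estimate is already close to your machine's memory, the limit-ram guide linked above is the place to start.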

Rerun is built to help you understand complex processes that include rich multimodal data, like 2D, 3D, text, time series, tensors, etc. It is used in many industries, including robotics, simulation, and computer vision, and in anything else that involves a lot of sensors or other signals that evolve over time.

Say you're building a vacuum cleaning robot and it keeps running into walls. Why is it doing that? You need some tool to debug it, but a normal debugger isn't gonna be helpful. Similarly, just logging text won't be very helpful either. The robot may log "Going through doorway" but that won't explain why it thinks the wall is a door.

What you need is a visual and temporal debugger that can log all the different representations of the world the robot holds in its little head, such as:

- RGB camera feed

- depth images

- lidar scan

- segmentation image (how the robot interprets what it sees)

- its 3D map of the apartment

- all the objects the robot has detected (or thinks it has detected), as 3D shapes in the 3D map

- its confidence in its prediction

- etc

You also want to see how all these streams of data evolve over time so you can go back and pinpoint exactly what went wrong, when and why.
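As a rough illustration of logging several streams against a shared timeline, the sketch below simulates a lidar sweep and a text status for each frame. The simulated room, the helper names (`simulate_lidar_scan`, `log_robot_run`), and the entity paths are all hypothetical. The Rerun calls are the ones from the snippet at the top plus `rr.TextLog`, and they may differ between SDK versions.

```python
import math


def simulate_lidar_scan(frame, n_rays=90, room_half_size=2.0):
    """Hit points of a lidar at the center of a square room (made-up geometry).

    The sweep is rotated a little each frame so the stream changes over time.
    """
    points = []
    for i in range(n_rays):
        angle = 2.0 * math.pi * i / n_rays + 0.05 * frame
        dx, dy = math.cos(angle), math.sin(angle)
        # Distance along the ray to the nearest wall of the axis-aligned square.
        dist = room_half_size / max(abs(dx), abs(dy))
        points.append([dist * dx, dist * dy, 0.0])
    return points


def log_robot_run(num_frames=50, rrd_path="robot.rrd"):
    """Log the simulated streams to an .rrd file. Requires `pip install rerun-sdk`."""
    import rerun as rr  # imported lazily so the simulation runs without the SDK

    rr.init("rerun_example_robot")
    rr.save(rrd_path)
    for frame in range(num_frames):
        # Everything logged until the next call is stamped with this frame.
        rr.set_time_sequence("frame", frame)
        rr.log("robot/lidar", rr.Points3D(simulate_lidar_scan(frame)))
        rr.log("robot/status", rr.TextLog(f"frame {frame}: scanning"))
```

Because both entities share the "frame" timeline, scrubbing through time in the Viewer should show the scan and the status message that belong to the same moment.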

Maybe it turns out that a glare from the sun hit one of the sensors in the wrong way, confusing the segmentation network and leading to bad object detection. Or maybe it was a bug in the lidar scanning code. Or maybe the robot thought it was somewhere else in the apartment, because its odometry was broken. Or it could be one of a thousand other things. Rerun will help you find out!

But seeing the world from the robot's point of view is not just for debugging - it will also give you ideas on how to improve the algorithms, new test cases to set up, or datasets to collect. It will also let you explain the brains of the robot to your colleagues, boss, and customers. And so on. Seeing is believing, and an image is worth a thousand words, and multimodal temporal logging is worth a thousand images :)

Of course, Rerun is useful for much more than just robots. Any time you have any form of sensors, or 2D or 3D state evolving over time, Rerun would be a great tool.

Rerun uses an open-core model. Everything in this repository will stay open source and free (both as in beer and as in freedom). In the future, Rerun will offer a commercial product that builds on top of the core free project.

The Rerun open source project targets the needs of individual developers. The commercial product targets the needs specific to teams that build and run computer vision and robotics products.

When using Rerun in your research, please cite it to acknowledge its contribution to your work. This can be done by including a reference to Rerun in the software or methods section of your paper.

Suggested citation format:

```bibtex
@software{RerunSDK,
  title = {Rerun: A Visualization SDK for Multimodal Data},
  author = {{Rerun Development Team}},
  url = {https://www.rerun.io},
  version = {insert version number},
  date = {insert date of usage},
  year = {2024},
  publisher = {{Rerun Technologies AB}},
  address = {Online},
  note = {Available from https://www.rerun.io/ and https://github.com/rerun-io/rerun}
}
```

Please replace "insert version number" with the version of Rerun you used and "insert date of usage" with the date(s) you used the tool in your research. This citation format helps ensure that Rerun's development team receives appropriate credit for their work and facilitates the tool's discovery by other researchers.

- `ARCHITECTURE.md`

- `CODE_OF_CONDUCT.md`

- `CODE_STYLE.md`

- `CONTRIBUTING.md`

- `BUILD.md`

- `rerun_py/README.md` - instructions for Python SDK

- `rerun_cpp/README.md` - instructions for C++ SDK

- Download the correct `.whl` from GitHub Releases

- Run `pip install rerun_sdk<…>.whl` (replace `<…>` with the actual filename)

- Test it: `rerun --version`