¹RWTH Aachen University ²ETH AI Center ³ETH Zurich ⁴NVIDIA

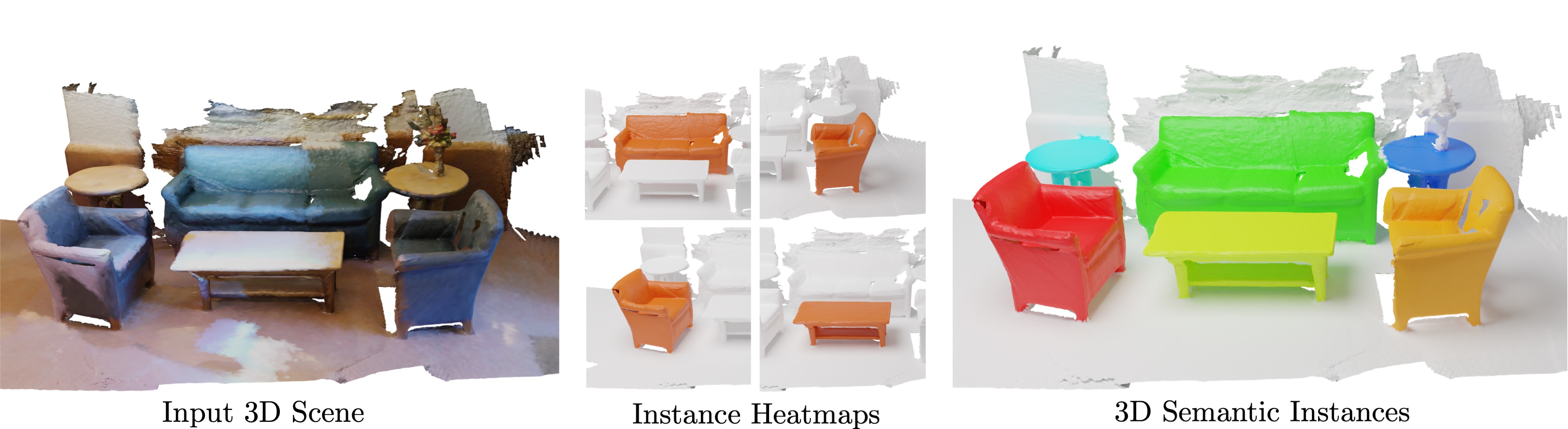

Mask3D predicts accurate 3D semantic instances, achieving state-of-the-art results on ScanNet, ScanNet200, S3DIS and STPLS3D.

[Project Webpage] [Paper] [Demo]

- 17. January 2023: Mask3D is accepted at ICRA 2023. 🔥

- 14. October 2022: STPLS3D support added.

- 10. October 2022: Mask3D ranks 2nd on the STPLS3D Challenge hosted by the Urban3D Workshop at ECCV 2022.

- 6. October 2022: Mask3D preprint released on arXiv.

- 25. September 2022: Code released.

We adapt the codebase of Mix3D, which provides a highly modularized framework for 3D semantic segmentation based on the MinkowskiEngine.

├── mix3d

│ ├── main_instance_segmentation.py <- the main file

│ ├── conf <- hydra configuration files

│ ├── datasets

│ │ ├── preprocessing <- folder with preprocessing scripts

│ │ ├── semseg.py <- indoor dataset

│ │ └── utils.py

│ ├── models <- Mask3D modules

│ ├── trainer

│ │ ├── __init__.py

│ │ └── trainer.py <- train loop

│ └── utils

├── data

│ ├── processed <- folder for preprocessed datasets

│ └── raw <- folder for raw datasets

├── scripts <- train scripts

├── docs

├── README.md

└── saved <- folder that stores models and logs

The main dependencies of the project are the following:

python: 3.10.6

cuda: 11.6

You can set up a conda environment as follows:

conda create --name=mask3d python=3.10.6

conda activate mask3d

conda update -n base -c defaults conda

conda install openblas-devel -c anaconda

pip install torch torchvision --extra-index-url https://download.pytorch.org/whl/cu116

pip install torch-scatter -f https://data.pyg.org/whl/torch-1.12.1+cu116.html

pip install ninja==1.10.2.3

pip install pytorch-lightning fire imageio tqdm wandb python-dotenv pyviz3d scipy plyfile scikit-learn trimesh loguru albumentations volumentations

pip install antlr4-python3-runtime==4.8

pip install black==21.4b2

pip install omegaconf==2.0.6 hydra-core==1.0.5 --no-deps

pip install 'git+https://github.com/facebookresearch/detectron2.git@710e7795d0eeadf9def0e7ef957eea13532e34cf' --no-deps

cd third_party/pointnet2 && python setup.py install
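After running the commands above, it can help to confirm that the packages are visible to the interpreter of the `mask3d` environment. The following is a minimal sketch, not part of the Mask3D codebase: the helper `missing_modules` and the list `EXPECTED_MODULES` are hypothetical names, and the list assumes the usual import names of the packages installed above.

```python
from importlib.util import find_spec

# Assumed top-level import names for some of the packages installed above.
EXPECTED_MODULES = [
    "torch",
    "torch_scatter",
    "pytorch_lightning",
    "hydra",
    "omegaconf",
    "detectron2",
]


def missing_modules(names):
    """Return the top-level module names that cannot be located."""
    return [name for name in names if find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules(EXPECTED_MODULES)
    if missing:
        print("Missing modules:", ", ".join(missing))
    else:
        print("All expected modules were found.")
```

The check only locates the modules without importing them, so it does not verify that the CUDA builds match the installed driver.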

After installing the dependencies, we preprocess the datasets.

First, we apply Felzenszwalb and Huttenlocher's graph-based image segmentation algorithm to the test scenes using the default parameters.

Please refer to the original repository for details.

Put the resulting segmentations in ./data/raw/scannet_test_segments.

python datasets/preprocessing/scannet_preprocessing.py preprocess \

--data_dir="PATH_TO_RAW_SCANNET_DATASET" \

--save_dir="../../data/processed/scannet" \

--git_repo="PATH_TO_SCANNET_GIT_REPO" \

--scannet200=false/true

The S3DIS dataset contains some small bugs, which we initially fixed manually. We will soon release a preprocessing script that directly preprocesses the original dataset. For the time being, please follow the instructions here to fix the dataset manually. Afterwards, call the preprocessing script as follows:

python datasets/preprocessing/s3dis_preprocessing.py preprocess \

--data_dir="PATH_TO_Stanford3dDataset_v1.2" \

--save_dir="../../data/processed/s3dis"

python datasets/preprocessing/stpls3d_preprocessing.py preprocess \

--data_dir="PATH_TO_STPLS3D" \

--save_dir="../../data/processed/stpls3d"
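The three preprocessing calls above share one pattern: a per-dataset script, the `preprocess` subcommand, a `--data_dir` and a `--save_dir`. The sketch below assembles such a command as an argument list that could be passed to `subprocess.run`. The helper `preprocessing_command` is a hypothetical name for illustration, and the paths are placeholders.

```python
def preprocessing_command(dataset, data_dir, extra_flags=None):
    """Build the argument list for one preprocessing call.

    dataset: "scannet", "s3dis" or "stpls3d" (script names as used above).
    extra_flags: optional mapping of additional flags, for example
                 {"git_repo": "...", "scannet200": "true"} for ScanNet.
    """
    command = [
        "python",
        f"datasets/preprocessing/{dataset}_preprocessing.py",
        "preprocess",
        f"--data_dir={data_dir}",
        f"--save_dir=../../data/processed/{dataset}",
    ]
    for flag, value in (extra_flags or {}).items():
        command.append(f"--{flag}={value}")
    return command


if __name__ == "__main__":
    # Placeholder paths; replace them with the real dataset locations.
    cmd = preprocessing_command(
        "scannet",
        "PATH_TO_RAW_SCANNET_DATASET",
        {"git_repo": "PATH_TO_SCANNET_GIT_REPO", "scannet200": "false"},
    )
    print(" ".join(cmd))
```

Note that `--scannet200` takes a single value, either `true` or `false`, rather than the literal string `false/true`.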

Train Mask3D on the ScanNet dataset:

python main_instance_segmentation.py

Please refer to the config scripts (for example here) for detailed instructions on how to reproduce our results. In the simplest case, the inference command looks as follows:

python main_instance_segmentation.py \

general.checkpoint='PATH_TO_CHECKPOINT.ckpt' \

general.train_mode=false

We provide detailed scores and network configurations with trained checkpoints.
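The dotted arguments in the inference command above (`general.checkpoint`, `general.train_mode`) are Hydra-style overrides: each `key=value` pair replaces one entry in the nested configuration. The sketch below illustrates that idea with plain dictionaries. It is not Hydra's implementation, and `apply_overrides`, `parse_value` and the default values are hypothetical.

```python
import copy


def parse_value(text):
    """Interpret 'true'/'false' as booleans; strip quotes from other values."""
    lowered = text.lower()
    if lowered in ("true", "false"):
        return lowered == "true"
    return text.strip("'\"")


def apply_overrides(config, overrides):
    """Return a copy of `config` with dotted `key=value` overrides applied."""
    result = copy.deepcopy(config)
    for item in overrides:
        dotted_key, _, raw_value = item.partition("=")
        *parents, leaf = dotted_key.split(".")
        node = result
        for part in parents:
            # Walk down the nested dictionaries, creating levels if needed.
            node = node.setdefault(part, {})
        node[leaf] = parse_value(raw_value)
    return result


if __name__ == "__main__":
    # Hypothetical defaults, used only to show the effect of the overrides.
    defaults = {"general": {"train_mode": True, "checkpoint": None}}
    config = apply_overrides(
        defaults,
        ["general.checkpoint='PATH_TO_CHECKPOINT.ckpt'", "general.train_mode=false"],
    )
    print(config)
```

Any other entry of the configuration files in `conf` can be overridden on the command line in the same way.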

S3DIS (pretrained on ScanNet train+val)

Following PointGroup, HAIS and SoftGroup, we finetune a model pretrained on ScanNet (config and checkpoint).

| Dataset | AP | AP_50 | AP_25 | Config | Checkpoint 💾 | Scores 📈 | Visualizations 🔭 |

|---|---|---|---|---|---|---|---|

| Area 1 | 69.3 | 81.9 | 87.7 | config | checkpoint | scores | visualizations |

| Area 2 | 44.0 | 59.5 | 66.5 | config | checkpoint | scores | visualizations |

| Area 3 | 73.4 | 83.2 | 88.2 | config | checkpoint | scores | visualizations |

| Area 4 | 58.0 | 69.5 | 74.9 | config | checkpoint | scores | visualizations |

| Area 5 | 57.8 | 71.9 | 77.2 | config | checkpoint | scores | visualizations |

| Area 6 | 68.4 | 79.9 | 85.2 | config | checkpoint | scores | visualizations |

S3DIS (from scratch)

| Dataset | AP | AP_50 | AP_25 | Config | Checkpoint 💾 | Scores 📈 | Visualizations 🔭 |

|---|---|---|---|---|---|---|---|

| Area 1 | 74.1 | 85.1 | 89.6 | config | checkpoint | scores | visualizations |

| Area 2 | 44.9 | 57.1 | 67.9 | config | checkpoint | scores | visualizations |

| Area 3 | 74.4 | 84.4 | 88.1 | config | checkpoint | scores | visualizations |

| Area 4 | 63.8 | 74.7 | 81.1 | config | checkpoint | scores | visualizations |

| Area 5 | 56.6 | 68.4 | 75.2 | config | checkpoint | scores | visualizations |

| Area 6 | 73.3 | 83.4 | 87.8 | config | checkpoint | scores | visualizations |

| Dataset | AP | AP_50 | AP_25 | Config | Checkpoint 💾 | Scores 📈 | Visualizations 🔭 |

|---|---|---|---|---|---|---|---|

| ScanNet val | 55.2 | 73.7 | 83.5 | config | checkpoint | scores | visualizations |

| ScanNet test | 56.6 | 78.0 | 87.0 | config | checkpoint | scores | visualizations |

| Dataset | AP | AP_50 | AP_25 | Config | Checkpoint 💾 | Scores 📈 | Visualizations 🔭 |

|---|---|---|---|---|---|---|---|

| ScanNet200 val | 27.4 | 37.0 | 42.3 | config | checkpoint | scores | visualizations |

| ScanNet200 test | 27.8 | 38.8 | 44.5 | config | checkpoint | scores | visualizations |

| Dataset | AP | AP_50 | AP_25 | Config | Checkpoint 💾 | Scores 📈 | Visualizations 🔭 |

|---|---|---|---|---|---|---|---|

| STPLS3D val | 57.3 | 74.3 | 81.6 | config | checkpoint | scores | visualizations |

| STPLS3D test | 63.4 | 79.2 | 85.6 | config | checkpoint | scores | visualizations |
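As a reading aid for the tables above, and assuming the ScanNet benchmark convention: AP_50 and AP_25 count a predicted instance as a match when its mask overlaps a ground-truth instance with an intersection over union (IoU) of at least 0.5 and 0.25, respectively, while AP averages over IoU thresholds from 0.5 to 0.95 in steps of 0.05. The sketch below shows only the IoU test on point index sets. It is not the evaluation script used for these scores, and `mask_iou` and `is_match` are hypothetical helpers.

```python
def mask_iou(pred_points, gt_points):
    """IoU between two instance masks given as collections of point indices."""
    pred, gt = set(pred_points), set(gt_points)
    union = pred | gt
    if not union:
        return 0.0
    return len(pred & gt) / len(union)


def is_match(pred_points, gt_points, threshold):
    """True if the predicted mask overlaps the ground truth enough."""
    return mask_iou(pred_points, gt_points) >= threshold


if __name__ == "__main__":
    predicted = range(0, 8)      # points 0..7
    ground_truth = range(4, 12)  # points 4..11
    # 4 shared points out of 12 in the union gives an IoU of 1/3.
    print(is_match(predicted, ground_truth, 0.25))  # match at the AP_25 threshold
    print(is_match(predicted, ground_truth, 0.50))  # no match at the AP_50 threshold
```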

@inproceedings{Schult23ICRA,

title = {{Mask3D for 3D Semantic Instance Segmentation}},

author = {Schult, Jonas and Engelmann, Francis and Hermans, Alexander and Litany, Or and Tang, Siyu and Leibe, Bastian},

booktitle = {{International Conference on Robotics and Automation (ICRA)}},

year = {2023}

}