This codebase implements the system described in the paper:

Unsupervised Scale-consistent Depth and Ego-motion Learning from Monocular Video

Jia-Wang Bian, Zhichao Li, Naiyan Wang, Huangying Zhan, Chunhua Shen, Ming-Ming Cheng, Ian Reid

NeurIPS 2019 [PDF] [Project webpage]

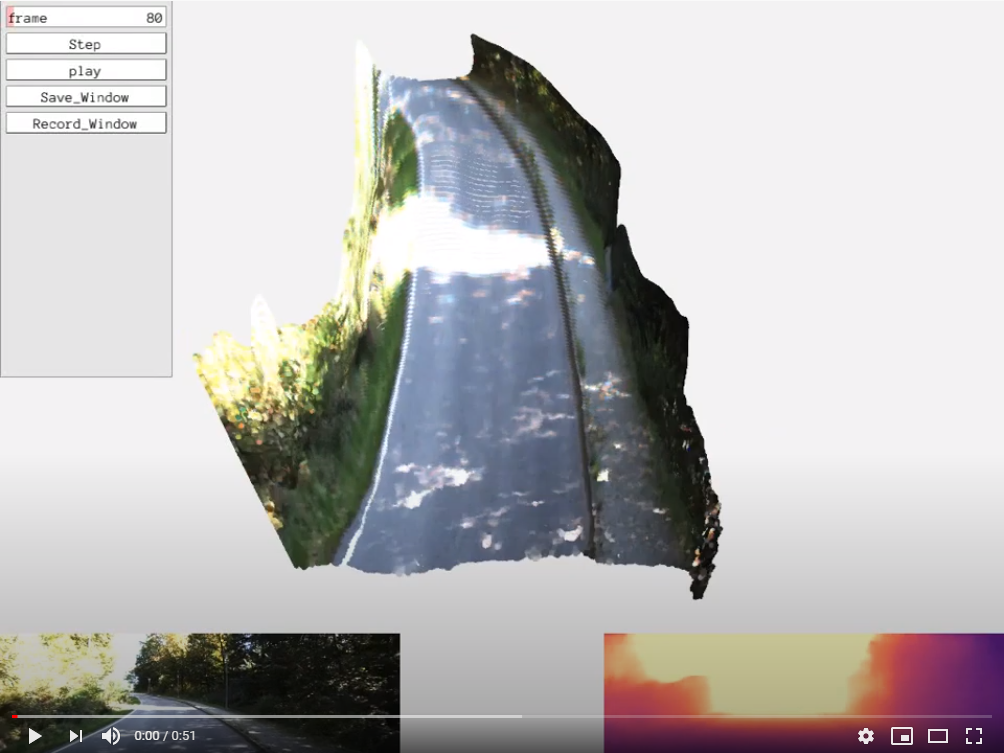

- A geometry consistency loss, which makes the predicted depths globally scale-consistent.

- A self-discovered mask, which detects moving objects and occlusions effectively and efficiently.

- The scale-consistent predictions enable monocular visual odometry on long videos.
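The first two points can be illustrated with a minimal NumPy sketch. It follows the normalized depth difference defined in the paper, |D_proj - D_interp| / (D_proj + D_interp), and uses one minus that value as the mask weight. The function names, the `eps` term, and the toy arrays are assumptions made for illustration; this is not the repository's training code, which operates on PyTorch tensors.

```python
import numpy as np

def depth_inconsistency(projected_depth, interpolated_depth, eps=1e-7):
    """Normalized depth difference, in [0, 1) for positive depths.

    projected_depth: depth of frame a warped into frame b with the predicted pose.
    interpolated_depth: depth predicted for frame b, sampled at the same pixels.
    eps is an assumed guard against division by zero.
    """
    diff = np.abs(projected_depth - interpolated_depth)
    return diff / (projected_depth + interpolated_depth + eps)

def geometry_consistency_loss(projected_depth, interpolated_depth, valid):
    """Mean inconsistency over the pixels flagged as valid."""
    d_diff = depth_inconsistency(projected_depth, interpolated_depth)
    return float(d_diff[valid].mean())

def self_discovered_mask(projected_depth, interpolated_depth):
    """Per-pixel weight: close to 1 where depths agree, lower where they
    disagree (for example on moving objects or occlusions)."""
    return 1.0 - depth_inconsistency(projected_depth, interpolated_depth)

# Toy 2x2 example: only the bottom-left pixel is inconsistent (5 vs 15).
projected = np.array([[10.0, 20.0], [5.0, 8.0]])
interpolated = np.array([[10.0, 20.0], [15.0, 8.0]])
valid = np.ones_like(projected, dtype=bool)

loss = geometry_consistency_loss(projected, interpolated, valid)  # about 0.125
mask = self_discovered_mask(projected, interpolated)              # about 0.5 at [1, 0]
```

Because the difference is normalized by the sum of the two depths, multiplying both maps by the same factor leaves the loss essentially unchanged, so the loss penalizes disagreement between frames rather than absolute scale.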

@inproceedings{bian2019depth,

title={Unsupervised Scale-consistent Depth and Ego-motion Learning from Monocular Video},

author={Bian, Jia-Wang and Li, Zhichao and Wang, Naiyan and Zhan, Huangying and Shen, Chunhua and Cheng, Ming-Ming and Reid, Ian},

booktitle= {Thirty-third Conference on Neural Information Processing Systems (NeurIPS)},

year={2019}

}

Note that this is an updated version; the original version, for reproducing the results reported in the paper, is available under 'Release / NeurIPS Version'. Compared with the NeurIPS version, we (1) use ImageNet-pretrained ResNet18 and ResNet50 models as the depth and pose encoders, and (2) add the 'auto_mask' from Monodepth2 to remove stationary points.
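As a rough illustration of the auto-masking idea from Monodepth2, the sketch below keeps a pixel only when the warped source frame explains the target better than the unwarped source frame does. The photometric error is simplified to an absolute difference on grayscale arrays, and the function name and inputs are hypothetical; the actual 'auto_mask' option in this codebase may differ in detail.

```python
import numpy as np

def auto_mask(target, warped_source, raw_source):
    """Boolean mask that is True where warping reduces the photometric error.

    target: (H, W) target frame.
    warped_source: (H, W) source frame warped into the target view.
    raw_source: (H, W) the same source frame without warping.
    """
    # Simplified photometric error: per-pixel absolute difference.
    warped_err = np.abs(target - warped_source)
    identity_err = np.abs(target - raw_source)
    # A pixel that already matches the unwarped source looks stationary
    # relative to the camera, so it is dropped from the loss.
    return warped_err < identity_err

target = np.array([[1.0, 2.0]])
raw_source = np.array([[1.0, 5.0]])     # first pixel is identical to the target
warped_source = np.array([[1.5, 2.0]])  # warping fixes the second pixel only

keep = auto_mask(target, warped_source, raw_source)  # [[False, True]]
```

The first pixel is masked out because the unwarped frame already matches it perfectly, which is the signature of a point that does not move in the image between frames.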

We also support training and testing on the NYUv2 indoor depth dataset. See Unsupervised-Indoor-Depth for details.

This codebase was developed and tested with Python 3.6, PyTorch 1.0.1, and CUDA 10.0 on Ubuntu 16.04. It is based on Clement Pinard's SfMLearner implementation.

pip3 install -r requirements.txt

or manually install the following packages:

torch >= 1.5.1

imageio

matplotlib

scipy

argparse

tensorboardX

blessings

progressbar2

path

It is also advised to have Python 3 bindings for OpenCV for TensorBoard visualizations.
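After installation, a small check like the following can confirm that the packages are visible to the interpreter. This is only a convenience sketch: the import names are assumptions derived from the package list (for instance, progressbar2 is imported as `progressbar` and the OpenCV bindings as `cv2`), and it does not verify version constraints such as the minimum torch version.

```python
from importlib.util import find_spec

# Import names assumed for the packages listed above.
REQUIRED = ["torch", "imageio", "matplotlib", "scipy", "argparse",
            "tensorboardX", "blessings", "progressbar", "path"]
OPTIONAL = ["cv2"]  # OpenCV bindings, advised for TensorBoard visualizations

def missing_modules(names):
    """Return the names that cannot be located on the current Python path."""
    return [name for name in names if find_spec(name) is None]

if __name__ == "__main__":
    print("missing required:", missing_modules(REQUIRED))
    print("missing optional:", missing_modules(OPTIONAL))
```

An empty list for the required modules means every package was found; nothing is imported or executed during the check.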

See "scripts/run_prepare_data.sh".

For the KITTI Raw dataset, download the data using the script (http://www.cvlibs.net/download.php?file=raw_data_downloader.zip) provided on the official website.

For the KITTI Odometry dataset, download the version with color images.

Alternatively, you can download our pre-processed datasets from the following links:

kitti_256 (for kitti raw) | kitti_vo_256 (for kitti odom) | kitti_depth_test (eigen split) | kitti_vo_test (seqs 09-10)

The "scripts" folder provides several examples for training and testing.

You can train the depth model on KITTI Raw by running

sh scripts/train_resnet18_depth_256.sh

or train the pose model on KITTI Odometry by running

sh scripts/train_resnet50_pose_256.sh

Then you can start a TensorBoard session in this folder with

tensorboard --logdir=checkpoints/

and visualize the training progress by opening http://localhost:6006 in your browser.

You can evaluate depth on Eigen's split by running

sh scripts/test_kitti_depth.sh

evaluate visual odometry by running

sh scripts/test_kitti_vo.sh

and visualize depth by running

sh scripts/run_inference.sh

To evaluate the NeurIPS models, please download the code from 'Release / NeurIPS Version'.

| Models | Abs Rel | Sq Rel | RMSE | RMSE(log) | Acc.1 | Acc.2 | Acc.3 |
|---|---|---|---|---|---|---|---|
| resnet18 | 0.119 | 0.858 | 4.949 | 0.197 | 0.863 | 0.957 | 0.981 |
| resnet50 | 0.115 | 0.814 | 4.705 | 0.191 | 0.873 | 0.960 | 0.982 |
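For readers unfamiliar with the column names, the sketch below computes the usual monocular depth metrics, assuming the table follows the standard Eigen-split definitions (Acc.1 to Acc.3 being the fraction of pixels whose ratio max(gt/pred, pred/gt) is below 1.25, 1.25² and 1.25³). The function name and toy values are illustrative; the repository's own evaluation script is the reference and may apply extra steps such as depth capping, cropping, or median scaling.

```python
import numpy as np

def depth_metrics(gt, pred):
    """Error and accuracy metrics over valid (positive) depth values."""
    gt = np.asarray(gt, dtype=np.float64)
    pred = np.asarray(pred, dtype=np.float64)
    ratio = np.maximum(gt / pred, pred / gt)
    return {
        "abs_rel": float(np.mean(np.abs(gt - pred) / gt)),
        "sq_rel": float(np.mean((gt - pred) ** 2 / gt)),
        "rmse": float(np.sqrt(np.mean((gt - pred) ** 2))),
        "rmse_log": float(np.sqrt(np.mean((np.log(gt) - np.log(pred)) ** 2))),
        "acc1": float(np.mean(ratio < 1.25)),
        "acc2": float(np.mean(ratio < 1.25 ** 2)),
        "acc3": float(np.mean(ratio < 1.25 ** 3)),
    }

# Toy example: one pixel is off by a factor of 2, the other is exact.
metrics = depth_metrics(gt=[2.0, 4.0], pred=[1.0, 4.0])
# abs_rel = 0.25, sq_rel = 0.25, acc1 = acc2 = acc3 = 0.5
```

Lower is better for the four error columns, and higher is better for the three accuracy columns.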

| Metric | Seq. 09 | Seq. 10 |
|---|---|---|
| t_err (%) | 7.31 | 7.79 |
| r_err (degree/100m) | 3.05 | 4.90 |
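A trajectory for odometry evaluation is typically built by chaining frame-to-frame pose predictions. The sketch below shows that accumulation with 4x4 homogeneous matrices; the pose convention (each relative pose maps points from frame i+1 into frame i) and the function name are assumptions for illustration, not a description of the repository's test script.

```python
import numpy as np

def accumulate_trajectory(relative_poses):
    """Chain 4x4 relative poses into global poses, starting at identity.

    relative_poses[i] is assumed to map points from frame i+1 into frame i,
    so the running product gives each frame's pose in the first frame.
    """
    global_pose = np.eye(4)
    trajectory = [global_pose.copy()]
    for rel in relative_poses:
        global_pose = global_pose @ rel
        trajectory.append(global_pose.copy())
    return trajectory

# Two identical steps of one unit forward along the z axis.
step = np.eye(4)
step[2, 3] = 1.0
trajectory = accumulate_trajectory([step, step])
final_position = trajectory[-1][:3, 3]  # [0, 0, 2]
```

Chaining like this only yields a usable long trajectory when the translation scale is the same for every frame pair, which is why scale-consistent predictions matter for monocular visual odometry.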

- SfMLearner-Pytorch (CVPR 2017, our baseline framework)

- Depth-VO-Feat (CVPR 2018, trained on stereo videos for depth and visual odometry)

- DF-VO (ICRA 2020, uses scale-consistent depth with optical flow for more accurate visual odometry)

- Kitti-Odom-Eval-Python (Python code for KITTI odometry evaluation)

- Unsupervised-Indoor-Depth (uses SC-SfMLearner on the NYUv2 dataset)