Rishit Dagli1 · Shivesh Prakash1 · Rupert Wu1 · Houman Khosravani1,2,3

1University of Toronto 2Temerty Centre for Artificial Intelligence Research and Education in Medicine 3Sunnybrook Research Institute

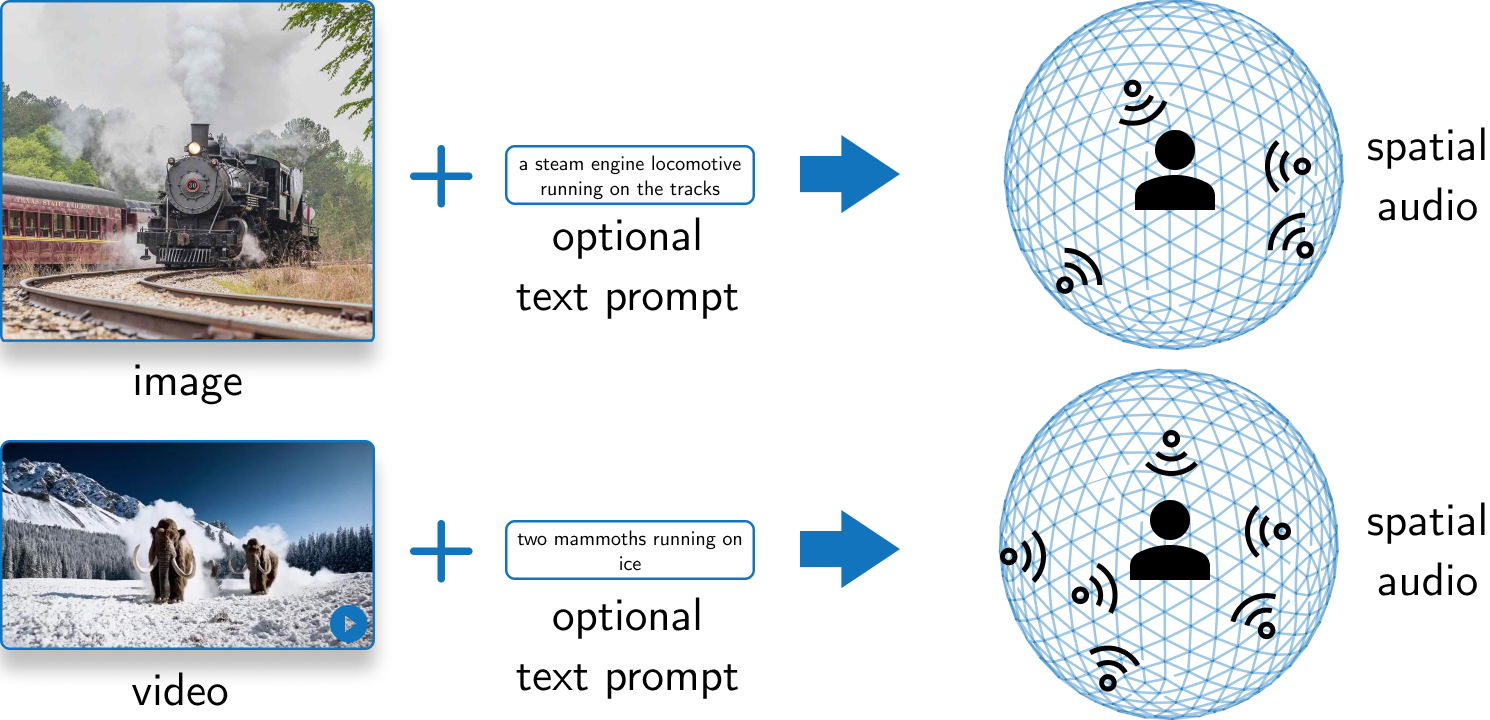

This work presents SEE-2-SOUND, a method to generate spatial audio from images, animated images, and videos to accompany the visual content. Check out our website to view some results of this work.

You can also skip this section and run everything in a Docker container, following the instructions in Run in Docker, or use Gradio (for any HF/Gradio issues cc @jadechoghari 🤗).

First, install the pip package by running:

    pip install -e git+https://github.com/see2sound/see2sound.git#egg=see2sound

Now, install all the required packages:

    git clone https://github.com/see2sound/see2sound
    cd see2sound
    pip install -r requirements.txt

Evaluating the code (but not running inference) requires the Visual Acoustic Matching (AViTAR) codebase. Because AViTAR needs many changes to run, you should install it through a fork we host. Install this by running:

    pip install git+https://github.com/Rishit-dagli/visual-acoustic-matching-s2s
    git clone https://github.com/Rishit-dagli/visual-acoustic-matching-s2s
    cd visual-acoustic-matching-s2s
    pip install -e .

Check out the Tips section for tips on installing the requirements.

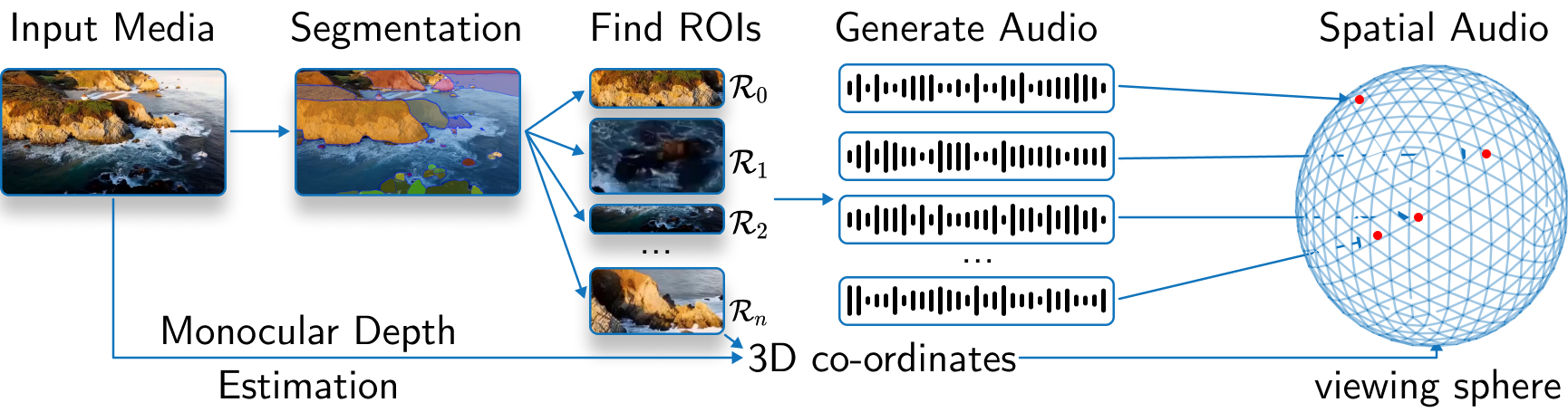

SEE-2-SOUND consists of three main components: source estimation, audio generation, and surround sound spatial audio generation.

In the source estimation phase, the model identifies regions of interest in the input media and estimates their 3D positions on a viewing sphere. It also estimates the monocular depth map of the input image.
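
As a rough illustration of placing a region on a viewing sphere, the sketch below maps a pixel coordinate and a depth value to a 3D point. The function name `pixel_to_sphere`, the linear pixel-to-angle mapping, and the default fields of view are assumptions made for this example and are not taken from the See2Sound code.

```python
import math


def pixel_to_sphere(u, v, width, height, depth, h_fov_deg=90.0, v_fov_deg=60.0):
    """Map a pixel (u, v) and a depth to a 3D point (x, y, z).

    Hypothetical helper: angles vary linearly with the pixel offset from
    the image centre, and `depth` is used as the radius of the sphere.
    """
    # Offsets from the image centre, each in [-0.5, 0.5].
    nx = u / width - 0.5
    ny = 0.5 - v / height  # image rows grow downward, so flip the sign

    azimuth = math.radians(nx * h_fov_deg)    # positive = to the right
    elevation = math.radians(ny * v_fov_deg)  # positive = upward

    x = depth * math.cos(elevation) * math.sin(azimuth)  # right
    y = depth * math.cos(elevation) * math.cos(azimuth)  # forward
    z = depth * math.sin(elevation)                      # up
    return x, y, z
```

With these conventions, the centre pixel of a 640x480 image at depth 2 lands straight ahead at `(0.0, 2.0, 0.0)`, and every point sits at distance `depth` from the viewer.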

Next, in the audio generation phase, the model generates mono audio clips for each identified region of interest, leveraging a pre-trained CoDi model. These audio clips are then combined with the spatial information to create a 4D representation for each region.

Finally, the model generates 5.1 surround sound spatial audio by placing sound sources in a virtual room and computing Room Impulse Responses (RIRs) for each source-microphone pair. Microphones are positioned according to the 5.1 channel configuration, ensuring compatibility with prevalent audio systems and enhancing the immersive quality of the audio output.
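
To make the source-microphone idea concrete, here is a heavily simplified sketch that keeps only the direct path between a source and each microphone: one delayed, attenuated impulse per pair, with no wall reflections. It is not the room simulation used by See2Sound. The microphone azimuths (front pair at ±30°, centre at 0°, surround pair at ±110°), the unit radius, the omitted LFE channel, and all function names are assumptions for illustration.

```python
import math

SPEED_OF_SOUND = 343.0  # metres per second, approximate value in air

# Assumed microphone azimuths in degrees (0 = front, positive = right).
# The LFE channel carries no positional information and is left out.
MIC_AZIMUTHS = {"L": -30.0, "R": 30.0, "C": 0.0, "Ls": -110.0, "Rs": 110.0}


def mic_positions(radius=1.0):
    """Place each microphone on a horizontal circle around the origin."""
    return {
        name: (radius * math.sin(math.radians(a)),
               radius * math.cos(math.radians(a)),
               0.0)
        for name, a in MIC_AZIMUTHS.items()
    }


def direct_path_rir(source, mic, sample_rate=16000, length=1024):
    """Impulse response containing only the direct path (no reflections)."""
    dist = math.dist(source, mic)
    delay = int(round(dist / SPEED_OF_SOUND * sample_rate))
    rir = [0.0] * length
    if delay < length:
        rir[delay] = 1.0 / max(dist, 1e-3)  # 1/distance attenuation
    return rir


def convolve(signal, rir):
    """Plain time-domain convolution that skips zero taps."""
    out = [0.0] * (len(signal) + len(rir) - 1)
    taps = [(j, h) for j, h in enumerate(rir) if h != 0.0]
    for i, s in enumerate(signal):
        for j, h in taps:
            out[i + j] += s * h
    return out


def spatialize(mono, source, sample_rate=16000):
    """Return one convolved signal per microphone for a single source."""
    return {
        name: convolve(mono, direct_path_rir(source, pos, sample_rate))
        for name, pos in mic_positions().items()
    }
```

For a source 3 m straight ahead, the centre channel receives the sound first and the surround channels later and quieter, which is the cue a listener uses to localize the source. A full room simulation would add reflections to each impulse response.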

For evaluation, we propose a new quantitative evaluation technique and also conduct a user study. We propose a new method because such a system is difficult to evaluate, especially in the absence of any baselines. For the quantitative evaluation, we produce outputs from an image-to-audio system (CoDi), which serves as a baseline, and from our system. We then run both sets of outputs through AViTAR, which edits the audio to match the visual content, and compute similarity scores between pairs of these audio clips for each image.

The See2Sound class has a few main methods:

- `setup`, which downloads and loads models into memory (in high memory mode)
- `adjust_audio`, which simulates a room and computes the spatial audio
- `run`, which puts together the inference code
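
Since `setup` loads the models once and `run` handles a single input, a natural pattern is to call `setup` once and then loop over files. The sketch below assumes only that the model object exposes `run(path=..., output_path=...)` as in the usage shown in this README. The helper name `run_on_directory` and the list of accepted file suffixes are hypothetical.

```python
from pathlib import Path

# Assumed set of input types; adjust to whatever your inputs actually are.
MEDIA_SUFFIXES = {".png", ".jpg", ".jpeg", ".gif", ".mp4"}


def run_on_directory(model, input_dir, output_dir):
    """Call model.run once per media file and return the output paths.

    `model` is any object with a `run(path=..., output_path=...)` method;
    it is expected to have been set up already.
    """
    input_dir, output_dir = Path(input_dir), Path(output_dir)
    output_dir.mkdir(parents=True, exist_ok=True)

    written = []
    for path in sorted(input_dir.iterdir()):
        if path.suffix.lower() not in MEDIA_SUFFIXES:
            continue  # skip anything that is not a recognised media file
        out = output_dir / (path.stem + ".wav")
        model.run(path=str(path), output_path=str(out))
        written.append(out)
    return written
```

With the package installed, this would be used by constructing `see2sound.See2Sound(config_path=...)`, calling `setup()` once, and passing the resulting object as `model`.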

The eval_See2Sound class has a few main methods:

- `setup`, to download and load models into memory (in high memory mode)
- `generate_audio`, to generate mono audio
- `run_avitar`, to run the AViTAR model
- `compute_acoustic_similarity`, to compute quantitative metrics

Here is a guide on using this codebase. In general, it should be relatively quick to get started, since the code is packaged as a pip package.

All of the code is designed to be run from the root of this repository.

    import see2sound

    config_file_path = "default_config.yaml"

    # Load the configuration, then download and load the models.
    model = see2sound.See2Sound(config_path=config_file_path)
    model.setup()

    # Generate spatial audio for a single input file.
    model.run(path="test.png", output_path="test.wav")

Run the evaluation only if you want to perform quantitative evaluations with the evaluation method we propose in our work.

    from see2sound.evaluation import eval_See2Sound

    config_file_path = "default_config.yaml"
    image_dir_path = "path to a directory with all images"

    evaluator = eval_See2Sound(config_path=config_file_path)
    evaluator.setup()
    evaluator.evaluate(image_dir_path)

You can run inference and evaluation in a container. This guide uses Docker, but you should be able to use any other container runtime too.

Start by building the container image by running:

    docker build . -t rishitdagli/see2sound:latest

or you can also directly use the prebuilt image (41 GB compressed):

    docker pull rishitdagli/see2sound:latest

You can now use `docker run` to start running inference or evaluation in the container, with the environment set up and the models pre-downloaded for you.

You can also set up the app using Gradio.

    pip install -r gradio-req.txt
    python app.py

We share some tips on running the code and reproducing our results.

- If you just want to run inference, we recommend using `torch==2.3.0` and this slim `requirements.txt` file.

- You could find some ways to perform the quantitative evaluation with the original Visual Acoustic Matching repository. We would, however, suggest using the fork, which has some additional features that are required if you want to run our code from the `pip` package.

- The repository has the dependency `tensorflow`, which is required by `speech_metrics` and `vam`. However, this is only needed for the quantitative evaluations in our work and not for inference.

- Our codebase works with PyTorch 2.x, and all of our code and results were produced with PyTorch 2.3.0. Our `requirements.txt` file, however, has PyTorch 1.13.1, since PyTorch 1.x is required for our quantitative evaluation.

- If you are running inference, the code downloads a few artifacts: Segment Anything weights, Depth Anything weights, CoDi weights, and CLIP ViT-H Tokenizer.

- If you are running evaluation, the code downloads a few artifacts: Segment Anything weights, Depth Anything weights, CoDi weights, CLIP ViT-H Tokenizer, and AViTAR weights.

- The paths to all of these files can be entered in the config `yaml`; the package will look for the files at these paths or download them there. Thus one could make the inference or evaluation run without any network connection.

- We have optimized the code for, and run all of the experiments on, an A100 80 GB GPU. However, we have also tested the code on an A100 40 GB GPU, an H100 80 GB GPU, and a V100 32 GB GPU (run with the low memory mode), where inference and evaluation still appear to run reasonably fast.

- In general, we recommend a GPU with more than 40 GB of vRAM. You can, however, run this on a GPU with 24 GB or more of vRAM in the low memory mode, which trades lower peak vRAM usage for a longer running time.

- We recommend at least 24 GB of CPU RAM for the code to work well, and ideally 32 GB.

- All of our experiments were run with Segment Anything ViT-H and Depth Anything ViT-L. However, any of the models can be replaced with smaller variants, or with different models altogether, through the config `yaml` file.

- We suggest running inference for at least 500 diffusion steps and setting `num_audios` to a value between 3 and 5.
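
The hardware and sampling tips above can be condensed into a small pre-flight check. This is only a sketch of the stated thresholds (24 GB and 40 GB of vRAM, 24 GB of CPU RAM, 500 diffusion steps, 3 to 5 for `num_audios`). The function name `check_setup` and its arguments are hypothetical and are not part of the package, and the 40 GB boundary is treated as inclusive here because a 40 GB A100 is listed among the tested GPUs.

```python
def check_setup(vram_gb, cpu_ram_gb, diffusion_steps, num_audios):
    """Return a list of warnings; an empty list means all tips are met."""
    warnings = []

    if vram_gb < 24:
        warnings.append("GPU has less than 24 GB of vRAM")
    elif vram_gb < 40:
        warnings.append("GPU has less than 40 GB of vRAM: use the low memory mode")

    if cpu_ram_gb < 24:
        warnings.append("less than 24 GB of CPU RAM")

    if diffusion_steps < 500:
        warnings.append("fewer than 500 diffusion steps")

    if not 3 <= num_audios <= 5:
        warnings.append("num_audios is outside the suggested range of 3 to 5")

    return warnings
```

For example, an 80 GB GPU with 32 GB of CPU RAM, 500 steps, and `num_audios` of 4 produces no warnings, while a 32 GB GPU with the same settings produces only the low memory mode hint.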

This codebase is built on top of the following repositories, and we thank their authors for maintaining them:

If you find SEE-2-SOUND helpful, please consider citing:

    @misc{dagli2024see2sound,
      title={SEE-2-SOUND: Zero-Shot Spatial Environment-to-Spatial Sound},
      author={Rishit Dagli and Shivesh Prakash and Robert Wu and Houman Khosravani},
      year={2024},
      eprint={2406.06612},
      archivePrefix={arXiv},
      primaryClass={cs.CV}
    }