Learning environments for robotic manipulation, built on MuJoCo and the dm_control package. This repo is also a project for teaching myself both tools, so not everything is implemented in the best or most canonical way.

All tasks provide both dense and sparse rewards, and support both visual and state observations.
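To illustrate the difference between the two reward variants, here is a minimal sketch for a reaching-style task. It assumes the dense reward is the negative Euclidean distance to the target and the sparse reward is 1 inside a success threshold and 0 otherwise. The function names and the `success_threshold` value are hypothetical and not taken from this repo.

```python
import math
from typing import Sequence


def distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean distance between two points of equal dimension."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def dense_reward(eef_pos: Sequence[float], target_pos: Sequence[float]) -> float:
    # Hypothetical dense variant: the reward increases as the EEF approaches the target.
    return -distance(eef_pos, target_pos)


def sparse_reward(
    eef_pos: Sequence[float],
    target_pos: Sequence[float],
    success_threshold: float = 0.02,  # hypothetical threshold, in meters
) -> float:
    # Hypothetical sparse variant: 1.0 only once the EEF is within the threshold.
    return 1.0 if distance(eef_pos, target_pos) < success_threshold else 0.0


print(dense_reward([0.0, 0.0, 0.0], [0.0, 0.0, 0.5]))  # -0.5
print(sparse_reward([0.3, 0.4, 0.01], [0.3, 0.4, 0.0]))  # 1.0
```

The dense variant gives a learning signal at every step, while the sparse variant only rewards success, which is harder to explore but less prone to reward shaping artifacts.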

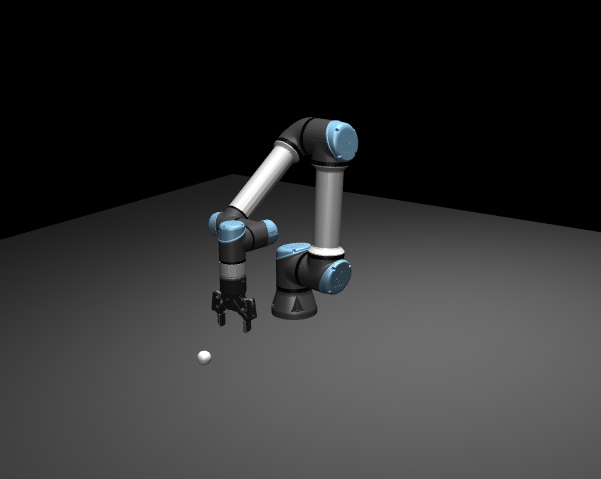

Reach: the agent has to reach a target location in Euclidean space by providing delta steps for the position (xyz).
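A delta-position action like the one in Reach is typically scaled and clipped before it is added to the current end-effector position. The sketch below assumes normalized actions in [-1, 1], a per-axis maximum step and an axis-aligned workspace box. `MAX_STEP`, the workspace bounds and `apply_delta_action` are hypothetical and are not this repo's actual values or API.

```python
from typing import List, Sequence, Tuple

# Hypothetical limits (not taken from the repo): maximum displacement per step along
# each axis, and an axis-aligned workspace box that the target position must stay in.
MAX_STEP = 0.05
WORKSPACE_LOW = (-0.5, -0.5, 0.0)
WORKSPACE_HIGH = (0.5, 0.5, 0.5)


def clip(value: float, low: float, high: float) -> float:
    return max(low, min(high, value))


def apply_delta_action(
    eef_pos: Sequence[float],
    action: Sequence[float],
    low: Tuple[float, float, float] = WORKSPACE_LOW,
    high: Tuple[float, float, float] = WORKSPACE_HIGH,
) -> List[float]:
    """Map a normalized xyz action in [-1, 1]^3 to a new xyz target position."""
    new_pos = []
    for p, a, lo, hi in zip(eef_pos, action, low, high):
        delta = clip(a, -1.0, 1.0) * MAX_STEP  # scale the normalized action to a bounded step
        new_pos.append(clip(p + delta, lo, hi))  # keep the target inside the workspace
    return new_pos


# Move +x by one full step and -z by one full step from the given position.
print(apply_delta_action([0.0, 0.0, 0.25], [1.0, 0.0, -1.0]))
```

Bounding the per-step displacement keeps each action small enough for a position controller to track, and clipping to the workspace prevents the agent from commanding unreachable targets.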

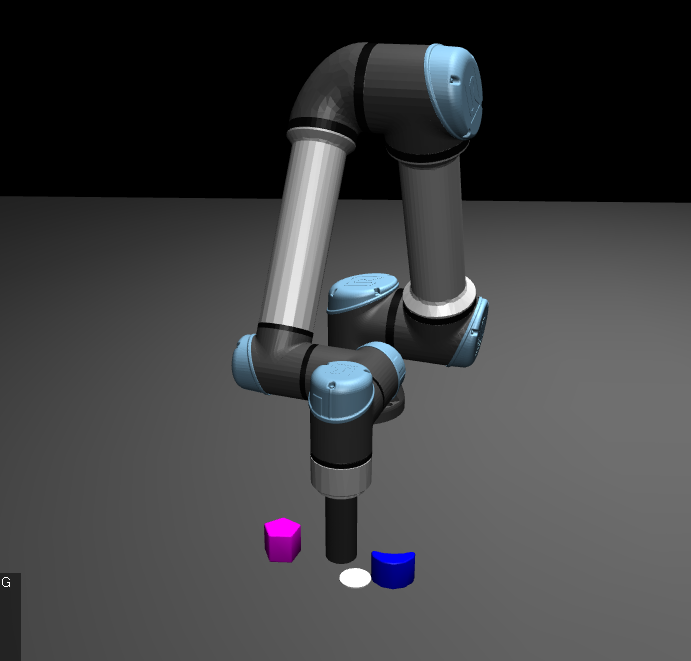

Planar Push: the agent controls the delta xy coordinates of a cylindrical end-effector (EEF) and has to push a configurable number of objects to a target location (indicated by a white disc).
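One plausible success criterion for Planar Push is that every object's xy position lies inside the target disc. This is a sketch under stated assumptions: objects and target are 2D points, `target_radius` is a hypothetical value, and the repo's actual success and reward logic may differ. The helper functions work for any (non-empty) number of objects, matching the configurable object count.

```python
import math
from typing import Sequence, Tuple

Point2D = Tuple[float, float]


def xy_distance(a: Point2D, b: Point2D) -> float:
    return math.hypot(a[0] - b[0], a[1] - b[1])


def all_objects_on_target(
    objects: Sequence[Point2D],
    target: Point2D,
    target_radius: float = 0.05,  # hypothetical radius of the target disc, in meters
) -> bool:
    """Sparse success check: True only if every object is inside the target disc."""
    return all(xy_distance(obj, target) <= target_radius for obj in objects)


def mean_object_distance(objects: Sequence[Point2D], target: Point2D) -> float:
    """Dense signal: average xy distance of the (non-empty) objects to the target."""
    return sum(xy_distance(obj, target) for obj in objects) / len(objects)


print(all_objects_on_target([(0.0, 0.01), (0.02, 0.0)], (0.0, 0.0)))  # True
print(mean_object_distance([(0.0, 3.0), (4.0, 0.0)], (0.0, 0.0)))  # 3.5
```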

- /mujoco_sim
  - /entities: all physical objects in the environments, using the composer.Entity abstraction
    - /arenas: roots of the entity tree, containing the 'robot setup'
    - /robots: actual robots
    - /eef: grippers etc.
    - /props: non-actuated elements
  - /environments
    - /tasks: implements the actual learning tasks
    - dmc2gym.py: converts the DMC environment interface to a gym interface for interacting with most RL frameworks
  - /mjcf: contains the MJCF XML files for all the entities
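The core of a DMC-to-gym wrapper is mapping a `dm_env` `TimeStep` onto a gym step tuple. Below is a minimal, dependency-free sketch of that mapping. It assumes the Gymnasium five-tuple API `(obs, reward, terminated, truncated, info)` and state observations given as a dict of 1-D arrays. The local `StepType` and `TimeStep` classes only mirror the ones in `dm_env`, and `flatten_observation` and `timestep_to_gym` are hypothetical names, not necessarily how `dmc2gym.py` is implemented.

```python
import enum
from typing import Any, Dict, List, NamedTuple, Optional, Sequence, Tuple


class StepType(enum.IntEnum):
    # Mirrors dm_env.StepType: FIRST on reset, MID during an episode, LAST at the final step.
    FIRST = 0
    MID = 1
    LAST = 2


class TimeStep(NamedTuple):
    # Minimal stand-in for dm_env.TimeStep so this sketch runs without dm_env installed.
    step_type: StepType
    reward: Optional[float]
    discount: Optional[float]
    observation: Dict[str, Sequence[float]]


def flatten_observation(obs: Dict[str, Sequence[float]]) -> List[float]:
    """Concatenate a dict of 1-D state observations into one flat vector (sorted keys for a fixed order)."""
    flat: List[float] = []
    for key in sorted(obs):
        flat.extend(float(v) for v in obs[key])
    return flat


def timestep_to_gym(ts: TimeStep) -> Tuple[List[float], float, bool, bool, Dict[str, Any]]:
    """Convert a dm_env-style TimeStep into a Gymnasium-style step tuple.

    At the LAST step, discount == 0 signals a true termination, while a nonzero
    discount means the episode was cut off (e.g. by a time limit), i.e. truncation.
    """
    is_last = ts.step_type == StepType.LAST
    terminated = is_last and ts.discount == 0.0
    truncated = is_last and not terminated
    reward = 0.0 if ts.reward is None else float(ts.reward)  # reward is None on FIRST steps
    return flatten_observation(ts.observation), reward, terminated, truncated, {}


ts = TimeStep(StepType.LAST, 1.0, 0.0, {"b": [3.0], "a": [1.0, 2.0]})
print(timestep_to_gym(ts))  # ([1.0, 2.0, 3.0], 1.0, True, False, {})
```

Distinguishing termination from truncation matters for learning: value bootstrapping should stop at a true terminal state but continue across a time-limit cutoff.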

Installation:

    git clone
    git submodule update --init
    conda env create -f environment.yaml
    pip install -e ur_ikfast/
    pip install -e airo-core/

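For learning, also install the optional `sb3` extra (quoted so that shells such as zsh do not expand the brackets):

    pip install -e ".[sb3]"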

- mujoco_menagerie: a library of MJCF models

- ur_ikfast: a Python IKFast wrapper for the UR robot class