This repository contains the code for continual learning for pedestrian trajectory prediction.

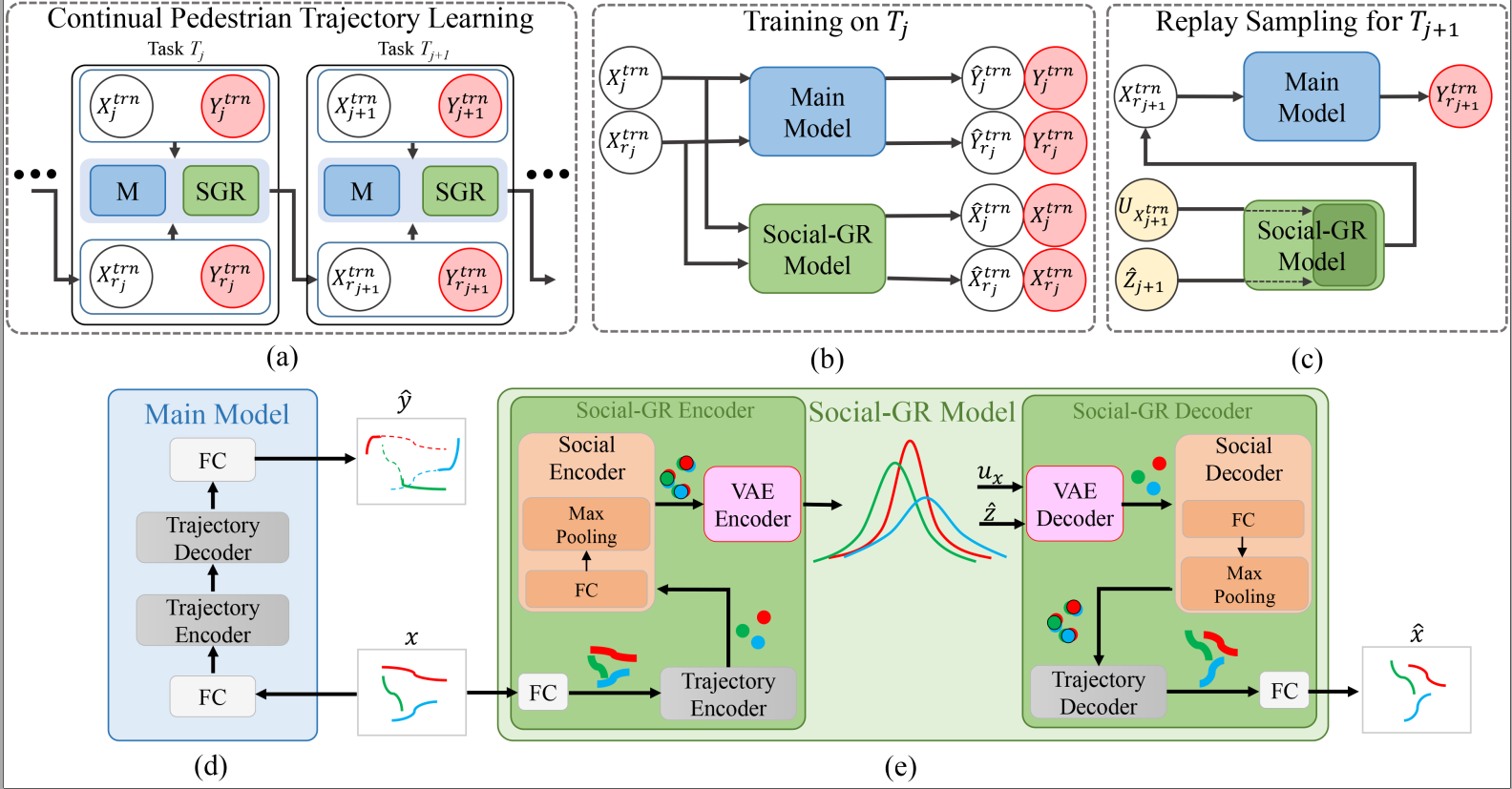

Learning to predict pedestrian trajectories is particularly important for safe and effective autonomous driving. It becomes more challenging when the vehicle must drive through multiple environments in which pedestrian motion patterns differ fundamentally. Existing pedestrian trajectory prediction models rely heavily on the availability of representative data samples during training. When additional training data from a new environment becomes available, these models must be retrained on all datasets to avoid catastrophically forgetting the knowledge obtained from the environments they already support.

|-- data

|-- loader.py

|-- datasets

|-- generative_model

|-- vae_models.py

|-- main_model

|-- encoder.py

|-- main.py

|-- train.py

|-- readme.md

|-- requirements.txt

The current version of the code has been tested with:

- python 3.6.13
- torch 1.7.1
- torchvision 0.8.2

You can run `conda install --yes --file requirements.txt` to install all dependencies.

The examples below use `--main_model=lstm`. To use STGAT instead, you only need to change the `--main_model` option.

In our benchmark, the datasets include only pedestrians, so for both the inD and INTERACTION datasets we select only the pedestrian data. For INTERACTION we also re-labeled the pedestrians. You therefore need to download our processed dataset, which is in the {dataset} file, before running the code.

- Run `python main.py --method=batch_learning --log_dir=ETH --dataset_name=ETH`. The best model is saved after training completes.
- Then run `python evaluate_batch_learning.py --log_dir=ETH --dataset_name_train=ETH --dataset_name_test=ETH` to get the results.
- To use a different dataset, change `dataset_name`.
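The two batch-learning steps above can be chained from a small Python driver. This is a minimal sketch, assuming only the script names and flags quoted above; the helpers `batch_learning_commands` and `run_batch_learning` are hypothetical and not part of the repository. By default the driver only prints the commands (a dry run).

```python
import subprocess
import sys


def batch_learning_commands(dataset_name, log_dir=None):
    """Build the train and evaluate commands for one dataset.

    Hypothetical helper: only the script names and flags come from the README.
    """
    log_dir = log_dir or dataset_name
    train = [
        sys.executable, "main.py",
        "--method=batch_learning",
        "--log_dir={}".format(log_dir),
        "--dataset_name={}".format(dataset_name),
    ]
    evaluate = [
        sys.executable, "evaluate_batch_learning.py",
        "--log_dir={}".format(log_dir),
        "--dataset_name_train={}".format(dataset_name),
        "--dataset_name_test={}".format(dataset_name),
    ]
    return train, evaluate


def run_batch_learning(dataset_name, dry_run=True):
    """Print the two commands and, unless dry_run is set, execute them in order."""
    for cmd in batch_learning_commands(dataset_name):
        print("python " + " ".join(cmd[1:]))
        if not dry_run:
            # check=True stops before evaluation if training fails.
            subprocess.run(cmd, check=True)


run_batch_learning("ETH")  # dry run: prints the commands without executing them
```

Call `run_batch_learning("ETH", dry_run=False)` from the repository root to actually train and then evaluate.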

Individual experiments can be run with `main.py` using the defaults set in the code. All results are saved to `./results`.

- Run `python main.py --replay=none` for CL-NR.
- Run `python main.py --replay=exemplars` for CL-ER. You can also change the percentage in `./helper/memory_eth.py`, `./helper/memory_eth_ucy.py`, and `./helper/memory_eth_ucy_ind.py`.
- Run `python main.py --replay=generative --replay_model=condition` for CL-CGR.
- Run `python main.py --replay=generative` for CL-SGR.
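For scripting several runs, the mapping from method name to replay flags listed above can be kept in one table. The sketch below assumes only the flags quoted in this README; `CL_METHODS` and `command_for` are hypothetical names introduced for illustration.

```python
# Replay flags for each continual-learning variant, copied from the list above.
CL_METHODS = {
    "CL-NR": ["--replay=none"],
    "CL-ER": ["--replay=exemplars"],
    "CL-CGR": ["--replay=generative", "--replay_model=condition"],
    "CL-SGR": ["--replay=generative"],
}


def command_for(method, main_model="lstm"):
    """Return the main.py command (as an argument list) for one method."""
    if method not in CL_METHODS:
        raise ValueError("unknown method: {!r}".format(method))
    return ["python", "main.py"] + CL_METHODS[method] + ["--main_model={}".format(main_model)]


for name in CL_METHODS:
    print(name, "->", " ".join(command_for(name)))
```

Keeping the flags in a single dictionary makes it harder for a results table and the commands that produced it to drift apart.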

- For CL-SGR-STR, go to the `./ablation/CL-SGR-STR` folder and run `python main.py --replay=generative`.
- For CL-SGR-CPD, go to the `./ablation/CL-SGR-CPD` folder and run `python main.py --replay=generative`.
- For CL-SGR-RIN, go to the `./ablation/CL-SGR-RIN` folder and run `python main.py --replay=generative`.

- First, change the task order in `main.py` (lines 177, 178, 179). Then run `python main.py --replay=none` and `python main.py --replay=generative` to get the results of Table 3.

Our default task order is ETH -> UCY -> inD -> INTERACTION.
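To make the role of the task order concrete, the sketch below lists, for each step of a given order, the current task and the earlier tasks that a replay method would have to remember. It is an illustration only: `DEFAULT_TASK_ORDER` and `replay_schedule` are hypothetical names, and the code does not reproduce the contents of `main.py`.

```python
from itertools import permutations

# Default order stated in this README.
DEFAULT_TASK_ORDER = ("ETH", "UCY", "inD", "INTERACTION")


def replay_schedule(task_order):
    """Yield (current_task, previously_seen_tasks) for each step of the order."""
    for i, task in enumerate(task_order):
        yield task, list(task_order[:i])


for current, previous in replay_schedule(DEFAULT_TASK_ORDER):
    print("train on {:<12} earlier tasks: {}".format(current, previous))

# Every alternative ordering of the four datasets (4! = 24 in total).
all_orders = list(permutations(DEFAULT_TASK_ORDER))
print(len(all_orders), "possible task orders")
```

With `--replay=none` the list of earlier tasks is simply ignored, which is why the order matters most for comparing the replay and no-replay settings.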

If you want to try other parameters, the main options are:

- `--replay`: whether to use a generative replay model (none | generative)
- `--replay_model`: the generative replay model (lstm | condition)
- `--main_model`: which main model to use (lstm | gat, default is `lstm`)
- `--iters`: the number of epochs (default is `400`)
- `--z_dim`: the dimension of the latent variable z in the VAE (default is `200`)
- `--batch_size`: the mini-batch size for the current dataset (default is `64`)
- `--replay_batch_size`: the mini-batch size for the previous dataset (default is `64`)
- `--lr`: the learning rate (default is `0.001`)
- `--pdf`: whether to save the results to a PDF
- `--visdom`: on-the-fly plots during training
- `--val`: whether to use the validation dataset to select the model (default is `True`)
- `--val_class`: use the validation dataset of the current task to validate the model
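The options above map naturally onto an `argparse` parser. The following is a minimal sketch, not the repository's own parser: the listed defaults are taken from this README, while the defaults for `--replay`, `--replay_model`, `--lr_gen`, and `--val_class` are assumptions (the README does not state them), and `exemplars` is included as a `--replay` choice because it is used in the CL-ER command.

```python
import argparse


def build_parser():
    """Hypothetical parser mirroring the options listed in this README."""
    p = argparse.ArgumentParser(description="Sketch of the main.py options")
    # Defaults marked 'assumed' are not stated in the README.
    p.add_argument("--replay", choices=["none", "exemplars", "generative"],
                   default="none")                      # default assumed
    p.add_argument("--replay_model", choices=["lstm", "condition"],
                   default="lstm")                      # default assumed
    p.add_argument("--main_model", choices=["lstm", "gat"], default="lstm")
    p.add_argument("--iters", type=int, default=400)
    p.add_argument("--z_dim", type=int, default=200)
    p.add_argument("--batch_size", type=int, default=64)
    p.add_argument("--replay_batch_size", type=int, default=64)
    p.add_argument("--lr", type=float, default=0.001)
    # --lr_gen appears only in the README's example command; default assumed.
    p.add_argument("--lr_gen", type=float, default=0.001)
    p.add_argument("--pdf", action="store_true")
    p.add_argument("--visdom", action="store_true")
    # README states default=True, so in this sketch the flag is always on.
    p.add_argument("--val", action="store_true", default=True)
    p.add_argument("--val_class", default="current")    # default assumed
    return p


# Parse an explicit argument list so the sketch does not read sys.argv.
args = build_parser().parse_args(
    ["--replay=generative", "--replay_model=condition", "--iters=10"]
)
print(args.replay, args.replay_model, args.iters, args.z_dim)
```

Restricting `--replay`, `--replay_model`, and `--main_model` with `choices` makes a mistyped value fail at startup rather than partway through training.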

The above parameters are applicable to both GR and SGR.

For example, you can run `python main.py --replay=none --main_model=lstm --iters=400 --z_dim=200 --batch_size=64 --replay_batch_size=64 --lr=0.001 --lr_gen=0.001 --val --val_class=current --pdf --visdom`.

If you have any problems, you can contact wuya@gmail.com or a.bighashdel@tue.nl

Our code borrows some ideas from continual-learning; we thank the authors for their work.

- van de Ven, Gido M., Hava T. Siegelmann, and Andreas S. Tolias. "Brain-inspired replay for continual learning with artificial neural networks." Nature communications 11.1 (2020): 1-14.