Simon Klenk¹,²* Marvin Motzet¹,²* Lukas Koestler¹,² Daniel Cremers¹,²

*equal contribution

¹Technical University of Munich (TUM), ²Munich Center for Machine Learning (MCML)

International Conference on 3D Vision (3DV) 2024, Davos, CH

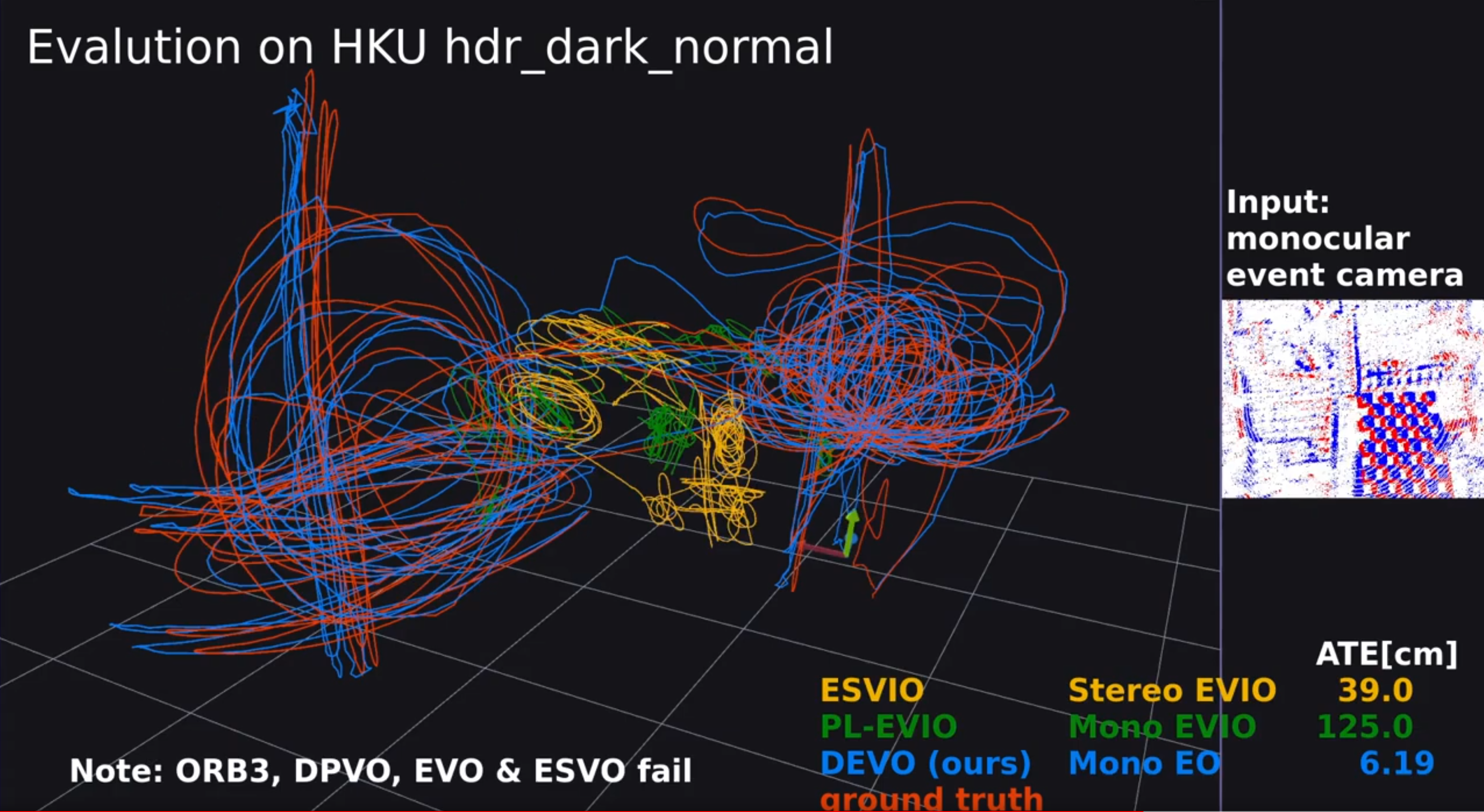

Event cameras offer the exciting possibility of tracking the camera's pose during high-speed motion and in adverse lighting conditions. Despite this promise, existing event-based monocular visual odometry (VO) approaches demonstrate limited performance on recent benchmarks. To address this limitation, some methods resort to additional sensors such as IMUs, stereo event cameras, or frame-based cameras. Nonetheless, these additional sensors limit the application of event cameras in real-world devices since they increase cost and complicate system requirements. Moreover, relying on a frame-based camera makes the system susceptible to motion blur and to high-dynamic-range (HDR) lighting. To remove the dependency on additional sensors and to push the limits of using only a single event camera, we present Deep Event VO (DEVO), the first monocular event-only system with strong performance on a large number of real-world benchmarks. DEVO sparsely tracks selected event patches over time. A key component of DEVO is a novel deep patch selection mechanism tailored to event data. We significantly decrease the pose tracking error on seven real-world benchmarks by up to 97% compared to event-only methods and often surpass, or come close to, stereo or inertial methods.
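As a rough illustration of the last step of a learned patch selector, the sketch below assumes a network has already produced a per-pixel score map for an event voxel grid, and simply keeps the k highest-scoring locations (away from the image border) as patch centers. This is a generic top-k selection, not DEVO's actual selection network or sampling strategy; `select_patches`, `k`, and `patch_radius` are hypothetical names.

```python
import numpy as np


def select_patches(scores: np.ndarray, k: int, patch_radius: int = 1) -> np.ndarray:
    """Return a (k, 2) array of (x, y) patch centers with the highest scores.

    Hypothetical helper: generic top-k selection on a per-pixel score map.
    Centers closer than `patch_radius` to the border are excluded so that a
    (2 * patch_radius + 1)^2 patch around each center lies inside the image.
    """
    h, w = scores.shape
    r = patch_radius
    n_valid = max(h - 2 * r, 0) * max(w - 2 * r, 0)
    if not 1 <= k <= n_valid:
        raise ValueError(f"k must be in [1, {n_valid}], got {k}")

    # Border pixels get -inf so they are never selected.
    masked = np.full(scores.shape, -np.inf)
    masked[r:h - r, r:w - r] = scores[r:h - r, r:w - r]
    flat = masked.ravel()

    # argpartition finds the k largest scores in linear time; sorting those k
    # afterwards puts the best-scoring center first.
    idx = np.argpartition(-flat, k - 1)[:k]
    idx = idx[np.argsort(-flat[idx])]
    ys, xs = np.unravel_index(idx, scores.shape)
    return np.stack([xs, ys], axis=1)
```

For example, `select_patches(score_map, k=96)` on an H x W score map returns 96 (x, y) centers; using `argpartition` instead of a full sort keeps the cost linear in the number of pixels.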

During training, DEVO takes event voxel grids

The code was tested on Ubuntu 22.04 and CUDA Toolkit 11.x. We use Anaconda to manage our Python environment.

First, clone the repo

git clone https://github.com/tum-vision/DEVO.git --recursive

cd DEVO

Then, create and activate the Anaconda environment:

conda env create -f environment.yml

conda activate devo

Next, install the DEVO package. This first downloads the Eigen 3.4.0 source into thirdparty:

wget https://gitlab.com/libeigen/eigen/-/archive/3.4.0/eigen-3.4.0.zip

unzip eigen-3.4.0.zip -d thirdparty

# install DEVO

pip install .

See scripts/pp_DATASETNAME.py for how to pre-process the original datasets. These scripts create the necessary files, e.g. rectify_map.h5, calib_undist.json and t_offset_us.txt.
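The pre-processing output includes rectify_map.h5. Assuming it follows the DSEC convention of a per-pixel lookup table of shape (H, W, 2), where entry [y, x] holds the rectified (x, y) position of raw pixel (x, y), events can be undistorted by plain indexing. Both this layout and the helper names `rectify_events` and `inside_image` are assumptions; reading the HDF5 file itself (for example with h5py) is left out so the sketch runs with NumPy alone.

```python
import numpy as np


def rectify_events(xs, ys, rectify_map: np.ndarray):
    """Look up rectified (x, y) float coordinates for raw integer event pixels.

    Assumes rectify_map has shape (H, W, 2) and rectify_map[y, x] holds the
    rectified (x, y) position of raw pixel (x, y).
    """
    xs = np.asarray(xs, dtype=np.int64)
    ys = np.asarray(ys, dtype=np.int64)
    rect = rectify_map[ys, xs]  # shape (N, 2)
    return rect[:, 0], rect[:, 1]


def inside_image(x_rect, y_rect, height: int, width: int) -> np.ndarray:
    """Mask of rectified events that still land inside the image bounds."""
    return (x_rect >= 0) & (x_rect <= width - 1) & (y_rect >= 0) & (y_rect <= height - 1)


# Usage with a synthetic map that shifts every pixel 0.5 px to the right.
H, W = 3, 4
xx, yy = np.meshgrid(np.arange(W), np.arange(H))
toy_map = np.stack([xx + 0.5, yy], axis=-1).astype(np.float32)
x_r, y_r = rectify_events([0, 3], [1, 2], toy_map)
mask = inside_image(x_r, y_r, H, W)
```

Precomputing the map once and indexing it per event is much cheaper than undistorting every event with the full camera model.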

Please note that the training data is about 1.1TB in size (rgb: 300GB, evs: 370GB).

First, download all RGB images and depth maps of TartanAir from the left camera (~500GB) to <TARTANPATH>:

python thirdparty/tartanair_tools/download_training.py --output-dir <TARTANPATH> --rgb --depth --only-left

Next, generate event voxel grids using vid2e.
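vid2e converts video frames into events by temporally upsampling the frames and running an event simulator on them; the events are then binned into voxel grids. DEVO's simulation script is still marked as a TODO, so the sketch below only illustrates the two steps under simplifying assumptions: a single contrast threshold C for both polarities with no upsampling, noise, or refractory period in the event model, and the common voxel-grid construction that splits each event's polarity between its two nearest temporal bins. `simulate_events` and `events_to_voxel_grid` are hypothetical helpers, not vid2e's or DEVO's code, and DEVO's bin count and normalization may differ.

```python
import numpy as np


def simulate_events(frames, timestamps, C: float = 0.2, eps: float = 1e-3):
    """Simplified ESIM-style event generation from grayscale frames.

    Each pixel keeps a reference log intensity. When the log intensity at a new
    frame differs from it by n * C (n >= 1), n events of that sign are emitted
    and the reference moves by n * C. Event times are linearly interpolated
    between the two frame timestamps. Returns time-sorted (t, x, y, p) tuples.
    """
    log_ref = np.log(np.asarray(frames[0], dtype=np.float64) + eps)
    log_prev = log_ref.copy()  # log intensity at the previous frame
    events = []
    for k in range(1, len(frames)):
        log_cur = np.log(np.asarray(frames[k], dtype=np.float64) + eps)
        t0, t1 = timestamps[k - 1], timestamps[k]
        diff = log_cur - log_ref
        n_cross = np.floor(np.abs(diff) / C).astype(np.int64)
        for y, x in zip(*np.nonzero(n_cross)):
            pol = 1 if diff[y, x] > 0 else -1
            for i in range(1, n_cross[y, x] + 1):
                level = log_ref[y, x] + pol * i * C
                # Fraction of the frame interval at which the linearly
                # interpolated log intensity crosses this level.
                alpha = (level - log_prev[y, x]) / (log_cur[y, x] - log_prev[y, x])
                events.append((t0 + alpha * (t1 - t0), int(x), int(y), pol))
            log_ref[y, x] += pol * n_cross[y, x] * C
        log_prev = log_cur
    events.sort(key=lambda e: e[0])
    return events


def events_to_voxel_grid(xs, ys, ts, ps, num_bins: int, height: int, width: int) -> np.ndarray:
    """Accumulate events into a (num_bins, height, width) voxel grid.

    Timestamps are rescaled to [0, num_bins - 1]; each event adds its polarity
    (+1 for p > 0, otherwise -1) to the two nearest bins, weighted linearly by
    temporal distance (a triangular kernel).
    """
    xs = np.asarray(xs, dtype=np.int64)
    ys = np.asarray(ys, dtype=np.int64)
    ts = np.asarray(ts, dtype=np.float64)
    pol = np.where(np.asarray(ps) > 0, 1.0, -1.0)

    grid = np.zeros((num_bins, height, width), dtype=np.float64)
    if ts.size == 0:
        return grid

    # Rescale the timestamps of this window to the continuous bin axis.
    t_min, t_max = ts.min(), ts.max()
    if t_max > t_min:
        tn = (num_bins - 1) * (ts - t_min) / (t_max - t_min)
    else:
        tn = np.zeros_like(ts)

    lower = np.floor(tn).astype(np.int64)
    frac = tn - lower
    # Split each event between bin `lower` (weight 1 - frac) and `lower + 1` (weight frac).
    for b, weight in ((lower, 1.0 - frac), (lower + 1, frac)):
        valid = b < num_bins
        # np.add.at accumulates correctly when several events hit the same cell.
        np.add.at(grid, (b[valid], ys[valid], xs[valid]), pol[valid] * weight[valid])
    return grid
```

A typical call chain is `ts, xs, ys, ps = zip(*simulate_events(frames, times))` followed by `events_to_voxel_grid(xs, ys, ts, ps, num_bins, H, W)`. The bilinear temporal split keeps some sub-bin timing information that a hard histogram would discard.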

python # TODO release simulation

We provide scene information (including the frame graph for co-visibility used by clip sampling), since building the dataset is expensive.
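Clip sampling uses the provided frame graph to pick training clips whose frames observe overlapping parts of the scene. The file format of that graph is not described here, so the sketch below assumes a plain dict mapping each frame index to the indices of its co-visible frames, and samples a clip by a random walk that only steps forward in time. `sample_clip` is a hypothetical helper; the repository's sampler may use different criteria (for example, a target amount of motion between frames).

```python
import random


def sample_clip(graph: dict, n_frames: int, rng: random.Random, max_tries: int = 100):
    """Sample n_frames frame indices in which every consecutive pair is co-visible.

    graph[i] is assumed to list the frame indices j that are co-visible with i.
    Only forward steps (j > i) are taken so the clip stays in temporal order.
    Returns None if no valid clip is found within max_tries random restarts.
    """
    starts = list(graph.keys())
    for _ in range(max_tries):
        clip = [rng.choice(starts)]
        while len(clip) < n_frames:
            forward = [j for j in graph.get(clip[-1], []) if j > clip[-1]]
            if not forward:
                break  # dead end: restart from a new random frame
            clip.append(rng.choice(forward))
        if len(clip) == n_frames:
            return clip
    return None
```

Passing an explicit `random.Random` instance keeps sampling reproducible per data-loader worker.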

# download data (~450MB)

./download_data.sh

We provide a pretrained model for our simulated event data:

# download model (~40MB)

./download_model.sh

Make sure you have run ./download_data.sh. Your directory structure should look as follows:

├── datasets
    ├── TartanAirEvs
        ├── abandonedfactory
        ├── abandonedfactory_night
        ├── ...
        ├── westerndesert
    ...
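Before a long training run, a quick check of this layout can catch a missing download early. The sketch below uses only the standard library, assumes the root folder is datasets/TartanAirEvs as in the tree above, and checks just the scene folders named there; `check_layout` is a hypothetical helper, not part of the repository.

```python
from pathlib import Path

# Scene folders named explicitly in the expected directory tree.
EXPECTED_SCENES = ["abandonedfactory", "abandonedfactory_night", "westerndesert"]


def check_layout(root, scenes=EXPECTED_SCENES):
    """Return the expected scene folders that are missing under root/TartanAirEvs."""
    base = Path(root) / "TartanAirEvs"
    return [s for s in scenes if not (base / s).is_dir()]
```

Running `check_layout("datasets")` from the repository root should return an empty list when the checked folders are in place.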

To train DEVO, run the following command. Log files will be written to runs/<your name>, and the model is evaluated on the validation split every 10k iterations.

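python train.py -c="config/DEVO_base.conf" --name=<your name>

To evaluate on a benchmark, run the corresponding script in evals/eval_evs/ (replace XXX with the dataset name):

python evals/eval_evs/eval_XXX_evs.py --datapath=<path to xxx dataset> --weights="DEVO.pth" --stride=1 --trials=1 --expname=<your name>

- Code and model are released.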

- [ ] TODO: Release code for simulation
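The evaluation scripts report pose tracking errors (the acknowledgements credit rpg_trajectory_evaluation). A monocular system recovers the trajectory only up to scale, so a common protocol, assumed here, aligns the estimated positions to ground truth with a similarity transform (Umeyama's method) and then reports the RMSE of the absolute trajectory error (ATE). The sketch assumes both trajectories are already time-associated N x 3 position arrays; `umeyama_sim3` and `ate_rmse` are hypothetical helpers, not the repository's evaluation code.

```python
import numpy as np


def umeyama_sim3(src: np.ndarray, dst: np.ndarray):
    """Least-squares similarity transform (s, R, t) with dst ~= s * R @ src + t.

    src, dst: (N, 3) arrays of corresponding positions (Umeyama, 1991).
    """
    mu_s, mu_d = src.mean(axis=0), dst.mean(axis=0)
    xs, xd = src - mu_s, dst - mu_d
    cov = xd.T @ xs / src.shape[0]          # cross-covariance of dst and src
    U, D, Vt = np.linalg.svd(cov)
    S = np.eye(3)
    if np.linalg.det(U) * np.linalg.det(Vt) < 0:
        S[2, 2] = -1.0                      # enforce a proper rotation (no reflection)
    R = U @ S @ Vt
    var_s = (xs ** 2).sum() / src.shape[0]  # variance of the source points
    s = np.trace(np.diag(D) @ S) / var_s
    t = mu_d - s * R @ mu_s
    return s, R, t


def ate_rmse(est: np.ndarray, gt: np.ndarray) -> float:
    """RMSE of the position error after Sim(3) alignment of est onto gt."""
    s, R, t = umeyama_sim3(est, gt)
    aligned = (s * (R @ est.T)).T + t
    return float(np.sqrt(np.mean(np.sum((aligned - gt) ** 2, axis=1))))
```

Estimating the scale s together with R and t is what makes the error meaningful for a monocular method; for metric stereo or inertial systems an SE(3) alignment (s fixed to 1) is the usual choice.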

If you find our work useful, please cite our paper:

@article{klenk2023devo,
  title = {Deep Event Visual Odometry},
  author = {Klenk, Simon and Motzet, Marvin and Koestler, Lukas and Cremers, Daniel},
  journal = {arXiv preprint arXiv:2312.09800},
  year = {2023}
}

We thank the authors of the following repositories for publicly releasing their work:

- DPVO

- TartanAir

- vid2e

- E2Calib

- rpg_trajectory_evaluation

- Event-based Vision for VO/VIO/SLAM in Robotics

This work was supported by the ERC Advanced Grant SIMULACRON.