FedMSA

This directory contains source code for evaluating federated stochastic approximation with multiple coupled sequences (FedMSA) with different optimizers on various models and tasks. In FedMSA, the objective is to find the optimal values of the coupled sequences defined below:

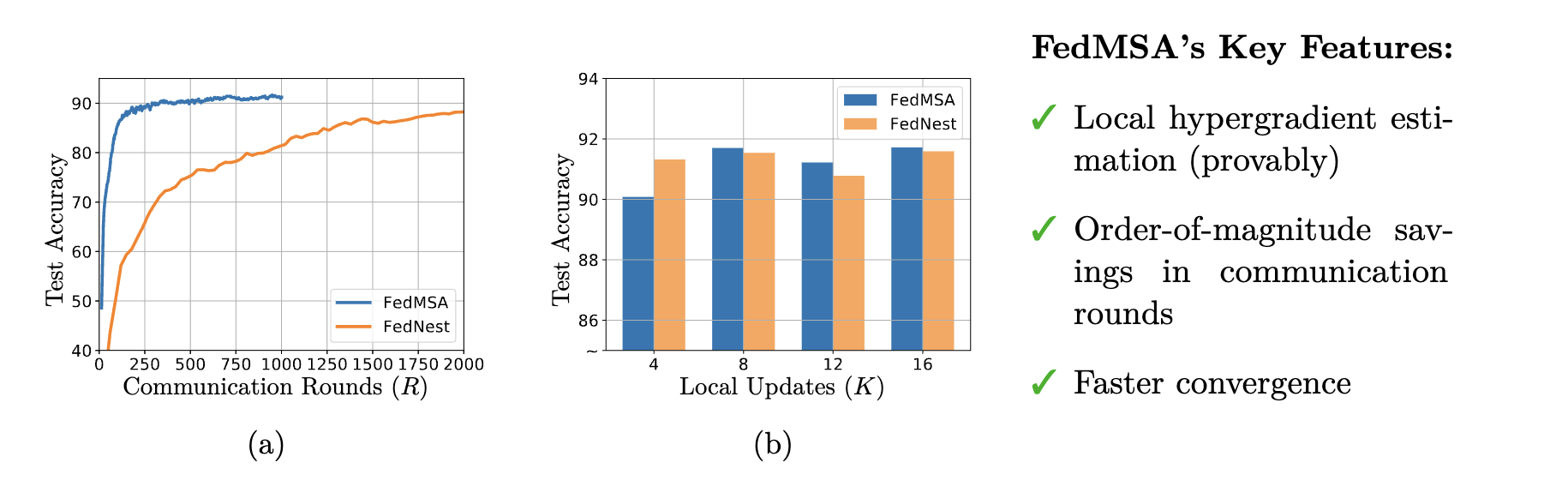

FedMSA has found broad applications in machine learning as it encompasses a rich class of problems including bilevel optimization (BLO), multi-level compositional optimization (MCO), and reinforcement learning (specifically, actor-critic methods). The code was originally developed for the paper "Federated Multi-Sequence Stochastic Approximation with Local Hypergradient Estimation" (arXiv link).

Note: The scripts do not implement parallel computing, so they run slowly.

Requirements

python>=3.6

pytorch>=0.4

Reproducing Results on FL Benchmark Tasks

FedBLO: Loss Function Tuning on Imbalanced Dataset

The parametric loss tuning experiments on imbalanced data follow the loss function design idea of AutoBalance: Optimized Loss Functions for Imbalanced Data (Mingchen Li, Xuechen Zhang, Christos Thrampoulidis, Jiasi Chen, Samet Oymak), but we use only MNIST for the imbalanced loss function design. This code uses the bilevel implementation of Optimizing Millions of Hyperparameters by Implicit Differentiation (Jonathan Lorraine, Paul Vicol, David Duvenaud).
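To make the loss-tuning idea concrete, here is a minimal sketch of a class-dependent cross-entropy in the style of AutoBalance. Each class k carries a loss weight w_k, an additive logit offset l_k, and a multiplicative logit scale Δ_k; these per-class values are the upper-level variables that the bilevel problem tunes. The exact parameterization used in this repository is an assumption, and `parametric_ce` is a hypothetical name.

```python
import math


def parametric_ce(logits, y, weights, offsets, scales):
    """Class-dependent cross-entropy (assumed AutoBalance-style form).

    loss = -w_y * log( exp(D_y * f_y + l_y) / sum_k exp(D_k * f_k + l_k) )

    weights[k] = w_k, offsets[k] = l_k, scales[k] = D_k are per-class
    hyperparameters (hypothetical names, for illustration only).
    """
    # Apply the per-class multiplicative scale and additive offset to each logit
    adjusted = [s * z + o for z, s, o in zip(logits, scales, offsets)]
    # Numerically stable log-sum-exp: subtract the maximum before exponentiating
    m = max(adjusted)
    log_sum_exp = m + math.log(sum(math.exp(a - m) for a in adjusted))
    # Weighted negative log-softmax probability of the true class y
    return weights[y] * (log_sum_exp - adjusted[y])
```

With all weights and scales equal to 1 and all offsets equal to 0, this reduces to standard cross-entropy. In the bilevel setup, the model is trained on this loss over the imbalanced training data, while the per-class values are chosen to minimize a loss on a held-out (validation) set.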

The code is adapted from FedNest. Please check the reproduce folder to reproduce the results.
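The bilevel approach of Lorraine et al. cited above differentiates through the inner optimum with the implicit function theorem (IFT): the hypergradient is ∂L_V/∂λ − (∂²L_T/∂w∂λ)ᵀ H⁻¹ ∂L_V/∂w, where H is the Hessian of the training loss L_T in the weights w, and the inverse-Hessian-vector product is approximated by a truncated Neumann series. The following is a minimal NumPy sketch of that idea on a toy quadratic problem where the exact hypergradient is available for comparison. The function names, the quadratic losses, and the step size `alpha` are illustrative assumptions, not code from this repository. In real training, w* would come from the inner optimizer and the Hessian-vector products from automatic differentiation.

```python
import numpy as np


def neumann_inverse_hvp(hvp, v, alpha, num_terms):
    """Approximate H^{-1} v by alpha * sum_{j=0}^{num_terms} (I - alpha H)^j v.

    Converges when the eigenvalues of H lie in (0, 2 / alpha).
    `hvp(x)` must return the Hessian-vector product H x.
    """
    term = v.copy()
    total = v.copy()
    for _ in range(num_terms):
        term = term - alpha * hvp(term)  # term <- (I - alpha H) term
        total = total + term
    return alpha * total


def hypergradient(A, B, lam, target, alpha=0.3, num_terms=100):
    """IFT hypergradient for a toy quadratic bilevel problem (illustrative only).

    Inner: L_T(w, lam) = 0.5 * w^T A w - w^T B lam, so w*(lam) = A^{-1} B lam.
    Outer: L_V(w) = 0.5 * ||w - target||^2, with no direct dependence on lam.
    """
    w_star = np.linalg.solve(A, B @ lam)  # stands in for running the inner solver
    v = w_star - target                   # dL_V/dw evaluated at w*
    p = neumann_inverse_hvp(lambda x: A @ x, v, alpha, num_terms)  # approx. A^{-1} v
    # IFT: dL_V/dlam = -(d^2 L_T / dw dlam)^T H^{-1} dL_V/dw, and d^2 L_T / dw dlam = -B
    return B.T @ p
```

For example, with A = diag(1, 2, 3) and alpha = 0.3, the contraction factors 1 − alpha·a_k are 0.7, 0.4, and 0.1, so 100 Neumann terms agree with an exact linear solve to near machine precision.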

To reproduce the results, run the following four Python scripts in order:

```shell
python reproduce/run_blo_10clients.py
python reproduce/run_fednest.py
python figure_q.py
python figure_tau.py
```
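The four commands above can also be chained from a single Python driver that stops at the first failing step. This is a hypothetical convenience sketch, not a script shipped with the repository; it assumes it is run from the repository root so that the relative paths resolve.

```python
import subprocess
import sys

# Scripts in the order listed above; paths are relative to the repository root (assumed cwd).
SCRIPTS = [
    "reproduce/run_blo_10clients.py",
    "reproduce/run_fednest.py",
    "figure_q.py",
    "figure_tau.py",
]


def run_all(scripts=SCRIPTS, runner=subprocess.run):
    """Run each script with the current interpreter, stopping at the first failure."""
    for script in scripts:
        # check=True makes subprocess.run raise CalledProcessError on a nonzero exit status
        runner([sys.executable, script], check=True)


if __name__ == "__main__":
    run_all()
```

Passing `runner` as a parameter keeps the ordering logic testable without launching the actual experiments.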

The script run_blo_10clients.py trains the model with FedMSA, while run_fednest.py reproduces the baseline results using the original FedNest code and parameters. To adapt FedNest, we modified the core.Client file and incorporated core.ClientManage_blo and main_imbalance_blo.

FedMCO: Federated Risk-Averse Stochastic Optimization

We also apply the algorithm to a synthetic federated multilevel stochastic composite optimization problem. The example is drawn from risk-averse stochastic optimization, a field in which such multilevel composite problems arise naturally. It can be formulated as follows:

$$\min_{x}\; \mathbb{E}[U(x, \xi)] + \lambda \sqrt{\mathbb{E}\big[\max\big(0,\, U(x, \xi) - \mathbb{E}[U(x, \xi)]\big)^2\big]}.$$
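The objective nests three expectations, which is what makes it a multilevel compositional problem: the innermost level is the mean loss E[U], the middle level is the second moment of the upside deviation from that mean, and the outer level adds λ times its square root. The sketch below evaluates the objective at a fixed x from i.i.d. samples U(x, ξ_1), ..., U(x, ξ_n) using a plug-in estimate. It is illustrative only: the function name is hypothetical, and the notebook's actual U, λ, and estimator are not assumed here. The plug-in estimate is biased for finite n because of the square root, which is one reason stochastic compositional methods typically track the inner expectations with running estimates instead.

```python
import math


def mean_upper_semideviation(samples, lam):
    """Plug-in estimate of E[U] + lam * sqrt(E[max(0, U - E[U])^2]).

    `samples` holds i.i.d. draws U(x, xi_1), ..., U(x, xi_n) at a fixed x.
    """
    n = len(samples)
    # Level 3 (innermost): mean loss E[U(x, xi)]
    f3 = sum(samples) / n
    # Level 2: mean squared upside deviation, which depends on the inner mean f3
    f2 = sum(max(0.0, u - f3) ** 2 for u in samples) / n
    # Level 1 (outer): mean plus lam times the upper semideviation sqrt(f2)
    return f3 + lam * math.sqrt(f2)


print(mean_upper_semideviation([1.0, 2.0, 3.0, 6.0], lam=2.0))  # 6.0
```

Here the mean is 3, only the sample 6 lies above it, so f2 = 9 / 4 and the objective is 3 + 2 · 1.5 = 6.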

The code is in the Jupyter notebook fedMCO_stochastic_final.ipynb.

More arguments are available in options.py, and the reproduce folder provides several scripts for running the experiments.